DuoCast: Duo-Probabilistic Diffusion for Precipitation Nowcasting

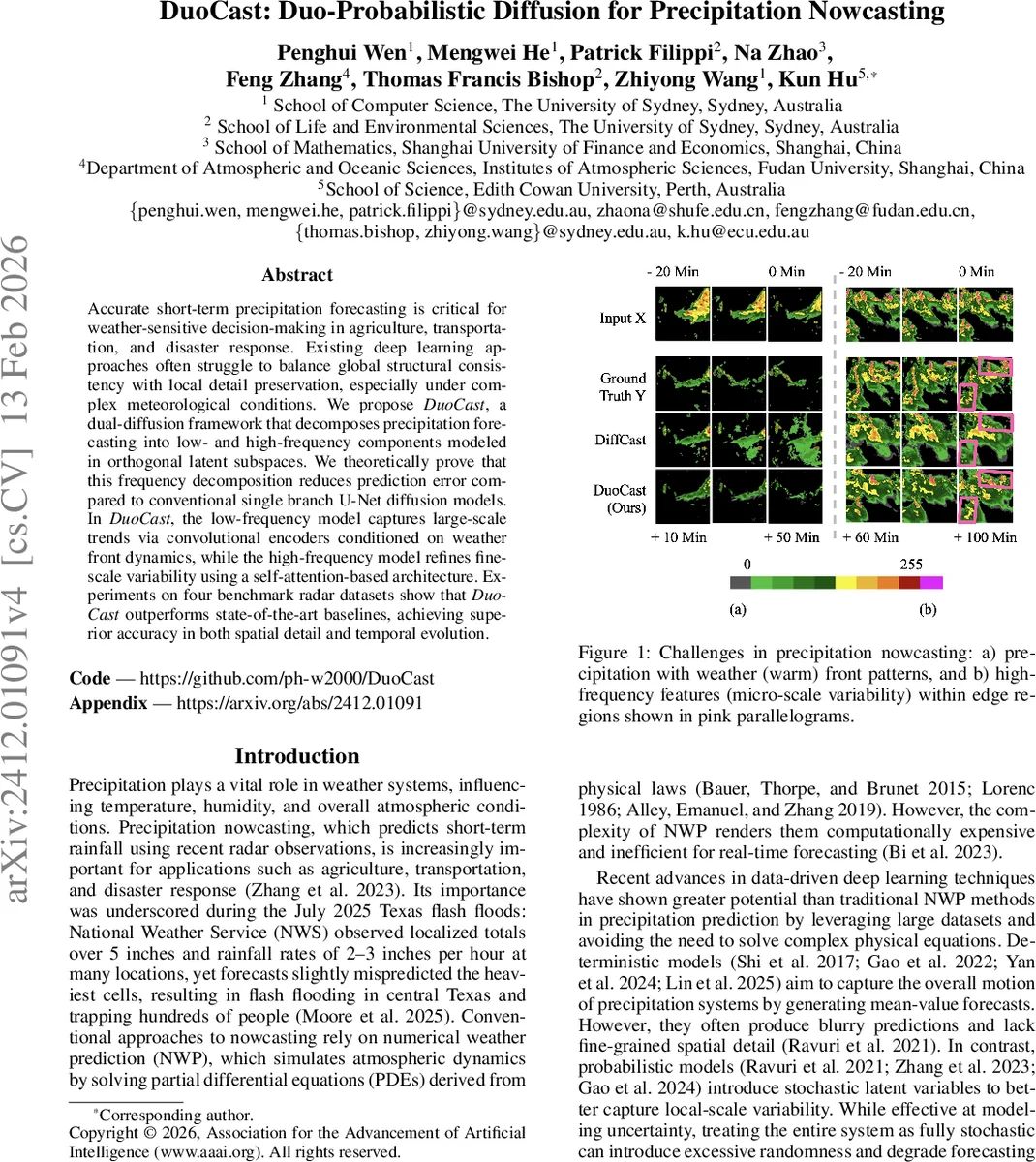

Accurate short-term precipitation forecasting is critical for weather-sensitive decision-making in agriculture, transportation, and disaster response. Existing deep learning approaches often struggle to balance global structural consistency with local detail preservation, especially under complex meteorological conditions. We propose DuoCast, a dual-diffusion framework that decomposes precipitation forecasting into low- and high-frequency components modeled in orthogonal latent subspaces. We theoretically prove that this frequency decomposition reduces prediction error compared to conventional single-branch U-Net diffusion models. In DuoCast, the low-frequency model captures large-scale trends via convolutional encoders conditioned on weather front dynamics, while the high-frequency model refines fine-scale variability using a self-attention-based architecture. Experiments on four benchmark radar datasets show that DuoCast consistently outperforms state-of-the-art baselines, achieving superior accuracy in both spatial detail and temporal evolution.

💡 Research Summary

DuoCast introduces a novel dual-diffusion framework for short-term precipitation nowcasting that explicitly separates the forecasting task into low-frequency (LF) and high-frequency (HF) components, each modeled in orthogonal latent subspaces. The authors first formalize a spectral decomposition using the Fourier transform and a cutoff frequency ωc, defining the LF subspace Bωc and its orthogonal complement B⊥ωc. They prove that conventional convolutional networks, due to bounded-variation kernels, suffer a polynomial decay in their frequency response, which severely limits their ability to reconstruct high-frequency details. Building on this, they present two theorems: (1) a spectral envelope bound for deep CNNs, and (2) a universal approximation result showing that, when LF and HF components are modeled by separate diffusion functions g¹θ and g²ϕ, the total reconstruction error decomposes into the sum of LF and HF errors. Consequently, if the function family used for each subspace is dense in that subspace, the combined system can achieve arbitrarily low error; the paper provides proofs of both theorems.
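The orthogonal split and the additive error decomposition can be checked numerically. The following is a minimal NumPy sketch, not the authors' implementation: it assumes an ideal radial low-pass mask, a cutoff expressed in cycles per pixel, and random stand-ins for the two branch outputs. The function name `split_frequencies` and all numeric values are hypothetical.

```python
import numpy as np

def split_frequencies(field, cutoff):
    """Split a real 2-D field into LF and HF parts with an ideal radial mask.

    `cutoff` is in cycles per pixel (an assumed convention); Fourier modes
    with radius <= cutoff go to the LF part, the rest to the HF part.
    """
    fy = np.fft.fftfreq(field.shape[0])[:, None]
    fx = np.fft.fftfreq(field.shape[1])[None, :]
    low_mask = np.sqrt(fx**2 + fy**2) <= cutoff
    spectrum = np.fft.fft2(field)
    low = np.fft.ifft2(spectrum * low_mask).real
    high = np.fft.ifft2(spectrum * ~low_mask).real
    return low, high

rng = np.random.default_rng(0)
truth = rng.random((64, 64))          # stand-in for a radar frame
low, high = split_frequencies(truth, cutoff=0.1)

# Hypothetical branch outputs: noisy estimates projected onto each subspace.
noisy_a = truth + 0.1 * rng.standard_normal(truth.shape)
noisy_b = truth + 0.1 * rng.standard_normal(truth.shape)
pred_low, _ = split_frequencies(noisy_a, cutoff=0.1)
_, pred_high = split_frequencies(noisy_b, cutoff=0.1)

# Squared error of the combined forecast vs. the sum of per-branch errors.
total_err = np.sum((truth - (pred_low + pred_high)) ** 2)
split_err = np.sum((low - pred_low) ** 2) + np.sum((high - pred_high) ** 2)

print("inner product <low, high>:", np.sum(low * high))
print("total error:", total_err, "sum of branch errors:", split_err)
```

Because the two masks select disjoint sets of Fourier modes, the LF and HF parts are orthogonal, so the cross term in the squared error vanishes and the two printed error values agree up to floating-point rounding.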

The LF diffusion branch incorporates explicit weather‑front information. An initial convolutional predictor generates a coarse precipitation field, which is thresholded to isolate high‑intensity regions indicative of front activity. Three specialized convolutional modules—large‑kernel depth‑wise convolutions for broad mass context, dilated convolutions for multi‑scale front dynamics, and pixel‑wise temporal convolutions for anchoring—produce a front‑aware spatial representation. A lightweight cross‑attention mechanism then fuses this representation with both a static reference frame (the first frame) and the immediate past frame, providing the LF diffusion model (εθ_low) with rich conditional cues about front evolution.
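The thresholding step that isolates high-intensity regions can be illustrated in a few lines. This sketch covers only that step, under assumptions: the threshold value, the toy field, and the name `front_mask` are hypothetical, and the convolutional modules and cross-attention fusion are not reproduced here.

```python
import numpy as np

def front_mask(coarse_forecast, threshold):
    """Binary mask of pixels whose intensity meets or exceeds `threshold`."""
    return (coarse_forecast >= threshold).astype(np.float32)

# Toy coarse precipitation field (arbitrary intensity units).
coarse = np.array([[0.0,  2.0, 12.0],
                   [1.0, 15.0, 30.0],
                   [0.5,  8.0, 11.0]], dtype=np.float32)

mask = front_mask(coarse, threshold=10.0)   # hypothetical threshold
front_field = coarse * mask                 # keep only high-intensity pixels

print(mask)
print(front_field)
```

In a full pipeline the masked field would presumably be passed on to the front-aware modules; here it only shows which pixels survive the threshold.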

The HF diffusion branch employs a transformer built entirely on self-attention and operating strictly within B⊥ωc. Conditioned on the LF forecast, it progressively denoises the high-frequency component using a standard DDPM loss, thereby restoring fine-scale precipitation variability such as localized convective bursts and edge details that CNN-based diffusion models typically blur.
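For reference, the standard DDPM objective trains a network to predict the noise that was added to a clean sample. The sketch below shows that objective in NumPy under assumptions: a linear beta schedule, a single timestep, and a placeholder `eps_model` callable that takes the LF forecast as `cond`. None of these names or settings come from the paper.

```python
import numpy as np

def make_alpha_bars(num_steps, beta_start=1e-4, beta_end=0.02):
    """Cumulative products of (1 - beta_t) for a linear beta schedule."""
    betas = np.linspace(beta_start, beta_end, num_steps)
    return np.cumprod(1.0 - betas)

def q_sample(x0, t, alpha_bars, noise):
    """Forward diffusion: noise the clean sample x0 to timestep t."""
    return np.sqrt(alpha_bars[t]) * x0 + np.sqrt(1.0 - alpha_bars[t]) * noise

def ddpm_loss(eps_model, x0, cond, t, alpha_bars, rng):
    """Mean squared error between the true and predicted noise."""
    noise = rng.standard_normal(x0.shape)
    x_t = q_sample(x0, t, alpha_bars, noise)
    return np.mean((noise - eps_model(x_t, t, cond)) ** 2)

rng = np.random.default_rng(0)
alpha_bars = make_alpha_bars(1000)
hf_target = rng.standard_normal((32, 32))   # stand-in for an HF residual
lf_forecast = rng.random((32, 32))          # stand-in for the LF condition

# Placeholder denoiser that always predicts zero noise (hypothetical).
zero_model = lambda x_t, t, cond: np.zeros_like(x_t)
loss = ddpm_loss(zero_model, hf_target, lf_forecast, 500, alpha_bars, rng)
print("loss with a zero-noise predictor:", loss)
```

A trained denoiser would replace `zero_model`; the loss falls toward zero as its noise prediction approaches the noise actually injected by `q_sample`.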

Experiments are conducted on four public radar datasets (including NEXRAD, HKO, JMA, and BOM) with spatial resolution 256×256 and 5-minute cadence. The authors evaluate 10-, 20-, and 30-minute forecasts using CRPS, CSI, FSS, SSIM, and a dedicated HF-MSE metric. DuoCast consistently outperforms strong baselines (ConvLSTM, DGMR, NowcastNet, DiffCast, and PreDiff), achieving a CRPS reduction from ~0.20 to 0.12, CSI improvements from 0.55 to 0.68 at the 30 mm/h threshold, and a 4-6% increase in SSIM, indicating superior preservation of high-frequency structures. Ablation studies confirm that (a) removing the spectral split degrades performance dramatically, and (b) replacing the HF transformer with a CNN markedly worsens both HF-MSE and SSIM, validating the necessity of both the frequency decomposition and the attention-based HF model.
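Of the metrics listed, the Critical Success Index (CSI) is the simplest to state: hits divided by the sum of hits, misses, and false alarms, after binarizing forecast and observation at a rain-rate threshold. A minimal sketch follows, with a toy 2×2 example; the function name and the sample values are illustrative only.

```python
import numpy as np

def csi(pred, obs, threshold):
    """Critical Success Index: hits / (hits + misses + false alarms)."""
    pred_event = pred >= threshold
    obs_event = obs >= threshold
    hits = np.sum(pred_event & obs_event)
    misses = np.sum(~pred_event & obs_event)
    false_alarms = np.sum(pred_event & ~obs_event)
    denom = hits + misses + false_alarms
    # CSI is undefined when no event is forecast or observed.
    return float(hits / denom) if denom > 0 else float("nan")

obs = np.array([[0.0, 35.0],
                [40.0, 5.0]])    # observed rain rate, mm/h
pred = np.array([[32.0, 36.0],
                 [10.0, 0.0]])   # forecast rain rate, mm/h

score = csi(pred, obs, threshold=30.0)
print("CSI at 30 mm/h:", score)
```

In this toy case there is one hit, one miss, and one false alarm, so the score is 1/3. Correct negatives do not enter the formula, which is why CSI is a common choice for rare heavy-rain events.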

The paper also discusses limitations: the cutoff frequency ωc is manually set and may need data‑dependent tuning; front extraction relies on learned convolutions rather than physically measured front fields, which could affect interpretability; and the two‑stage diffusion pipeline incurs about a 1.5× increase in inference time (≈0.45 s per sequence on a single GPU), though still within real‑time constraints. Future directions include learning ωc adaptively, integrating multi‑modal physical front data, designing joint LF‑HF sampling schemes to reduce computational load, and extending the approach to longer lead times.

In summary, DuoCast provides a theoretically grounded and empirically validated solution to the longstanding trade‑off between global structural consistency and local detail fidelity in precipitation nowcasting. By leveraging spectral subspace decomposition and tailored diffusion models—convolutional for large‑scale front dynamics and transformer‑based for fine‑scale variability—it sets a new performance benchmark and opens avenues for frequency‑aware deep learning in other spatiotemporal forecasting domains.
