Vulnerabilities in AI-generated Image Detection: The Challenge of Adversarial Attacks

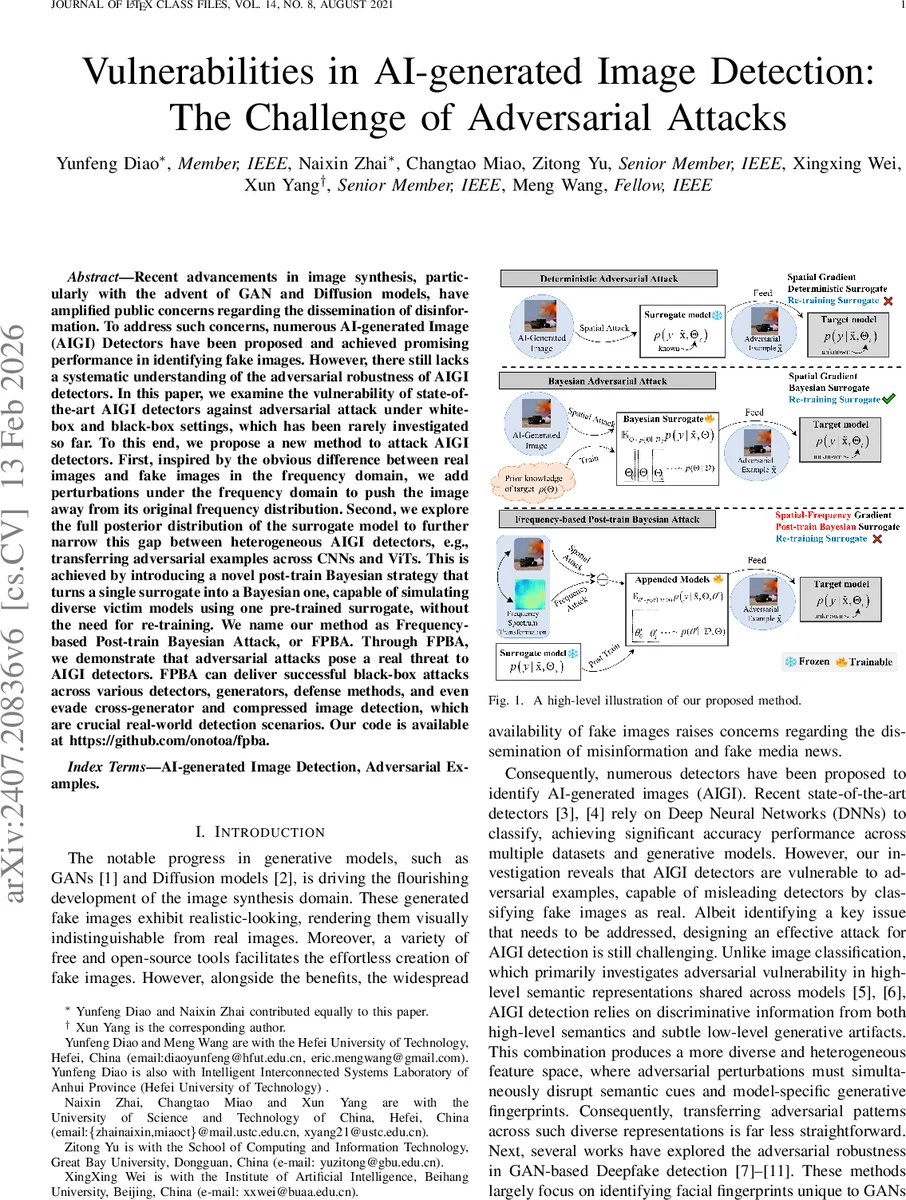

Recent advancements in image synthesis, particularly with the advent of GAN and Diffusion models, have amplified public concerns regarding the dissemination of disinformation. To address such concerns, numerous AI-generated Image (AIGI) detectors have been proposed and have achieved promising performance in identifying fake images. However, a systematic understanding of the adversarial robustness of AIGI detectors is still lacking. In this paper, we examine the vulnerability of state-of-the-art AIGI detectors to adversarial attacks under white-box and black-box settings, which has rarely been investigated so far. To this end, we propose a new method to attack AIGI detectors. First, inspired by the obvious difference between real images and fake images in the frequency domain, we add perturbations in the frequency domain to push the image away from its original frequency distribution. Second, we explore the full posterior distribution of the surrogate model to narrow the gap between heterogeneous AIGI detectors, e.g., when transferring adversarial examples across CNNs and ViTs. This is achieved by introducing a novel post-train Bayesian strategy that turns a single surrogate into a Bayesian one, capable of simulating diverse victim models using one pre-trained surrogate, without the need for re-training. We name our method Frequency-based Post-train Bayesian Attack, or FPBA. Through FPBA, we demonstrate that adversarial attacks pose a real threat to AIGI detectors. FPBA can deliver successful black-box attacks across various detectors, generators, and defense methods, and can even evade cross-generator and compressed-image detection, which are crucial real-world detection scenarios. Our code is available at https://github.com/onotoa/fpba.

💡 Research Summary

This paper presents a comprehensive analysis of the vulnerabilities inherent in AI-Generated Image (AIGI) detectors, focusing on the threat posed by adversarial attacks. As generative models like GANs and Diffusion models become more sophisticated and accessible, the risk of disinformation through synthetic imagery increases. While numerous AIGI detectors have been developed with high accuracy, their robustness against malicious, intentionally crafted inputs (adversarial examples) remains largely unexplored. This work systematically investigates this blind spot and proposes a novel, highly effective attack method named Frequency-based Post-train Bayesian Attack (FPBA).

The core problem stems from the fundamental difference between AIGI detection and standard image classification. Detectors rely on a combination of high-level semantics and subtle, low-level generative artifacts (fingerprints) to distinguish real from fake. This creates a heterogeneous and complex feature space, making it challenging to craft adversarial perturbations that transfer effectively across different detector architectures (e.g., from Convolutional Neural Networks to Vision Transformers).

The FPBA framework is designed to overcome these challenges through three synergistic innovations:

- Frequency-Domain Perturbation: The authors first observe a discernible statistical difference between real and AI-generated images in the frequency domain (e.g., via the Discrete Cosine Transform). Capitalizing on this, FPBA operates directly in the frequency domain, adding perturbations that shift an image away from its original frequency distribution, which obscures the tell-tale fingerprints detectors look for. To simulate diverse frequency responses from a single surrogate model, it applies random spectrum transformations, creating varied "views" of the input for computing the attack gradient.

- Post-Train Bayesian Strategy: This is the paper's key contribution for enhancing cross-architecture transferability. Instead of the computationally expensive process of training an ensemble of surrogate models, FPBA proposes a "post-train" Bayesian approach. It takes a single pre-trained surrogate model, freezes its entire feature extraction backbone, and appends a small, trainable Bayesian component (e.g., an MLP layer) to its end. The parameters of this appended module are then sampled using Bayesian inference techniques such as Stochastic Gradient Adaptive Hamiltonian Monte Carlo. The sampled modules act as an ensemble of classifiers on top of a fixed feature extractor, approximating the behavior of diverse victim models without retraining the large base network. This captures model uncertainty at low cost and improves attack transferability.

- Hybrid Gradient Aggregation: Recognizing that some detectors also rely on spatial-domain features, FPBA further strengthens its attack by aggregating gradients from both the frequency domain (computed on randomly transformed inputs) and the original spatial domain. This hybrid approach is intended to keep the adversarial perturbation effective against detectors that exploit artifacts in either or both domains.
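The three components above can be illustrated on a toy problem. The following is a minimal sketch under strong simplifying assumptions, not the authors' implementation: the "detector" is a hypothetical linear head over raw pixels (`score`), the spectrum transformation is assumed to be a random multiplicative mask on DCT coefficients, and posterior sampling is replaced by plain uniform jitter around the pre-trained head rather than the stochastic-gradient MCMC sampler mentioned in the summary. All function names and parameters (`rho`, `jitter`, `n_heads`, `n_views`) are illustrative.

```python
import math
import random

def dct_matrix(n):
    """Orthonormal DCT-II basis as an n x n list of rows."""
    mat = []
    for k in range(n):
        scale = math.sqrt((1.0 if k == 0 else 2.0) / n)
        mat.append([scale * math.cos(math.pi * (2 * i + 1) * k / (2 * n))
                    for i in range(n)])
    return mat

def matmul(a, b):
    return [[sum(a[i][k] * b[k][j] for k in range(len(b)))
             for j in range(len(b[0]))] for i in range(len(a))]

def transpose(a):
    return [list(col) for col in zip(*a)]

def dct2(x, d):
    """2D DCT of a square image x using the basis d."""
    return matmul(matmul(d, x), transpose(d))

def idct2(c, d):
    """Inverse of dct2 (d is orthonormal, so its transpose is its inverse)."""
    return matmul(matmul(transpose(d), c), d)

def score(w, x):
    """Toy linear detector (assumption): larger means 'more likely fake'."""
    return sum(wv * xv for wr, xr in zip(w, x) for wv, xv in zip(wr, xr))

def sample_head(w, rng, jitter):
    """Stand-in for posterior sampling: jitter the pre-trained head weights."""
    return [[v + rng.uniform(-jitter, jitter) for v in row] for row in w]

def freq_grad(w, d, rng, rho):
    """Gradient of score(w, T(x)) w.r.t. x, with T(x) = IDCT(mask * DCT(x)).
    T is linear and symmetric, so the gradient is T applied to w."""
    n = len(w)
    mask = [[rng.uniform(1 - rho, 1 + rho) for _ in range(n)] for _ in range(n)]
    coeffs = dct2(w, d)
    masked = [[m * c for m, c in zip(mr, cr)] for mr, cr in zip(mask, coeffs)]
    return idct2(masked, d)

def sign(v):
    return (v > 0) - (v < 0)

def hybrid_step(x, w, eps=0.05, n_heads=4, n_views=4, rho=0.1, jitter=0.02, seed=0):
    """One signed-gradient step that lowers the 'fake' score of image x."""
    rng = random.Random(seed)
    n = len(x)
    d = dct_matrix(n)
    g = [[0.0] * n for _ in range(n)]
    for _ in range(n_heads):              # average over sampled heads
        head = sample_head(w, rng, jitter)
        for _ in range(n_views):          # average over random spectrum views
            gf = freq_grad(head, d, rng, rho)
            for i in range(n):
                for j in range(n):
                    # spatial gradient of a linear head is the head itself
                    g[i][j] += head[i][j] + gf[i][j]
    # step against the aggregated gradient and clip to the valid pixel range
    return [[min(1.0, max(0.0, x[i][j] - eps * sign(g[i][j])))
             for j in range(n)] for i in range(n)]
```

Calling `hybrid_step(x, w)` on a small square image `x` (nested lists with values in [0, 1]) and head weights `w` of the same shape returns a perturbed image whose per-pixel change is bounded by `eps`. In a real attack the analytic gradients would be replaced by automatic differentiation through the surrogate network, and the step would be iterated.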

The authors conduct extensive experiments to validate FPBA’s efficacy. They evaluate against 17 state-of-the-art AIGI detectors based on various architectures (CNNs like ResNet, EfficientNet and Transformers like ViT, Swin Transformer), using images generated by diverse sources (Stable Diffusion, DALL-E 2, Midjourney). Evaluations cover both white-box (full knowledge of the surrogate) and practical black-box (unknown victim model) settings.

According to the reported results, FPBA consistently outperforms the baseline attack methods (including I-FGSM, MIM, DIM, and TIM) in terms of Attack Success Rate (ASR). The gains are most pronounced in transfer across heterogeneous architectures (e.g., from CNN-based to Transformer-based detectors). FPBA also remains effective in two challenging real-world scenarios: it evades detectors trained on one type of generator when attacking images from a different generator (the cross-generator setting), and it maintains high success rates when the adversarial images are subjected to JPEG compression, a common post-processing defense.
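For reference, ASR can be computed as sketched below. This uses one common definition, assumed here because the summary does not spell out the paper's exact formula: among fake images that the detector flags correctly before the attack, the fraction it labels as real afterwards. The function name and the label convention (`fake_label=1`) are illustrative.

```python
def attack_success_rate(clean_preds, adv_preds, fake_label=1):
    """Share of initially detected fakes that evade detection after the attack."""
    # Keep only samples the detector got right before the attack.
    detected = [adv for clean, adv in zip(clean_preds, adv_preds)
                if clean == fake_label]
    if not detected:
        return 0.0
    # An attack succeeds when the adversarial image is no longer labeled fake.
    return sum(adv != fake_label for adv in detected) / len(detected)
```

Restricting the denominator to images that were detected before the attack avoids crediting the attack for mistakes the detector already makes on clean inputs.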

In conclusion, this paper sounds a critical alarm for the field of AI-generated content detection. It demonstrates that current high-accuracy detectors are highly susceptible to carefully crafted adversarial attacks like FPBA. The proposed method is not only a powerful tool for vulnerability assessment but also sets a new benchmark for adversarial robustness. The findings underscore that for AIGI detectors to be trustworthy and deployable in safety-critical scenarios, adversarial robustness must be a primary design and evaluation criterion, not an afterthought. The open-source release of the code facilitates further research in developing more robust detectors and understanding the limits of synthetic media detection.
