Stroke of Surprise: Progressive Semantic Illusions in Vector Sketching

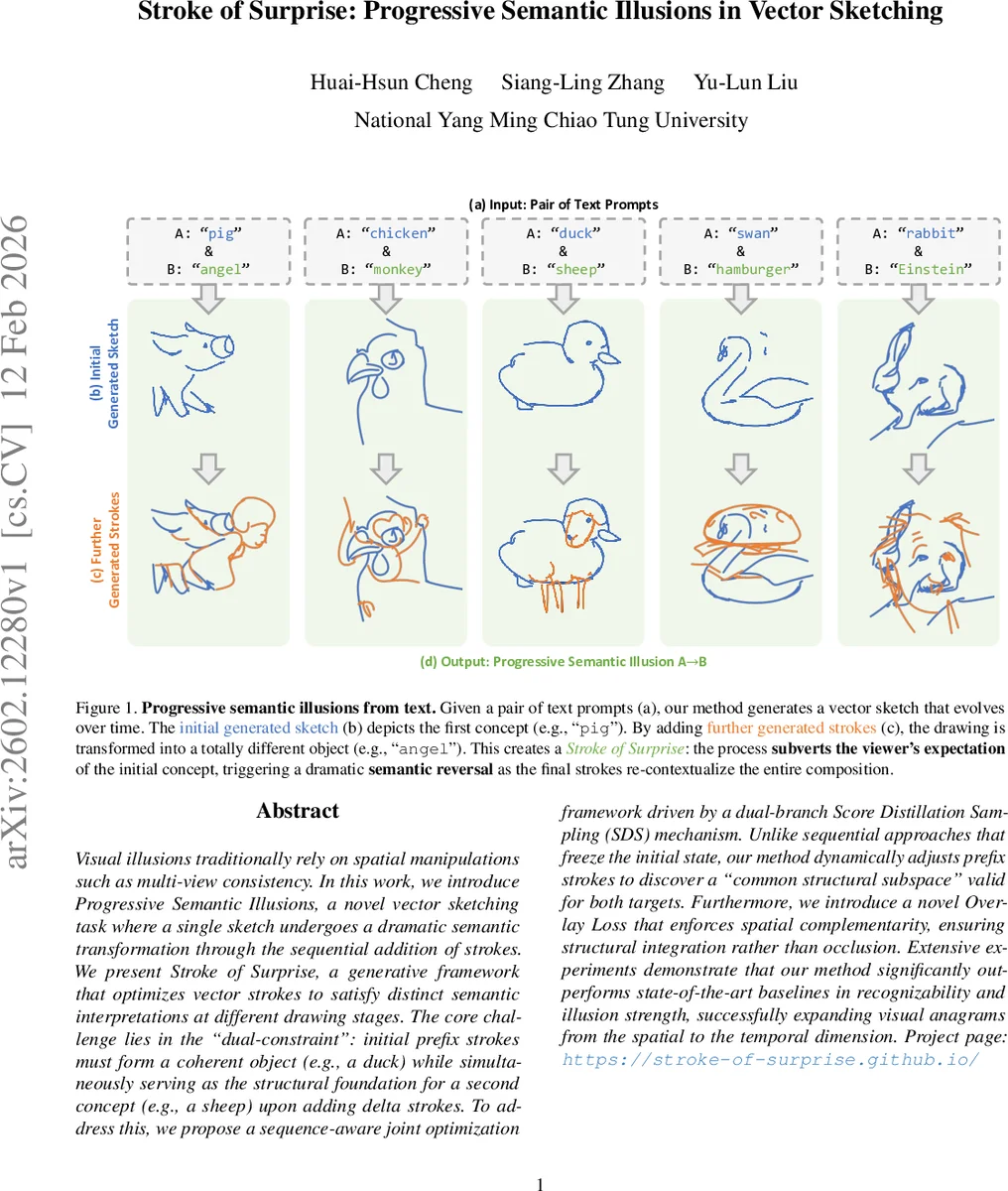

Visual illusions traditionally rely on spatial manipulations such as multi-view consistency. In this work, we introduce Progressive Semantic Illusions, a novel vector sketching task where a single sketch undergoes a dramatic semantic transformation through the sequential addition of strokes. We present Stroke of Surprise, a generative framework that optimizes vector strokes to satisfy distinct semantic interpretations at different drawing stages. The core challenge lies in the “dual-constraint”: initial prefix strokes must form a coherent object (e.g., a duck) while simultaneously serving as the structural foundation for a second concept (e.g., a sheep) upon adding delta strokes. To address this, we propose a sequence-aware joint optimization framework driven by a dual-branch Score Distillation Sampling (SDS) mechanism. Unlike sequential approaches that freeze the initial state, our method dynamically adjusts prefix strokes to discover a “common structural subspace” valid for both targets. Furthermore, we introduce a novel Overlay Loss that enforces spatial complementarity, ensuring structural integration rather than occlusion. Extensive experiments demonstrate that our method significantly outperforms state-of-the-art baselines in recognizability and illusion strength, successfully expanding visual anagrams from the spatial to the temporal dimension. Project page: https://stroke-of-surprise.github.io/

💡 Research Summary

The paper introduces a novel task called Progressive Semantic Illusion (PSI) in the domain of vector sketch generation. Unlike traditional visual illusions that rely on spatial tricks (multi‑view consistency, shadows, camouflaging, etc.), PSI requires a single SVG‑style sketch to convey one semantic concept at an early stage and, after the addition of further strokes, to be re‑interpreted as a completely different concept. The generative framework the authors propose for this task is named “Stroke of Surprise”.

The core technical challenge is the “dual‑constraint”: the initial prefix strokes must be recognizable as object A (e.g., a duck) while simultaneously forming a structural foundation that can be re‑contextualized into object B (e.g., a sheep) once the delta strokes are added. Existing raster‑based editing pipelines either overwrite the initial pixels (destructive editing) or treat the prefix as fixed, leading to semantic noise when later strokes are added. Vector‑based baselines such as SketchDreamer or SketchAgent use a greedy strategy that optimizes the prefix solely for A, causing clutter or loss of recognizability for B.

To solve this, the authors propose a dual‑branch Score Distillation Sampling (SDS) framework that jointly optimizes both the prefix and the full set of strokes. Two parallel branches are instantiated:

- Prefix branch renders only the prefix strokes, feeds the rasterized image into a frozen text‑to‑image diffusion model (e.g., Stable Diffusion) conditioned on prompt p₁ (object A), and computes an SDS loss L_SDS^pre.

- Full branch renders the complete sketch (prefix + delta), conditions the same diffusion model on prompt p₂ (object B), and computes an SDS loss L_SDS^full.

The total SDS loss is L_SDS = L_SDS^pre + L_SDS^full, and gradients are back‑propagated to all learnable stroke parameters (Bézier control points, widths, colors). Because the prefix strokes belong to both branches, they receive simultaneous gradients from both objectives, encouraging configurations that satisfy both semantic roles.
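The gradient routing described above can be illustrated with a toy sketch. It does not implement SDS: each branch is replaced by a simple quadratic pull toward a made-up target, and the names `toy_branch_grad`, `joint_gradient`, the stroke counts, and the step size are all assumptions introduced for illustration. The point is only that prefix strokes accumulate gradients from both branches, while delta strokes see the full branch alone.

```python
import numpy as np

def toy_branch_grad(points, target):
    # Gradient of 0.5 * ||points - target||^2.
    # This is a stand-in for a real SDS gradient, not the paper's loss.
    return points - target

def joint_gradient(strokes, k, target_a, target_b):
    """Sum the gradients of the prefix branch and the full branch."""
    grad = np.zeros_like(strokes)
    # Prefix branch: only the first k strokes are rendered, so only they get gradient.
    grad[:k] += toy_branch_grad(strokes[:k], target_a)
    # Full branch: every stroke (prefix and delta) is rendered.
    grad += toy_branch_grad(strokes, target_b)
    return grad

rng = np.random.default_rng(0)
N, k = 6, 2                              # hypothetical sizes
strokes = rng.normal(size=(N, 4, 2))     # 4 control points (x, y) per cubic Bezier
target_a = np.zeros((k, 4, 2))           # stand-in for the "object A" objective
target_b = np.ones((N, 4, 2))            # stand-in for the "object B" objective

grad = joint_gradient(strokes, k, target_a, target_b)
strokes_updated = strokes - 0.1 * grad   # one plain gradient-descent step
```

In a sequential baseline that freezes the prefix, the second line inside `joint_gradient` would effectively be masked to `grad[k:]`, so the prefix could never move toward a shape that also serves object B.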

A major failure mode of pure SDS is spatial overlap: delta strokes may simply occlude the prefix, destroying the illusion. The authors therefore introduce an Overlay Loss. They render the prefix and delta subsets separately, apply a Gaussian blur G_σ to each raster (producing soft masks \tilde I_pre and \tilde I_delta), and compute a normalized inner product:

L_overlay = 2 ⟨\tilde I_pre, \tilde I_delta⟩ / (‖\tilde I_pre‖₁ + ‖\tilde I_delta‖₁).

This penalizes excessive proximity and encourages spatial complementarity, ensuring that new strokes integrate structurally rather than hide the old ones. The final objective is L = L_SDS + λ_overlay·L_overlay.
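A minimal NumPy sketch of the overlay term follows, assuming the prefix and delta strokes have already been rasterized into 2‑D arrays with values in [0, 1]. The separable blur, the default `sigma`, and the small `eps` guard against division by zero are assumptions added here; the summary does not specify them.

```python
import numpy as np

def gaussian_kernel(sigma):
    # 1-D Gaussian truncated at about 3 sigma and normalized to sum to 1.
    radius = max(1, int(3 * sigma))
    x = np.arange(-radius, radius + 1)
    kernel = np.exp(-(x ** 2) / (2.0 * sigma ** 2))
    return kernel / kernel.sum()

def blur(image, sigma):
    # Separable Gaussian blur: filter rows, then columns.
    # Assumes both image sides are at least as long as the kernel.
    kernel = gaussian_kernel(sigma)
    rows = np.apply_along_axis(lambda r: np.convolve(r, kernel, mode="same"), 1, image)
    return np.apply_along_axis(lambda c: np.convolve(c, kernel, mode="same"), 0, rows)

def overlay_loss(pre, delta, sigma=2.0, eps=1e-8):
    # 2 <I_pre, I_delta> / (||I_pre||_1 + ||I_delta||_1) on the blurred rasters.
    soft_pre, soft_delta = blur(pre, sigma), blur(delta, sigma)
    inner = np.sum(soft_pre * soft_delta)
    return 2.0 * inner / (np.abs(soft_pre).sum() + np.abs(soft_delta).sum() + eps)

# Hypothetical rasters: one horizontal stroke each on a 32x32 canvas.
pre = np.zeros((32, 32)); pre[8, 4:28] = 1.0
far = np.zeros((32, 32)); far[24, 4:28] = 1.0    # well separated from `pre`
near = np.zeros((32, 32)); near[10, 4:28] = 1.0  # two pixels below `pre`

loss_far = overlay_loss(pre, far)
loss_near = overlay_loss(pre, near)
loss_same = overlay_loss(pre, pre)
```

Because the blur widens each stroke, the term is nonzero for strokes that are merely close, not only for strokes that overlap exactly, which is why it acts as a soft proximity penalty.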

Optimization starts with N randomly initialized strokes placed near the canvas center; the first k strokes form the prefix, and the remaining N‑k form the delta. At each iteration the two branches compute their respective SDS losses, the overlay loss is evaluated, and the combined gradient updates all stroke parameters. Because the prefix is continuously refined under both objectives, the optimization discovers a “common structural subspace” that can be interpreted as both A and B.
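The initialization and prefix/delta split can be sketched as below. The canvas size, the spread of the initial control points, the stroke counts, and the helper names `init_strokes` and `sample_bezier` are assumptions for illustration; the summary only states that strokes start near the canvas center and that the first k form the prefix.

```python
import numpy as np

def init_strokes(n_strokes, canvas=224, spread=0.1, seed=0):
    # Each stroke is a cubic Bezier curve: 4 control points with (x, y) coordinates,
    # drawn from a Gaussian centered on the canvas (hypothetical choice).
    rng = np.random.default_rng(seed)
    center = canvas / 2.0
    return center + rng.normal(scale=spread * canvas, size=(n_strokes, 4, 2))

def sample_bezier(ctrl, n_samples=16):
    # Evaluate one cubic Bezier curve at evenly spaced parameter values t in [0, 1].
    t = np.linspace(0.0, 1.0, n_samples)[:, None]
    p0, p1, p2, p3 = ctrl
    return ((1 - t) ** 3 * p0
            + 3 * (1 - t) ** 2 * t * p1
            + 3 * (1 - t) * t ** 2 * p2
            + t ** 3 * p3)

N, k = 16, 6                       # hypothetical stroke counts
strokes = init_strokes(N)
prefix, delta = strokes[:k], strokes[k:]   # first k strokes vs. remaining N - k
points = sample_bezier(strokes[0])         # polyline approximation of one stroke
```

A differentiable rasterizer would consume these control points directly; the polyline sampling here is only meant to show how a stroke's geometry follows from its four control points.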

Evaluation is performed with a GPT‑4o‑based vision‑language model (VLM) that scores four dimensions: (1) Phase‑1 recognizability, (2) Phase‑2 recognizability, (3) Illusion quality (ensuring the full sketch is more recognizable than the delta alone), and (4) Sketch quality (penalizing clutter). Additionally, quantitative metrics, namely CLIP similarity, ImageReward (IR), and Human Preference Score (HPS), are combined into a composite score:

R = (CLIP_p1·CLIP_p2 / CLIP_δ²) × (Φ(IR_p1)² + Φ(IR_p2)² – Φ(IR_δ)²) × (HPS_p1² + HPS_p2² – HPS_δ²).
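The composite score can be written as a small function to make its structure explicit. The summary does not define Φ, so it is left as a caller‑supplied function here; the standard normal CDF default is purely an assumption, as are the dictionary layout and the function names.

```python
import math

def normal_cdf(x):
    # Standard normal CDF; used as a default for Phi by assumption only.
    return 0.5 * (1.0 + math.erf(x / math.sqrt(2.0)))

def composite_score(clip, ir, hps, phi=normal_cdf):
    """Each argument maps 'p1', 'p2', 'delta' to that metric's value."""
    # Ratio term: both phases should score high relative to the delta alone.
    clip_term = clip["p1"] * clip["p2"] / clip["delta"] ** 2
    # Difference terms: reward both phases, penalize a recognizable delta.
    ir_term = phi(ir["p1"]) ** 2 + phi(ir["p2"]) ** 2 - phi(ir["delta"]) ** 2
    hps_term = hps["p1"] ** 2 + hps["p2"] ** 2 - hps["delta"] ** 2
    return clip_term * ir_term * hps_term

# Made-up inputs with an identity Phi, chosen so the arithmetic is easy to follow:
# (2*3/1^2) * (1 + 1 - 1) * (1 + 4 - 1) = 6 * 1 * 4 = 24
example = composite_score(
    clip={"p1": 2.0, "p2": 3.0, "delta": 1.0},
    ir={"p1": 1.0, "p2": 1.0, "delta": 1.0},
    hps={"p1": 1.0, "p2": 2.0, "delta": 1.0},
    phi=lambda x: x,
)
```

In every factor the delta‑only rendering lowers the score, which matches the stated intent that the delta strokes should not be recognizable as object B on their own.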

Experiments on a variety of text‑prompt pairs (e.g., “pig → angel”, “duck → sheep”, “rabbit → Einstein”) show that the proposed method outperforms raster baselines (Nano Banana Pro) and vector baselines (SketchDreamer, SketchAgent) by a large margin. Human‑evaluated recognizability scores improve by roughly 15‑20 %, and the overlay loss reduces spatial interference by over 30 % compared to a version without it.

The framework extends naturally to K‑phase (multi‑step) illusions. Strokes are partitioned into K disjoint subsets S₁,…,S_K; each cumulative set S₁:ᵢ = ⋃_{j=1}^{i} S_j renders concept A_i. Parallel SDS branches are instantiated for every phase, so early strokes receive gradients from all later phases, which encourages the initial sketch to remain a useful scaffold throughout the entire evolution (e.g., Apple → Sheep → Einstein).
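The cumulative partition can be made concrete with a short sketch. The subset sizes and function names are hypothetical; the code only shows how stroke indices map to phases and how many SDS branches each stroke would participate in.

```python
def cumulative_phases(sizes):
    """Split stroke indices into disjoint subsets S_1..S_K and their cumulative unions."""
    subsets, start = [], 0
    for size in sizes:
        subsets.append(list(range(start, start + size)))
        start += size
    cumulative, running = [], []
    for subset in subsets:
        running = running + subset      # S_{1:i} = S_1 ∪ ... ∪ S_i
        cumulative.append(running)
    return subsets, cumulative

def branches_per_stroke(cumulative, n_strokes):
    # A stroke is rendered in (and gets gradient from) every phase whose
    # cumulative set contains it.
    return [sum(i in phase for phase in cumulative) for i in range(n_strokes)]

sizes = [2, 3, 1]                        # hypothetical: K = 3 phases, 6 strokes
subsets, cumulative = cumulative_phases(sizes)
counts = branches_per_stroke(cumulative, sum(sizes))
```

For these sizes, strokes in the first subset take part in all three branches while the last stroke takes part in only one, which is the asymmetry that pushes early strokes toward shapes usable by every later concept.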

In summary, the paper makes three major contributions: (1) defining the Progressive Semantic Illusion task, extending visual illusion research from spatial to temporal dimensions; (2) proposing a joint dual‑branch SDS optimization with an overlay loss that discovers a shared structural subspace for dual semantics; and (3) demonstrating scalability to multi‑phase sequences and achieving state‑of‑the‑art performance on recognizability and illusion strength. The work opens a new research direction at the intersection of generative vector graphics, diffusion guidance, and perceptual psychology.
