Community Concealment from Unsupervised Graph Learning-Based Clustering

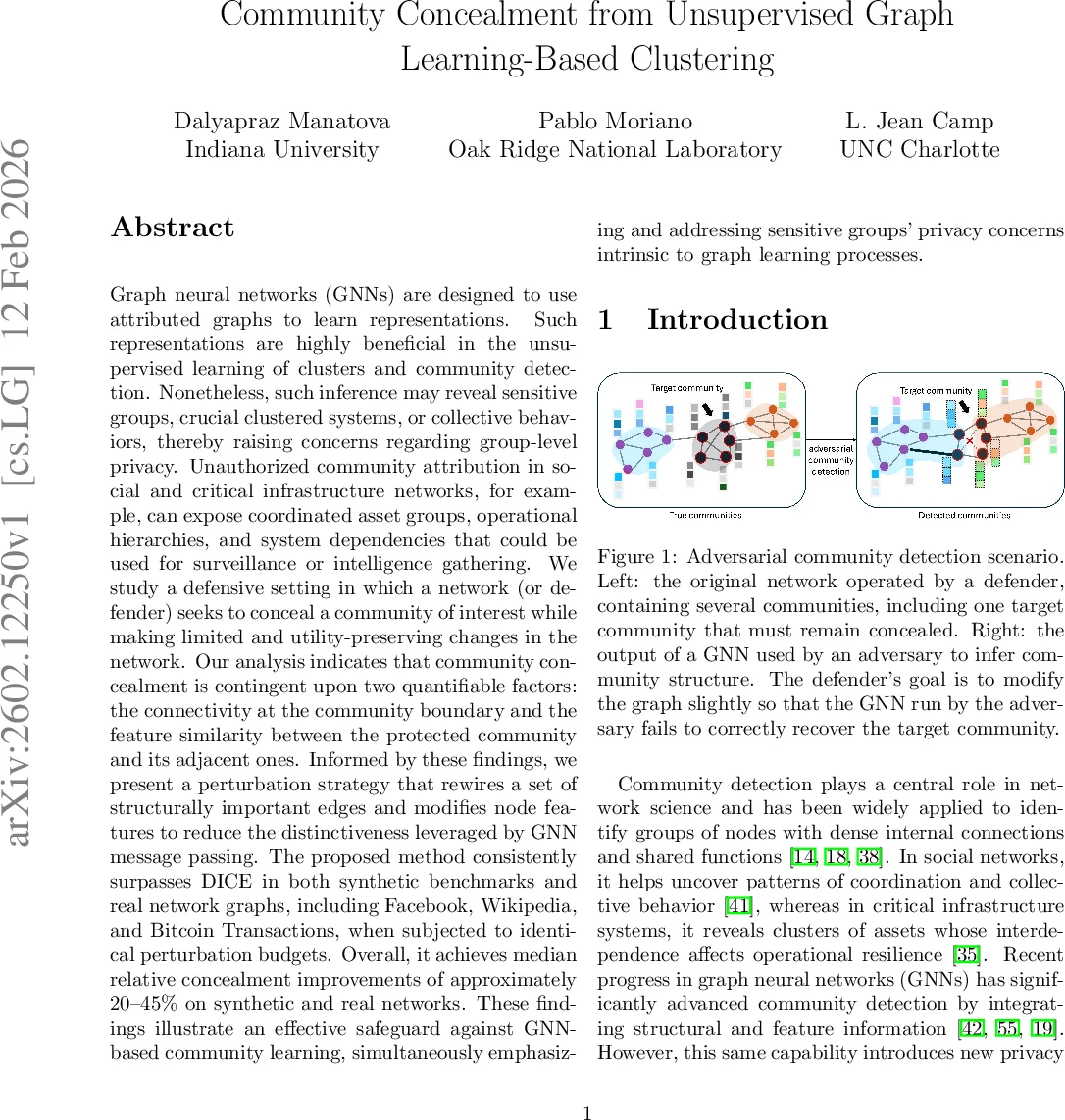

Graph neural networks (GNNs) learn representations from attributed graphs, and these representations are useful for unsupervised clustering and community detection. However, such inference may reveal sensitive groups, clustered systems, or collective behaviors, raising concerns about group-level privacy. In social and critical infrastructure networks, for example, community attribution can expose coordinated asset groups, operational hierarchies, and system dependencies that could be exploited for profiling or intelligence gathering. We study a defensive setting in which a data publisher (the defender) seeks to conceal a community of interest while making limited, utility-aware changes to the network. Our analysis indicates that community concealment is strongly influenced by two quantifiable factors: connectivity at the community boundary and feature similarity between the protected community and adjacent communities. Informed by these findings, we present a perturbation strategy that rewires a selected set of edges and modifies node features to reduce the distinctiveness that GNN message passing exploits. In our experiments on synthetic benchmarks and real-world graphs, the proposed method outperforms DICE under identical perturbation budgets, achieving median relative concealment improvements of approximately 20-45% across the evaluated settings. These results demonstrate a mitigation strategy against GNN-based community learning and highlight the group-level privacy risks intrinsic to graph learning.

💡 Research Summary

This paper addresses a novel privacy threat that arises when graph neural networks (GNNs) are used for unsupervised community detection. GNNs improve clustering performance by jointly exploiting graph topology and node attributes, but the same learned node embeddings also let an adversary infer sensitive groups, such as coordinated user clusters, operational units, or critical asset groups. The authors formalize a defensive scenario in which a data publisher (the defender) wants to hide a target community from a GNN‑based adversary while making only limited, utility‑preserving modifications to the graph.
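To make the threat concrete, the sketch below stands in for a GNN-based adversary with a deliberately simplified pipeline: parameter-free, GCN-style propagation (symmetric normalized adjacency with self-loops, as in SGC) followed by plain k-means on the resulting embeddings. This is an assumption for illustration only, not the model evaluated in the paper; the function names `propagate` and `kmeans` and the toy graph are hypothetical.

```python
import numpy as np

def propagate(adj, x, hops=2):
    """Parameter-free message passing (illustrative stand-in for a GNN):
    repeatedly multiply by the symmetric normalized adjacency with self-loops."""
    a_hat = adj + np.eye(adj.shape[0])
    d_inv_sqrt = 1.0 / np.sqrt(a_hat.sum(axis=1))
    s = d_inv_sqrt[:, None] * a_hat * d_inv_sqrt[None, :]
    h = x.astype(float)
    for _ in range(hops):
        h = s @ h  # each hop averages features over (weighted) neighbors
    return h

def kmeans(z, k, iters=50):
    """Lloyd's k-means with deterministic farthest-point initialization."""
    centers = [z[0]]
    for _ in range(1, k):
        # Distance from every point to its nearest already-chosen center.
        d = np.min([((z - c) ** 2).sum(axis=1) for c in centers], axis=0)
        centers.append(z[d.argmax()])
    centers = np.array(centers, dtype=float)
    for _ in range(iters):
        dists = ((z[:, None, :] - centers[None, :, :]) ** 2).sum(axis=2)
        labels = dists.argmin(axis=1)
        for c in range(k):
            if np.any(labels == c):
                centers[c] = z[labels == c].mean(axis=0)
    return labels

# Hypothetical toy graph: triangles {0,1,2} and {3,4,5} joined by the bridge edge 2-3.
adj = np.zeros((6, 6))
for u, v in [(0, 1), (0, 2), (1, 2), (3, 4), (3, 5), (4, 5), (2, 3)]:
    adj[u, v] = adj[v, u] = 1
x = np.array([[1.0, 0.0]] * 3 + [[0.0, 1.0]] * 3)
labels = kmeans(propagate(adj, x), k=2)
print(labels)
```

On this toy graph the two triangles come out as separate clusters, which is the kind of group-level inference the defender wants to suppress.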

Through extensive controlled experiments on synthetic LFR benchmarks and real‑world graphs (Facebook, Wikipedia, Bitcoin transaction networks), the study identifies two quantitative factors that dominate “hidability”: (1) the ratio of external to internal edges at the community boundary, and (2) the similarity of node feature vectors between the target community and its neighboring communities. Communities that are weakly connected to the rest of the graph or that possess feature vectors markedly different from their surroundings are easier to conceal; conversely, moderately connected communities with overlapping feature distributions are the most challenging to hide.
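Both factors can be computed directly from the adjacency and feature matrices. The following is a minimal sketch under stated assumptions, since the paper's exact definitions are not reproduced in this summary: `boundary_ratio` counts external versus internal edges of the target community, and `feature_similarity` takes the cosine similarity between the target's mean feature vector and the mean feature vector of adjacent non-target nodes (a hypothetical stand-in for "neighboring communities").

```python
import numpy as np

def _mask(n, target):
    """Boolean mask that is True for nodes in the target community."""
    mask = np.zeros(n, dtype=bool)
    mask[list(target)] = True
    return mask

def boundary_ratio(adj, target):
    """Ratio of edges leaving the target community to edges inside it."""
    mask = _mask(adj.shape[0], target)
    internal = adj[np.ix_(mask, mask)].sum() / 2  # undirected: each edge counted twice
    external = adj[np.ix_(mask, ~mask)].sum()
    return float(external / internal) if internal > 0 else float("inf")

def feature_similarity(adj, x, target):
    """Cosine similarity between the target's mean features and the mean
    features of adjacent non-target nodes (0.0 if there are none)."""
    mask = _mask(adj.shape[0], target)
    neighbors = ~mask & (adj[mask].sum(axis=0) > 0)
    if not neighbors.any():
        return 0.0
    a, b = x[mask].mean(axis=0), x[neighbors].mean(axis=0)
    return float(a @ b / (np.linalg.norm(a) * np.linalg.norm(b) + 1e-12))

# Hypothetical toy graph: triangles {0,1,2} and {3,4,5} joined by the bridge edge 2-3.
adj = np.zeros((6, 6))
for u, v in [(0, 1), (0, 2), (1, 2), (3, 4), (3, 5), (4, 5), (2, 3)]:
    adj[u, v] = adj[v, u] = 1
x = np.array([[1.0, 0.0]] * 3 + [[0.0, 1.0]] * 3)
ratio = boundary_ratio(adj, {0, 1, 2})
sim = feature_similarity(adj, x, {0, 1, 2})
print(ratio, sim)
```

Here the target triangle has three internal edges and one external edge (ratio 1/3), and its features are orthogonal to those of its only outside neighbor (similarity 0), so it sits in the "weakly connected, feature-distinct" regime described above.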

Guided by these insights, the authors propose FCom‑DICE (Feature‑Community‑guided DICE), an extension of the classic DICE (Delete Internally, Connect Externally) heuristic. Traditional DICE rewires edges by deleting links inside the target community and adding links from its members to nodes outside it, implicitly assuming a purely structural detector. FCom‑DICE augments this process in two ways: (i) it selects a small set of “influential” boundary edges for rewiring, and (ii) it makes minimal, budget‑constrained adjustments to the feature vectors of the incident nodes. The feature adjustments deliberately reduce the distinguishability of the target community during GNN message passing, thereby degrading the embeddings used for clustering.
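A rough sketch of the two ingredients follows. `dice` implements the classic structural heuristic: randomly delete edges inside the target community and add edges from target nodes to outside nodes, with the budget split evenly between the two (the even split is an assumption). `nudge_features` is a hypothetical illustration of a budget-constrained feature adjustment: each target node with external neighbors moves part of the way toward their mean feature vector, with the per-node change capped in L2 norm. The summary does not specify FCom‑DICE's edge-selection or feature-update rules, so this is not the authors' method.

```python
import numpy as np

def dice(adj, target, budget, rng):
    """DICE baseline: delete random internal edges of the target community and
    connect target nodes to random outside nodes (budget split evenly, assumed)."""
    adj = adj.copy()
    t = sorted(target)
    outside = [v for v in range(adj.shape[0]) if v not in target]
    internal = [(u, v) for i, u in enumerate(t) for v in t[i + 1:] if adj[u, v]]
    candidates = [(u, v) for u in t for v in outside if not adj[u, v]]
    n_del = min(budget // 2, len(internal))
    n_add = min(budget - n_del, len(candidates))
    for i in rng.permutation(len(internal))[:n_del]:
        u, v = internal[i]
        adj[u, v] = adj[v, u] = 0  # "delete internally"
    for i in rng.permutation(len(candidates))[:n_add]:
        u, v = candidates[i]
        adj[u, v] = adj[v, u] = 1  # "connect externally"
    return adj

def nudge_features(adj, x, target, step=0.5, max_norm=0.5):
    """Hypothetical feature adjustment: move each boundary target node toward the
    mean features of its non-target neighbors, capping the change's L2 norm."""
    x_new = x.astype(float).copy()
    outside = np.ones(adj.shape[0], dtype=bool)
    outside[list(target)] = False
    for u in target:
        ext = np.flatnonzero((adj[u] > 0) & outside)
        if ext.size == 0:
            continue  # interior node: leave its features untouched
        delta = step * (x[ext].mean(axis=0) - x[u])
        norm = np.linalg.norm(delta)
        if norm > max_norm:
            delta *= max_norm / norm
        x_new[u] += delta
    return x_new

# Hypothetical toy graph: triangles {0,1,2} and {3,4,5} joined by the bridge edge 2-3.
adj = np.zeros((6, 6))
for u, v in [(0, 1), (0, 2), (1, 2), (3, 4), (3, 5), (4, 5), (2, 3)]:
    adj[u, v] = adj[v, u] = 1
x = np.array([[1.0, 0.0]] * 3 + [[0.0, 1.0]] * 3)
target = {0, 1, 2}
adj_p = dice(adj, target, budget=2, rng=np.random.default_rng(0))
x_p = nudge_features(adj_p, x, target)
```

Computing each update from the original features `x` (rather than the partially updated copy) keeps the result independent of the order in which target nodes are visited.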

The evaluation uses two concealment metrics, M₁ and M₂, which measure, respectively, how widely the target nodes are dispersed across other detected communities and the degree to which they are hidden among non‑target nodes. Under identical perturbation budgets, FCom‑DICE consistently outperforms vanilla DICE, achieving median relative improvements of roughly 20–45% across all datasets. The advantage is most pronounced when boundary connectivity is moderate and feature similarity is high, precisely the regimes where purely structural perturbations fail. Importantly, overall network modularity and connectivity remain largely unchanged, indicating that the defense preserves the graph’s functional utility.
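Because the formal definitions of M₁ and M₂ are not reproduced in this summary, the sketch below uses two hypothetical proxies that follow their verbal descriptions, together with the median-relative-improvement arithmetic used to compare methods. `m1_dispersion` is 1 minus the largest share of target nodes falling in a single detected cluster; `m2_hiding` is the share of non-target members in the clusters that contain target nodes, weighted by how many target nodes each cluster holds. The numbers in the usage lines are toy values, not results from the paper.

```python
import numpy as np
from collections import Counter

def m1_dispersion(labels, target):
    """Hypothetical M1 proxy: 0 when all target nodes share one detected cluster."""
    counts = Counter(labels[v] for v in target)
    return 1.0 - max(counts.values()) / len(target)

def m2_hiding(labels, target):
    """Hypothetical M2 proxy: target-weighted share of non-target members in
    the detected clusters that contain target nodes."""
    labels = np.asarray(labels)
    counts = Counter(labels[v] for v in target)
    score = 0.0
    for c, n_target_in_c in counts.items():
        size = np.sum(labels == c)
        score += (n_target_in_c / len(target)) * (size - n_target_in_c) / size
    return float(score)

def median_relative_improvement(ours, baseline):
    """Median over runs of (ours - baseline) / baseline."""
    ours, baseline = np.asarray(ours, float), np.asarray(baseline, float)
    return float(np.median((ours - baseline) / baseline))

target = {0, 1, 2}
before = [0, 0, 0, 1, 1, 1]  # target recovered as its own cluster
after = [0, 0, 1, 1, 1, 1]   # node 2 absorbed by the neighboring cluster
print(m1_dispersion(before, target), m2_hiding(before, target))
print(m1_dispersion(after, target), m2_hiding(after, target))
rel = median_relative_improvement([0.6, 0.45, 0.33], [0.5, 0.3, 0.3])  # toy values
print(rel)
```

Under these proxies, a fully recovered target scores 0 on both metrics; moving one of three target nodes into a four-node outside cluster raises M₁ to 1/3 and M₂ to 0.25.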

Beyond empirical results, the paper discusses practical implications for protecting high‑value groups in operational and critical‑infrastructure networks. It highlights that defending against GNN‑based inference requires joint consideration of topology and attributes, and it suggests future work to integrate formal privacy guarantees (e.g., differential privacy) with the proposed perturbation framework. In sum, the work provides the first systematic study of group‑level privacy in graph learning, introduces a principled, feature‑aware perturbation strategy, and empirically validates its superiority over existing structural‑only baselines.
