Any House Any Task: Scalable Long-Horizon Planning for Abstract Human Tasks

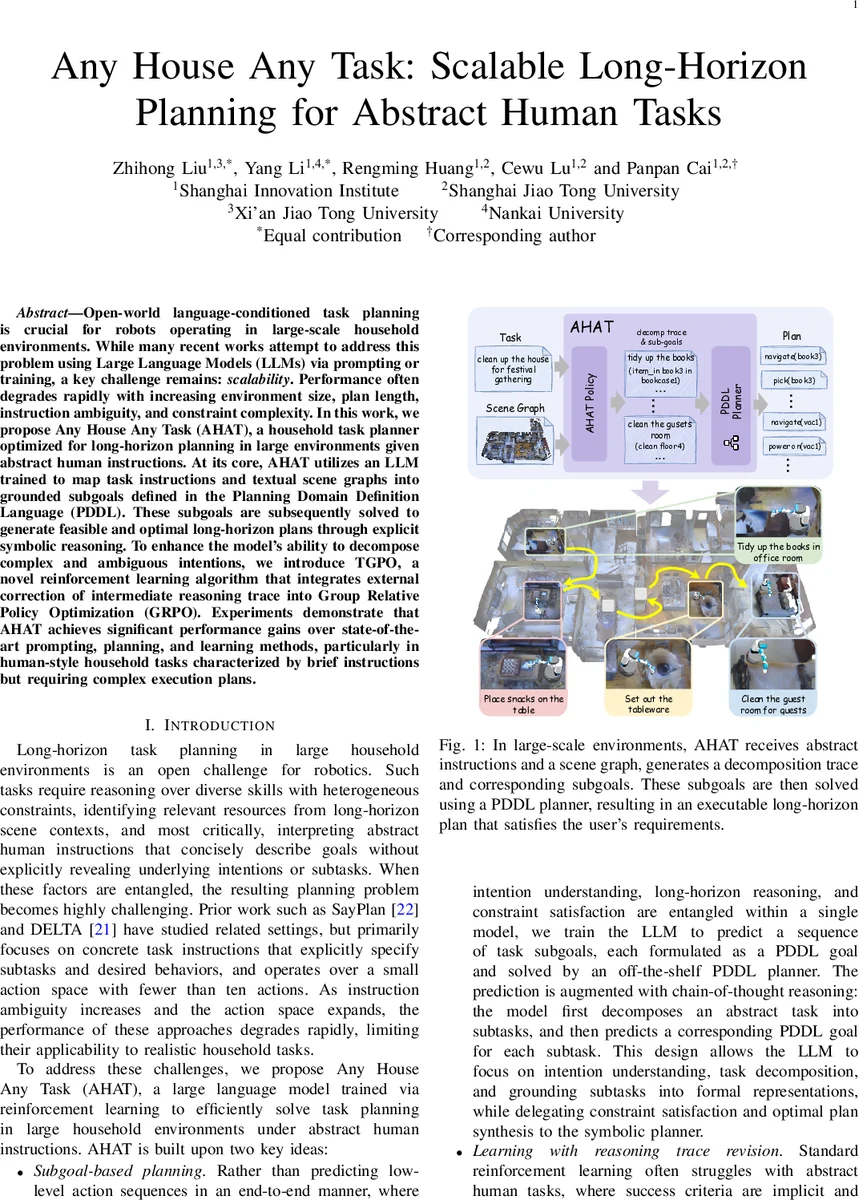

Open world language conditioned task planning is crucial for robots operating in large-scale household environments. While many recent works attempt to address this problem using Large Language Models (LLMs) via prompting or training, a key challenge remains scalability. Performance often degrades rapidly with increasing environment size, plan length, instruction ambiguity, and constraint complexity. In this work, we propose Any House Any Task (AHAT), a household task planner optimized for long-horizon planning in large environments given ambiguous human instructions. At its core, AHAT utilizes an LLM trained to map task instructions and textual scene graphs into grounded subgoals defined in the Planning Domain Definition Language (PDDL). These subgoals are subsequently solved to generate feasible and optimal long-horizon plans through explicit symbolic reasoning. To enhance the model’s ability to decompose complex and ambiguous intentions, we introduce TGPO, a novel reinforcement learning algorithm that integrates external correction of intermediate reasoning traces into Group Relative Policy Optimization (GRPO). Experiments demonstrate that AHAT achieves significant performance gains over state-of-the-art prompting, planning, and learning methods, particularly in human-style household tasks characterized by brief instructions but requiring complex execution plans.

💡 Research Summary

The paper tackles the problem of long‑horizon, language‑conditioned task planning for household robots operating in large‑scale homes. Existing approaches either rely on prompting large language models (LLMs) or train end‑to‑end planners, but they quickly lose performance as the environment grows, the plan lengthens, instructions become ambiguous, or constraints become more complex. To address these scalability issues, the authors introduce Any House Any Task (AHAT), a hybrid system that combines an LLM with a symbolic PDDL planner.

AHAT works in two stages. First, given a textual scene graph describing the house and an abstract human instruction, an LLM (initialized from Qwen‑2.5‑7B) generates a decomposition trace: a sequence of natural‑language sub‑tasks that break down the high‑level goal. Second, each sub‑task is grounded into one or more PDDL sub‑goals together with the relevant objects. These sub‑goals are fed to an off‑the‑shelf PDDL planner, which solves each sub‑problem while handling all logical and spatial constraints. The resulting sub‑plans are concatenated into a complete executable plan. This hierarchical design lets the LLM focus on language understanding and task decomposition, while the symbolic planner guarantees feasibility and optimality under complex constraints.

Training proceeds in two phases. A large synthetic dataset of 50 k household planning tasks is built from 308 scene graphs (HSSD and Gibson) and 1.6 k user personas that vary in age, occupation, and cultural background. Supervised fine‑tuning (SFT) uses high‑quality traces and PDDL goals generated by a strong LLM (e.g., GPT‑5) as ground truth. After a few SFT epochs, the authors switch to reinforcement learning with a novel algorithm called Trace‑Guided Policy Optimization (TGPO). TGPO augments Group Relative Policy Optimization (GRPO) by introducing an external “trace improver” that revises failed decomposition traces. During each RL iteration, the policy first produces a batch of candidate outputs; failed candidates are corrected, and the corrected trace is injected via token‑level constrained sampling while sub‑goal generation remains autoregressive. Rewards are computed from two components: (1) feasibility (whether the PDDL planner can solve the sub‑goals) and (2) task completion (verified by an auxiliary reviewer LLM). Group‑relative rewards are then used to compute robust policy gradients. This mechanism improves credit assignment for the intermediate reasoning steps and stabilizes learning on long‑horizon tasks.

Extensive evaluation covers in‑distribution held‑out scenes, out‑of‑distribution human‑generated instructions collected via crowdsourcing, and two public benchmarks (Behavior‑1K and PARTNR). AHAT consistently outperforms the strongest baselines, including state‑of‑the‑art general‑purpose LLMs (GPT‑5, Gemini‑3), prompting‑based planners (SayPlan, Delta), and learning‑based methods (SFT, GRPO, Reinforce++). The performance gap widens on tasks with highly abstract instructions, long plan horizons, and dense constraints, where baseline methods degrade sharply.

In summary, AHAT demonstrates that a carefully trained LLM can serve as an effective high‑level planner when paired with a symbolic engine for low‑level constraint handling, and that incorporating external trace correction via TGPO yields a scalable solution for long‑horizon household task planning. This work advances the feasibility of robots that can understand vague human requests and execute complex, multi‑step chores across large, realistic home environments.

Comments & Academic Discussion

Loading comments...

Leave a Comment