Best of Both Worlds: Multimodal Reasoning and Generation via Unified Discrete Flow Matching

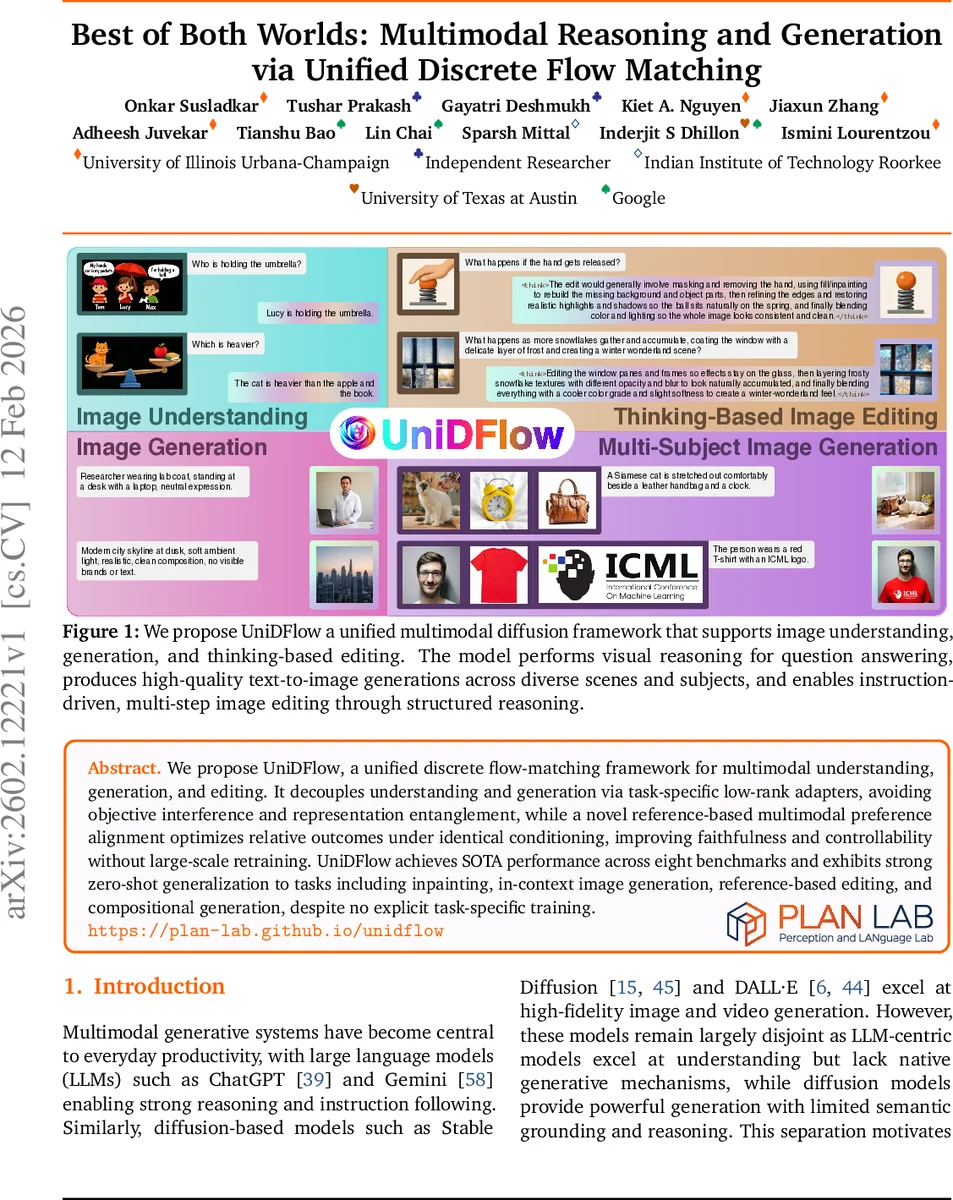

We propose UniDFlow, a unified discrete flow-matching framework for multimodal understanding, generation, and editing. It decouples understanding and generation via task-specific low-rank adapters, avoiding objective interference and representation entanglement, while a novel reference-based multimodal preference alignment optimizes relative outcomes under identical conditioning, improving faithfulness and controllability without large-scale retraining. UniDFlpw achieves SOTA performance across eight benchmarks and exhibits strong zero-shot generalization to tasks including inpainting, in-context image generation, reference-based editing, and compositional generation, despite no explicit task-specific training.

💡 Research Summary

UniDFlow introduces a unified discrete‑flow‑matching framework that simultaneously handles multimodal understanding, generation, and editing while avoiding the common pitfalls of existing multimodal models. The core idea is to keep a large pretrained vision‑language transformer (VLT) frozen and attach two lightweight low‑rank LoRA adapters: one dedicated to understanding (text alignment) and another to generation (vision alignment). By separating these adapters, UniDFlow prevents objective interference and representation entanglement that typically degrade performance when a single set of parameters is shared across tasks.

Training proceeds in three stages. Stage I (text alignment) adapts the frozen VLT to the discrete flow‑matching (DFM) objective for text tokens. A KL‑regularization term anchors the diffusion‑based output distribution to the original autoregressive language model, preserving the pretrained linguistic manifold. Stage II (vision alignment) maps images to discrete visual tokens via a pretrained tokenizer and trains only the generation LoRA to denoise these tokens under the same DFM loss, leaving the understanding adapters untouched. Stage III (reference‑based multimodal preference alignment, mRefDPo) introduces a pairwise log‑likelihood margin loss that compares “good” versus “bad” edited outputs conditioned on the same instruction and visual reference. This relative preference training directly optimizes faithfulness and controllability without requiring large‑scale retraining or additional RL stages.

A novel time‑conditioned RMSNorm (TSG‑RMSNorm) injects time information by modulating the RMSNorm scale parameters rather than altering activations, thereby preserving the pretrained weight distribution while enabling efficient few‑step sampling. UniDFlow can generate high‑quality images in as few as 20 denoising steps, a significant speedup over conventional diffusion models.

Empirically, UniDFlow achieves state‑of‑the‑art results on eight benchmarks covering VQA, text‑to‑image synthesis, and image editing, outperforming larger unified models by up to 13 % and beating strong baselines such as Qwen‑3 and DeepSeek‑VL2 by up to 24 %. Zero‑shot experiments demonstrate strong generalization to tasks like inpainting, in‑context generation, reference‑based editing, and compositional generation, all without task‑specific fine‑tuning.

In summary, UniDFlow’s contributions are threefold: (1) a unified discrete diffusion framework that repurposes a pretrained VLT as a generator over multimodal tokens, (2) a decoupled adapter architecture that isolates understanding from generation, and (3) a reference‑guided preference alignment that optimizes relative outcomes under identical conditioning. These innovations collectively deliver efficient training, fast inference, and superior performance across a wide range of multimodal tasks, while highlighting future directions such as scaling to ultra‑high‑resolution images and refining the definition of “good” versus “bad” edits for domain‑specific applications.

Comments & Academic Discussion

Loading comments...

Leave a Comment