SAM3-LiteText: An Anatomical Study of the SAM3 Text Encoder for Efficient Vision-Language Segmentation

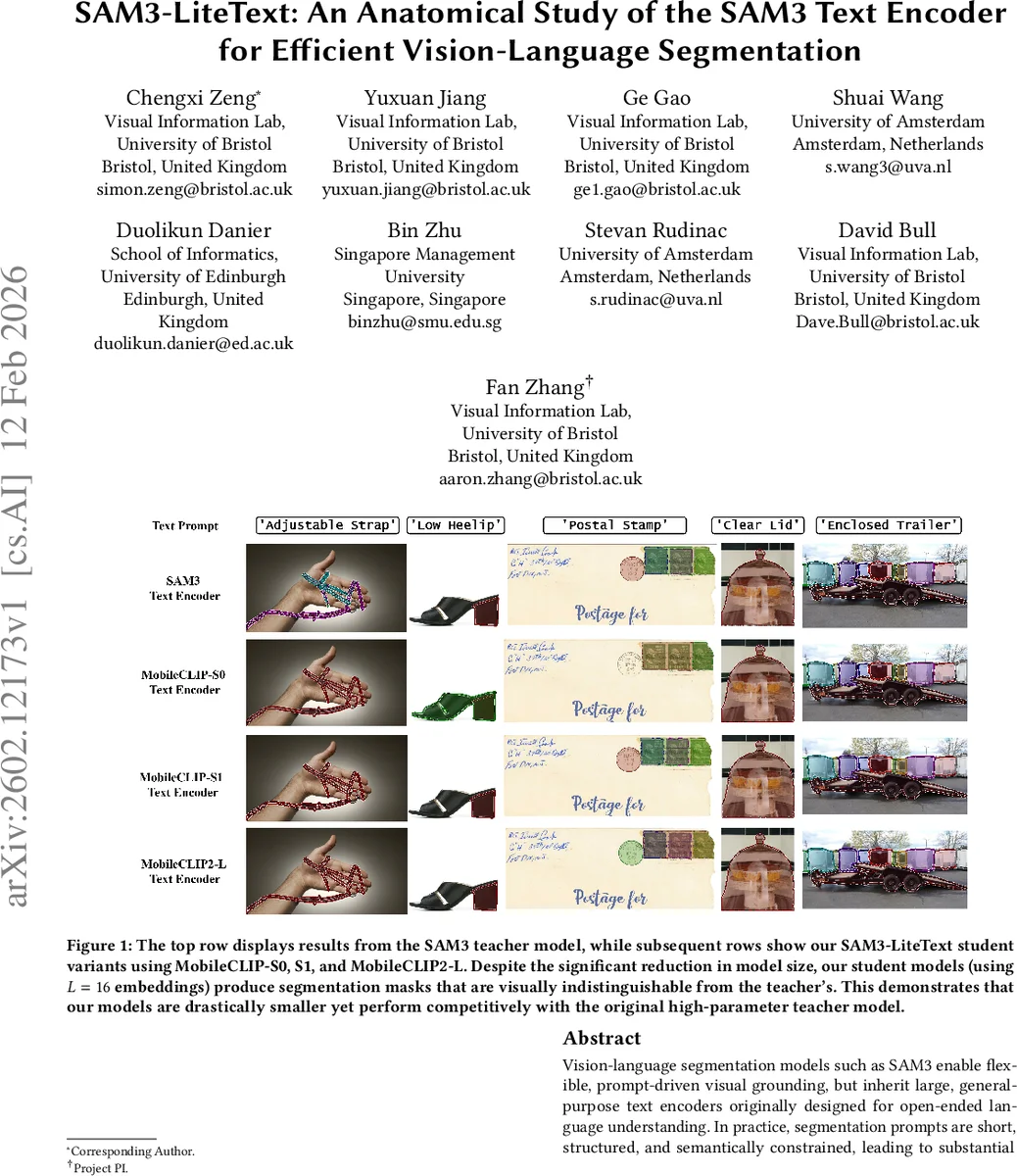

Vision-language segmentation models such as SAM3 enable flexible, prompt-driven visual grounding, but inherit large, general-purpose text encoders originally designed for open-ended language understanding. In practice, segmentation prompts are short, structured, and semantically constrained, leading to substantial over-provisioning in text encoder capacity and persistent computational and memory overhead. In this paper, we perform a large-scale anatomical analysis of text prompting in vision-language segmentation, covering 404,796 real prompts across multiple benchmarks. Our analysis reveals severe redundancy: most context windows are underutilized, vocabulary usage is highly sparse, and text embeddings lie on low-dimensional manifold despite high-dimensional representations. Motivated by these findings, we propose SAM3-LiteText, a lightweight text encoding framework that replaces the original SAM3 text encoder with a compact MobileCLIP student that is optimized by knowledge distillation. Extensive experiments on image and video segmentation benchmarks show that SAM3-LiteText reduces text encoder parameters by up to 88%, substantially reducing static memory footprint, while maintaining segmentation performance comparable to the original model. Code: https://github.com/SimonZeng7108/efficientsam3/tree/sam3_litetext.

💡 Research Summary

**

The paper presents a comprehensive anatomical study of the text encoder used in SAM3, a vision‑language segmentation model that enables prompt‑driven visual grounding. By collecting and analyzing 404,796 real prompts from six diverse segmentation benchmarks (RF100‑VL, LVIS, Ref‑COCO, and the SA‑Co suite), the authors demonstrate that the current CLIP‑style text encoder is dramatically over‑provisioned for the task at hand.

Key findings include: (1) Prompt length is short, with an average of 7.9 tokens; even the longest referring expressions average only 9.4 tokens. (2) Vocabulary usage is extremely sparse: only 35 % of the 49,408 BPE tokens ever appear in the corpus, and the top 100 tokens account for 58.5 % of all token occurrences. (3) The default context window of L = 32 results in 75.5 % of attention computation being wasted on padding, because most prompts occupy fewer than half the slots. Reducing the context length to L = 16 raises the information density to 0.48, incurs negligible truncation for short prompts, and only a modest 8 % truncation for the longest Ref‑COCO expressions. (4) Singular‑value decomposition of the token embedding matrix shows that the embeddings remain high‑rank (≈ 90 % variance requires 834 dimensions), indicating that low‑rank factorization would hurt semantic fidelity. In contrast, the positional embedding matrix exhibits high cosine similarity among positions 8‑31, reflecting under‑training of these slots because most prompts never reach them.

Motivated by these observations, the authors propose SAM3‑LiteText, a lightweight text‑encoding framework that replaces the original SAM3 encoder with a compact MobileCLIP student model (variants MobileCLIP‑S0, S1, and MobileCLIP2‑L). The distillation pipeline is domain‑aware: it fixes the context length to L = 16, treats prompts as “bags of concepts” to enforce permutation invariance, and adds a consistency loss that penalizes changes in the semantic embedding when token order is shuffled. Knowledge distillation is performed using both L2 distance between teacher and student embeddings and the proposed consistency term, ensuring that the student retains the semantic richness of the teacher while being far smaller.

Experiments on a suite of image segmentation benchmarks (COCO‑Ref, LVIS, RF100‑VL) and video segmentation datasets (YouTube‑VOS, DAVIS) show that the 42 M‑parameter student achieves 98.1 % of the teacher’s performance (average IoU drop < 0.3 %). Memory consumption drops from > 350 MB to ~45 MB, a reduction of over 87 %, and inference speed improves by roughly 1.8× on GPU and reaches real‑time rates on CPU/mobile hardware. The results confirm that aggressive compression of the text encoder does not compromise grounding accuracy when the compression respects the intrinsic properties of segmentation prompts.

The study’s contributions are threefold: (i) it provides the first quantitative characterization of text‑encoder redundancy in vision‑language segmentation, (ii) it introduces a domain‑specific distillation strategy that leverages short, sparse prompts and positional redundancy, and (iii) it demonstrates that a lightweight text encoder can effectively solve the static memory bottleneck for on‑device segmentation, paving the way for more sustainable, privacy‑preserving AI applications on edge devices. Future work may explore even smaller vocabularies, dynamic context‑length adaptation, and multi‑modal distillation that jointly compresses image and text encoders.

Comments & Academic Discussion

Loading comments...

Leave a Comment