Sci-CoE: Co-evolving Scientific Reasoning LLMs via Geometric Consensus with Sparse Supervision

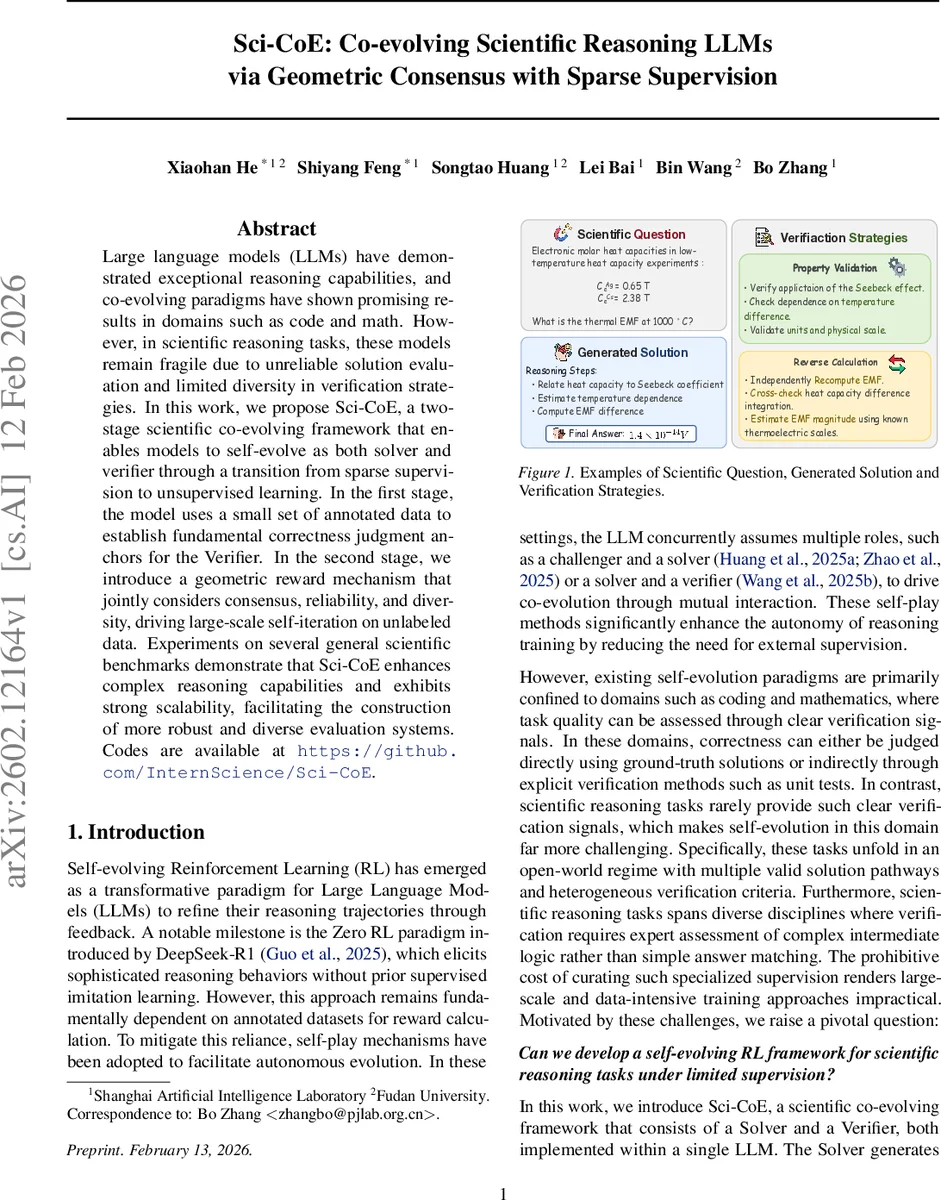

Large language models (LLMs) have demonstrated exceptional reasoning capabilities, and co-evolving paradigms have shown promising results in domains such as code and math. However, in scientific reasoning tasks, these models remain fragile due to unreliable solution evaluation and limited diversity in verification strategies. In this work, we propose Sci-CoE, a two-stage scientific co-evolving framework that enables models to self-evolve as both solver and verifier through a transition from sparse supervision to unsupervised learning. In the first stage, the model uses a small set of annotated data to establish fundamental correctness judgment anchors for the Verifier. In the second stage, we introduce a geometric reward mechanism that jointly considers consensus, reliability, and diversity, driving large-scale self-iteration on unlabeled data. Experiments on several general scientific benchmarks demonstrate that Sci-CoE enhances complex reasoning capabilities and exhibits strong scalability, facilitating the construction of more robust and diverse evaluation systems. Codes are available at https://github.com/InternScience/Sci-CoE.

💡 Research Summary

SciCoE (Scientific Co‑evolving) introduces a two‑stage self‑evolving framework for large language models (LLMs) to master scientific reasoning with only sparse supervision. The core challenge addressed is the lack of clear correctness signals and the heterogeneous verification criteria inherent to scientific problems, which makes existing self‑play approaches (successful in code and math) ineffective. SciCoE tackles this by embedding both a Solver and a Verifier within a single LLM, allowing the model to generate candidate solutions and, simultaneously, natural‑language verification strategies that assess those solutions from multiple scientific perspectives (e.g., physical constraints, logical consistency, reverse calculations).

Stage 1 – Anchored Learning – uses a tiny fraction (1‑10 %) of labeled scientific questions. The Solver receives a binary reward (1 for exact match with the ground‑truth answer, 0 otherwise), providing a minimal alignment signal that anchors the model’s reasoning trajectory. The Verifier is trained to produce discriminative strategies: a strategy receives a positive sign if it accepts all correct solutions and a negative sign otherwise; its final reward additionally penalizes acceptance of incorrect solutions. Because Solver and Verifier share parameters, the authors adopt a sequential PPO update (first on solution data, then on strategy data) to avoid destabilizing interference.

Stage 2 – Unsupervised Co‑evolution – scales to a large corpus of unlabeled scientific questions. Without ground‑truth answers, the Solver’s reward is redefined as the consensus rate across multiple verification strategies: r_sol_i = (1/M) Σ_j Eval(s_i, v_j). Solutions whose pass‑rate exceeds a threshold τ (e.g., 0.8) are collected as a high‑consensus set S⁺(q). The Verifier’s reward now comprises three components:

- Consistency – derived from Eq. (6) using the high‑consensus set, encouraging strategies that accept S⁺(q) while rejecting others.

- Reliability – measured by the proximity of a strategy’s embedding (obtained via a pretrained encoder such as Qwen3‑Embedding‑8B) to the centroid of its semantic cluster, under the assumption that strategies close to the cluster center are less likely to hallucinate.

- Diversity – explicitly rewarded by encouraging embeddings to spread across multiple clusters, preventing the verifier from collapsing to a single generic strategy.

The geometric reward mechanism thus balances the pull toward reliable, high‑consensus verification with a push for diverse, complementary strategies. Both Solver and Verifier are optimized jointly via PPO on the combined reward signal, maintaining a closed feedback loop where better solutions improve verification learning and richer verification strategies, in turn, guide the solver toward more robust reasoning.

Experiments on a suite of general scientific benchmarks (including physics, chemistry, biology, and mathematics QA sets) demonstrate that SciCoE consistently outperforms baseline LLMs and prior self‑evolving methods that lack dedicated verification. Accuracy gains range from 5 % to 12 % absolute points, with the most pronounced improvements on multi‑step, open‑ended questions. Analysis of the verifier’s strategy embeddings shows progressive formation of high‑reliability clusters while preserving inter‑cluster diversity, confirming the effectiveness of the geometric reward design.

In summary, SciCoE provides a practical pathway for LLMs to acquire both solution generation and rigorous verification capabilities in scientific domains, even when expert annotations are scarce. By jointly evolving these capabilities through consensus‑based and geometry‑aware rewards, the framework achieves stronger, more reliable scientific reasoning and lays groundwork for future autonomous scientific AI systems. The authors release code and data to facilitate further research.

Comments & Academic Discussion

Loading comments...

Leave a Comment