3DGSNav: Enhancing Vision-Language Model Reasoning for Object Navigation via Active 3D Gaussian Splatting

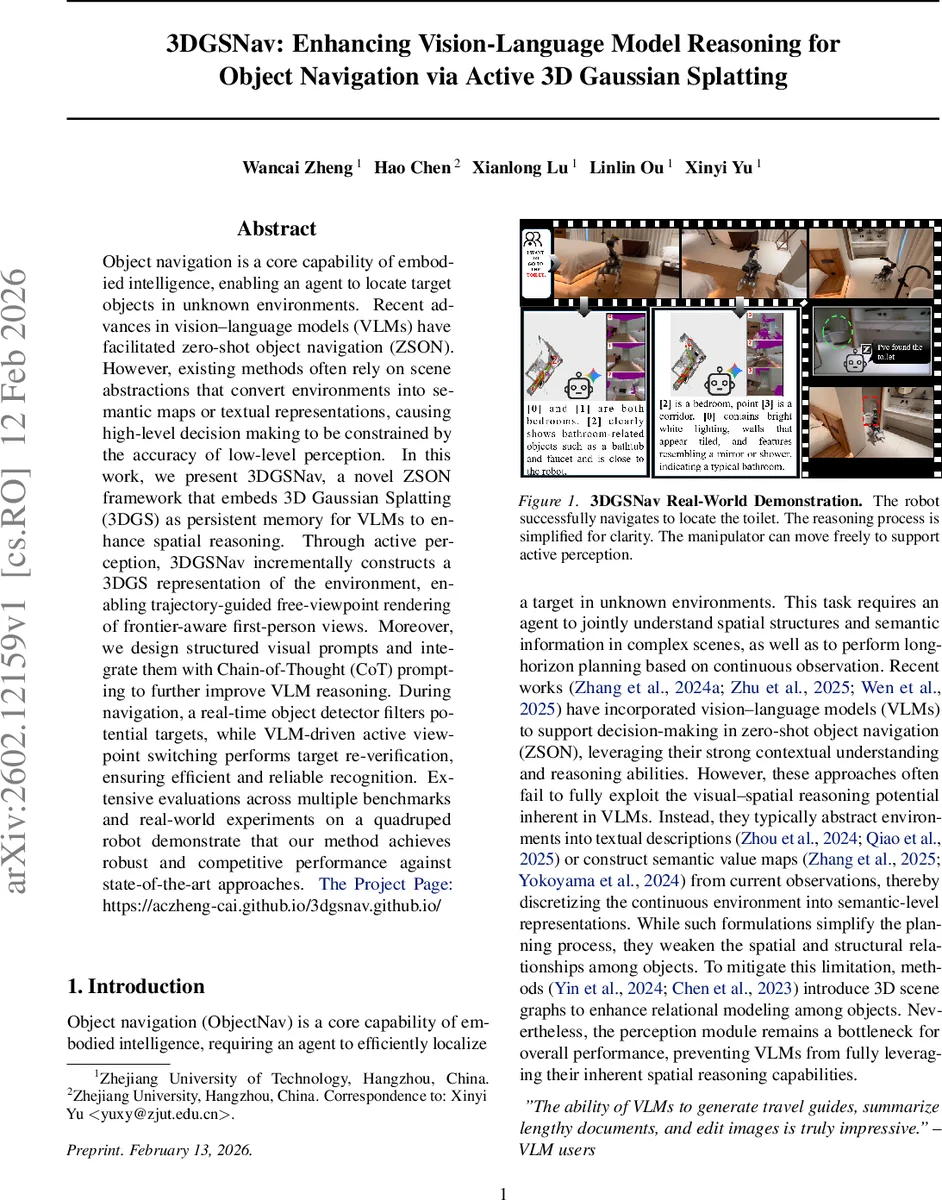

Object navigation is a core capability of embodied intelligence, enabling an agent to locate target objects in unknown environments. Recent advances in vision-language models (VLMs) have facilitated zero-shot object navigation (ZSON). However, existing methods often rely on scene abstractions that convert environments into semantic maps or textual representations, causing high-level decision making to be constrained by the accuracy of low-level perception. In this work, we present 3DGSNav, a novel ZSON framework that embeds 3D Gaussian Splatting (3DGS) as persistent memory for VLMs to enhance spatial reasoning. Through active perception, 3DGSNav incrementally constructs a 3DGS representation of the environment, enabling trajectory-guided free-viewpoint rendering of frontier-aware first-person views. Moreover, we design structured visual prompts and integrate them with Chain-of-Thought (CoT) prompting to further improve VLM reasoning. During navigation, a real-time object detector filters potential targets, while VLM-driven active viewpoint switching performs target re-verification, ensuring efficient and reliable recognition. Extensive evaluations across multiple benchmarks and real-world experiments on a quadruped robot demonstrate that our method achieves robust and competitive performance against state-of-the-art approaches.The Project Page:https://aczheng-cai.github.io/3dgsnav.github.io/

💡 Research Summary

3DGSNav introduces a novel zero‑shot object navigation (ZSON) framework that leverages 3D Gaussian Splatting (3DGS) as a persistent, queryable memory for large vision‑language models (VLMs). The system continuously builds a dense 3D representation from RGB‑D observations, where each Gaussian primitive stores position, opacity, color, and covariance. By rendering these primitives directly into images, the method preserves fine‑grained geometry and visual detail that are typically lost in semantic‑map or textual abstractions.

Active perception is a cornerstone of the approach. Instead of rotating the robot to scan the environment, the method computes an opacity field from the current 3DGS view, clusters low‑opacity regions with DBSCAN, and selects pitch‑yaw angles that maximize coverage of unseen space. The robot then virtually re‑orients its camera to these angles, efficiently filling perceptual gaps without costly mechanical motions.

Frontier points—boundaries between explored and unexplored regions—are extracted from the 3DGS‑based occupancy map. To avoid redundant rendering of many nearby frontiers, the authors construct a distance field, detect local maxima as skeleton seeds, and apply watershed segmentation. The centroid of each segment becomes a representative frontier, reducing computational load while preserving contextual information.

For planning, a guidance trajectory is computed with Dijkstra’s algorithm on a cost map that penalizes proximity to obstacles exponentially. This trajectory informs a free‑viewpoint optimization that starts from a pose selected by balancing curvature and distance to the frontier. The final viewpoint is refined by minimizing a composite loss that includes (1) opacity balance (ensuring both observed and unobserved regions appear), (2) view‑coverage loss, (3) cosine similarity between the rendered view and the target description, and (4) trajectory conformity. The result is a first‑person view (FPV) that is information‑dense and well‑aligned with the navigation goal.

Structured visual prompts are generated from the optimized FPV and a bird’s‑eye view (BEV). Online annotations—such as bounding boxes, distance tags, and confidence scores—are embedded directly into the images. These visual prompts are combined with Chain‑of‑Thought (CoT) textual prompts, guiding the VLM to reason step‑by‑step about spatial relationships, semantic similarity, and frontier selection. The VLM thus produces a ranked list of candidate frontiers or directly issues action commands.

During execution, a lightweight real‑time object detector filters potential target instances. When detection confidence is low or multiple candidates appear, the VLM is invoked to request an active viewpoint change, rendering a new FPV from the 3DGS memory for re‑verification. The verified observation is fed back into the map, closing the perception‑planning loop.

Extensive experiments on several simulated benchmarks and real‑world trials with a quadruped robot demonstrate that 3DGSNav matches or exceeds state‑of‑the‑art ZSON methods. Ablation studies confirm that (i) 3DGS memory dramatically improves spatial reasoning, (ii) active perception reduces the number of required viewpoints, and (iii) the combination of structured visual prompts with CoT substantially boosts VLM decision quality.

In summary, the paper contributes: (1) a persistent 3DGS memory that preserves continuous geometry for VLM consumption, (2) an active perception pipeline that selects informative viewpoints based on opacity, (3) a frontier clustering and free‑viewpoint optimization framework that yields high‑quality visual inputs, and (4) a prompt engineering strategy that unlocks the spatial reasoning potential of large multimodal models without resorting to coarse scene abstractions. This work opens a promising path toward integrating advanced 3D scene representations with large language‑vision models for robust embodied intelligence.

Comments & Academic Discussion

Loading comments...

Leave a Comment