FAIL: Flow Matching Adversarial Imitation Learning for Image Generation

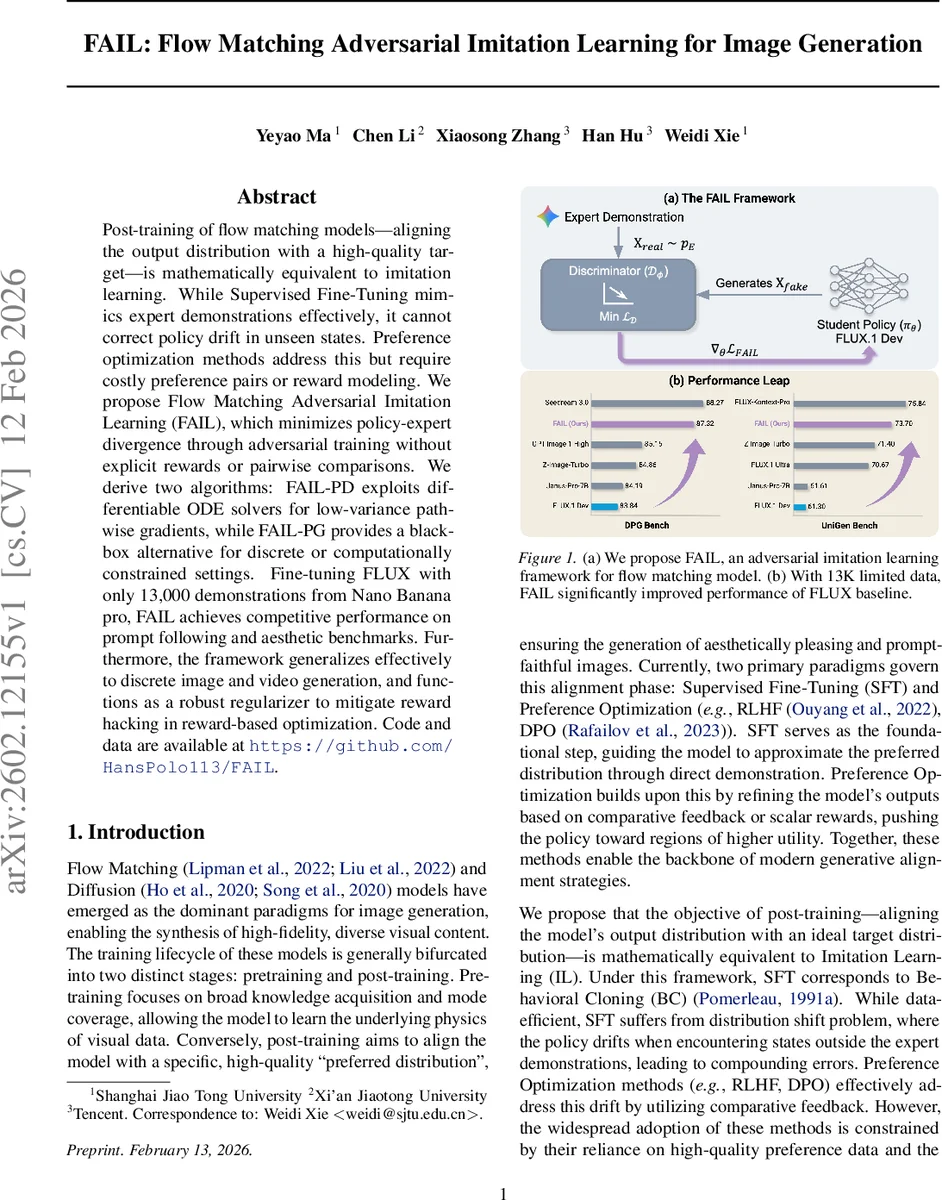

Post-training of flow matching models-aligning the output distribution with a high-quality target-is mathematically equivalent to imitation learning. While Supervised Fine-Tuning mimics expert demonstrations effectively, it cannot correct policy drift in unseen states. Preference optimization methods address this but require costly preference pairs or reward modeling. We propose Flow Matching Adversarial Imitation Learning (FAIL), which minimizes policy-expert divergence through adversarial training without explicit rewards or pairwise comparisons. We derive two algorithms: FAIL-PD exploits differentiable ODE solvers for low-variance pathwise gradients, while FAIL-PG provides a black-box alternative for discrete or computationally constrained settings. Fine-tuning FLUX with only 13,000 demonstrations from Nano Banana pro, FAIL achieves competitive performance on prompt following and aesthetic benchmarks. Furthermore, the framework generalizes effectively to discrete image and video generation, and functions as a robust regularizer to mitigate reward hacking in reward-based optimization. Code and data are available at https://github.com/HansPolo113/FAIL.

💡 Research Summary

The paper reframes the post‑training alignment of flow‑matching image generators as an adversarial imitation‑learning problem. In this view, the generator policy πθ is the deterministic ODE‑based sampling process of a flow‑matching model, while a discriminator Dω distinguishes generated samples from expert demonstrations. The objective becomes a minimax game that minimizes the Jensen‑Shannon divergence between the policy distribution and the expert distribution, thereby eliminating the need for explicit reward modeling or costly pairwise preference data.

Two concrete algorithms are derived. FAIL‑PD (Pathwise Derivative) exploits the differentiable nature of the ODE solver. Instead of back‑propagating through the entire integration trajectory, a random time t is sampled, a single denoising step is performed, and an approximate clean image x′₀ is obtained. The discriminator’s log‑sigmoid output is then differentiated with respect to the flow parameters, yielding a low‑variance, dense gradient. This approach is theoretically equivalent to a deterministic policy‑gradient method and inherits the local convergence guarantees of GANs, while dramatically reducing memory and compute overhead.

FAIL‑PG (Policy Gradient) provides a black‑box alternative for settings where back‑propagation through the dynamics is infeasible (e.g., discrete token generation or massive discriminators). The discriminator output is treated as a scalar reward r(x)=−log(1−σ(Dω(x))). A REINFORCE‑style score‑function estimator updates the policy, and the likelihood ratio needed for the policy‑gradient is approximated by the difference of Conditional Flow Matching (CFM) losses, which serve as an ELBO surrogate. The update adopts a PPO‑style clipped surrogate objective with an explicit KL‑divergence constraint to a reference (pre‑trained) policy, forming the Flow Policy Optimization (FPO) procedure.

Three discriminator architectures are explored: (1) a vision‑foundation‑model (VFM) backbone such as CLIP/DINO, (2) a flow‑matching (FM) backbone that re‑uses the generator’s own feature extractor, and (3) a vision‑language model (VLM) based on Qwen3‑VL‑2B‑Instruct, which ingests both the image and its text prompt. The VLM variant yields the strongest semantic alignment, especially when only a single image per prompt is available.

Experiments use 13 k prompt‑image pairs generated by Gemini 3 Pro (one image per prompt) as expert data. The base policy is FLUX.1‑dev. Training runs on 32 NVIDIA H20 GPUs with a batch size of 128 (3 policy rollouts + 1 expert per prompt). FAIL‑PD raises UniGen‑Bench scores from 61.61 (baseline) to 73.70 and DPG‑Bench from 84.15 to 87.32, outperforming supervised fine‑tuning (SFT) and several RLHF configurations that use the same limited data. Moreover, integrating FAIL as a regularizer into standard RLHF pipelines mitigates reward‑hacking phenomena, demonstrating its utility beyond pure alignment.

Stability techniques include a hybrid imitation batch (mixing online policy samples with expert demonstrations), discriminator warm‑up (freezing the policy initially), and KL‑regularization to the pre‑trained reference policy. Ablations show that the VLM discriminator consistently outperforms VFM and FM variants, and that FAIL‑PD is preferable when the ODE solver is fully differentiable, while FAIL‑PG is essential for discrete or computationally constrained scenarios.

The contributions are threefold: (1) a formal equivalence between flow‑matching post‑training and adversarial imitation learning, (2) two practical algorithms covering both white‑box and black‑box settings, and (3) empirical evidence that a modest amount of high‑quality expert data suffices to achieve state‑of‑the‑art prompt fidelity and aesthetic quality. Limitations include reliance on a differentiable ODE solver for FAIL‑PD and potential instability of the discriminator, which can still misguide the policy if not properly regularized. Future work may explore larger multimodal discriminators, curriculum‑based expert data acquisition, and extensions to video generation.

Comments & Academic Discussion

Loading comments...

Leave a Comment