OServe: Accelerating LLM Serving via Spatial-Temporal Workload Orchestration

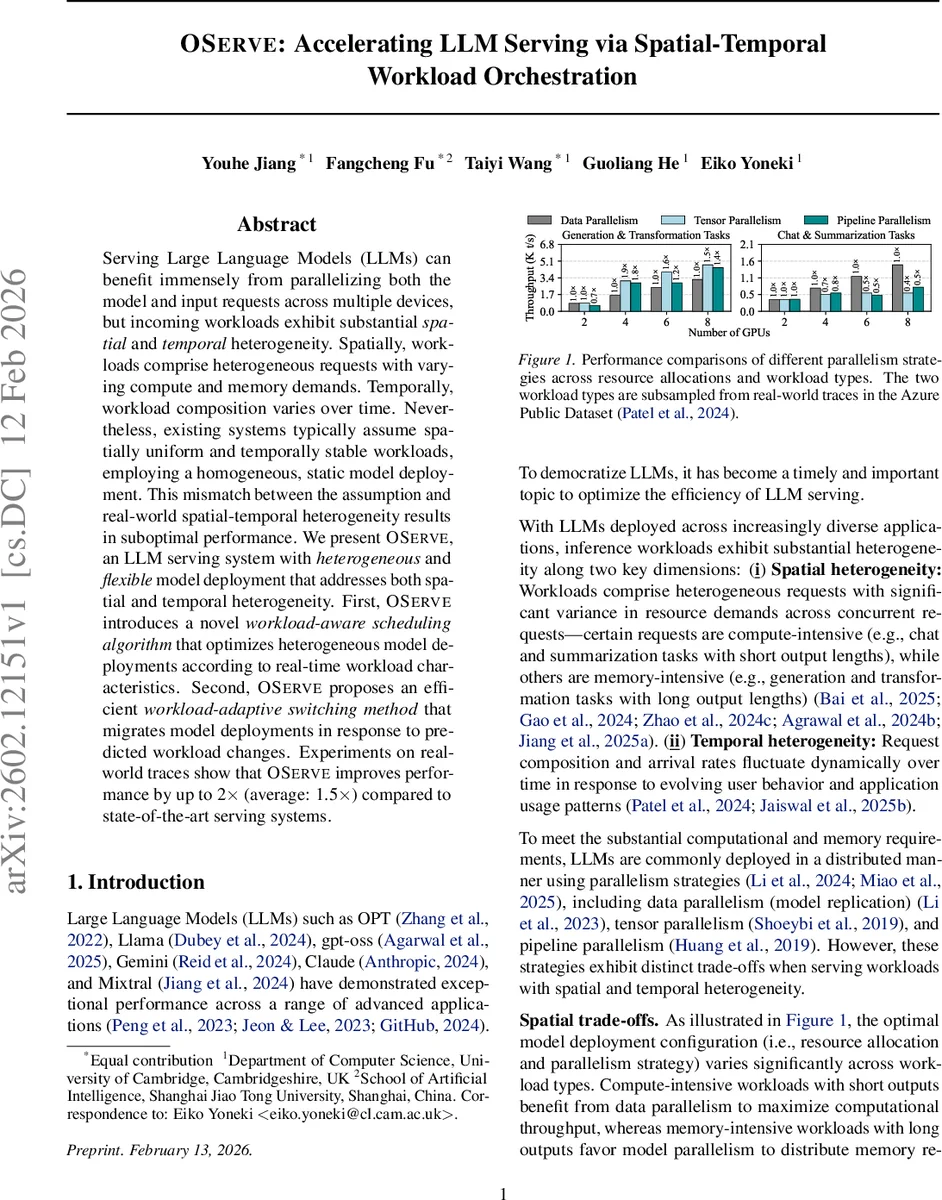

Serving Large Language Models (LLMs) can benefit immensely from parallelizing both the model and input requests across multiple devices, but incoming workloads exhibit substantial spatial and temporal heterogeneity. Spatially, workloads comprise heterogeneous requests with varying compute and memory demands. Temporally, workload composition varies over time. Nevertheless, existing systems typically assume spatially uniform and temporally stable workloads, employing a homogeneous, static model deployment. This mismatch between the assumption and real-world spatial-temporal heterogeneity results in suboptimal performance. We present OServe, an LLM serving system with heterogeneous and flexible model deployment that addresses both spatial and temporal heterogeneity. First, OServe introduces a novel workload-aware scheduling algorithm that optimizes heterogeneous model deployments according to real-time workload characteristics. Second, OServe proposes an efficient workload-adaptive switching method that migrates model deployments in response to predicted workload changes. Experiments on real-world traces show that OServe improves performance by up to 2$\times$ (average: 1.5$\times$) compared to state-of-the-art serving systems.

💡 Research Summary

The paper introduces OServe, a serving system for large language models (LLMs) that explicitly tackles two dimensions of workload heterogeneity: spatial heterogeneity (different requests have varying compute‑intensive or memory‑intensive characteristics) and temporal heterogeneity (the mix of request types and arrival rates changes over time). Existing serving platforms such as vLLM, Llumnix, and Dynamo assume a homogeneous, static deployment of model replicas, which leads to either over‑provisioned or under‑utilized resources when real‑world workloads deviate from this assumption.

OServe’s core contribution is a two‑level, flow‑network‑based optimization framework. The lower‑level problem takes a fixed model deployment (i.e., a set of replicas with specific GPU allocations and parallelism strategies) and determines the optimal assignment of incoming request types to those replicas. It builds a directed flow graph: source → workload nodes → intermediate nodes → replica input → replica output → sink. Edge capacities are derived from a one‑time profiling step that measures the maximum throughput each replica can achieve for each request type under a given parallelism configuration. To handle mixed workloads on a single replica, OServe normalizes capacities using the least common multiple of per‑type throughputs, ensuring a consistent accounting of resource consumption. The max‑flow (preflow‑push) algorithm then yields the optimal numbers of requests of each type assigned to each replica while respecting demand, per‑edge, and shared‑capacity constraints.

The upper‑level problem searches for the best overall deployment configuration (how many GPUs per replica and which combination of data, tensor, and pipeline parallelism to use). Starting from a uniform baseline (equal GPU counts, pure tensor parallelism), OServe iteratively refines the deployment based on feedback from the lower‑level flow solution. It identifies bottleneck replicas (fully saturated) and under‑utilized replicas (excess GPUs), reallocates GPUs from the latter to the former, and enumerates feasible parallelism strategies for the new allocation. This “flow‑network‑guided generation” continues until no further throughput gains are observed, effectively navigating the combinatorial space of possible deployments without exhaustive enumeration.

To address temporal heterogeneity, OServe incorporates a workload‑adaptive switching mechanism. A fine‑grained time‑series forecasting model predicts the composition and arrival rate of requests for the next interval. When a significant shift is anticipated, OServe triggers a migration to a newly optimized deployment. Rather than reloading the entire model from storage—a process that can take tens of seconds—the system leverages high‑speed GPU‑to‑GPU interconnects (PCIe/NVLink) to transfer model parameters directly between devices, dramatically reducing switch latency and avoiding service interruption. Ongoing requests on the old replicas are allowed to finish while the new replicas become active.

The authors evaluate OServe on real‑world traces from the Azure Public Dataset using up to 70‑billion‑parameter models. Compared against state‑of‑the‑art serving systems, OServe achieves up to 2× higher throughput (average 1.5×) and a noticeable reduction in P99 latency (≈30 % lower). The gains are most pronounced during periods when memory‑intensive generation tasks dominate; the mixed tensor‑pipeline parallelism selected by OServe outperforms pure data parallelism used by baseline systems by over 2× in those windows. GPU utilization stays above 85 % across the experiments, demonstrating that the heterogeneous deployment and dynamic reassignment effectively balance load.

In summary, OServe makes three key contributions: (1) a two‑level flow‑network optimization that jointly decides heterogeneous model deployments and request assignments, (2) a fast, prediction‑driven model‑switching technique that adapts to temporal workload shifts, and (3) extensive empirical validation showing substantial performance improvements over existing LLM serving frameworks. The work opens avenues for further research into more sophisticated workload forecasting, energy‑aware scheduling, and integration with heterogeneous hardware stacks (CPU‑GPU, accelerator‑GPU) in multi‑tenant cloud environments.

Comments & Academic Discussion

Loading comments...

Leave a Comment