STAR : Bridging Statistical and Agentic Reasoning for Large Model Performance Prediction

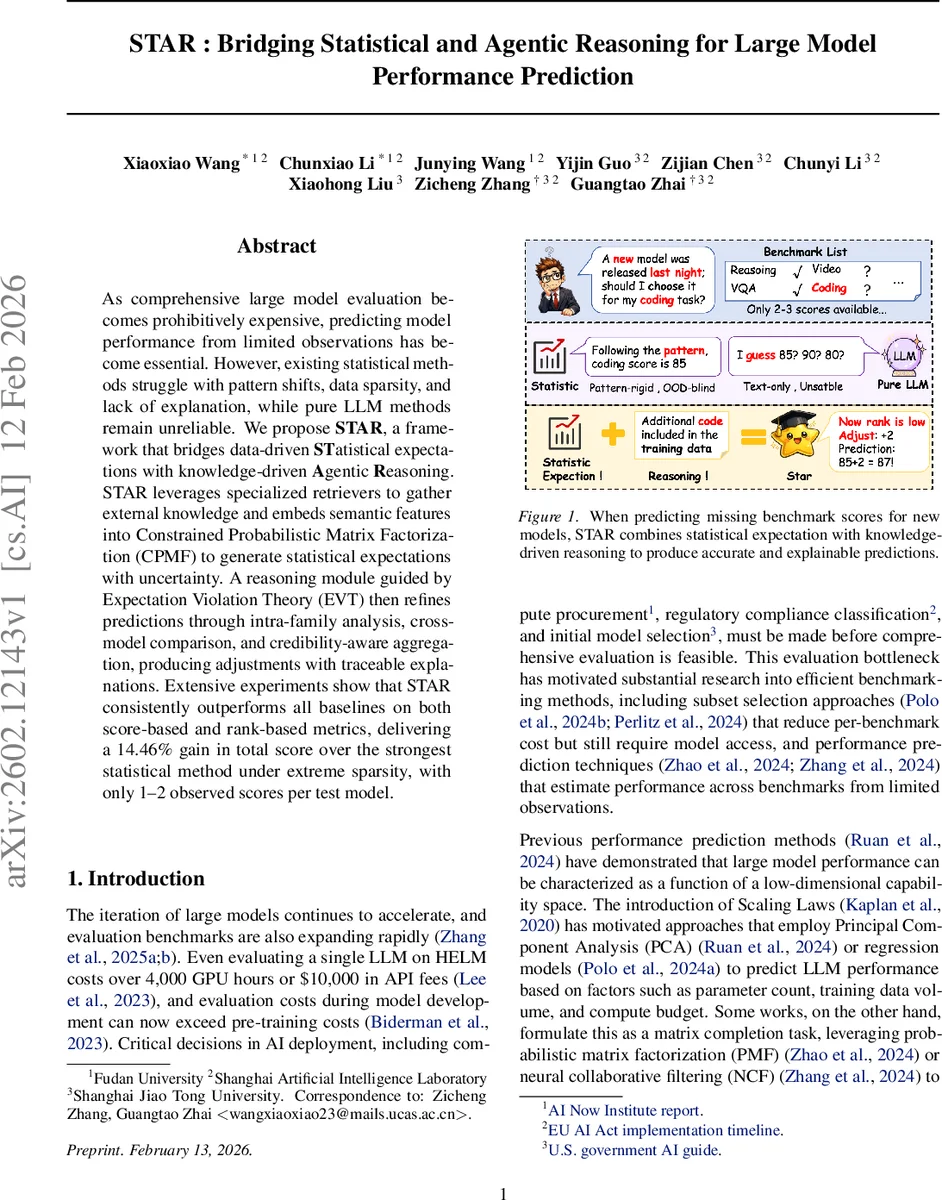

As comprehensive large model evaluation becomes prohibitively expensive, predicting model performance from limited observations has become essential. However, existing statistical methods struggle with pattern shifts, data sparsity, and lack of explanation, while pure LLM methods remain unreliable. We propose STAR, a framework that bridges data-driven STatistical expectations with knowledge-driven Agentic Reasoning. STAR leverages specialized retrievers to gather external knowledge and embeds semantic features into Constrained Probabilistic Matrix Factorization (CPMF) to generate statistical expectations with uncertainty. A reasoning module guided by Expectation Violation Theory (EVT) then refines predictions through intra-family analysis, cross-model comparison, and credibility-aware aggregation, producing adjustments with traceable explanations. Extensive experiments show that STAR consistently outperforms all baselines on both score-based and rank-based metrics, delivering a 14.46% gain in total score over the strongest statistical method under extreme sparsity, with only 1–2 observed scores per test model.

💡 Research Summary

The paper addresses the pressing need to predict the performance of large language and multimodal models on benchmark suites when only a handful of scores are available. Existing statistical approaches (e.g., scaling‑law‑based PCA, regression, probabilistic matrix factorization) suffer from three major drawbacks: (1) they assume stationary relationships and thus break down under pattern shifts caused by emerging architectures such as Mixture‑of‑Experts or RLHF‑trained models; (2) they require a relatively dense observation matrix, which is unrealistic for newly released models that report scores on only a few benchmarks; and (3) they provide no human‑readable justification for their predictions. Pure LLM‑based methods that simply prompt a language model with a description of the target model produce scores and explanations but are plagued by hallucinations and lack uncertainty quantification.

STAR (Statistical‑Agentic Reasoning) is proposed as a hybrid framework that unifies data‑driven statistical expectations with knowledge‑driven agentic reasoning, grounded in Expectation Violation Theory (EVT). The system consists of four modules:

-

Historical Memory – stores the observed performance matrix R_obs together with structured profiles for each model (metadata, family lineage, source references) and each benchmark (task description, evaluation protocol). This serves as the core knowledge base.

-

Retrieval Augmentation – employs two specialized retrievers (model‑retriever and benchmark‑retriever) that query search engines, arXiv, and model‑card repositories. The model retriever extracts a technical summary, base‑model analysis, and community feedback; the benchmark retriever gathers task specifications and evaluation details. Retrieved texts are encoded with a pretrained text encoder into dense vectors g_m (model) and h_n (benchmark), and also retained as raw evidence for later explanation generation.

-

Statistical Expectation – builds on Constrained Probabilistic Matrix Factorization (CPMF). Standard PMF factorizes the sparse score matrix into latent factors U (models) and V (benchmarks). STAR augments these factors with the semantic vectors via learnable projection matrices X and Y: U′_m = U_m + g_m X, V′_n = V_n + h_n Y. The predicted score is ˆR_mn = U′_mᵀ V′_n. To capture predictive confidence, the framework runs No‑U‑Turn Sampler (NUTS) MCMC, producing posterior samples of the latent variables and computing a per‑prediction standard deviation σ_mn. High σ indicates that the statistical signal is weak and that the reasoning module should rely more heavily on external evidence.

-

EVT‑guided Reasoning – mimics how a human expert revises expectations when confronted with contradictory evidence. It proceeds in two analytical steps: (a) Intra‑family analysis examines the target model’s position within its family (e.g., LLaVA‑2 vs. LLaVA‑1), comparing observed scores of sibling models and architectural changes; (b) Cross‑model comparison identifies a set of capability‑similar models (C_m) based on shared benchmark performance and contrasts their actual scores with ˆR_mn. The reasoning agent (implemented as an LLM chain) consumes ˆR_mn, σ_mn, the retrieved raw texts, and the scores from steps (a) and (b) to produce an adjustment Δ_mn, a credibility weight c_mn ∈

Comments & Academic Discussion

Loading comments...

Leave a Comment