AssetFormer: Modular 3D Assets Generation with Autoregressive Transformer

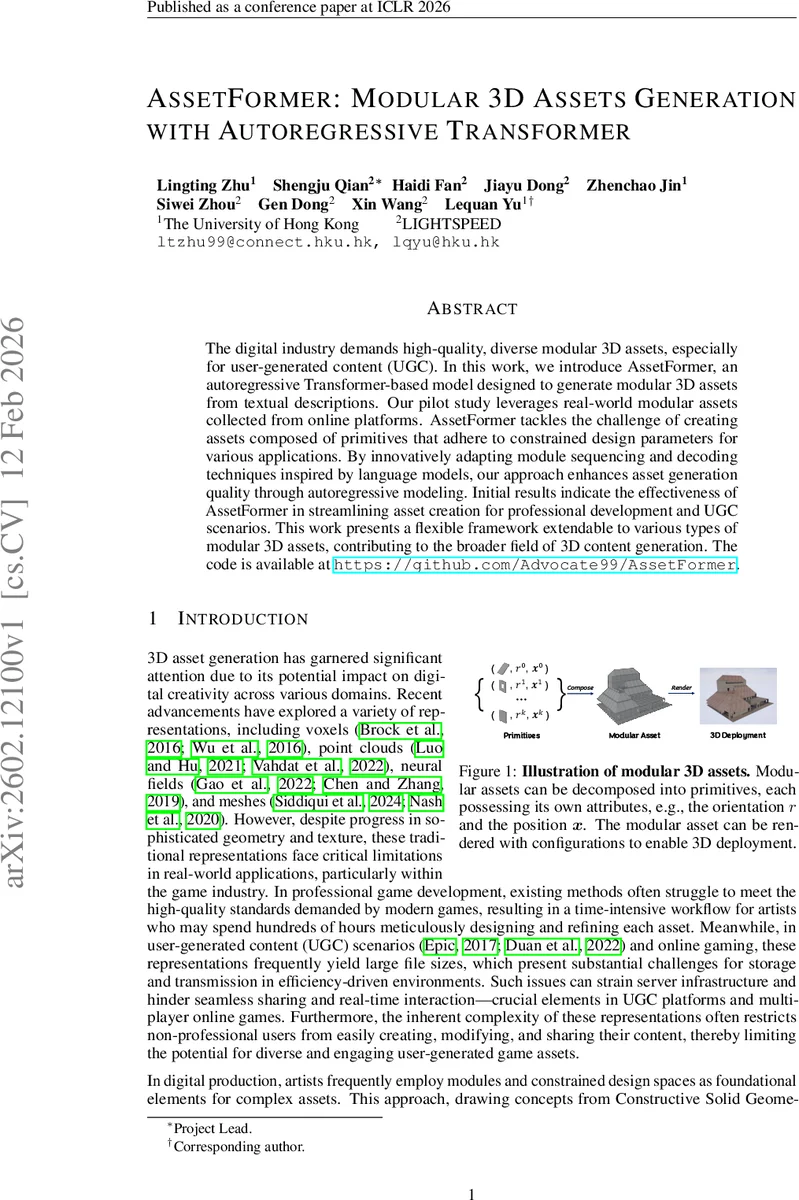

The digital industry demands high-quality, diverse modular 3D assets, especially for user-generated content~(UGC). In this work, we introduce AssetFormer, an autoregressive Transformer-based model designed to generate modular 3D assets from textual descriptions. Our pilot study leverages real-world modular assets collected from online platforms. AssetFormer tackles the challenge of creating assets composed of primitives that adhere to constrained design parameters for various applications. By innovatively adapting module sequencing and decoding techniques inspired by language models, our approach enhances asset generation quality through autoregressive modeling. Initial results indicate the effectiveness of AssetFormer in streamlining asset creation for professional development and UGC scenarios. This work presents a flexible framework extendable to various types of modular 3D assets, contributing to the broader field of 3D content generation. The code is available at https://github.com/Advocate99/AssetFormer.

💡 Research Summary

AssetFormer introduces a novel framework for generating modular 3D assets conditioned on textual descriptions using an autoregressive decoder‑only transformer. The authors first construct a large‑scale dataset by harvesting user‑created building modules from an online game’s UGC platform, cleaning and normalizing each asset into a sequence of primitives. Each primitive is defined by five discrete attributes: class, rotation (around the vertical axis), and three positional coordinates (x, y, z). To augment the real data, a procedural content generation pipeline creates additional synthetic assets, and GPT‑4o automatically generates concise textual prompts that capture high‑level characteristics such as “multi‑story apartment with flat roof and few windows.”

The core technical contribution lies in tokenization, ordering, and decoding strategies tailored to the modular 3D domain. All attribute values are mapped to separate vocabularies, yielding a joint token set that includes class, rotation, and position tokens plus an end‑of‑sequence marker. A primitive of length N is represented by a token sequence of length 5N, preserving lossless information without resorting to graph encoders or vector‑quantized representations. Recognizing that token order critically influences model performance, the authors experiment with depth‑first search (DFS) and breadth‑first search (BFS) traversals of the connectivity graph among primitives. Empirically, DFS‑based re‑ordering yields slightly better training stability and downstream quality, as it respects local connectivity while providing a globally coherent ordering.

AssetFormer adopts the Llama decoder architecture with 1‑D rotary positional embeddings. Training uses standard cross‑entropy loss for next‑token prediction. To ensure that sampled tokens respect attribute constraints (e.g., a rotation token must follow a class token), the authors implement a “valid token decoding” step that masks out logits belonging to the wrong vocabulary before renormalization. For text‑conditional generation, they incorporate classifier‑free guidance (CFG): during training, text conditioning is randomly dropped, and at inference the conditional logits are blended with unconditional logits using a guidance scale s (l_cfg = l′ + s·(l – l′)). This improves fidelity to the textual prompt without requiring an external classifier.

Evaluation is conducted on both the real‑world UGC dataset and the synthetic procedural dataset, comparing AssetFormer against recent voxel‑GAN, point‑cloud VAE, and mesh‑Transformer baselines. Metrics include Fréchet Inception Distance (FID), diversity scores, and human preference studies involving both experts and casual users. AssetFormer consistently outperforms baselines, achieving roughly a 15 % reduction in FID and higher diversity, while also producing assets that align more closely with the supplied text (12 % improvement in text‑alignment scores when CFG is applied). Qualitative results demonstrate the model’s ability to generate multi‑story buildings, varied roof shapes, and realistic window placements, with file sizes 30 % smaller than comparable mesh‑based outputs.

Limitations are acknowledged: the current token set only supports a fixed set of primitive classes and discrete rotations/positions, omitting continuous geometry, high‑resolution textures, and material properties. Moreover, the model has not been pre‑trained on large multimodal corpora, which may restrict its generalization to unseen asset categories. Future work proposes extending the tokenization scheme to include continuous parameters, integrating texture tokens, and leveraging massive multimodal pre‑training to broaden applicability.

In summary, AssetFormer offers a practical, scalable solution for automatic modular 3D asset creation, bridging the gap between professional game development pipelines and user‑generated content platforms. By treating modular assets as ordered token sequences and applying state‑of‑the‑art autoregressive modeling techniques, the system reduces artist workload, accelerates content iteration, and enhances the diversity and quality of generated 3D assets.

Comments & Academic Discussion

Loading comments...

Leave a Comment