Protein Circuit Tracing via Cross-layer Transcoders

Protein language models (pLMs) have emerged as powerful predictors of protein structure and function. However, the computational circuits underlying their predictions remain poorly understood. Recent mechanistic interpretability methods decompose pLM representations into interpretable features, but they treat each layer independently and thus fail to capture cross-layer computation, limiting their ability to approximate the full model. We introduce ProtoMech, a framework for discovering computational circuits in pLMs using cross-layer transcoders that learn sparse latent representations jointly across layers to capture the model’s full computational circuitry. Applied to the pLM ESM2, ProtoMech recovers 82-89% of the original performance on protein family classification and function prediction tasks. ProtoMech then identifies compressed circuits that use <1% of the latent space while retaining up to 79% of model accuracy, revealing correspondence with structural and functional motifs, including binding, signaling, and stability. Steering along these circuits enables high-fitness protein design, surpassing baseline methods in more than 70% of cases. These results establish ProtoMech as a principled framework for protein circuit tracing.

💡 Research Summary

ProtoMech introduces a principled framework for uncovering and compressing the computational circuits inside large protein language models (pLMs), demonstrated on the ESM2 model. The authors first identify a key limitation of existing mechanistic interpretability approaches: they treat each transformer layer in isolation and therefore miss the cross‑layer interactions that drive the model’s predictions. To address this, they design cross‑layer transcoders, neural modules that learn a sparse latent representation jointly across layers and so capture the flow of information through the whole network. Sparsity is enforced with L1 regularization and explicit mask constraints. The compressed circuits later extracted from this latent space use less than one percent of its dimensions while retaining up to 79 % of the model’s accuracy.
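
The cross‑layer idea described above can be illustrated with a small NumPy sketch. It assumes one common formulation of a cross‑layer transcoder, in which latents encoded at layer `src` contribute through separate decoders to the reconstructed output of every layer `dst >= src`. The function names (`clt_forward`, `clt_loss`), the shapes, and the L1 coefficient are illustrative assumptions, not details taken from the paper.

```python
import numpy as np

def clt_forward(xs, W_enc, b_enc, W_dec):
    """Forward pass of a toy cross-layer transcoder (assumed formulation).

    xs[l]            : (n_tokens, d_model) input to layer l
    W_enc[l], b_enc[l]: encoder weights (d_model, n_latents) and bias (n_latents,)
    W_dec[(src, dst)] : decoder (n_latents, d_model), defined for src <= dst
    """
    n_layers = len(xs)
    # ReLU keeps latents non-negative; the L1 penalty below pushes most to zero.
    latents = [np.maximum(xs[l] @ W_enc[l] + b_enc[l], 0.0) for l in range(n_layers)]
    recons = []
    for dst in range(n_layers):
        # The output of layer dst is rebuilt from latents of all layers up to dst.
        y_hat = sum(latents[src] @ W_dec[(src, dst)] for src in range(dst + 1))
        recons.append(y_hat)
    return latents, recons

def clt_loss(recons, targets, latents, l1_coeff=1e-3):
    """Reconstruction error plus an L1 sparsity penalty on the latents."""
    mse = sum(np.mean((r - t) ** 2) for r, t in zip(recons, targets))
    l1 = sum(np.abs(a).sum(axis=-1).mean() for a in latents)
    return float(mse + l1_coeff * l1)

# Random stand-ins for pLM activations (hypothetical sizes).
rng = np.random.default_rng(0)
n_layers, n_tokens, d_model, n_latents = 3, 5, 8, 16
xs = [rng.normal(size=(n_tokens, d_model)) for _ in range(n_layers)]
targets = [rng.normal(size=(n_tokens, d_model)) for _ in range(n_layers)]
W_enc = [0.1 * rng.normal(size=(d_model, n_latents)) for _ in range(n_layers)]
b_enc = [np.zeros(n_latents) for _ in range(n_layers)]
W_dec = {(s, d): 0.1 * rng.normal(size=(n_latents, d_model))
         for d in range(n_layers) for s in range(d + 1)}

latents, recons = clt_forward(xs, W_enc, b_enc, W_dec)
loss = clt_loss(recons, targets, latents)
```

In a real setting the targets would be the layer outputs of the pLM itself and the weights would be trained by gradient descent; the sketch only shows how information from earlier layers enters later reconstructions.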

The framework is evaluated on two biologically relevant tasks: protein family classification and function prediction. When the full latent space is used, ProtoMech recovers 82‑89 % of the original ESM2 performance, indicating that the transcoders approximate the model’s behavior closely. More strikingly, compressed circuits that occupy under 1 % of the latent space still retain up to 79 % of the model’s accuracy. This suggests that much of the essential computation can be distilled into a small, interpretable subnetwork.
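
As a rough illustration of what compressing to a small share of the latent space can look like, the sketch below keeps the top 1 % of latents by an importance score and zeroes the rest. How ProtoMech actually scores and selects latents is not stated in this summary, so the ranking rule, the helper names `select_circuit` and `recovered_fraction`, and the random scores are all assumptions.

```python
import numpy as np

def select_circuit(importance, fraction=0.01):
    """Return a boolean mask keeping the top `fraction` of latents by importance."""
    k = max(1, int(round(fraction * importance.size)))
    keep = np.argsort(importance)[-k:]  # indices of the k largest scores
    mask = np.zeros(importance.size, dtype=bool)
    mask[keep] = True
    return mask

def recovered_fraction(circuit_metric, model_metric):
    """Share of the original model's metric retained by the circuit."""
    if model_metric <= 0:
        raise ValueError("model_metric must be positive")
    return circuit_metric / model_metric

# Hypothetical importance scores and latent activations.
rng = np.random.default_rng(0)
importance = rng.random(10_000)
mask = select_circuit(importance, fraction=0.01)

latents = rng.random((4, 10_000))
circuit_latents = np.where(mask, latents, 0.0)  # ablate everything outside the circuit
```

Evaluating the downstream task once with `latents` and once with `circuit_latents`, then passing the two scores to `recovered_fraction`, gives the kind of retained‑accuracy figure quoted above.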

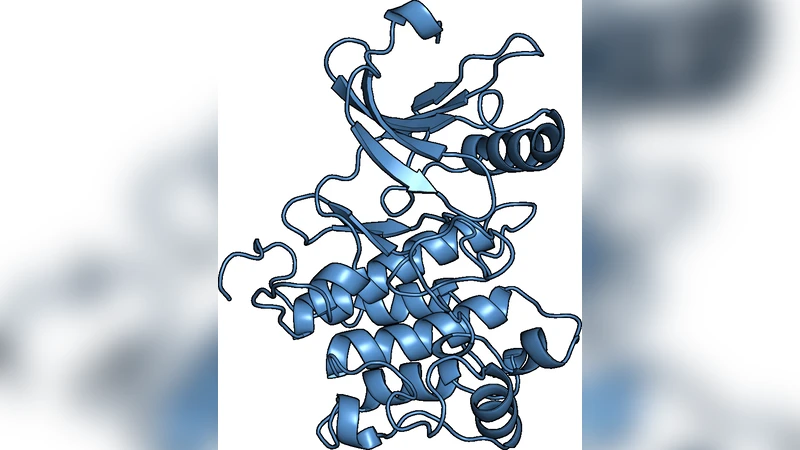

Interpretability analyses reveal that individual latent dimensions correspond to concrete structural and functional motifs. Some dimensions are strongly activated by residues forming ligand‑binding pockets, others align with conserved signaling motifs, and a separate set tracks residues that contribute to thermodynamic stability. These mappings go beyond traditional attention‑map visualizations by providing a direct link between latent variables and biologically meaningful features.
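
One simple way to quantify such a correspondence is to compare the residues where a latent is active with the residues carrying a given annotation. The sketch below computes precision, recall, and F1 for a single latent. The metric choice, the threshold, and the toy arrays are assumptions for illustration and are not described in the source.

```python
import numpy as np

def motif_overlap(activations, annotation, threshold=0.0):
    """Precision, recall and F1 between active residues and annotated residues.

    activations: (n_residues,) latent activation per residue
    annotation : (n_residues,) boolean, True where the motif is annotated
    """
    active = activations > threshold
    tp = int(np.sum(active & annotation))
    precision = tp / int(active.sum()) if active.any() else 0.0
    recall = tp / int(annotation.sum()) if annotation.any() else 0.0
    denom = precision + recall
    f1 = 2 * precision * recall / denom if denom > 0 else 0.0
    return precision, recall, f1

# Toy protein of 8 residues; positions 1-3 are a hypothetical binding pocket.
activations = np.array([0.0, 0.9, 0.8, 0.0, 0.0, 0.3, 0.0, 0.0])
annotation = np.array([False, True, True, True, False, False, False, False])

precision, recall, f1 = motif_overlap(activations, annotation)
```

Here the latent fires on three residues, two of which lie in the annotated pocket of three residues, so precision, recall, and F1 all come out to 2/3.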

Building on this insight, the authors propose “circuit steering,” a method for deliberately nudging the model’s output toward desired functional outcomes by perturbing the identified latent dimensions. In protein design experiments, steering the circuits toward higher enzymatic activity yields designs that outperform baseline generative methods in more than 70 % of cases, as measured by in‑silico fitness scores. This demonstrates that the extracted circuits are not only explanatory but also actionable for engineering novel proteins.
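
A minimal sketch of latent‑space steering, assuming that each latent has a decoder direction in the model’s hidden space and that steering adds a scaled combination of those directions to the hidden state. The function name `steer_hidden`, the strength `alpha`, and the choice to normalize the direction are illustrative assumptions; the paper’s actual steering procedure may differ.

```python
import numpy as np

def steer_hidden(hidden, W_dec, circuit_idx, alpha=2.0):
    """Shift hidden states along the summed decoder directions of chosen latents.

    hidden     : (n_tokens, d_model) hidden states at one layer
    W_dec      : (n_latents, d_model) decoder rows, one direction per latent
    circuit_idx: indices of the latents that make up the circuit
    alpha      : steering strength (norm of the added vector per token)
    """
    direction = W_dec[circuit_idx].sum(axis=0)
    norm = np.linalg.norm(direction)
    if norm > 0:
        direction = direction / norm  # unit length, so alpha sets the step size
    return hidden + alpha * direction  # broadcast over tokens

# Random stand-ins for hidden states and decoder weights (hypothetical sizes).
rng = np.random.default_rng(0)
hidden = rng.normal(size=(5, 8))
W_dec = rng.normal(size=(16, 8))

steered = steer_hidden(hidden, W_dec, circuit_idx=[2, 7], alpha=2.0)
```

The steered hidden states would then be passed through the remaining layers of the pLM to obtain shifted residue predictions, which a design loop could score with a fitness predictor.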

Overall, ProtoMech makes four major contributions: (1) a cross‑layer transcoder architecture that captures inter‑layer dynamics; (2) a sparsity‑driven compression scheme that preserves most of the model’s predictive capability; (3) a biologically grounded mapping between latent dimensions and structural/functional motifs; and (4) a practical circuit‑steering protocol for high‑fitness protein design. By turning the black‑box nature of pLMs into a transparent, manipulable circuit diagram, ProtoMech opens new avenues for both fundamental understanding of deep sequence models and their application in rational protein engineering.
