CSEval: A Framework for Evaluating Clinical Semantics in Text-to-Image Generation

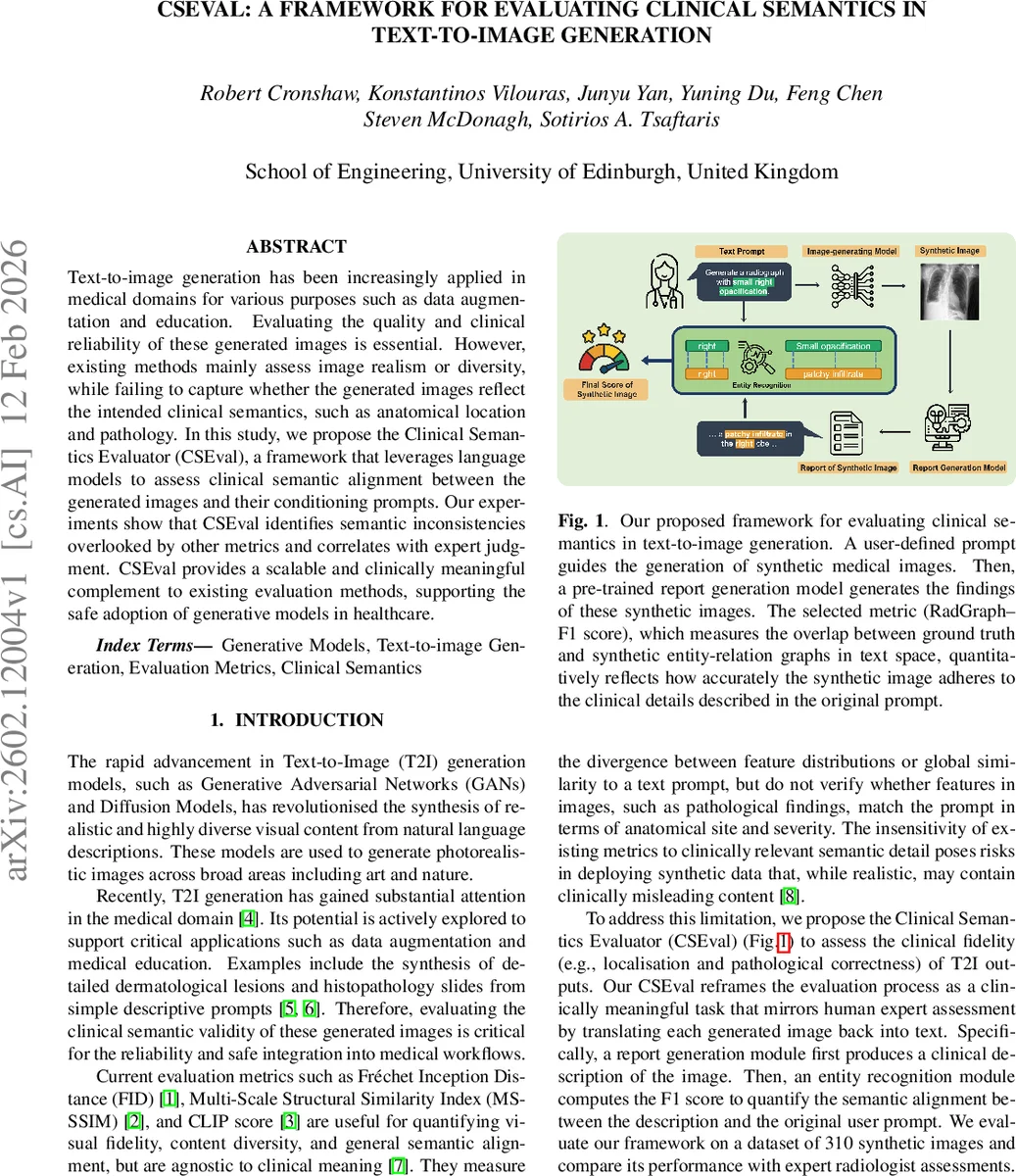

Text-to-image generation has been increasingly applied in medical domains for various purposes such as data augmentation and education. Evaluating the quality and clinical reliability of these generated images is essential. However, existing methods mainly assess image realism or diversity, while failing to capture whether the generated images reflect the intended clinical semantics, such as anatomical location and pathology. In this study, we propose the Clinical Semantics Evaluator (CSEval), a framework that leverages language models to assess clinical semantic alignment between the generated images and their conditioning prompts. Our experiments show that CSEval identifies semantic inconsistencies overlooked by other metrics and correlates with expert judgment. CSEval provides a scalable and clinically meaningful complement to existing evaluation methods, supporting the safe adoption of generative models in healthcare.

💡 Research Summary

The paper addresses a critical gap in the evaluation of medical text‑to‑image (T2I) generative models: existing metrics such as FID, MS‑SSIM, and CLIP‑score assess visual fidelity, diversity, or generic image‑text alignment but ignore whether the generated images faithfully convey the clinical semantics encoded in the conditioning prompts (e.g., anatomical location, pathology type, severity). To fill this gap, the authors introduce CSEval, a modular framework that translates the visual evaluation problem into a text‑centric one, mirroring how radiologists assess images.

CSEval’s pipeline consists of four stages. First, a user‑defined prompt template describing a chest pathology (e.g., “moderate right pleural effusion”) is fed to a latent diffusion model trained on MIMIC‑CXR, producing 310 synthetic chest X‑ray images (10 variations per prompt across four disease categories). Second, the MAIRA‑2 report generation model processes each synthetic image and outputs a radiology report. MAIRA‑2 is capable of spatial grounding, attaching bounding boxes to described findings, which improves the clinical relevance of the generated text. Third, the RadGraph‑XL entity‑relation extractor parses both the original prompt and the generated report, producing structured clinical graphs composed of observations, anatomical locations, and their relationships. Finally, a RadGraph‑F1 score is computed by comparing the two graphs, providing a quantitative measure of how well the image reproduces the clinical details specified in the prompt.

The authors benchmark CSEval against three conventional metrics (FID, MS‑SSIM, BioVIL‑T/CLIP‑score) and against expert radiologist ratings on a three‑point alignment scale (0 = no alignment, 1 = partial, 2 = strong). Results show that traditional metrics often mis‑rank disease categories; for instance, MS‑SSIM and CLIP‑score give similar values for pneumothorax and opacification, yet experts rate pneumothorax far lower in clinical fidelity. CSEval’s RadGraph‑F1 uniquely mirrors expert rankings across all four pathologies. Kendall’s τ correlation with expert scores is 0.375 for CSEval versus 0.291 for the best competing metric, confirming superior alignment with human judgment. Qualitative examples illustrate that CSEval assigns near‑zero scores to completely mismatched cases (e.g., “large left apical pneumothorax” generated incorrectly) and intermediate scores to partially correct outputs, demonstrating sensitivity to both presence and severity of findings.

The paper acknowledges two primary limitations. First, the reliability of CSEval hinges on the accuracy of the report generation model; errors in MAIRA‑2 can propagate to the final score. Second, the RadGraph‑F1 metric may be influenced by textual artifacts such as sentence length or formatting, which are unrelated to clinical content. The authors propose future work to refine entity similarity calculations, extend the framework to other imaging modalities (CT, MRI), and incorporate human‑in‑the‑loop feedback to further improve robustness.

In conclusion, CSEval offers a scalable, automated, and clinically meaningful complement to existing image‑centric evaluation methods. By grounding assessment in the textual domain and leveraging state‑of‑the‑art language models for report generation and entity extraction, it provides a practical tool for ensuring that synthetic medical images are not only visually realistic but also semantically faithful—a prerequisite for safe deployment of generative AI in healthcare.

Comments & Academic Discussion

Loading comments...

Leave a Comment