Spatial Chain-of-Thought: Bridging Understanding and Generation Models for Spatial Reasoning Generation

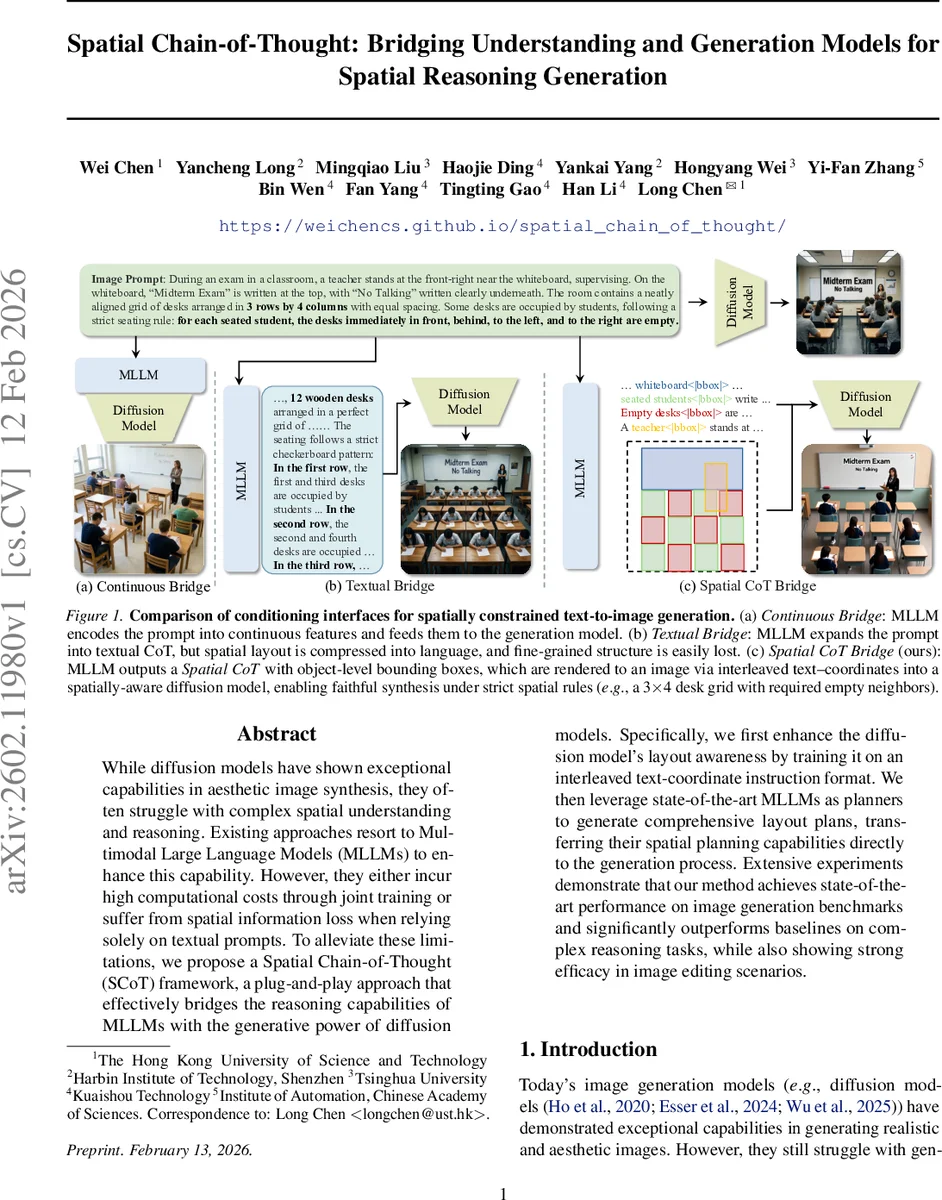

While diffusion models have shown exceptional capabilities in aesthetic image synthesis, they often struggle with complex spatial understanding and reasoning. Existing approaches resort to Multimodal Large Language Models (MLLMs) to enhance this capability. However, they either incur high computational costs through joint training or suffer from spatial information loss when relying solely on textual prompts. To alleviate these limitations, we propose a Spatial Chain-of-Thought (SCoT) framework, a plug-and-play approach that effectively bridges the reasoning capabilities of MLLMs with the generative power of diffusion models. Specifically, we first enhance the diffusion model’s layout awareness by training it on an interleaved text-coordinate instruction format. We then leverage state-of-the-art MLLMs as planners to generate comprehensive layout plans, transferring their spatial planning capabilities directly to the generation process. Extensive experiments demonstrate that our method achieves state-of-the-art performance on image generation benchmarks and significantly outperforms baselines on complex reasoning tasks, while also showing strong efficacy in image editing scenarios.

💡 Research Summary

The paper introduces Spatial Chain‑of‑Thought (SCoT), a plug‑and‑play framework that bridges multimodal large language models (MLLMs) and diffusion‑based image generators for tasks requiring precise spatial reasoning. Existing methods fall into two categories: (1) continuous feature bridges that jointly train MLLM and diffusion models, incurring high computational cost and data hunger, and (2) text‑based bridges that let MLLMs expand prompts into textual chain‑of‑thoughts, which inevitably compresses spatial constraints and loses fine‑grained positional information. SCoT overcomes these drawbacks by having the MLLM output an explicit, structured layout plan in an interleaved text‑coordinate format, where each object description is immediately followed by its bounding‑box coordinates (e.g., “whiteboard<|bbox|> … teacher<|bbox|> …”). This intermediate representation preserves dense spatial information while remaining fully compatible with existing diffusion pipelines.

To enable diffusion models to consume such instructions without architectural overhaul, the authors train a spatially‑aware generation model (SA‑Gen) on a newly constructed dataset, SCoT‑DenseBox. They automatically annotate millions of high‑resolution images with detailed captions and object‑level boxes using the visual‑grounding capabilities of Qwen‑3‑VL. Because pure dense‑box training can degrade aesthetic quality, a second fine‑tuning stage on a curated high‑quality subset (SCoT‑AestheticSFT) restores visual fidelity while retaining coordinate execution ability. The resulting interleaved text‑coordinate instruction format provides an unambiguous mapping between textual entities and their spatial locations, crucial for crowded scenes.

The MLLM planner operates in three steps: (i) scene parsing – extracting entities, attributes, and constraints from the user prompt; (ii) spatial planning – reasoning over relational constraints (e.g., “neighbors of a seated student must be empty”) to produce a globally consistent layout; and (iii) grounded specification – assigning concrete bounding boxes to each entity and emitting the interleaved text‑coordinate sequence. Off‑the‑shelf MLLMs such as Gemini‑3, OpenAI‑GPT‑4V, or other state‑of‑the‑art vision‑language models can be swapped without retraining the diffusion backbone, preserving the plug‑and‑play nature.

During inference, the pipeline follows c = M(t) (where M is the MLLM planner) and then generates an image x ∼ pθ(x|c) using the SA‑Gen diffusion model conditioned on the layout‑augmented caption c. This decoupling allows independent upgrades of either component.

Extensive experiments on several benchmarks—GenEval, OneIG‑Bench, and the particularly challenging T2ICoReBench—show that SCoT surpasses both continuous and text‑based baselines by over 10% on complex spatial reasoning metrics. The method also excels in image editing tasks (IVEdit), where it maintains precise object placement while allowing seamless modifications. Ablation studies confirm that the interleaved format, the two‑stage training (DenseBox then AestheticSFT), and the explicit planner all contribute significantly to performance gains.

Limitations include reliance on 2‑D bounding boxes (no pixel‑level masks or depth cues), sensitivity to planner errors (a mis‑parsed constraint propagates downstream), and a focus on planar layouts without explicit handling of perspective or 3‑D transformations. Future work could extend SCoT to mask‑level grounding, incorporate 3‑D coordinate reasoning, and embed self‑verification mechanisms within the planner to improve robustness. Overall, SCoT offers an efficient, scalable, and highly effective solution for integrating sophisticated spatial reasoning from language models into high‑fidelity image synthesis.

Comments & Academic Discussion

Loading comments...

Leave a Comment