MING: An Automated CNN-to-Edge MLIR HLS framework

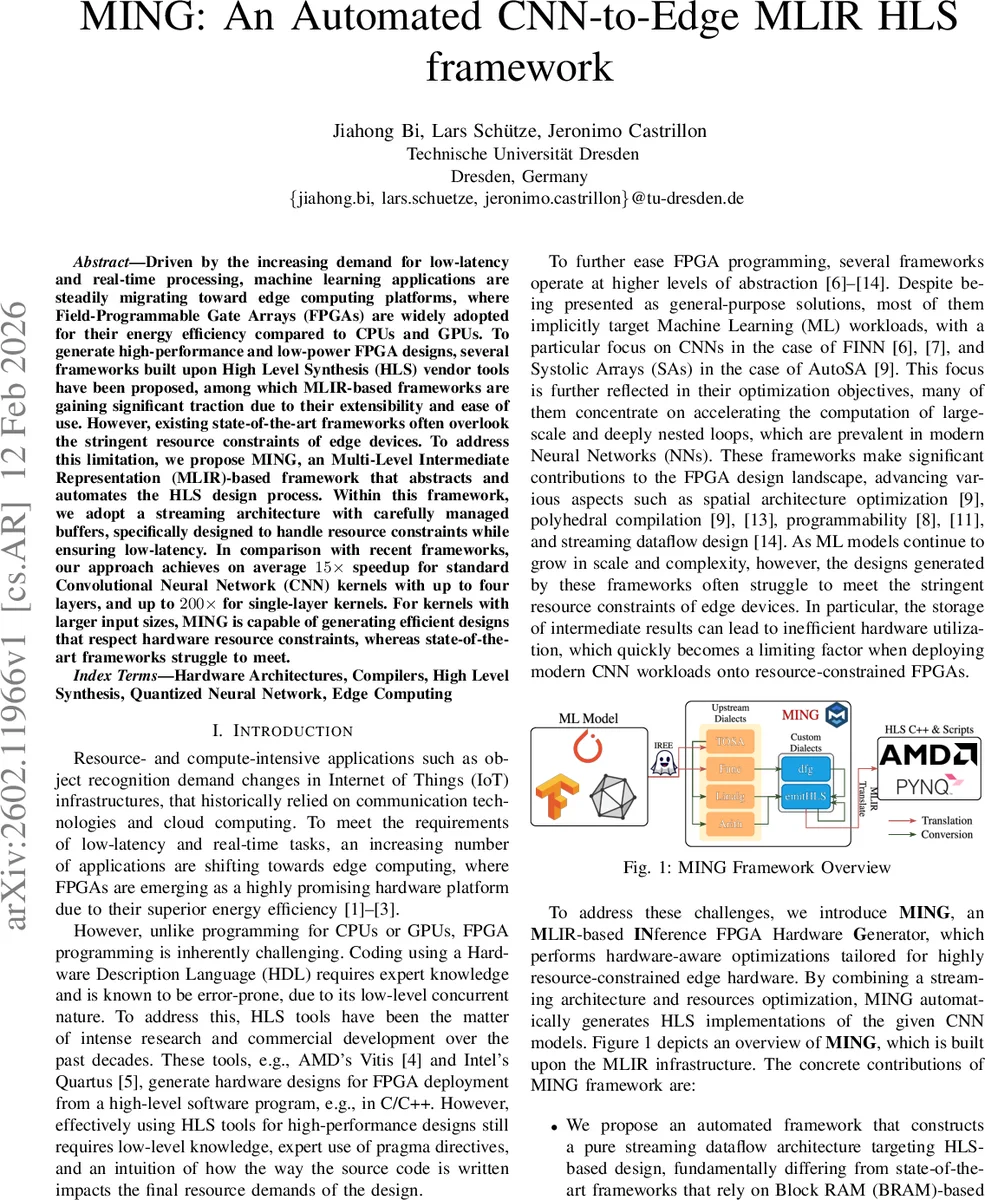

Driven by the increasing demand for low-latency and real-time processing, machine learning applications are steadily migrating toward edge computing platforms, where Field-Programmable Gate Arrays (FPGAs) are widely adopted for their energy efficiency compared to CPUs and GPUs. To generate high-performance and low-power FPGA designs, several frameworks built upon High Level Synthesis (HLS) vendor tools have been proposed, among which MLIR-based frameworks are gaining significant traction due to their extensibility and ease of use. However, existing state-of-the-art frameworks often overlook the stringent resource constraints of edge devices. To address this limitation, we propose MING, an Multi-Level Intermediate Representation (MLIR)-based framework that abstracts and automates the HLS design process. Within this framework, we adopt a streaming architecture with carefully managed buffers, specifically designed to handle resource constraints while ensuring low-latency. In comparison with recent frameworks, our approach achieves on average 15x speedup for standard Convolutional Neural Network (CNN) kernels with up to four layers, and up to 200x for single-layer kernels. For kernels with larger input sizes, MING is capable of generating efficient designs that respect hardware resource constraints, whereas state-of-the-art frameworks struggle to meet.

💡 Research Summary

The paper introduces MING, a novel MLIR‑based framework that automates the generation of high‑performance, low‑latency CNN accelerators for resource‑constrained edge FPGAs. Existing HLS‑driven frameworks such as StreamHLS, ScaleHLS, and POM achieve impressive speeds on cloud‑grade devices but often ignore the strict BRAM, DSP, and LUT limits of edge hardware. Their typical approach stores intermediate tensors in on‑chip memory, causing BRAM usage to grow linearly with input size and network depth, eventually exceeding the device’s capacity.

MING tackles this problem by adopting a pure streaming data‑flow architecture. Instead of materializing large intermediate arrays, data are produced into streams and consumed immediately by the next computation node. The only on‑chip memory required are small line buffers that temporarily hold sliding‑window data. To realize this, the authors extend the MLIR infrastructure with two new dialects: a data‑flow graph (dfg‑mlir) dialect that models Kahn Process Network primitives (processes and FIFO channels) and an emitHLS dialect that captures both standard C++ syntax and HLS‑specific pragmas.

The compilation flow begins with a CNN model expressed in ONNX/TensorFlow/PyTorch, which is lowered to the linalg.generic operation. MING classifies each generic operation into one of three kernel types—pure parallel, regular reduction, or sliding‑window—based on iterator types and indexing maps. This classification drives the automatic insertion of appropriate streams, line buffers, and HLS pragmas (STREAM, UNROLL, PIPELINE, ARRAY_PARTITION, BIND_STORAGE). A lightweight design‑space exploration (DSE) module, built as an MLIR pass, evaluates resource usage (LUT, BRAM, DSP) using a refined integer‑arithmetic model and selects the optimal unroll factors, initiation intervals, and buffer depths that satisfy the target device constraints.

Experimental results on a set of benchmark CNN kernels (single‑layer and up to four‑layer networks) demonstrate dramatic performance gains. MING achieves an average 15× speedup over the state‑of‑the‑art, with up to 200× improvement for single‑layer kernels. Crucially, for large input dimensions (e.g., 224×224), MING’s BRAM consumption remains modest because only line buffers are allocated, whereas StreamHLS’s BRAM usage grows almost linearly and often exceeds the device’s capacity, leading to synthesis failure.

In summary, MING provides a fully automated, hardware‑aware compilation pipeline that bridges the gap between high‑level CNN models and efficient edge FPGA implementations. By eliminating intermediate storage, integrating precise resource estimation, and performing DSE within the MLIR ecosystem, it delivers both speed and feasibility on devices with tight resource budgets. The authors suggest future extensions to support more complex network topologies (e.g., ResNet, MobileNet) and dynamic reconfiguration, further broadening the applicability of the framework in edge AI deployments.

Comments & Academic Discussion

Loading comments...

Leave a Comment