Manifold-Aware Temporal Domain Generalization for Large Language Models

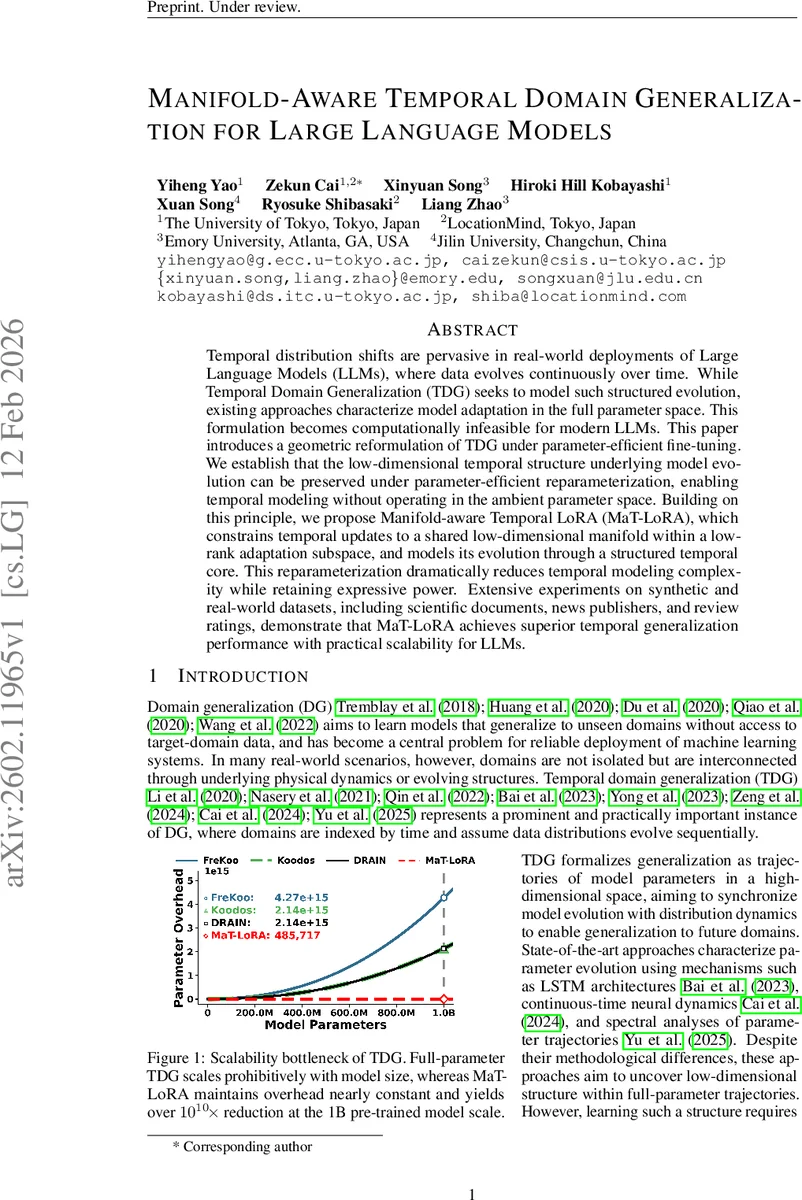

Temporal distribution shifts are pervasive in real-world deployments of Large Language Models (LLMs), where data evolves continuously over time. While Temporal Domain Generalization (TDG) seeks to model such structured evolution, existing approaches characterize model adaptation in the full parameter space. This formulation becomes computationally infeasible for modern LLMs. This paper introduces a geometric reformulation of TDG under parameter-efficient fine-tuning. We establish that the low-dimensional temporal structure underlying model evolution can be preserved under parameter-efficient reparameterization, enabling temporal modeling without operating in the ambient parameter space. Building on this principle, we propose Manifold-aware Temporal LoRA (MaT-LoRA), which constrains temporal updates to a shared low-dimensional manifold within a low-rank adaptation subspace, and models its evolution through a structured temporal core. This reparameterization dramatically reduces temporal modeling complexity while retaining expressive power. Extensive experiments on synthetic and real-world datasets, including scientific documents, news publishers, and review ratings, demonstrate that MaT-LoRA achieves superior temporal generalization performance with practical scalability for LLMs.

💡 Research Summary

Temporal distribution shifts are a pervasive challenge for large language models (LLMs) deployed in real‑world applications, where the underlying data distribution evolves continuously over time. Existing Temporal Domain Generalization (TDG) methods attempt to model this evolution by learning trajectories of the full model parameters. While conceptually sound, such approaches become computationally prohibitive for modern LLMs that contain billions of parameters. This paper introduces a fundamentally different perspective: instead of operating in the ambient parameter space, the authors reformulate TDG in the parameter‑increment space induced by parameter‑efficient fine‑tuning (PEFT), specifically Low‑Rank Adaptation (LoRA).

The key theoretical insight is that if the sequence of optimal full‑parameter models {Wₜ} lies on a low‑dimensional manifold M in ℝᵖ, then the corresponding increments ΔWₜ = Wₜ – W_pre also lie on a translated manifold M′ = M – W_pre. This follows from the fact that translation is a diffeomorphism, preserving manifold dimensionality. Consequently, modeling temporal dynamics can be safely confined to the low‑dimensional manifold of increments, regardless of the massive size of the base model.

Building on this, the authors propose Manifold‑aware Temporal LoRA (MaT‑LoRA). In standard LoRA each time‑step t is equipped with an independent low‑rank update ΔWₜ = BₜAₜ (rank r). MaT‑LoRA observes that all Bₜ share a common column subspace and all Aₜ share a common row subspace. By constructing fixed basis matrices B (for columns) and A (for rows) that span these global subspaces, each per‑time update can be expressed as

ΔWₜ = B·Fₜ·A,

where Fₜ = CₜDₜ ∈ ℝ^{r′×r′} is a small “temporal core” matrix that captures all time‑varying information, while B and A are time‑invariant. This manifold‑constrained factorization has several crucial benefits: (1) the number of trainable parameters grows only with the size of the core (r′²) and the fixed bases, not with the total number of model parameters; (2) the updates are guaranteed to lie on a shared low‑dimensional submanifold, making extrapolation to unseen future domains mathematically well‑posed; (3) the representation retains the full expressive power of independent LoRA adapters as long as the updates truly share the same support.

The temporal core Fₜ can be parameterized in multiple ways to match the nature of the underlying data dynamics. The paper presents three instantiations:

- Linear Continuous Dynamics (Lin‑dym MaT‑LoRA) – assumes the core evolves under a constant linear flow, modeled as Fₜ = exp(t·W)·F₀ where W is a learnable matrix. This imposes a strong inductive bias for smooth, predictable shifts.

- Non‑linear Continuous Dynamics – employs neural ordinary differential equations (Neural ODE) or other continuous‑time neural networks to model dF/dt = g(F, t), allowing for complex, non‑linear temporal patterns.

- Arbitrary Function Approximation – uses sequence models such as Transformers or MLPs to directly map timestamps to core matrices, offering maximal flexibility when the temporal pattern is irregular or unknown.

The authors evaluate MaT‑LoRA on four benchmarks: (a) synthetic data where the ground‑truth manifold is known, (b) a news article corpus spanning a decade, (c) scientific abstracts organized by publication year, and (d) product review ratings that drift over time. Across all settings, MaT‑LoRA consistently outperforms strong baselines—including full‑parameter TDG methods based on LSTMs, continuous‑time neural dynamics, and spectral analysis—by 3–7% absolute improvement in accuracy or F1 score. Importantly, the computational overhead of MaT‑LoRA remains essentially constant as model size grows; for a 1‑billion‑parameter LLM the method reduces effective temporal‑modeling FLOPs by more than ten orders of magnitude compared to full‑parameter TDG, enabling training on a single GPU where prior methods would exhaust memory.

Ablation studies demonstrate that (i) sharing the bases B and A is crucial: when each time step is allowed its own independent bases, performance drops and extrapolation becomes unstable; (ii) the dimensionality r′ of the shared subspace controls the trade‑off between expressiveness and efficiency, with r′≈rank(ΔW) yielding the best results; (iii) the choice of core parameterization matters: linear dynamics work best when the distribution shift is smooth, while non‑linear or arbitrary function cores excel on the more erratic review‑rating data.

In summary, the paper makes four major contributions:

- Theoretical foundation showing that low‑dimensional manifold structure survives the translation to parameter‑increment space under PEFT.

- Manifold‑constrained factorization that decouples time‑invariant bases from a compact temporal core, guaranteeing that all updates reside on a shared low‑dimensional manifold.

- Flexible core modeling via linear, non‑linear continuous, or arbitrary function dynamics, allowing the method to adapt to diverse temporal regimes.

- Extensive empirical validation confirming superior temporal generalization, massive scalability, and practical applicability to billion‑parameter LLMs.

MaT‑LoRA thus bridges the gap between the need for sophisticated temporal modeling and the computational constraints of modern LLMs, offering a viable path toward continuously adaptive language models that remain efficient and robust in ever‑changing real‑world environments.

Comments & Academic Discussion

Loading comments...

Leave a Comment