Do Large Language Models Adapt to Language Variation across Socioeconomic Status?

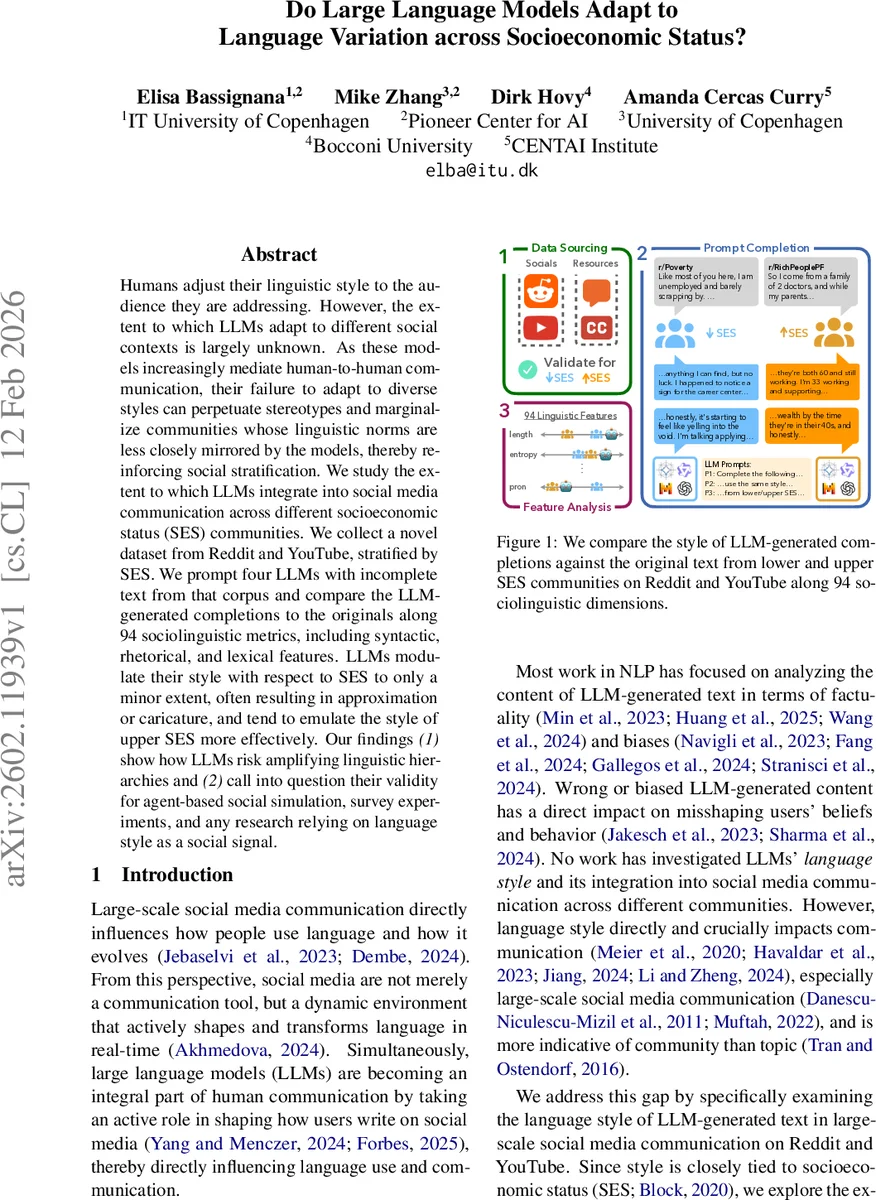

Humans adjust their linguistic style to the audience they are addressing. However, the extent to which LLMs adapt to different social contexts is largely unknown. As these models increasingly mediate human-to-human communication, their failure to adapt to diverse styles can perpetuate stereotypes and marginalize communities whose linguistic norms are less closely mirrored by the models, thereby reinforcing social stratification. We study the extent to which LLMs integrate into social media communication across different socioeconomic status (SES) communities. We collect a novel dataset from Reddit and YouTube, stratified by SES. We prompt four LLMs with incomplete text from that corpus and compare the LLM-generated completions to the originals along 94 sociolinguistic metrics, including syntactic, rhetorical, and lexical features. LLMs modulate their style with respect to SES to only a minor extent, often resulting in approximation or caricature, and tend to emulate the style of upper SES more effectively. Our findings (1) show how LLMs risk amplifying linguistic hierarchies and (2) call into question their validity for agent-based social simulation, survey experiments, and any research relying on language style as a social signal.

💡 Research Summary

This paper investigates whether state‑of‑the‑art large language models (LLMs) can adapt their linguistic style to reflect the socioeconomic status (SES) of the communities they are interacting with. To this end, the authors construct a novel, publicly released dataset drawn from Reddit and YouTube, explicitly stratified into lower‑SES and upper‑SES subsets. Lower‑SES data are collected using keywords related to financial struggle, poverty, and frugality (e.g., “poverty”, “frugal”) and by targeting subreddits such as r/povertyfinance and r/Frugal, as well as first‑person vlog captions on YouTube. Upper‑SES data focus on wealth, lifestyle, and leisure activities associated with higher socioeconomic groups (e.g., “golf”, “sailing”), pulling from subreddits like r/Rich and r/millionaire and corresponding YouTube videos. After cleaning (removing bots, ensuring minimum length of 50 words, filtering for first‑person pronouns, etc.), the final corpus contains roughly 2 000 Reddit posts and 1 500 YouTube captions per SES tier, with token counts in the millions.

The authors validate the SES split by replicating prior findings on readability: metrics such as Automated Readability Index, Coleman‑Liau, Dale‑Chall, Flesch‑Kincaid, Gunning Fog, and Linsear Write all show statistically significant differences (Mann‑Whitney U, p < 0.05) between lower and upper groups, confirming that the language of the two corpora diverges in expected ways.

For the experimental setup, each instance is split into a 25‑word “prompt” segment and a remaining “completion” segment. The prompt is fed to four LLMs—Gemma‑3‑27B‑it, Mistral‑Small‑3.2‑24B‑Instruct, Qwen3‑30B‑A3B‑Instruct, and OpenAI’s GPT‑5—under three prompting conditions: (1) Implicit (IMP) – a simple “complete the following text”, (2) Explicit Language Style (ELS) – instruction to match the style, tone, and diction of the prompt, and (3) Explicit Language Style + SES (ELS‑SES) – the same as ELS but also specifying whether the author is lower‑ or upper‑SES. One completion is generated per prompt using the default temperature.

To quantify style, the authors compute 94 sociolinguistic features covering three broad categories: (a) Biber’s 67 grammatical‑lexical categories (pronouns, tense markers, discourse particles, etc.), (b) part‑of‑speech ratios (nouns vs. pronouns, adverbs, etc.) and length‑based metrics (word count, syllable count, sentence count, polysyllable rate), and (c) higher‑level style measures (concreteness scores, entropy, maximum dependency‑tree depth, named‑entity density, hapax‑legomena rate). All counts are normalized by text length. Statistical significance of differences between model outputs and the original SES‑specific texts is assessed with Mann‑Whitney U tests.

Key findings:

- Limited SES‑aware adaptation – Across all models and prompting styles, the generated completions only partially mirror the target SES style. Features that strongly differentiate lower‑SES from upper‑SES in the human data (e.g., higher pronoun/adverb ratios, shorter sentences, lower concreteness) are often not reproduced; the model outputs tend to converge toward a more “neutral” or upper‑SES‑like style.

- Upper‑SES bias – The models more accurately reproduce the linguistic profile of upper‑SES texts. Formality markers (higher noun/adjective ratios, deeper syntactic trees, higher concreteness) align closely with the human upper‑SES baseline, suggesting that training data contain a disproportionate amount of higher‑SES language.

- Prompt specificity matters modestly – Adding explicit style instructions (ELS) yields modest improvements over the implicit baseline, but specifying SES (ELS‑SES) does not substantially close the gap for lower‑SES style. The effect size is small, indicating that current instruction‑following capabilities are insufficient for fine‑grained sociolinguistic adaptation.

- Context length effect – When the prompt segment is longer (more than 25 words, explored in an ablation), models show a stronger tendency to emulate upper‑SES style, implying that richer context amplifies existing biases rather than mitigating them.

The authors discuss broader implications: (i) LLMs that preferentially emulate higher‑SES language may reinforce existing linguistic hierarchies, marginalizing speakers from lower‑SES backgrounds in mediated communication (e.g., chat assistants, content recommendation). (ii) Social‑science methodologies that rely on LLM‑generated text as a proxy for human participants (e.g., survey experiments, agent‑based simulations) risk invalid conclusions if the models cannot faithfully reproduce SES‑specific linguistic cues.

Limitations are acknowledged: the SES labeling relies on community‑level heuristics rather than individual income or education data; the 94 features focus on structural style and may not capture nuanced pragmatic or discourse‑level differences; and the study does not control for model size, pre‑training corpus composition, or fine‑tuning differences that could explain the observed bias.

Future work is suggested along three axes: (a) richer, individually verified SES annotations and inclusion of additional platforms (e.g., forums, blogs) to test generalizability, (b) targeted fine‑tuning or low‑rank adaptation (e.g., LoRA) to improve lower‑SES style fidelity, and (c) probing the internal representations of LLMs to identify SES‑related token embeddings and to develop data‑balancing strategies.

In sum, the paper provides the first open‑access, SES‑stratified social‑media corpus and a comprehensive, metric‑driven evaluation of LLM style adaptation. The findings reveal a systematic bias toward upper‑SES language, limited capacity for SES‑aware style modulation, and raise important ethical and methodological concerns for the deployment of LLMs in socially diverse contexts.

Comments & Academic Discussion

Loading comments...

Leave a Comment