Cross-Modal Robustness Transfer (CMRT): Training Robust Speech Translation Models Using Adversarial Text

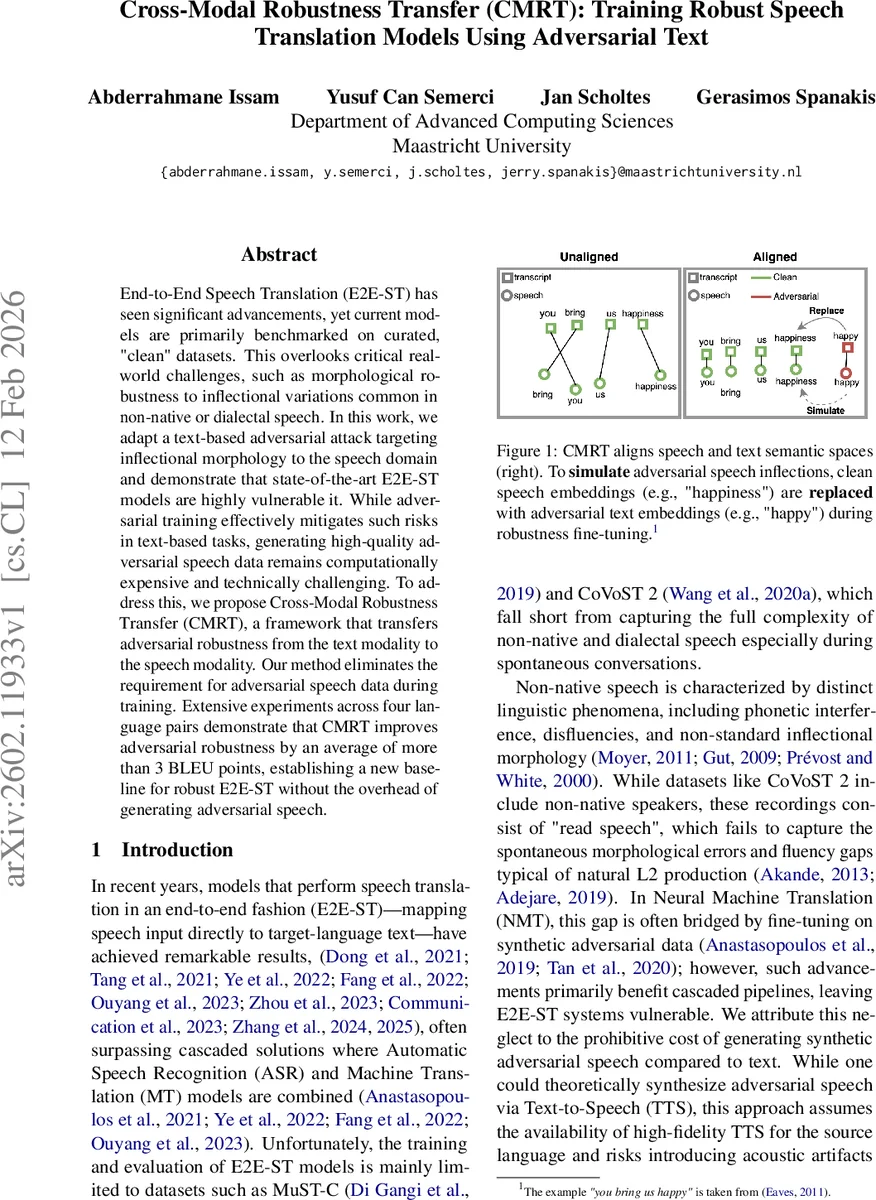

End-to-End Speech Translation (E2E-ST) has seen significant advancements, yet current models are primarily benchmarked on curated, “clean” datasets. This overlooks critical real-world challenges, such as morphological robustness to inflectional variations common in non-native or dialectal speech. In this work, we adapt a text-based adversarial attack targeting inflectional morphology to the speech domain and demonstrate that state-of-the-art E2E-ST models are highly vulnerable it. While adversarial training effectively mitigates such risks in text-based tasks, generating high-quality adversarial speech data remains computationally expensive and technically challenging. To address this, we propose Cross-Modal Robustness Transfer (CMRT), a framework that transfers adversarial robustness from the text modality to the speech modality. Our method eliminates the requirement for adversarial speech data during training. Extensive experiments across four language pairs demonstrate that CMRT improves adversarial robustness by an average of more than 3 BLEU points, establishing a new baseline for robust E2E-ST without the overhead of generating adversarial speech.

💡 Research Summary

The paper tackles a critical gap in End‑to‑End Speech Translation (E2E‑ST): while recent models achieve impressive results on clean benchmark datasets, they are largely untested against the morphological variations that frequently appear in non‑native or dialectal speech. To expose this weakness, the authors adapt the MORPHEUS adversarial attack—originally designed for text—to the speech domain (named Speech‑MORPHEUS) and demonstrate that state‑of‑the‑art E2E‑ST systems suffer substantial BLEU drops when faced with inflectional perturbations.

Generating adversarial speech at scale is prohibitively expensive because it requires high‑quality text‑to‑speech synthesis and introduces acoustic artifacts that can confound training. To avoid this bottleneck, the authors propose Cross‑Modal Robustness Transfer (CMRT), a two‑stage framework that transfers robustness from the text modality to the speech modality using only adversarial text data.

Stage 1 – Robustness Transfer (CMRT‑TR).

The goal is to align speech and text representations in a shared semantic space. The method relies on forced word‑level alignments between audio frames and transcription tokens. For each word, mean‑pooled speech embeddings and mean‑pooled text embeddings are treated as a positive pair, while all other words in the batch serve as negatives. Word‑Aligned Contrastive Learning (WA‑CO) maximizes cosine similarity between these pairs. In parallel, a mixup strategy randomly selects, for each word, either its speech embedding or its text embedding with probability p*. The selected segments are concatenated to form a mixed representation m. The model is trained jointly with (i) the standard speech‑translation (ST) loss, (ii) a machine‑translation (MT) loss on text, (iii) the contrastive WA‑CO loss, (iv) a cross‑entropy loss on the mixed representation, and (v) symmetric KL‑divergence terms that force the output distribution of m to match those of pure speech and pure text inputs. This combination forces the encoder‑decoder to become agnostic to the modality of its input while preserving a tight speech‑text alignment.

Stage 2 – Robustness Fine‑tuning (CMRT‑FN).

Instead of generating adversarial audio, the authors use adversarial text examples produced by MORPHEUS. For each training instance, they identify the set of words altered by the attack (e_I). The embeddings of these altered words are taken directly from the adversarial text and injected into the aligned speech latent space; all other words follow the standard mixup rule. The speech encoder is frozen to keep the alignment intact, and the rest of the network is fine‑tuned on these “adversarial mixup” sequences using (i) a cross‑entropy loss and (ii) asymmetric KL‑divergence that pushes the model’s output for the adversarial mixup to resemble the outputs for clean speech and clean text. This mirrors virtual adversarial training, encouraging the model to be robust to perturbations without ever seeing adversarial audio.

Experiments.

The authors evaluate CMRT on four language pairs: English‑German, English‑Catalan, English‑Arabic, and French‑English. They construct a Speech‑MORPHEUS test set that introduces realistic inflectional errors. Compared to baseline E2E‑ST models, CMRT yields an average improvement of more than 3 BLEU points on the adversarial test set. Importantly, when compared with models that are fine‑tuned on synthetically generated adversarial speech, CMRT achieves comparable robustness while maintaining or even improving performance on the original clean CoVoST2 test set. This demonstrates that the method avoids the typical robustness‑accuracy trade‑off.

Key insights and contributions.

- A strong speech‑text semantic alignment (via WA‑CO and mixup) is essential for transferring robustness across modalities.

- Injecting adversarial text embeddings directly into the speech latent space is sufficient to teach the model to handle inflectional noise.

- The approach eliminates the need for costly adversarial speech generation, making robustness training scalable.

The paper concludes that CMRT provides a practical, low‑cost pathway to more morphologically robust speech translation and opens avenues for extending the technique to other types of acoustic perturbations (e.g., prosody, speed) and to a broader set of languages and domains.

Comments & Academic Discussion

Loading comments...

Leave a Comment