TADA! Tuning Audio Diffusion Models through Activation Steering

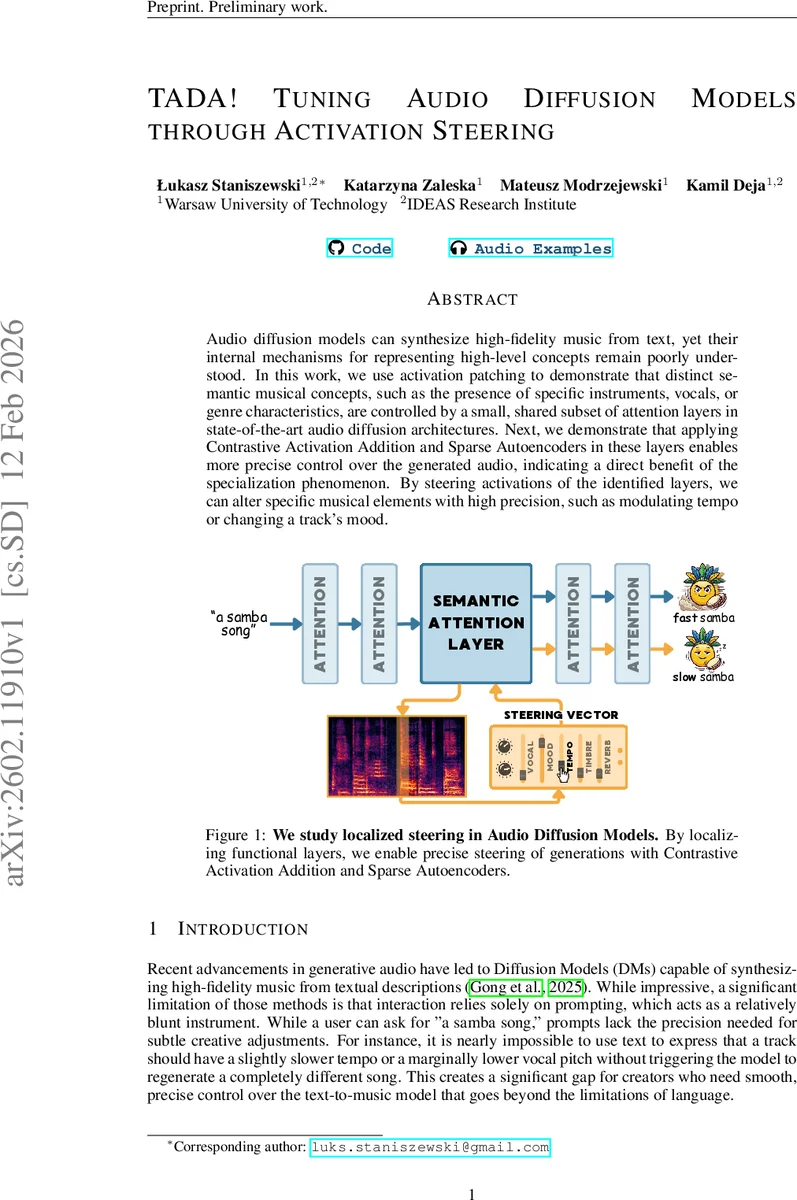

Audio diffusion models can synthesize high-fidelity music from text, yet their internal mechanisms for representing high-level concepts remain poorly understood. In this work, we use activation patching to demonstrate that distinct semantic musical concepts, such as the presence of specific instruments, vocals, or genre characteristics, are controlled by a small, shared subset of attention layers in state-of-the-art audio diffusion architectures. Next, we demonstrate that applying Contrastive Activation Addition and Sparse Autoencoders in these layers enables more precise control over the generated audio, indicating a direct benefit of the specialization phenomenon. By steering activations of the identified layers, we can alter specific musical elements with high precision, such as modulating tempo or changing a track’s mood.

💡 Research Summary

This paper investigates the internal mechanisms of state‑of‑the‑art text‑to‑music diffusion models and proposes a method for fine‑grained control of generated audio through activation steering. The authors first apply activation patching, a causal mediation technique, to locate the specific cross‑attention layers that encode high‑level musical concepts such as instrument presence, vocal gender, tempo, mood, and genre. By constructing counterfactual prompt pairs (e.g., “female vocalist” vs. “male vocalist”) and swapping the cached key‑value pairs of a single layer during generation, they identify a small, shared subset of layers—typically 2‑4 out of 64 (U‑Net) or 24 (Transformer) cross‑attention blocks—that act as semantic bottlenecks.

Having isolated these functional layers across three models (AudioLDM2, Stable Audio Open, and Ace‑Step), the authors introduce two steering techniques. The first, Contrastive Activation Addition (CAA), computes a normalized difference vector between the averaged cross‑attention outputs of many contrastive prompt pairs and adds this vector, scaled by a strength parameter α, to the identified layers. A re‑normalization step preserves activation magnitude. The second technique trains a Top‑K Sparse Autoencoder (SAE) on the most responsive layer, extracts a sparse latent code, and ranks features using a TF‑IDF‑like importance score. The decoder columns of the top‑scoring features are summed to form a steering vector v_SAE, which is added directly to the layer’s output.

Evaluation uses four metrics: Preservation (LPIPS and FAD against the unsteered baseline), Δ Alignment (change in CLAP or MuQ similarity between extreme α values), Smoothness (standard deviation of alignment differences across α), and Audio Quality (Audiobox Aesthetics). Results show that steering confined to the functional layers yields substantially higher alignment gains (30‑45 % increase) while keeping preservation loss below 5 % and maintaining high aesthetic scores. Specific experiments demonstrate precise tempo modulation, vocal gender swapping, instrument replacement, and mood shifts, with smooth interpolation as α varies.

The study reveals a “specialization phenomenon”: despite the massive parameter count, audio diffusion models concentrate semantic control in a narrow set of cross‑attention blocks, mirroring findings in vision and language models. Targeted activation steering thus offers a more efficient and less destructive alternative to global interventions or reliance on textual prompts alone. Moreover, the SAE‑based approach provides interpretable, sparse features that can be mapped to musical attributes, opening the door to user‑friendly sliders or real‑time editing tools.

Limitations include the focus on short (10‑30 s) audio clips, a limited set of concepts, and the lack of experiments on simultaneous multi‑concept steering or real‑time deployment. Future work could extend the methodology to longer compositions, explore compositional interactions between concepts, and integrate reinforcement learning for adaptive steering.

In conclusion, by pinpointing semantic bottleneck layers and applying contrastive activation addition or sparse autoencoder‑derived vectors, the authors achieve precise, high‑fidelity manipulation of generated music. This advances the controllability of diffusion‑based audio synthesis and provides a solid foundation for interactive music creation systems.

Comments & Academic Discussion

Loading comments...

Leave a Comment