Talk2DM: Enabling Natural Language Querying and Commonsense Reasoning for Vehicle-Road-Cloud Integrated Dynamic Maps with Large Language Models

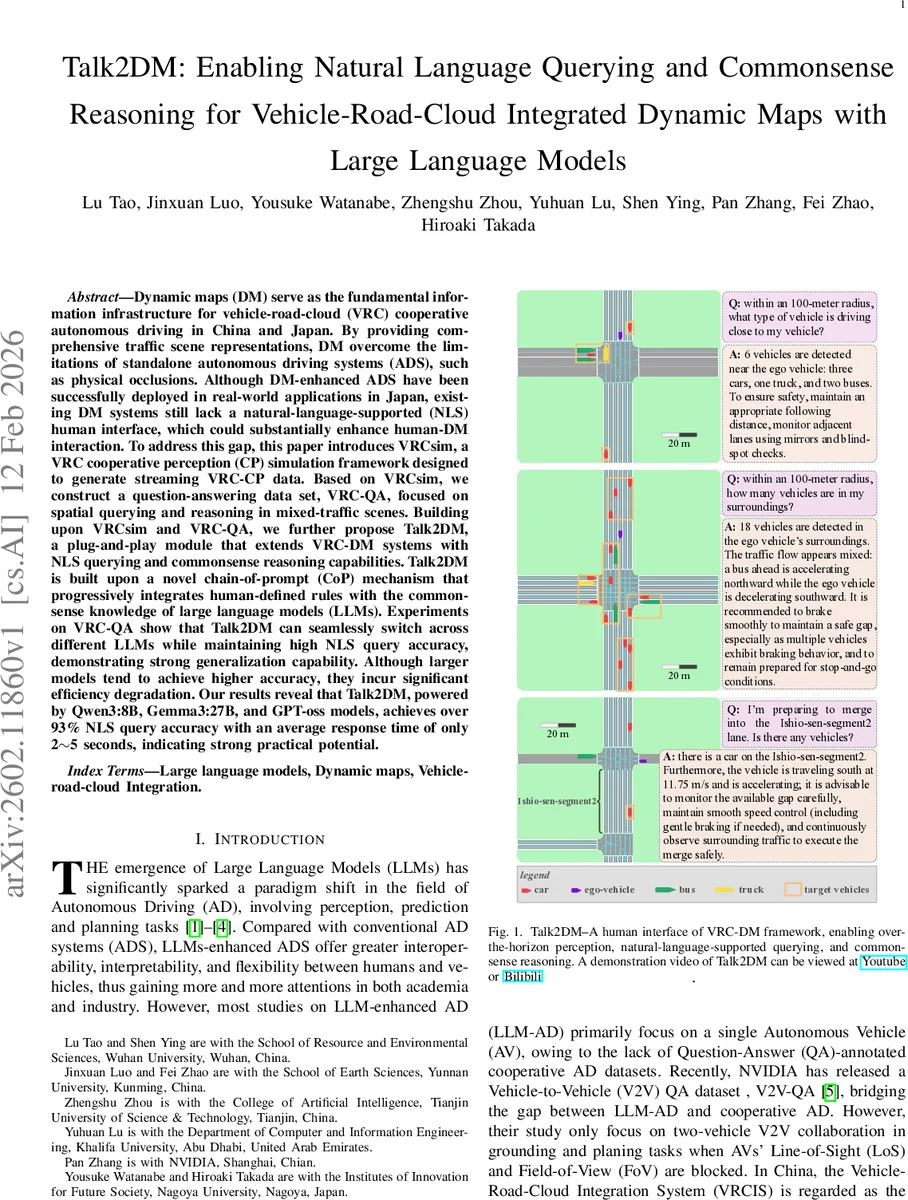

Dynamic maps (DM) serve as the fundamental information infrastructure for vehicle-road-cloud (VRC) cooperative autonomous driving in China and Japan. By providing comprehensive traffic scene representations, DM overcome the limitations of standalone autonomous driving systems (ADS), such as physical occlusions. Although DM-enhanced ADS have been successfully deployed in real-world applications in Japan, existing DM systems still lack a natural-language-supported (NLS) human interface, which could substantially enhance human-DM interaction. To address this gap, this paper introduces VRCsim, a VRC cooperative perception (CP) simulation framework designed to generate streaming VRC-CP data. Based on VRCsim, we construct a question-answering data set, VRC-QA, focused on spatial querying and reasoning in mixed-traffic scenes. Building upon VRCsim and VRC-QA, we further propose Talk2DM, a plug-and-play module that extends VRC-DM systems with NLS querying and commonsense reasoning capabilities. Talk2DM is built upon a novel chain-of-prompt (CoP) mechanism that progressively integrates human-defined rules with the commonsense knowledge of large language models (LLMs). Experiments on VRC-QA show that Talk2DM can seamlessly switch across different LLMs while maintaining high NLS query accuracy, demonstrating strong generalization capability. Although larger models tend to achieve higher accuracy, they incur significant efficiency degradation. Our results reveal that Talk2DM, powered by Qwen3:8B, Gemma3:27B, and GPT-oss models, achieves over 93% NLS query accuracy with an average response time of only 2-5 seconds, indicating strong practical potential.

💡 Research Summary

The paper addresses a critical gap in vehicle‑road‑cloud (VRC) cooperative autonomous driving: the lack of a natural‑language‑supported (NLS) interface for dynamic maps (DM). While DMs provide a unified, relational representation of traffic scenes across vehicles, roadside units (RSUs), and cloud servers, interaction with them has traditionally relied on SQL‑like queries, which are rigid and unintuitive for human operators. To bridge this gap, the authors propose Talk2DM, a plug‑and‑play module that equips existing VRC‑DM platforms with NLS querying and commonsense reasoning capabilities powered by large language models (LLMs).

The contribution is built on three pillars. First, VRCsim, a simulation framework that integrates SUMO (microscopic traffic simulation), ROS2 (middleware for data distribution), and Qt6 (GUI). VRCsim generates streaming cooperative perception (CP) data from AVs and RSUs, models perception with simple geometric rules (Equations 1 and 2), and publishes object information (OI) via a DDS‑based “/OI” topic. The cloud DM node aggregates OI, reconstructs a digital vector scene, and renders it into textual descriptions suitable for LLM input.

Second, the authors construct VRC‑QA, a large‑scale question‑answer dataset tailored to VRC‑DM. It contains over 10 K mixed‑traffic scenes and more than 100 K QA pairs covering three categories: (i) spatial queries (e.g., “What vehicles are within 10 m of the ego vehicle?”), (ii) traffic‑flow and safety reasoning (e.g., “Is it safe to merge when a bus is accelerating?”), and (iii) scenario‑based decision making (e.g., “What speed adjustment is needed to enter a merging lane?”). Answers are derived from simulation logs and validated by domain experts, providing a reliable benchmark for LLM‑based reasoning in cooperative driving contexts.

Third, Talk2DM itself implements a novel Chain‑of‑Prompt (CoP) mechanism. CoP consists of three stages: (1) a rule‑based pre‑processing step where human‑crafted domain rules (e.g., “If line‑of‑sight is blocked, return the nearest detectable object”) are embedded into the prompt; (2) the raw natural‑language query from the user is passed to the LLM, which leverages its pretrained commonsense knowledge together with the contextual information from VRC‑QA; (3) a post‑processing/validation stage that enforces structured output constraints (integer vehicle counts, speed units, etc.). This design makes Talk2DM model‑agnostic: any off‑the‑shelf LLM can be swapped without retraining, and the system automatically adapts to the model’s capabilities.

Experiments evaluate six contemporary LLMs (Qwen‑3:8B, Gemma‑3:27B, GPT‑oss, DeepSeek‑r1, LLaMA‑3.1, and a proprietary model) within the same CoP pipeline. Accuracy improves with model size, reaching 96.2 % for the largest Gemma‑3:27B, while smaller models still achieve >93 % accuracy. Average response latency ranges from 2 to 5 seconds, acceptable for real‑time driver assistance. Compared with a baseline SQL‑based DM query interface (≈78 % accuracy), Talk2DM demonstrates a substantial gain in both usability and performance.

The paper also discusses limitations. The rule‑based component requires domain experts to craft and maintain prompts, which may become burdensome when extending to novel traffic modalities such as autonomous trams or aerial drones. Moreover, deploying large LLMs in the cloud introduces network latency; future work should explore edge‑optimized lightweight models and automatic rule extraction to reduce human effort.

In summary, Talk2DM showcases how integrating LLMs with dynamic map infrastructures can transform VRC‑DM from a rigid data store into an interactive, reasoning‑capable assistant. The open‑source VRCsim and VRC‑QA resources, together with the demonstrated CoP framework, provide a solid foundation for subsequent research on natural‑language interfaces, multimodal perception‑LLM fusion, and real‑world validation in cooperative autonomous driving ecosystems.

Comments & Academic Discussion

Loading comments...

Leave a Comment