Mask What Matters: Mitigating Object Hallucinations in Multimodal Large Language Models with Object-Aligned Visual Contrastive Decoding

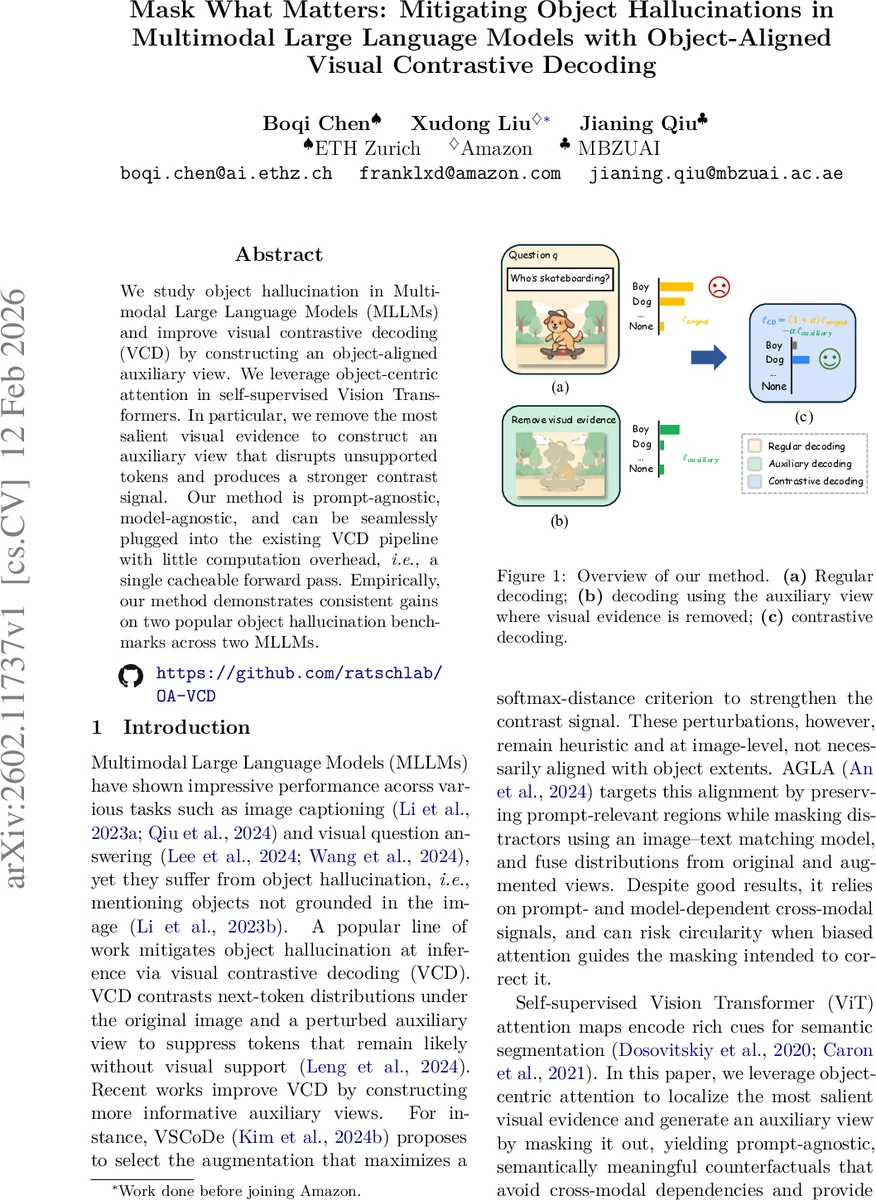

We study object hallucination in Multimodal Large Language Models (MLLMs) and improve visual contrastive decoding (VCD) by constructing an object-aligned auxiliary view. We leverage object-centric attention in self-supervised Vision Transformers. In particular, we remove the most salient visual evidence to construct an auxiliary view that disrupts unsupported tokens and produces a stronger contrast signal. Our method is prompt-agnostic, model-agnostic, and can be seamlessly plugged into the existing VCD pipeline with little computation overhead, i.e., a single cacheable forward pass. Empirically, our method demonstrates consistent gains on two popular object hallucination benchmarks across two MLLMs.

💡 Research Summary

The paper tackles the pervasive problem of object hallucination in Multimodal Large Language Models (MLLMs), where the model mentions objects that are not present in the visual input. While Visual Contrastive Decoding (VCD) has emerged as a promising inference‑time technique—contrasting the token distribution generated from the original image with that from a perturbed auxiliary view—the existing methods for creating the auxiliary view rely on heuristic image‑level perturbations (blur, crop, color shift) that are not aligned with object boundaries. Consequently, the contrast signal is often weak and may not effectively suppress hallucinated tokens.

To address this, the authors propose an object‑aligned auxiliary view generated by exploiting the object‑centric attention maps of a self‑supervised Vision Transformer (ViT), specifically DINO. The process is as follows: (1) extract the

Comments & Academic Discussion

Loading comments...

Leave a Comment