OMEGA-Avatar: One-shot Modeling of 360° Gaussian Avatars

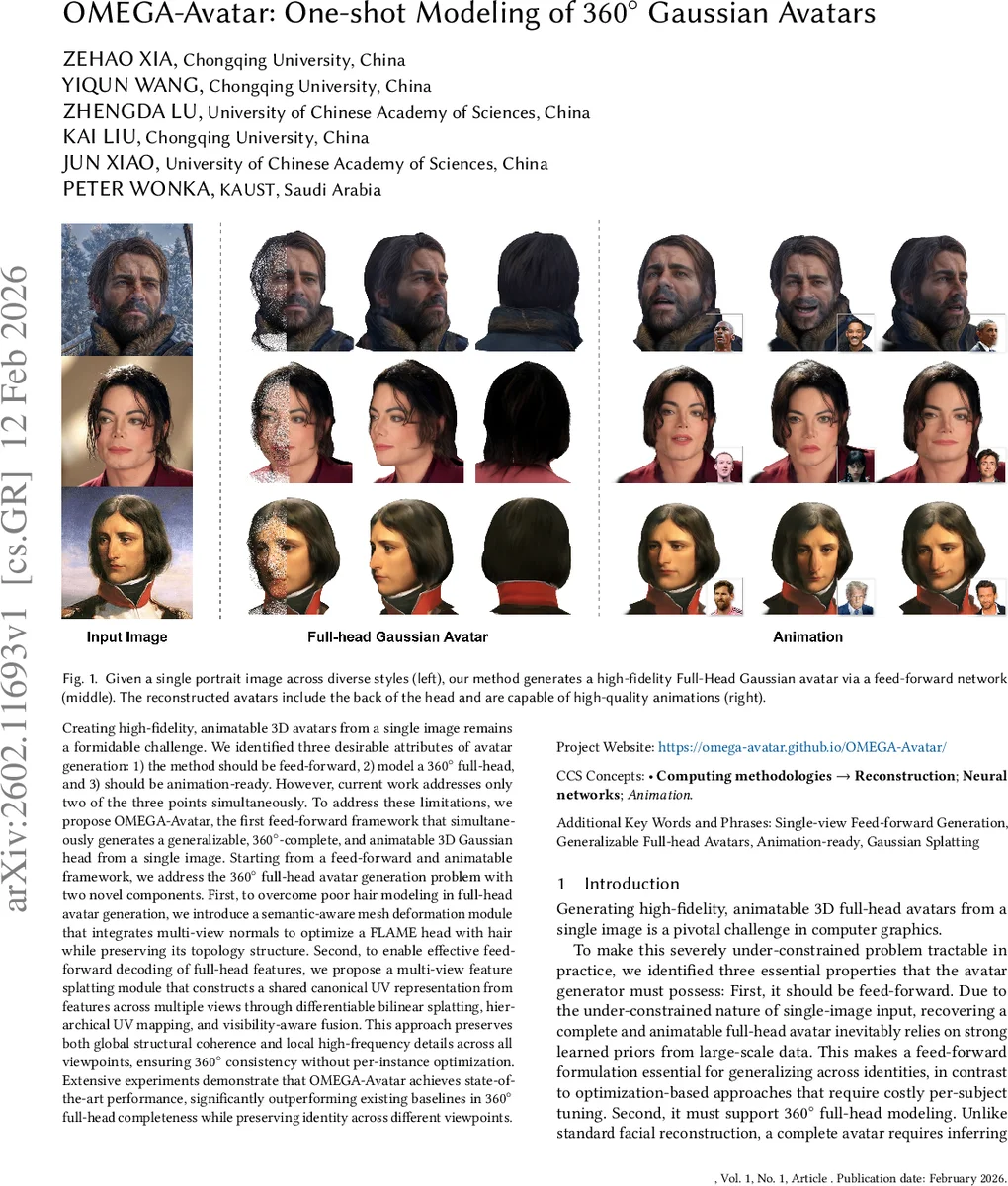

Creating high-fidelity, animatable 3D avatars from a single image remains a formidable challenge. We identified three desirable attributes of avatar generation: 1) the method should be feed-forward, 2) model a 360° full-head, and 3) should be animation-ready. However, current work addresses only two of the three points simultaneously. To address these limitations, we propose OMEGA-Avatar, the first feed-forward framework that simultaneously generates a generalizable, 360°-complete, and animatable 3D Gaussian head from a single image. Starting from a feed-forward and animatable framework, we address the 360° full-head avatar generation problem with two novel components. First, to overcome poor hair modeling in full-head avatar generation, we introduce a semantic-aware mesh deformation module that integrates multi-view normals to optimize a FLAME head with hair while preserving its topology structure. Second, to enable effective feed-forward decoding of full-head features, we propose a multi-view feature splatting module that constructs a shared canonical UV representation from features across multiple views through differentiable bilinear splatting, hierarchical UV mapping, and visibility-aware fusion. This approach preserves both global structural coherence and local high-frequency details across all viewpoints, ensuring 360° consistency without per-instance optimization. Extensive experiments demonstrate that OMEGA-Avatar achieves state-of-the-art performance, significantly outperforming existing baselines in 360° full-head completeness while robustly preserving identity across different viewpoints.

💡 Research Summary

OMEGA‑Avatar tackles the long‑standing problem of generating a high‑fidelity, animatable 3D head from a single portrait image while satisfying three desiderata: feed‑forward inference, full 360° coverage, and animation readiness. The authors observe that prior works either lack full‑head completeness, require costly per‑instance optimization, or cannot be driven by explicit expression/pose parameters. To bridge this gap, OMEGA‑Avatar introduces two novel modules that operate within a feed‑forward pipeline.

First, a semantic‑aware mesh deformation stage enriches a FLAME template with detailed geometry for the entire head, including hair and the back of the skull. The method leverages a pre‑trained multi‑view diffusion model to synthesize six consistent RGB views and corresponding normal maps from the single input image. These normal maps serve as multi‑view geometric priors. An optimization over per‑vertex offsets ΔV is performed, guided by a normal consistency loss, a landmark projection loss, and a spatially varying Laplacian regularizer that heavily penalizes deviations in hair and cranial regions while preserving the facial topology. A semantic mask restricts normal‑based deformation to non‑facial areas, ensuring that the underlying FLAME parametric structure remains intact for later animation.

Second, the multi‑view feature splatting module aggregates high‑level image features from the generated views into a shared canonical UV space. Features are extracted with a DINOv3‑based backbone plus a Feature Pyramid Network, then projected onto UV coordinates via differentiable bilinear splatting. Hierarchical UV mapping builds a coarse‑to‑fine pyramid to fill missing regions, while a visibility‑aware fusion computes per‑pixel weights from view confidence and sampling density. The result is a dense, view‑consistent UV feature map that captures both global structure and fine‑grained texture details across all viewpoints.

The avatar representation combines two Gaussian branches. The vertex Gaussian branch anchors a set of 3D Gaussians directly to FLAME vertices; each vertex receives a learned local embedding concatenated with a global identity token extracted from the frontal view. This branch guarantees that expression and pose parameters can be applied instantly, preserving geometric stability during animation. The UV Gaussian branch decodes the canonical UV feature map into a dense set of Gaussians that model surface appearance, ensuring view‑consistent color, opacity, and high‑frequency detail. The two branches are merged, yielding a hybrid Gaussian representation that is both structurally sound and visually rich.

Training optimizes the composite loss described above, and animation is achieved by feeding expression and pose parameters derived from a target image into the deformed FLAME mesh. A lightweight neural renderer refines the final render, correcting minor artifacts and enhancing realism.

Extensive quantitative experiments on the A‑va256 dataset (derived from FFHQ) and a proprietary multi‑view head dataset demonstrate that OMEGA‑Avatar outperforms state‑of‑the‑art baselines such as GAGA‑Avatar, SOAP, and FATE. Metrics improve by up to 2.95% in PSNR, 0.30% in SSIM, and significant reductions in LPIPS and depth‑error, especially for side and rear views where prior methods typically collapse. Qualitative results show faithful identity preservation, realistic hair geometry, and smooth animation driven by FLAME parameters.

In summary, OMEGA‑Avatar delivers a fully feed‑forward, generalizable pipeline that produces complete 360° Gaussian head avatars from a single image, integrates multi‑view geometric priors for accurate hair and cranial modeling, and employs a differentiable UV‑space feature splatting mechanism to maintain high‑frequency detail across all viewpoints. This work establishes a new benchmark for one‑shot, animation‑ready avatar creation, opening avenues for real‑time virtual avatars in telepresence, gaming, and AR/VR applications.

Comments & Academic Discussion

Loading comments...

Leave a Comment