Clutt3R-Seg: Sparse-view 3D Instance Segmentation for Language-grounded Grasping in Cluttered Scenes

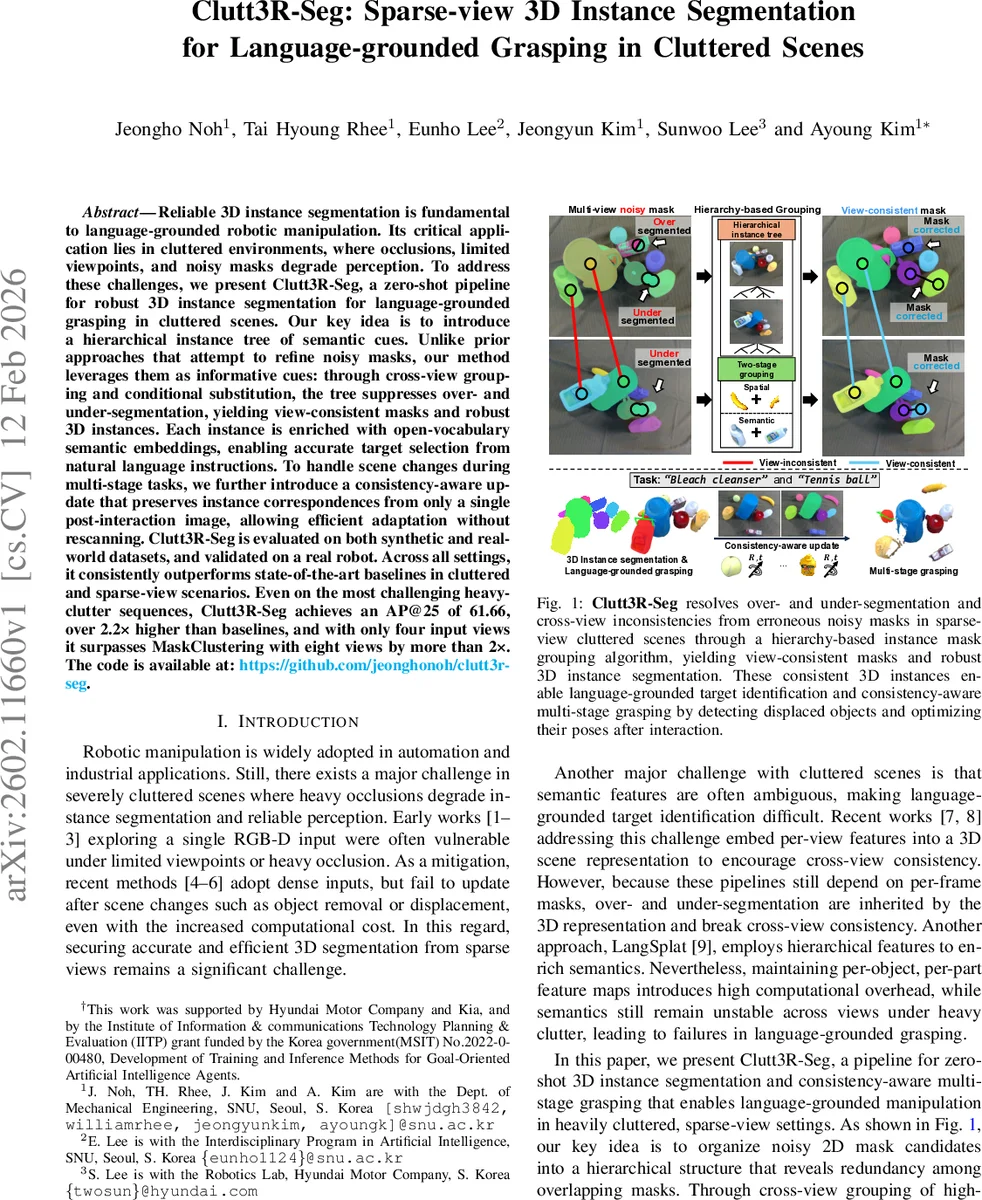

Reliable 3D instance segmentation is fundamental to language-grounded robotic manipulation. Its critical application lies in cluttered environments, where occlusions, limited viewpoints, and noisy masks degrade perception. To address these challenges, we present Clutt3R-Seg, a zero-shot pipeline for robust 3D instance segmentation for language-grounded grasping in cluttered scenes. Our key idea is to introduce a hierarchical instance tree of semantic cues. Unlike prior approaches that attempt to refine noisy masks, our method leverages them as informative cues: through cross-view grouping and conditional substitution, the tree suppresses over- and under-segmentation, yielding view-consistent masks and robust 3D instances. Each instance is enriched with open-vocabulary semantic embeddings, enabling accurate target selection from natural language instructions. To handle scene changes during multi-stage tasks, we further introduce a consistency-aware update that preserves instance correspondences from only a single post-interaction image, allowing efficient adaptation without rescanning. Clutt3R-Seg is evaluated on both synthetic and real-world datasets, and validated on a real robot. Across all settings, it consistently outperforms state-of-the-art baselines in cluttered and sparse-view scenarios. Even on the most challenging heavy-clutter sequences, Clutt3R-Seg achieves an AP@25 of 61.66, over 2.2x higher than baselines, and with only four input views it surpasses MaskClustering with eight views by more than 2x. The code is available at: https://github.com/jeonghonoh/clutt3r-seg.

💡 Research Summary

Clutt3R‑Seg tackles two intertwined challenges in robotic manipulation: robust 3D instance segmentation from a few sparse RGB‑D views in heavily cluttered scenes, and direct grounding of natural‑language commands to the segmented objects. The pipeline begins by feeding posed RGB images to Grounded SAM with the generic prompt “object”, obtaining a large set of noisy 2D masks that include proper, over‑segmented, and under‑segmented candidates. Simultaneously, a depth‑estimation network (MVSAnything) reconstructs a point cloud, which is down‑sampled and clustered into super‑voxels using a distance‑plus‑normal similarity metric.

The core contribution is a hierarchical instance tree that organizes the noisy masks into three levels—object‑cluster, object, and sub‑object—such that each root‑to‑leaf path contains at most one proper mask. This structural constraint makes it possible to treat leaf nodes as the only viable proper‑segment candidates. All leaf nodes from every view are then assembled into a global graph. A two‑stage grouping algorithm merges nodes first by spatial similarity (a weighted Jaccard index over super‑voxel occupancy) and then by semantic similarity (cosine similarity of Duoduo‑CLIP embeddings). Spatial similarity stabilizes grouping under sparse viewpoints and heavy occlusion, while semantic similarity resolves ambiguities across different appearances.

After the initial grouping, residual nodes that still cause over‑segmentation are corrected through conditional parent substitution: a residual node is replaced by its parent mask, effectively merging over‑segmented fragments into the correct object mask. The result is a set of view‑consistent 2D masks that, when projected back onto the 3D point cloud, yield robust 3D instance segments despite the original noise.

Each 3D instance is enriched with an open‑vocabulary CLIP embedding, enabling zero‑shot language grounding. A user can issue arbitrary text commands such as “bleach cleanser” or “tennis ball”; the system computes cosine similarity between the command embedding and each instance embedding, selecting the most likely target without any pre‑defined label set.

To support multi‑stage grasping, Clutt3R‑Seg introduces a consistency‑aware scene update that requires only a single post‑interaction image. After a grasp, the new image is processed to obtain fresh masks, which are matched to existing instances using the same hierarchical grouping and semantic similarity. This preserves the identity and pose of unchanged objects while detecting displaced or newly exposed objects, allowing the robot to re‑plan without rescanning the entire scene.

Extensive experiments on synthetic YCB‑Clutter data and real‑world robot setups demonstrate the method’s superiority. In the most challenging heavy‑clutter sequences, Clutt3R‑Seg achieves an AP@25 of 61.66 %, more than twice the performance of state‑of‑the‑art baselines. With only four input views it outperforms MaskClustering that uses eight views by a factor of two. Ablation studies confirm that each component—hierarchical tree, two‑stage grouping, conditional substitution, and the single‑image update—contributes significantly to the final accuracy.

The paper’s contributions can be summarized as: (1) a novel hierarchical mask organization that turns noisy 2D outputs into reliable cross‑view groups, (2) a dual similarity grouping strategy that is robust to sparse viewpoints and heavy occlusion, (3) a conditional substitution step that eliminates over‑segmentation, (4) open‑vocabulary semantic enrichment for zero‑shot language grounding, and (5) an efficient post‑grasp update mechanism that maintains scene consistency with minimal perception overhead. The authors release code, simulation environments, and synthetic datasets, facilitating reproducibility and future research. Overall, Clutt3R‑Seg provides a practical, scalable solution for language‑guided manipulation in real‑world cluttered environments.

Comments & Academic Discussion

Loading comments...

Leave a Comment