Neuro-Symbolic Multitasking: A Unified Framework for Discovering Generalizable Solutions to PDE Families

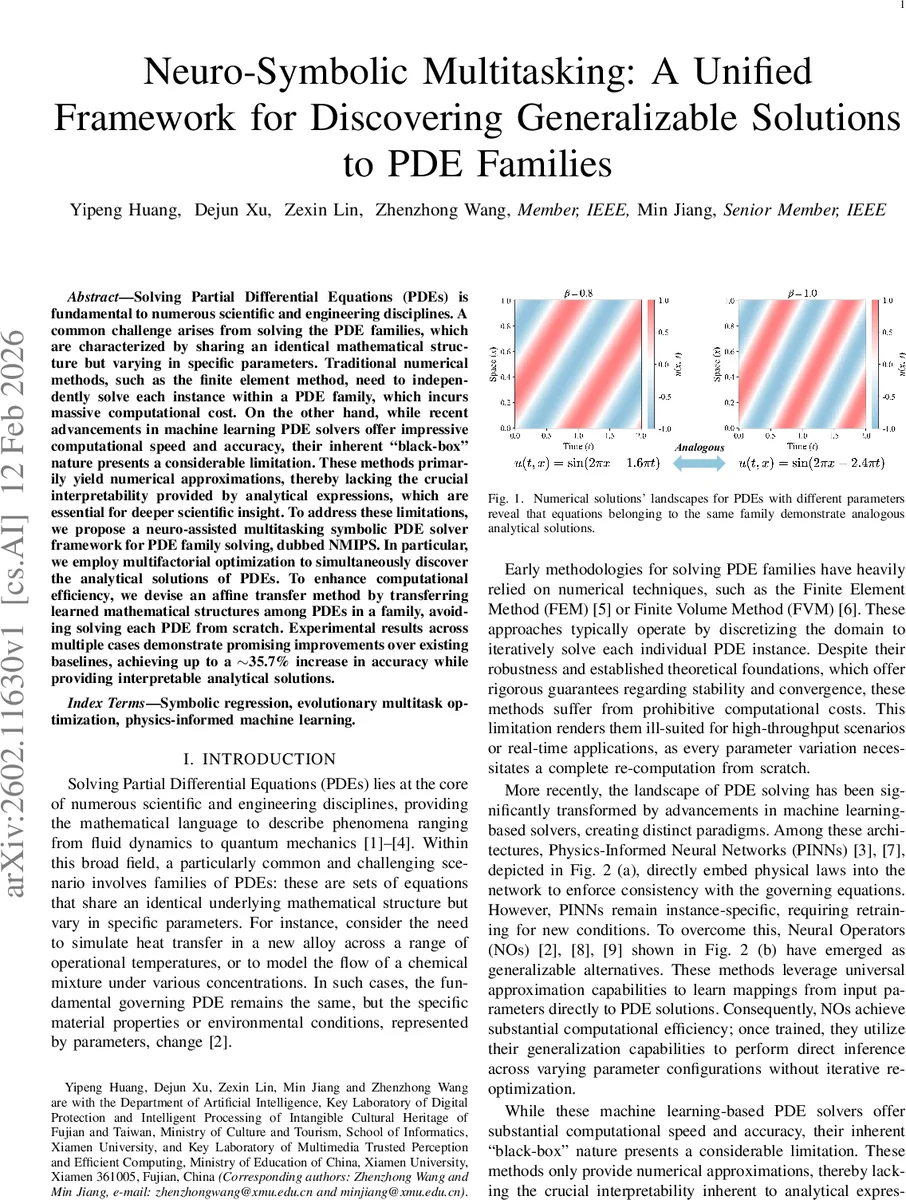

Solving partial differential equations (PDEs) is fundamental to numerous scientific and engineering disciplines. A common challenge is solving PDE families, whose members share an identical mathematical structure but differ in specific parameters. Traditional numerical methods, such as the finite element method, must solve each instance within a PDE family independently, which incurs massive computational cost. Recent machine learning PDE solvers offer impressive computational speed and accuracy, but their inherent "black-box" nature is a considerable limitation: they primarily yield numerical approximations and thus lack the interpretability of analytical expressions, which is essential for deeper scientific insight. To address these limitations, we propose a neuro-assisted multitasking symbolic PDE solver framework for PDE families, dubbed NMIPS. In particular, we employ multifactorial optimization to simultaneously discover the analytical solutions of the PDEs in a family. To enhance computational efficiency, we devise an affine transfer method that transfers learned mathematical structures among the PDEs in a family, avoiding solving each PDE from scratch. Experimental results across multiple cases demonstrate promising improvements over existing baselines, achieving up to a $\sim$35.7% increase in accuracy while providing interpretable analytical solutions.

💡 Research Summary

The paper introduces NMIPS (Neuro‑assisted Multitasking Symbolic PDE Solver), a novel framework that simultaneously discovers analytical solutions for families of partial differential equations (PDEs) sharing the same mathematical skeleton but differing in parameter values. Traditional numerical solvers (FEM, FDM, etc.) must recompute each instance from scratch, leading to prohibitive costs in multi‑query scenarios. Recent data‑driven approaches such as Physics‑Informed Neural Networks (PINNs) and Neural Operators provide fast numerical approximations but remain black‑box models, offering no closed‑form expressions and thus limited scientific insight. Symbolic regression (SR), especially Genetic Programming Symbolic Regression (GPSR), can yield interpretable formulas but is limited to solving a single PDE at a time, making it unsuitable for PDE families.

NMIPS tackles these shortcomings by combining two key ideas: (1) Multifactorial Optimization (MFO) and (2) an Affine Transfer mechanism. In the MFO stage, each PDE instance (task) is encoded as a symbolic expression tree (operators, variables, constants). All tasks are placed into a unified search space, allowing a single evolutionary population to evolve solutions for all tasks concurrently. A “skill‑transfer” scheme converts task‑specific fitness (PDE residuals, boundary losses) into a scalar shared fitness, so that individuals performing well on one task can influence selection pressure on other tasks. This multi‑task evolutionary process exploits the structural similarity among tasks without requiring separate runs.
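To make the idea of a single scalar shared fitness concrete, the following is a minimal sketch of how the standard multifactorial evolutionary algorithm ranks one population against several tasks. It is an illustration under assumptions, not the NMIPS implementation: the function name `scalar_fitness` and the toy cost matrix are hypothetical, and the summary does not state whether NMIPS uses exactly this rank-based rule. Each entry of `costs` stands in for a task-specific loss such as a PDE residual plus boundary loss.

```python
import numpy as np

def scalar_fitness(costs):
    """Rank-based scalar fitness from the multifactorial EA literature.

    costs: array of shape (n_individuals, n_tasks); lower is better.
    Returns (factorial ranks, skill factors, scalar fitness).
    """
    costs = np.asarray(costs, dtype=float)
    n, k = costs.shape
    # Factorial rank: 1-based position of each individual within each task.
    order = np.argsort(costs, axis=0, kind="stable")
    ranks = np.empty_like(order)
    for j in range(k):
        ranks[order[:, j], j] = np.arange(1, n + 1)
    # Skill factor: the task on which an individual ranks best.
    skill_factor = np.argmin(ranks, axis=1)
    # Scalar fitness: reciprocal of the best rank across all tasks.
    fitness = 1.0 / ranks.min(axis=1)
    return ranks, skill_factor, fitness

# Hypothetical losses of 3 candidate expressions on 2 PDE instances.
costs = np.array([
    [0.10, 0.90],
    [0.50, 0.20],
    [0.30, 0.40],
])
ranks, skill, fit = scalar_fitness(costs)
print(ranks.tolist())  # [[1, 3], [3, 1], [2, 2]]
print(skill.tolist())  # [0, 1, 0]
print(fit.tolist())    # [1.0, 1.0, 0.5]
```

Because the fitness depends only on ranks, losses measured on different PDE instances never have to be put on a common scale, which is one reason a rank-based rule suits a shared population.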

The Affine Transfer module further accelerates learning by extracting statistical descriptors (mean, variance) of each task’s population and training a small multilayer perceptron (MLP) to predict scaling (α) and shifting (β) parameters. The learned affine transformation (α·x + β) is applied to symbolic trees from one task before they are evaluated on another, effectively re‑using the discovered functional skeleton while adapting constant coefficients to new parameter settings. This approach captures the intuition that PDE families often have identical solution forms with only parameter‑dependent coefficients.
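The intuition that a family shares one solution form can be checked on a small worked case. The sketch below uses the 1-D heat equation u_t = κ·u_xx on [0, 1] with zero boundary values, whose solution for the initial condition sin(πx) is exp(−κπ²t)·sin(πx). Several things here are assumptions for illustration only: the affine map is applied to the time input, α and β are chosen by hand rather than predicted by an MLP, and the helper names `source_solution` and `affine_transfer` are hypothetical. The summary does not specify which quantity x denotes in α·x + β.

```python
import numpy as np

def source_solution(x, t, kappa):
    """Analytical solution of u_t = kappa * u_xx with u(x, 0) = sin(pi x)."""
    return np.exp(-kappa * np.pi**2 * t) * np.sin(np.pi * x)

def affine_transfer(f, alpha, beta):
    """Reuse the skeleton f, feeding it an affinely transformed time input."""
    def g(x, t):
        return f(x, alpha * t + beta)
    return g

kappa_src, kappa_tgt = 0.5, 2.0

# Hand-picked transfer parameters (an MLP would predict these in NMIPS).
alpha, beta = kappa_tgt / kappa_src, 0.0
candidate = affine_transfer(
    lambda x, t: source_solution(x, t, kappa_src), alpha, beta
)

# Compare the transferred expression with the target task's exact solution.
x = np.linspace(0.0, 1.0, 21)
t = np.linspace(0.0, 0.2, 11)
X, T = np.meshgrid(x, t)
exact = source_solution(X, T, kappa_tgt)
max_err = float(np.max(np.abs(candidate(X, T) - exact)))
print(max_err < 1e-12)  # True
```

In this case the transferred expression solves the target task without any new structural search, since only the coefficient multiplying t changes between the two family members.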

Experiments cover several benchmark families: 1‑D advection‑diffusion, 2‑D heat conduction, and nonlinear reaction‑diffusion systems. For each family, 5–10 parameter configurations are treated as separate tasks. NMIPS is compared against standard GPSR, PINNs, and Neural Operators, with accuracy measured by the L2 distance to known analytical solutions. NMIPS achieves up to a 35.7% improvement in accuracy over the best baseline and remains robust under noisy observations (Gaussian noise with σ = 0.05), where it still reduces error by more than 20% relative to the other methods. Computationally, NMIPS converges roughly 3.2× faster per task because the affine transfer eliminates redundant exploration of the same functional structure.
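As a rough illustration of the evaluation protocol, the sketch below computes an L2 error against a known analytical solution and builds noisy observations with σ = 0.05. The choice of a relative (normalized) L2 error, the function name `relative_l2_error`, and the toy solution sin(πx) are assumptions; the summary does not say whether the reported metric is absolute or relative.

```python
import numpy as np

def relative_l2_error(pred, exact):
    """||pred - exact||_2 / ||exact||_2 on a shared evaluation grid."""
    pred = np.asarray(pred, dtype=float)
    exact = np.asarray(exact, dtype=float)
    return float(np.linalg.norm(pred - exact) / np.linalg.norm(exact))

x = np.linspace(0.0, 1.0, 101)
exact = np.sin(np.pi * x)   # stand-in analytical solution
pred = 1.02 * exact         # candidate with a 2% amplitude error

err = relative_l2_error(pred, exact)
print(round(err, 6))  # 0.02

# Noisy observations as described in the experiments (Gaussian, sigma = 0.05).
rng = np.random.default_rng(0)
noisy_obs = exact + rng.normal(0.0, 0.05, size=exact.shape)
```

A candidate expression would be fitted against `noisy_obs` but scored against `exact`, so that the metric reflects recovery of the true solution and not agreement with the noise.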

The authors acknowledge limitations: the current tree‑based GP representation struggles with high‑dimensional tensor operations and complex boundary conditions, and the affine transfer may overfit when the number of tasks grows large. Proposed future work includes extending the representation to graph‑based GP, integrating meta‑learning to automatically tune transfer parameters, and applying the framework to multi‑physics, multi‑scale PDE problems. The authors also suggest incorporating regularization or Bayesian optimization within the transfer module to maintain interpretability while scaling to more intricate nonlinear systems.

Overall, NMIPS offers a compelling blend of evolutionary multitasking and knowledge transfer, delivering interpretable, high‑fidelity analytical solutions for entire PDE families with significantly reduced computational effort.
