GP2F: Cross-Domain Graph Prompting with Adaptive Fusion of Pre-trained Graph Neural Networks

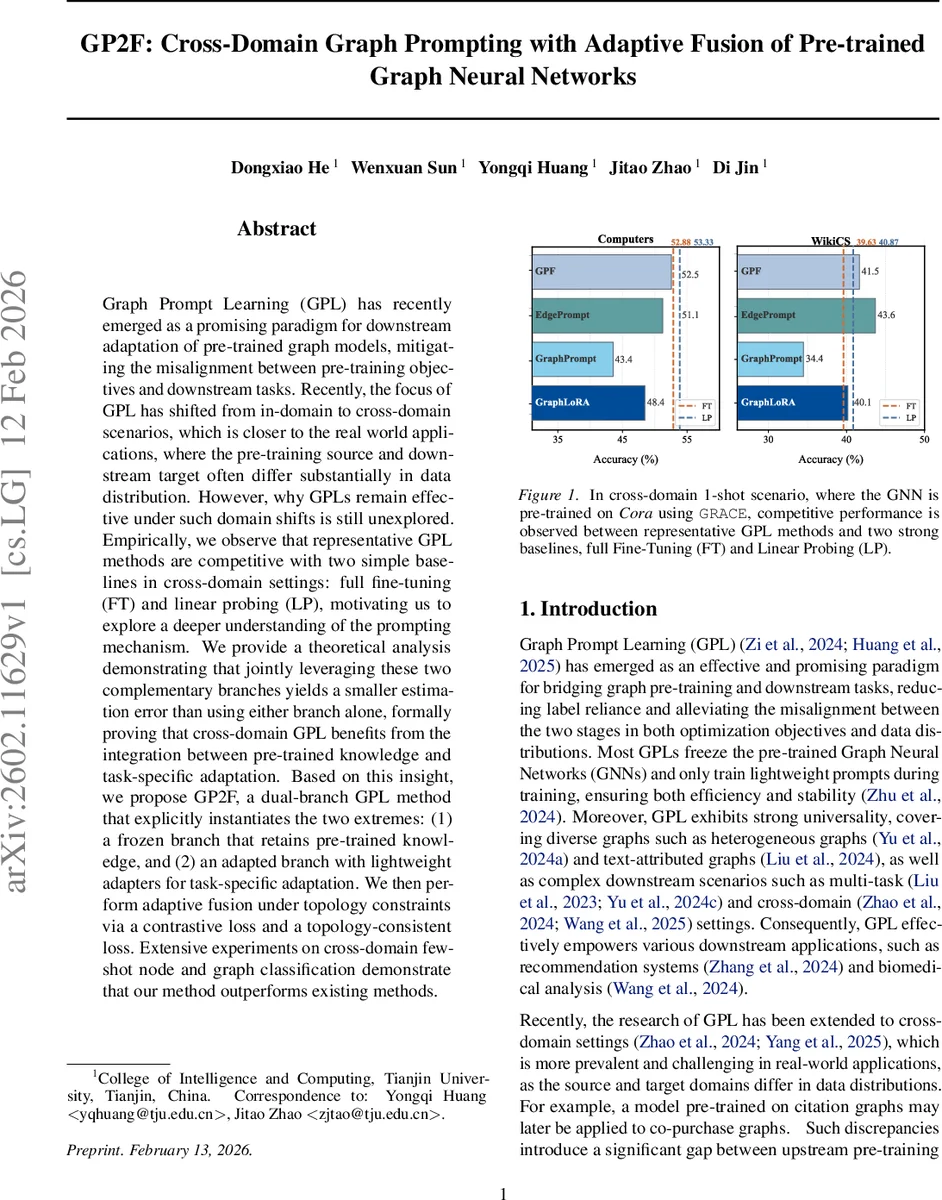

Graph Prompt Learning (GPL) has recently emerged as a promising paradigm for adapting pre-trained graph models to downstream tasks, mitigating the misalignment between pre-training objectives and those tasks. The focus of GPL has since shifted from in-domain to cross-domain scenarios, which are closer to real-world applications, where the pre-training source and the downstream target often differ substantially in data distribution. However, why GPL methods remain effective under such domain shifts is still unexplored. Empirically, we observe that representative GPL methods are competitive with two simple baselines in cross-domain settings: full fine-tuning (FT) and linear probing (LP). This motivates us to seek a deeper understanding of the prompting mechanism. We provide a theoretical analysis demonstrating that jointly leveraging these two complementary branches yields a smaller estimation error than using either branch alone, formally proving that cross-domain GPL benefits from integrating pre-trained knowledge with task-specific adaptation. Based on this insight, we propose GP2F, a dual-branch GPL method that explicitly instantiates the two extremes: (1) a frozen branch that retains pre-trained knowledge, and (2) an adapted branch with lightweight adapters for task-specific adaptation. We then perform adaptive fusion under topology constraints via a contrastive loss and a topology-consistent loss. Extensive experiments on cross-domain few-shot node and graph classification demonstrate that our method outperforms existing methods.

💡 Research Summary

Paper Overview

The paper tackles the emerging problem of cross‑domain graph prompt learning (GPL), where a graph neural network (GNN) pre‑trained on a source domain must be adapted to a target domain that may have a substantially different data distribution. While recent GPL methods have shown promising results in in‑domain settings, their effectiveness under domain shift remains poorly understood. The authors first observe that two simple baselines—Linear Probing (LP), which freezes the pre‑trained encoder and only learns a linear classifier, and Full Fine‑Tuning (FT), which updates all parameters—are surprisingly competitive with state‑of‑the‑art GPL approaches in cross‑domain few‑shot scenarios. This observation motivates a deeper theoretical investigation into why combining frozen and adapted knowledge can be beneficial.

Theoretical Contribution

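The authors model the representations produced by a frozen encoder, \(h_g\), and an adapted encoder, \(h_a\), as noisy, unbiased observations of an ideal latent representation \(z\). Writing \(\varepsilon_g\) and \(\varepsilon_a\) for the zero-mean noise in each observation:

\[
h_g = z + \varepsilon_g, \qquad h_a = z + \varepsilon_a, \qquad \mathbb{E}[\varepsilon_g] = \mathbb{E}[\varepsilon_a] = 0.
\]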
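The claim that fusing two unbiased but noisy views of the same latent representation gives a smaller estimation error than either view alone can be illustrated with a small simulation. The sketch below is not the paper's theorem or method. It assumes scalar representations, independent Gaussian noise with known standard deviations (`sigma_g`, `sigma_a`, both hypothetical values), and a fixed convex weight `alpha`. Under those assumptions the fused error is `alpha**2 * sigma_g**2 + (1 - alpha)**2 * sigma_a**2`, which is minimized by the standard inverse-variance weight.

```python
import random


def fused_mse(sigma_g, sigma_a, alpha, n=50_000, seed=0):
    """Monte Carlo estimate of E[(alpha*h_g + (1-alpha)*h_a - z)^2]."""
    rng = random.Random(seed)
    total = 0.0
    for _ in range(n):
        z = rng.gauss(0.0, 1.0)            # ideal latent value (scalar for simplicity)
        h_g = z + rng.gauss(0.0, sigma_g)  # frozen-branch observation: z plus zero-mean noise
        h_a = z + rng.gauss(0.0, sigma_a)  # adapted-branch observation: z plus zero-mean noise
        fused = alpha * h_g + (1.0 - alpha) * h_a
        total += (fused - z) ** 2
    return total / n


def optimal_alpha(sigma_g, sigma_a):
    """Inverse-variance weight on h_g (assumes independent noise terms)."""
    return sigma_a ** 2 / (sigma_g ** 2 + sigma_a ** 2)


# Hypothetical noise levels: the frozen branch is noisier than the adapted one.
sigma_g, sigma_a = 1.0, 0.5
alpha = optimal_alpha(sigma_g, sigma_a)

mse_frozen = fused_mse(sigma_g, sigma_a, alpha=1.0)   # use h_g only
mse_adapted = fused_mse(sigma_g, sigma_a, alpha=0.0)  # use h_a only
mse_fused = fused_mse(sigma_g, sigma_a, alpha=alpha)  # weighted combination

print(f"alpha* = {alpha:.3f}")
print(f"frozen only : {mse_frozen:.4f}")
print(f"adapted only: {mse_adapted:.4f}")
print(f"fused       : {mse_fused:.4f}")
```

With these assumed noise levels the closed-form errors are 1.0 (frozen only), 0.25 (adapted only), and 0.2 (fused), so the simulated fused error should fall below both single-branch errors. A learned, input-dependent fusion such as the one the paper describes would replace the fixed `alpha` used here.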
