PLOT-CT: Pre-log Voronoi Decomposition Assisted Generation for Low-dose CT Reconstruction

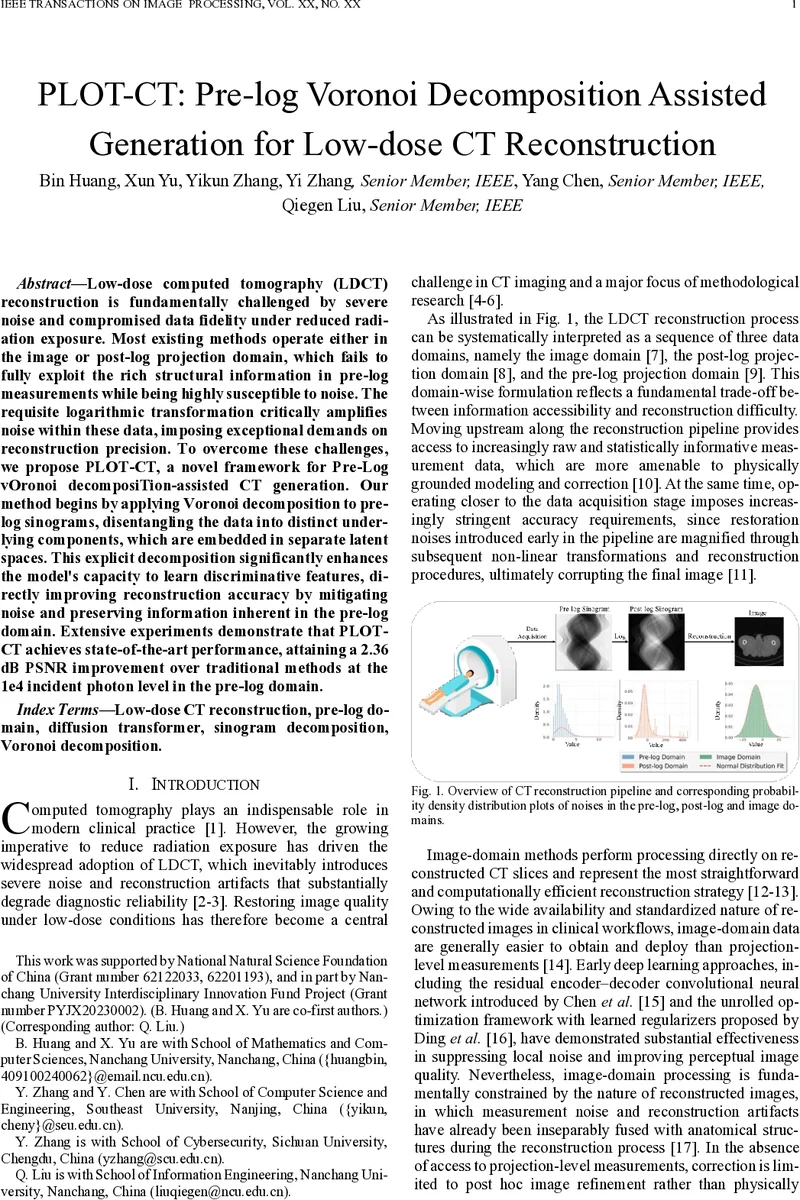

Low-dose computed tomography (LDCT) reconstruction is fundamentally challenged by severe noise and compromised data fidelity under reduced radiation exposure. Most existing methods operate in either the image domain or the post-log projection domain, and therefore fail to fully exploit the rich structural information in pre-log measurements while remaining highly susceptible to noise. The requisite logarithmic transformation critically amplifies noise within these data, imposing exceptional demands on reconstruction precision. To overcome these challenges, we propose PLOT-CT, a novel framework for Pre-Log vOronoi decomposiTion-assisted CT generation. Our method begins by applying Voronoi decomposition to pre-log sinograms, disentangling the data into distinct underlying components, which are embedded in separate latent spaces. This explicit decomposition significantly enhances the model’s capacity to learn discriminative features, directly improving reconstruction accuracy by mitigating noise and preserving information inherent in the pre-log domain. Extensive experiments demonstrate that PLOT-CT achieves state-of-the-art performance, attaining a 2.36 dB PSNR improvement over traditional methods at the 1e4 incident photon level in the pre-log domain.

💡 Research Summary

The paper introduces PLOT‑CT, a novel framework for low‑dose computed tomography (LDCT) reconstruction that operates directly on raw pre‑log sinograms. Conventional LDCT methods either work in the image domain or after logarithmic transformation (post‑log), which discards valuable statistical information and amplifies noise due to the exponential nature of the Beer‑Lambert law. PLOT‑CT tackles these fundamental issues by first decomposing the pre‑log sinogram into distinct intensity clusters using a Voronoi‑style K‑means segmentation, then processing each cluster in its own latent space through a diffusion model, and finally integrating the multi‑latent representations with a transformer‑based refinement network.
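The intensity-space clustering described above can be sketched as a one-dimensional K-means (Lloyd's algorithm): assigning each value to its nearest centroid is exactly a 1-D Voronoi partition of the intensity axis. This is a minimal illustration under stated assumptions, not the authors' implementation. The function name `kmeans_1d`, the evenly spaced initialisation, the masking scheme, and the toy photon counts are all hypothetical.

```python
def kmeans_1d(values, k=3, iters=50):
    """Lloyd's algorithm on scalars; returns (centroids, labels)."""
    lo, hi = min(values), max(values)
    # Assumed initialisation: centroids spread evenly over the value range.
    centroids = [lo + (hi - lo) * (i + 0.5) / k for i in range(k)]
    labels = [0] * len(values)
    for _ in range(iters):
        # Assignment step: nearest centroid = 1-D Voronoi cell membership.
        labels = [min(range(k), key=lambda j: abs(v - centroids[j]))
                  for v in values]
        # Update step: each centroid moves to the mean of its cell.
        updated = []
        for j in range(k):
            members = [v for v, lab in zip(values, labels) if lab == j]
            updated.append(sum(members) / len(members) if members else centroids[j])
        if updated == centroids:  # converged
            break
        centroids = updated
    return centroids, labels


# Toy pre-log intensities (photon counts) for nine detector readings.
values = [9800, 10050, 9900, 4100, 3900, 4000, 150, 200, 120]
centroids, labels = kmeans_1d(values, k=3)

# One masked copy of the data per cluster; the copies sum back to the input.
components = [[v if lab == j else 0 for v, lab in zip(values, labels)]
              for j in range(3)]
print(centroids, labels)
```

In this sketch each masked component would be the input to its own latent encoder. How the paper actually forms and embeds the components is not specified in this summary.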

Key technical contributions

- Voronoi‑based pre‑log decomposition: The raw sinogram is partitioned into three regions (high‑intensity, low‑intensity, and background). This clustering is performed in the intensity space. Because the line integral $P$ is a monotonic function of the measured intensity, this is mathematically equivalent to segmenting the line‑integral values $P$ into intervals. Within each interval the exponential scaling factor $e^{P}$ becomes approximately constant, stabilising gradient magnitudes and eliminating the severe scale‑non‑stationarity that hampers deep‑learning training on raw photon counts.

- Multi‑latent diffusion processing: For each Voronoi region a separate latent vector is extracted. A diffusion model iteratively adds and removes noise in these latent spaces, preserving global consistency while allowing region‑specific denoising. Because the diffusion operates on normalised latent vectors, the extreme dynamic range of the original sinogram no longer dominates learning.

- Transformer‑enhanced latent integration: After diffusion, a transformer fuses the three latent streams, modelling inter‑region dependencies and reconstructing a coherent sinogram. This step restores global structural information that would otherwise be lost when treating regions independently.

- Mathematical justification of noise amplification: The authors derive the relationship between a small pre‑log intensity error $\delta I$ and its post‑log counterpart, $p_{\delta} \approx \delta I\, e^{P}$. The derivation shows that even minute errors in the raw domain are amplified exponentially in $P$ after logarithmic conversion, especially for high‑attenuation paths. By confining $P$ to a narrow range within each Voronoi interval, the framework keeps the amplification factor $e^{P}$ applied to $\delta I$ approximately constant per region, thereby limiting the noise that cascades through the filtered back‑projection step.

- Extensive experimental validation: The method is evaluated on simulated phantoms and real clinical datasets at photon flux levels of $1\times10^{4}$, $5\times10^{3}$, and $1\times10^{3}$ photons per detector bin. Compared with state‑of‑the‑art image‑domain CNNs (e.g., ResNet, UNet), post‑log denoisers, and recent diffusion‑based approaches, PLOT‑CT achieves a 2.36 dB PSNR improvement at the $1\times10^{4}$ level. SSIM and RMSE metrics also show consistent gains, and visual inspection reveals markedly reduced streaking and better preservation of fine anatomical boundaries, particularly in high‑contrast bone‑soft tissue interfaces.
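The noise‑amplification relation in the contribution list can be checked numerically. The sketch below assumes the Beer‑Lambert model $I = I_0 e^{-P}$ with the incident intensity $I_0$ normalised to 1, so that a pre‑log error $\delta I$ changes the line integral by roughly $\delta I\, e^{P}$ in magnitude. The function names, the value of `delta_i`, and the sampled values of `P` are illustrative assumptions, not taken from the paper.

```python
import math


def post_log_error(delta_i, p, i0=1.0):
    """Exact change in the line integral caused by a pre-log error delta_i."""
    i = i0 * math.exp(-p)                    # Beer-Lambert: noise-free intensity
    return -math.log((i + delta_i) / i0) - p


def first_order_error(delta_i, p, i0=1.0):
    """Linearised magnitude of the post-log error: delta_i * e^P / I0."""
    return delta_i * math.exp(p) / i0


delta_i = 1e-4                               # same small pre-log error on every ray (assumed)
results = {}
for p in (0.5, 2.0, 4.0, 6.0):
    exact = abs(post_log_error(delta_i, p))
    approx = first_order_error(delta_i, p)
    results[p] = (exact, approx)
    print(f"P={p:3.1f}  exact={exact:.3e}  first-order={approx:.3e}")
```

The same pre‑log error produces a post‑log error that grows with $P$, which is why rays through strongly attenuating material are the most sensitive. Restricting $P$ to a narrow interval, as the Voronoi decomposition does, keeps this factor nearly uniform within a region.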

Limitations and future directions

- The number of Voronoi clusters (K) is fixed a priori; adaptive selection per patient or per scan could further improve performance.

- The combined diffusion‑transformer pipeline is computationally intensive, posing challenges for real‑time clinical deployment. Model compression, knowledge distillation, or efficient diffusion variants are suggested as next steps.

- Extending the framework to multimodal imaging (e.g., PET‑CT) or integrating physics‑based priors (e.g., material‑specific attenuation models) could broaden its applicability.

Overall impact

PLOT‑CT demonstrates that operating directly on the most informative raw measurement domain, when coupled with a principled decomposition of intensity ranges and modern generative models, can substantially mitigate the noise amplification that has long limited low‑dose CT. By delivering higher‑quality reconstructions at reduced radiation doses, the work offers a promising pathway toward safer clinical CT imaging without sacrificing diagnostic fidelity.
