SIGHT: Reinforcement Learning with Self-Evidence and Information-Gain Diverse Branching for Search Agent

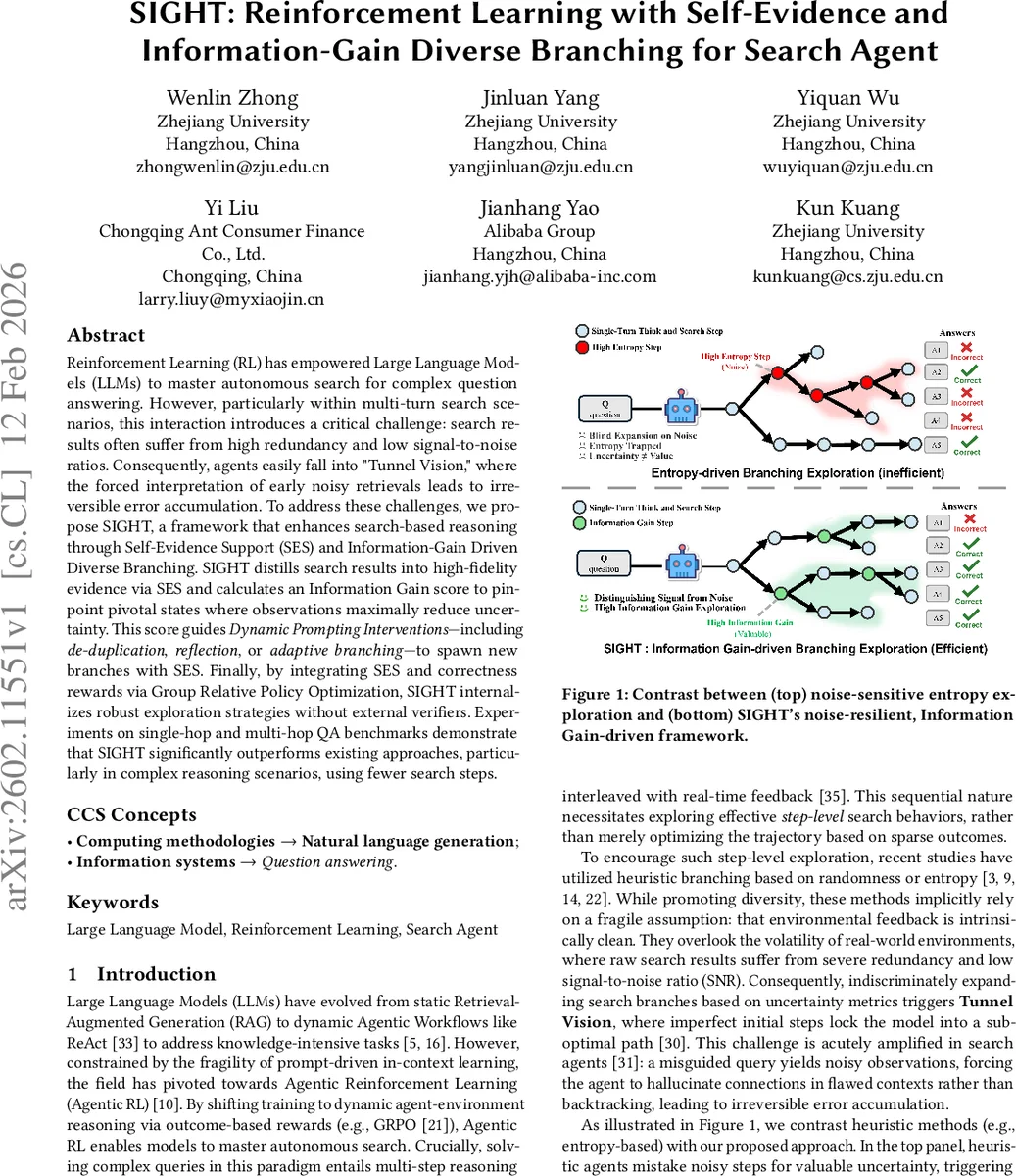

Reinforcement Learning (RL) has empowered Large Language Models (LLMs) to master autonomous search for complex question answering. However, particularly within multi-turn search scenarios, this interaction introduces a critical challenge: search results often suffer from high redundancy and low signal-to-noise ratios. Consequently, agents easily fall into “Tunnel Vision,” where the forced interpretation of early noisy retrievals leads to irreversible error accumulation. To address these challenges, we propose SIGHT, a framework that enhances search-based reasoning through Self-Evidence Support (SES) and Information-Gain Driven Diverse Branching. SIGHT distills search results into high-fidelity evidence via SES and calculates an Information Gain score to pinpoint pivotal states where observations maximally reduce uncertainty. This score guides Dynamic Prompting Interventions - including de-duplication, reflection, or adaptive branching - to spawn new branches with SES. Finally, by integrating SES and correctness rewards via Group Relative Policy Optimization, SIGHT internalizes robust exploration strategies without external verifiers. Experiments on single-hop and multi-hop QA benchmarks demonstrate that SIGHT significantly outperforms existing approaches, particularly in complex reasoning scenarios, using fewer search steps.

💡 Research Summary

The paper “SIGHT: Reinforcement Learning with Self‑Evidence and Information‑Gain Diverse Branching for Search Agent” tackles a fundamental weakness of large language model (LLM)‑driven search agents: the tendency to fall into “Tunnel Vision” when early retrievals are noisy or redundant. In multi‑turn search‑based question answering, a single poor query can generate low‑signal observations that mislead the reasoning chain, causing the agent to double‑down on an incorrect line of thought and accumulate irreversible errors. Existing approaches mitigate this by heuristic branching (entropy, perplexity, random sampling) but they assume clean feedback and therefore amplify the problem in realistic noisy environments.

SIGHT introduces two complementary mechanisms. Self‑Evidence Support (SES) acts as a per‑turn filter: after each raw <result> from the search engine, the LLM is prompted to produce a <self‑evidence> tag containing a concise, query‑relevant evidence snippet e_t while discarding irrelevant or duplicated content. Subsequent “Think” steps are conditioned on e_t rather than the raw observation, grounding the reasoning in high‑fidelity information and preventing hallucination.

The second mechanism, Information‑Gain Driven Diverse Branching, quantifies the utility of the current observation o_t using an Information‑Gain (IG) score defined as the pointwise mutual information between o_t and the ground‑truth answer y* given the history H_t: IG(o_t)=log P(y*|H_t,o_t)−log P(y*|H_t). A high IG indicates that the observation substantially reduces uncertainty about the final answer, marking a pivotal state; a low IG signals a “noise trap”. Based on the IG score, SIGHT injects one of three dynamic prompting interventions:

- De‑duplication Hint – if the current query repeats a previous one, the prompt forces the agent to reformulate the query, encouraging lexical diversity.

- Reflection Hint – when IG falls below a low threshold δ_low, the agent is asked to analyze the gap between the retrieved evidence and the goal, generate a new query targeting missing information, and possibly backtrack.

- Adaptive Branching Hint – when IG exceeds a high threshold δ_high, the current rollout is duplicated, the distilled evidence e_t is carried over, and a pivotal‑state hint nudges the new branch either to answer directly (if evidence is sufficient) or to explore remaining sub‑questions.

These interventions replace naïve state duplication with purpose‑driven branching, ensuring that exploration is both diverse and information‑rich.

Training integrates SES‑derived rewards and IG‑based rewards with the standard correctness reward within the Group Relative Policy Optimization (GRPO) framework. GRPO samples a batch of G trajectories per question, computes each trajectory’s return R_i, and normalizes advantages against the group mean and standard deviation. The combined objective encourages policies that not only produce correct final answers but also generate high‑quality evidence and make judicious branching decisions, all without an external verifier.

Empirical evaluation spans single‑hop and multi‑hop QA benchmarks (e.g., HotpotQA, ComplexWebQuestions). SIGHT consistently outperforms baselines such as ReAct, ARPO, and Tree‑GRPO in accuracy while using fewer search steps. In multi‑hop settings, the IG‑driven branching reduces total rollouts by roughly 30 % and lowers the proportion of noise‑induced errors by 45 % compared to baselines lacking SES. Ablation studies confirm that both SES and IG‑driven branching contribute additively: removing SES degrades answer quality due to hallucination, while removing IG‑driven branching leads to excessive branching and higher computational cost.

In summary, SIGHT presents a noise‑aware, information‑gain‑guided reinforcement learning framework for LLM search agents. By filtering raw retrievals into self‑evidence and by dynamically deciding when and how to branch based on quantified information gain, the method mitigates tunnel‑vision failure, improves sample efficiency, and achieves state‑of‑the‑art performance on complex reasoning tasks. This work offers a practical blueprint for building robust LLM‑tool interaction systems that can operate reliably in real‑world, noisy information environments.

Comments & Academic Discussion

Loading comments...

Leave a Comment