Budget-Constrained Agentic Large Language Models: Intention-Based Planning for Costly Tool Use

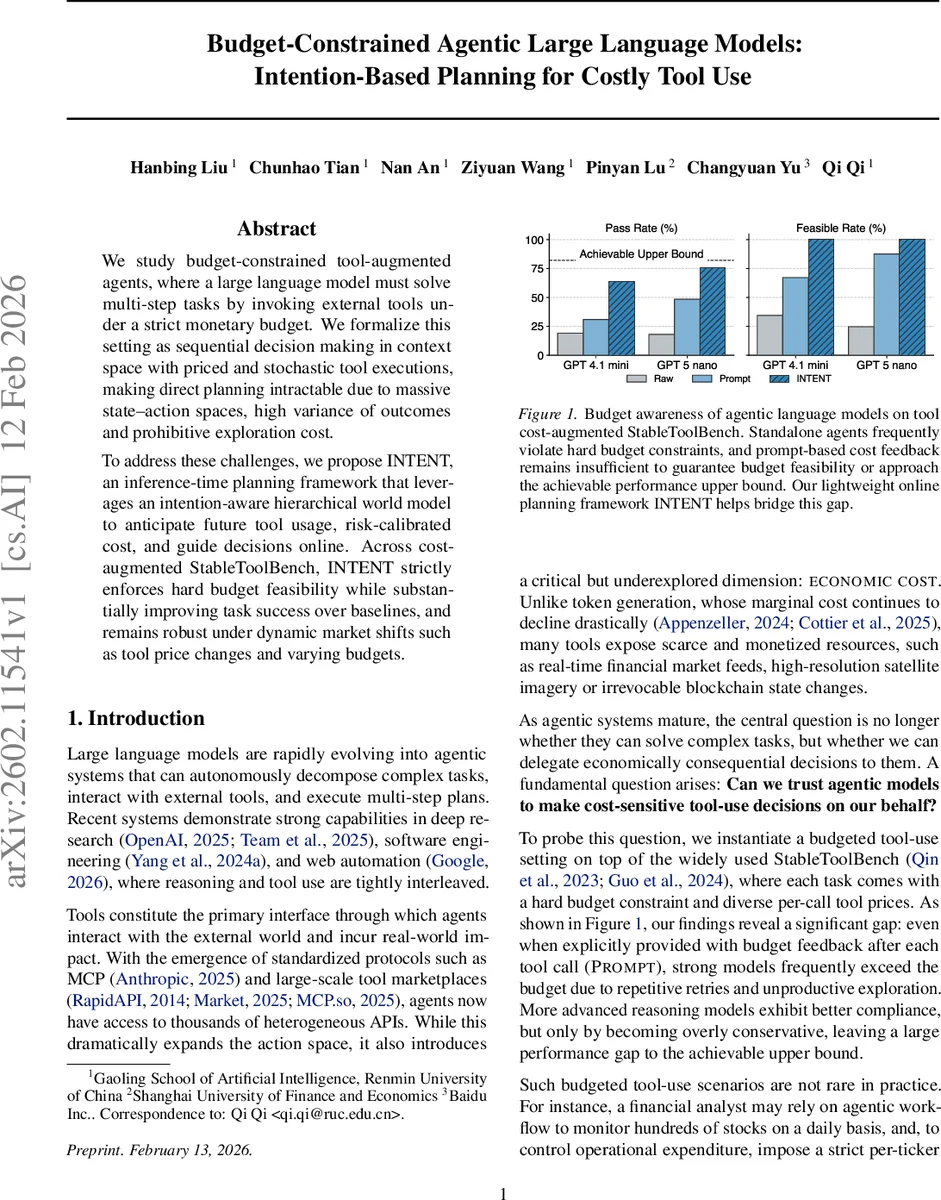

We study budget-constrained tool-augmented agents, where a large language model must solve multi-step tasks by invoking external tools under a strict monetary budget. We formalize this setting as sequential decision making in context space with priced and stochastic tool executions, which makes direct planning intractable because of massive state-action spaces, high outcome variance, and prohibitive exploration cost. To address these challenges, we propose INTENT, an inference-time planning framework that leverages an intention-aware hierarchical world model to anticipate future tool usage, estimate risk-calibrated cost, and guide decisions online. On cost-augmented StableToolBench, INTENT strictly enforces hard budget feasibility while substantially improving task success over baselines, and it remains robust under dynamic market shifts such as tool price changes and varying budgets.

💡 Research Summary

The paper tackles the emerging problem of budget‑constrained tool‑augmented agents: large language models (LLMs) that must solve multi‑step tasks by invoking external APIs or tools, each of which incurs a monetary cost, while respecting a hard budget limit. The authors formalize this setting as a sequential decision‑making problem in a growing textual context, where the state consists of the entire interaction history, actions are either tool calls (with free‑form arguments) or a termination (final answer), and each tool call incurs a stochastic observation and a known per‑call price. The reward is the task success score multiplied by an indicator that the total cost does not exceed the budget; any budget violation yields zero reward. Directly optimizing such a policy offline is infeasible because (i) the action space is unbounded due to free‑form arguments, (ii) tool outcomes are stochastic, (iii) the tool market (availability and pricing) changes across tasks, and (iv) on‑policy data collection would be prohibitively expensive.
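The reward rule described above can be written down in a few lines. The following is a minimal sketch, assuming the success score is a single number and the cost of an episode is the sum of the known per-call prices; the function name `budgeted_reward` and its parameters are illustrative and do not come from the paper.

```python
from typing import Sequence


def budgeted_reward(success_score: float,
                    call_prices: Sequence[float],
                    budget: float) -> float:
    """Task success score gated by a hard budget indicator.

    Hypothetical helper: returns the success score when the summed
    per-call prices stay within the budget, and zero otherwise.
    """
    total_cost = sum(call_prices)
    # Indicator term: any budget violation zeroes out the reward.
    return success_score if total_cost <= budget else 0.0


# Two calls costing 2 and 3 units fit a budget of 5; adding a third does not.
within = budgeted_reward(1.0, [2.0, 3.0], budget=5.0)
over = budgeted_reward(1.0, [2.0, 3.0, 1.0], budget=5.0)
```

Because the indicator multiplies the score, a successful but over-budget episode is worth exactly as much as a failed one, which is why the planner has to reason about cost before acting rather than after.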

To address these challenges, the authors propose INTENT, an inference‑time planning framework that requires no retraining of the underlying LLM. INTENT relies on a learned language‑based world model $W_\phi$ that predicts the format of tool outputs given a tool specification and arguments. Rather than exhaustive tree search, INTENT performs a lightweight single‑trajectory Monte Carlo rollout: starting from the current context, it alternates between the world model and the frozen LLM policy to simulate a future trajectory until a termination action is reached. The simulated total cost is compared against the remaining budget. If the projected cost is within budget, the proposed action is executed; otherwise, the rollout is intercepted, and a synthetic feedback observation containing the sequence of simulated future actions is fed back to the LLM, prompting it to generate a new reasoning trace and a revised action.
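The rollout-and-intercept loop described above might look roughly like the sketch below. It is an illustration under stated assumptions, not the authors' implementation: the policy and world model are plain Python callables standing in for the frozen LLM and the learned world model, and the names `simulate_cost`, `choose_action`, `FINISH`, the step cap, the revision cap, and the fallback to termination are all hypothetical.

```python
from typing import Callable, Dict, List, Tuple

Action = Tuple[str, str]  # (tool name, free-form arguments)
FINISH = "FINISH"         # hypothetical marker for the termination action

Policy = Callable[[List[str]], Action]
WorldModel = Callable[[str, str], str]


def simulate_cost(context: List[str], first_action: Action, policy: Policy,
                  world_model: WorldModel, prices: Dict[str, float],
                  max_steps: int = 8) -> Tuple[float, List[Action]]:
    """Simulate one future trajectory; return its cost and its actions."""
    sim_context = list(context)  # copy, so the real context is untouched
    action, cost, trace = first_action, 0.0, []
    for _ in range(max_steps):
        trace.append(action)
        tool, args = action
        if tool == FINISH:
            break
        cost += prices[tool]                         # known per-call price
        sim_context.append(f"call {tool}({args})")
        sim_context.append(world_model(tool, args))  # predicted observation
        action = policy(sim_context)
    return cost, trace


def choose_action(context: List[str], policy: Policy, world_model: WorldModel,
                  prices: Dict[str, float], remaining_budget: float,
                  max_revisions: int = 3) -> Action:
    """Return an action whose simulated trajectory fits the remaining budget."""
    for _ in range(max_revisions):
        action = policy(context)
        cost, trace = simulate_cost(context, action, policy, world_model, prices)
        if cost <= remaining_budget:
            return action
        # Intercept: feed the simulated plan back as synthetic feedback.
        plan = " -> ".join(tool for tool, _ in trace)
        context = context + [f"Projected plan exceeds budget: {plan}"]
    return (FINISH, "")  # assumption: terminate if no feasible revision is found


# Toy stand-ins for the LLM policy and the world model.
def toy_policy(ctx: List[str]) -> Action:
    if any(line.startswith("obs:") for line in ctx):
        return (FINISH, "answer")
    if any("exceeds budget" in line for line in ctx):
        return ("basic_search", "q")
    return ("premium_search", "q")


def toy_world_model(tool: str, args: str) -> str:
    return f"obs: structured result from {tool}"


toy_prices = {"premium_search": 5.0, "basic_search": 1.0}
picked = choose_action(["task: find X"], toy_policy, toy_world_model,
                       toy_prices, remaining_budget=2.0)
```

In the toy run, the policy first proposes the expensive tool, the simulated cost exceeds the remaining budget, and the feedback line steers it to the cheaper tool on the second attempt.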

A key innovation is the intention‑based decomposition. The authors observe that an agent’s high‑level plan is driven more by whether a tool call satisfies a semantic intention (e.g., “obtain the latest stock price”) than by the exact content of the tool’s response. By introducing a binary latent variable that indicates intention satisfaction, INTENT can factor out the high variance caused by stochastic tool outputs. This enables more reliable cost estimation with a single rollout, mitigating the over‑optimism of naïve Monte Carlo estimates.
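To see why a binary satisfaction variable simplifies cost estimation, consider the toy calculation below. It is a sketch under assumptions that are not taken from the paper: each planned call is assumed to satisfy its intention with a known probability `p_satisfied`, and an unsatisfied call is assumed to be retried up to `max_attempts` times. The names `PlannedCall` and `expected_cost` are hypothetical.

```python
from dataclasses import dataclass
from typing import Sequence


@dataclass(frozen=True)
class PlannedCall:
    tool: str
    price: float        # known per-call price
    p_satisfied: float  # assumed probability the call satisfies its intention


def expected_cost(plan: Sequence[PlannedCall], max_attempts: int = 3) -> float:
    """Expected spend when each call is retried until satisfied or capped.

    Attempt k+1 happens only if the first k attempts all failed, which has
    probability q**k with q = 1 - p_satisfied, so the expected number of
    attempts is the sum of q**k for k = 0 .. max_attempts - 1.
    """
    total = 0.0
    for call in plan:
        q = 1.0 - call.p_satisfied
        expected_attempts = sum(q ** k for k in range(max_attempts))
        total += call.price * expected_attempts
    return total


plan = [
    PlannedCall("stock_quote", price=2.0, p_satisfied=0.5),
    PlannedCall("summarize", price=1.0, p_satisfied=1.0),
]
estimate = expected_cost(plan, max_attempts=2)
```

The estimate depends only on prices and satisfaction probabilities, not on the content of any tool response, which is the sense in which the binary variable factors out output variance in this simplified setting.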

The framework is evaluated on Cost‑augmented StableToolBench, a benchmark where each task is paired with a market snapshot of heterogeneous tools and per‑call prices, and a hard budget constraint. Experiments span multiple budget levels and three dynamic market scenarios (price increases, price decreases, and the appearance of new tools). Baselines include a vanilla LLM with prompt‑based cost feedback, a hard‑coded budget‑limiting policy, and a Monte Carlo Oracle (MCO) that uses a single rollout without intention modeling.

Results show that INTENT strictly enforces the budget (budget‑violation rate ≈ 0.2 %) while achieving a significant boost in task success (average 12–18 percentage points over baselines). INTENT’s performance approaches the empirical upper bound obtained by an oracle that knows the optimal sequence of tool calls in hindsight, reaching about 92 % of that bound across settings. Moreover, INTENT adapts gracefully to market shifts: when tool prices change or new tools appear, the world model immediately incorporates the updated textual specifications, and the planner re‑evaluates feasibility without any retraining. The additional inference latency is modest (≈ 1.3× the base LLM latency, still under 2 seconds per step), making the approach practical for real‑time applications.

The authors discuss limitations: the world model predicts output structure rather than exact factual values, which can lead to cost mis‑estimation for highly volatile data sources (e.g., real‑time financial feeds). The intention decomposition relies on a well‑defined set of high‑level intents; in complex multi‑modal or poorly specified tasks, intent extraction may be ambiguous. Finally, using a single rollout may still miss rare high‑cost events; future work could explore multi‑sample Monte Carlo estimates or Bayesian uncertainty quantification.

In conclusion, INTENT provides a lightweight, inference‑only planning layer that equips powerful pretrained LLM agents with robust budget awareness, enabling economically responsible deployment of tool‑augmented AI systems. The paper opens several avenues for further research, including Bayesian world models for better uncertainty handling, automatic intent discovery, and large‑scale online evaluations in real cloud‑API billing environments.
