CausalAgent: A Conversational Multi-Agent System for End-to-End Causal Inference

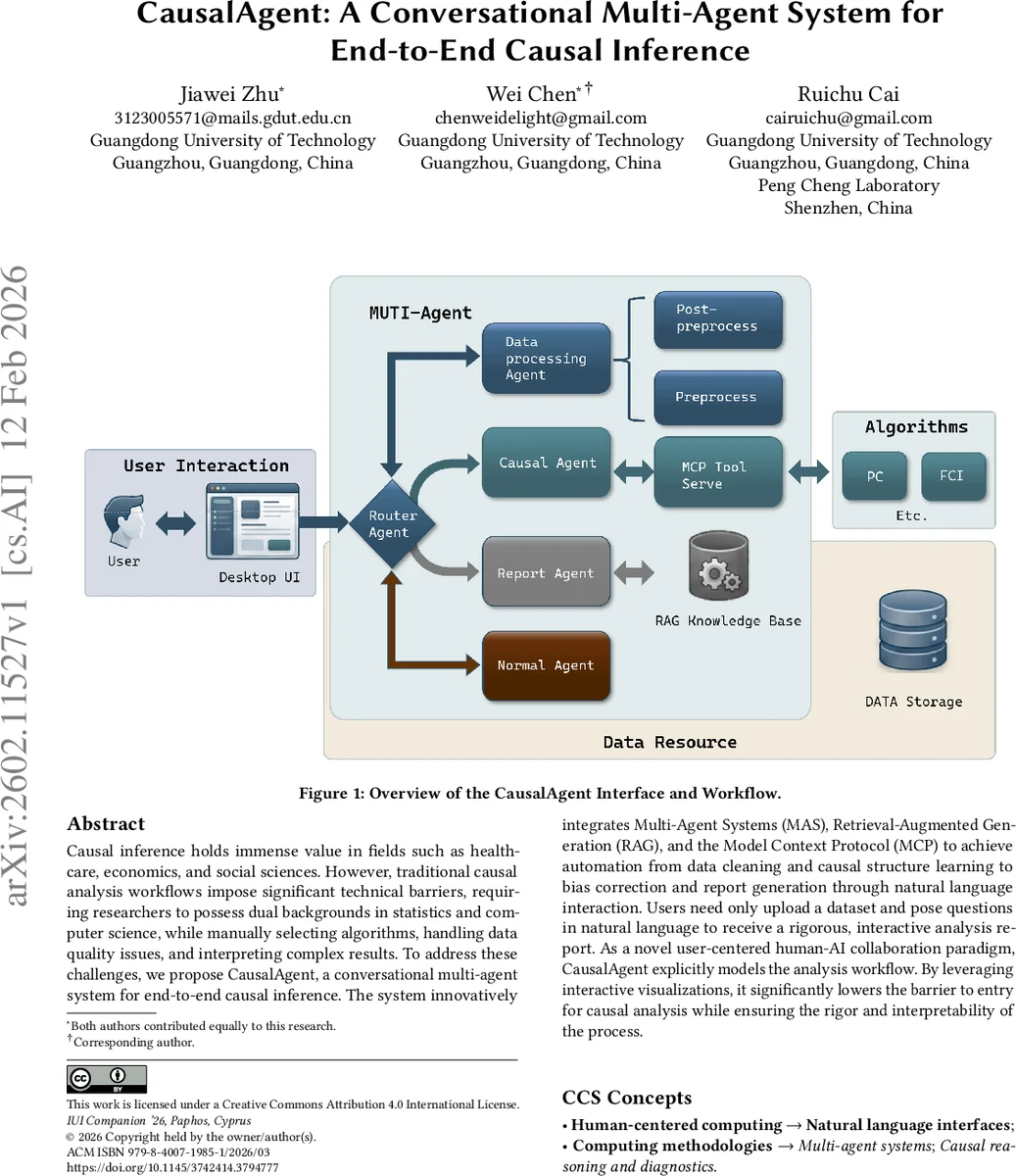

Causal inference holds immense value in fields such as healthcare, economics, and social sciences. However, traditional causal analysis workflows impose significant technical barriers, requiring researchers to possess dual backgrounds in statistics and computer science, while manually selecting algorithms, handling data quality issues, and interpreting complex results. To address these challenges, we propose CausalAgent, a conversational multi-agent system for end-to-end causal inference. The system innovatively integrates Multi-Agent Systems (MAS), Retrieval-Augmented Generation (RAG), and the Model Context Protocol (MCP) to achieve automation from data cleaning and causal structure learning to bias correction and report generation through natural language interaction. Users need only upload a dataset and pose questions in natural language to receive a rigorous, interactive analysis report. As a novel user-centered human-AI collaboration paradigm, CausalAgent explicitly models the analysis workflow. By leveraging interactive visualizations, it significantly lowers the barrier to entry for causal analysis while ensuring the rigor and interpretability of the process.

💡 Research Summary

CausalAgent is a conversational multi‑agent platform that enables end‑to‑end causal inference through natural‑language interaction. The authors identify three major barriers that prevent non‑experts from performing rigorous causal analysis: (1) the need for deep statistical knowledge, (2) the requirement to manually select and execute complex algorithms, and (3) the difficulty of interpreting results without a solid theoretical grounding. To overcome these obstacles, CausalAgent integrates three complementary technologies: a Multi‑Agent System (MAS) for modular workflow orchestration, Retrieval‑Augmented Generation (RAG) for grounding language model outputs in a curated knowledge base, and the Model Context Protocol (MCP) for a standardized interface between large language models (LLMs) and external computational tools.

The system is built on LangGraph, a graph‑based routing framework that orchestrates three specialized agents: the Data Processing Agent, the Causal Structure Learning Agent, and the Reporting Agent. Each agent operates on a shared state, preserving context across stages while keeping its own logic isolated. The Data Processing Agent validates uploaded files, computes descriptive statistics, infers variable semantics from column names, and produces a “causal‑analysis friendliness” score. It also visualizes distributions and correlation heatmaps, and detects Directed Acyclic Graph (DAG) violations using graph‑theoretic cycle detection. When cycles are found, the agent proposes edge modifications based on domain priors and statistical associations, with confidence scores supplied by the LLM.
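The cycle check described above can be illustrated with a standard depth-first search. This is a minimal sketch, not the authors' implementation: the function name `find_cycle` and the dictionary-of-lists graph format are assumptions made for illustration, and the summary does not say which cycle-detection routine CausalAgent uses.

```python
from typing import Dict, List, Optional


def find_cycle(graph: Dict[str, List[str]]) -> Optional[List[str]]:
    """Return one directed cycle as a node list, or None if the graph is a DAG."""
    WHITE, GRAY, BLACK = 0, 1, 2  # unvisited, on the current DFS path, finished
    nodes = set(graph) | {v for targets in graph.values() for v in targets}
    color = {node: WHITE for node in nodes}
    path: List[str] = []

    def visit(node: str) -> Optional[List[str]]:
        color[node] = GRAY
        path.append(node)
        for child in graph.get(node, []):
            if color[child] == GRAY:
                # Back edge to a node on the current path closes a cycle.
                return path[path.index(child):] + [child]
            if color[child] == WHITE:
                found = visit(child)
                if found:
                    return found
        path.pop()
        color[node] = BLACK
        return None

    for node in sorted(nodes):  # sorted only to make the result deterministic
        if color[node] == WHITE:
            found = visit(node)
            if found:
                return found
    return None


# Hypothetical toy graphs (not taken from the paper).
acyclic = {"A": ["B"], "B": ["C"], "C": []}
cyclic = {"A": ["B"], "B": ["C"], "C": ["A"]}

print(find_cycle(acyclic))  # None
print(find_cycle(cyclic))   # ['A', 'B', 'C', 'A']
```

Returning the offending path, rather than only a yes/no answer, gives a downstream step something concrete to act on when it proposes which edge to remove or reverse. The recursive search is adequate for small variable sets; very large graphs would call for an iterative version.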

The Causal Structure Learning Agent acts as an algorithm scheduler. It interprets user prompts and data characteristics, selects an appropriate causal discovery algorithm, and invokes it through MCP. Currently supported methods include the PC algorithm (based on conditional independence tests) and OLC‑based algorithms that can handle latent confounders. The chosen algorithm runs on a separate tool server and returns a node list and an adjacency matrix, which are stored as a checkpoint for later queries.
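To make "conditional independence-based" concrete, the sketch below shows the kind of test a PC-style algorithm applies to each pair of variables: a partial correlation followed by a Fisher z-test. It is an illustration under stated assumptions, not the tool server's code. The helper names are hypothetical, the data are simulated from a chain X → Z → Y, and only a single conditioning variable is handled.

```python
import math
import random


def pearson(x, y):
    """Sample Pearson correlation of two equal-length sequences."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / math.sqrt(sxx * syy)


def partial_corr(x, y, z):
    """Correlation of x and y after removing the linear effect of one variable z."""
    rxy, rxz, ryz = pearson(x, y), pearson(x, z), pearson(y, z)
    return (rxy - rxz * ryz) / math.sqrt((1 - rxz ** 2) * (1 - ryz ** 2))


def fisher_z_p_value(r, n, k):
    """Two-sided p-value for H0: (partial) correlation is zero.

    n is the sample size and k the number of conditioning variables.
    """
    r = max(min(r, 0.999999), -0.999999)  # keep the log finite
    z = 0.5 * math.log((1 + r) / (1 - r))
    stat = math.sqrt(n - k - 3) * abs(z)
    return math.erfc(stat / math.sqrt(2))  # equals 2 * (1 - Phi(stat))


# Simulated chain X -> Z -> Y (illustrative data, not the Sachs dataset).
random.seed(0)
n = 2000
x = [random.gauss(0, 1) for _ in range(n)]
z = [a + random.gauss(0, 1) for a in x]
y = [b + random.gauss(0, 1) for b in z]

r_marginal = pearson(x, y)
r_partial = partial_corr(x, y, z)
p_marginal = fisher_z_p_value(r_marginal, n, 0)
p_partial = fisher_z_p_value(r_partial, n, 1)

print(f"corr(X, Y)     = {r_marginal:.3f}, p = {p_marginal:.3g}")
print(f"corr(X, Y | Z) = {r_partial:.3f}, p = {p_partial:.3g}")
```

In a chain like this, X and Y are strongly correlated marginally, but the correlation largely vanishes once Z is conditioned on. A PC-style procedure uses that pattern to drop the direct X–Y edge from the skeleton.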

The Reporting Agent leverages RAG to synthesize diagnostic metrics, algorithmic choices, and the learned causal graph into a comprehensive, human‑readable report. The report details methodological decisions, key causal relationships, limitations, and suggestions for further investigation. An interactive front‑end visualizer allows users to explore the graph, manipulate nodes, and run what‑if simulations. For example, after the initial report, a user can ask “What happens to Erk if we intervene on Mek?” and the system will combine the causal model with domain priors to generate a plausible answer.
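One ingredient of answering such a what-if question is finding which variables lie downstream of the intervened node. The sketch below does this with a breadth-first search over a node list and adjacency matrix, the same output shape the structure learning step is described as returning. The edges shown are illustrative placeholders rather than the graph CausalAgent learned, the convention that `adjacency[i][j] == 1` means an edge from node i to node j is an assumption, and the domain-prior reasoning supplied by the LLM is not modeled here.

```python
from collections import deque
from typing import List, Set


def descendants(nodes: List[str], adjacency: List[List[int]], source: str) -> Set[str]:
    """Return every node reachable from `source` by following directed edges."""
    index = {name: i for i, name in enumerate(nodes)}
    start = index[source]
    seen: Set[int] = set()
    queue = deque([start])
    while queue:
        i = queue.popleft()
        for j, has_edge in enumerate(adjacency[i]):
            if has_edge and j != start and j not in seen:
                seen.add(j)
                queue.append(j)
    return {nodes[j] for j in seen}


# Illustrative toy graph (an assumption): Raf -> Mek -> Erk -> Akt.
nodes = ["Raf", "Mek", "Erk", "Akt"]
adjacency = [
    [0, 1, 0, 0],  # Raf -> Mek
    [0, 0, 1, 0],  # Mek -> Erk
    [0, 0, 0, 1],  # Erk -> Akt
    [0, 0, 0, 0],  # Akt has no outgoing edges
]

affected = descendants(nodes, adjacency, "Mek")
print(sorted(affected))  # ['Akt', 'Erk']
```

Under this toy graph, an intervention on Mek can only propagate to Erk and Akt, while Raf, being upstream, is unaffected. A reachability check of this kind identifies which variables may change but says nothing about effect sizes.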

Implementation robustness is achieved through four strategies: (1) constructing a vectorized knowledge base from causal textbooks and seminal papers to anchor LLM outputs, (2) supervised fine‑tuning (SFT) of the base model to improve instruction following and statistical‑to‑natural‑language translation, (3) meticulous prompt engineering together with MCP to decouple reasoning from execution, and (4) employing GLM‑4.6 as the backbone LLM for its strong reasoning and task‑distribution capabilities.
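The retrieval step behind strategy (1) can be sketched as follows. This is a deliberately simplified stand-in: it ranks passages with bag-of-words cosine similarity instead of learned embeddings, the three-passage knowledge base is a hypothetical placeholder for the textbook and paper corpus, and the function names and prompt wording are assumptions.

```python
import math
import re
from collections import Counter
from typing import List


def tokenize(text: str) -> List[str]:
    """Lowercase the text and split it into alphabetic tokens."""
    return re.findall(r"[a-z]+", text.lower())


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two term-count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


# Hypothetical miniature knowledge base (placeholder passages).
KNOWLEDGE_BASE = [
    "The PC algorithm removes edges between variables that are conditionally independent.",
    "A directed acyclic graph contains no directed cycles.",
    "A confounder is a common cause of both the treatment and the outcome.",
]


def retrieve(query: str, documents: List[str], top_k: int = 1) -> List[str]:
    """Return the top_k passages most similar to the query."""
    q = Counter(tokenize(query))
    ranked = sorted(documents, key=lambda d: cosine(q, Counter(tokenize(d))), reverse=True)
    return ranked[:top_k]


def build_prompt(question: str, documents: List[str]) -> str:
    """Assemble a prompt that asks the model to answer from retrieved context only."""
    context = "\n".join(f"- {p}" for p in retrieve(question, documents, top_k=2))
    return f"Answer using only the context below.\n\nContext:\n{context}\n\nQuestion: {question}"


question = "How does the PC algorithm decide which edges to remove?"
print(build_prompt(question, KNOWLEDGE_BASE))
```

Prepending retrieved passages to the prompt is what "anchoring" refers to here: the model is steered toward statements that can be traced back to the knowledge base rather than relying solely on its parametric memory.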

A demonstration on the widely used Sachs protein‑signaling dataset showcases the full pipeline. The system automatically profiles the data, selects the PC algorithm for network reconstruction, generates a causal diagram that correctly identifies Akt and Pka as master regulators, and produces a report recommending them as drug‑target priorities. A follow‑up query about intervening on Mek is answered by synthesizing the learned graph with biological priors, illustrating the system’s interactive refinement capability.

The authors acknowledge current limitations: the existing algorithm library only partially addresses latent confounders, and neither quantitative causal effect estimation nor counterfactual reasoning is supported yet. They also note the need for expert‑in‑the‑loop feedback in high‑risk domains such as healthcare and finance to ensure safety and reliability. Future work will expand the algorithmic repertoire to include counterfactual modules and develop expert‑feedback mechanisms for domain adaptation.

In summary, CausalAgent demonstrates how a well‑engineered combination of MAS, RAG, and MCP can transform causal inference from a technically demanding, multi‑step process into an accessible conversational experience. By automating data cleaning, structure learning, bias correction, and report generation while preserving rigor and interpretability, the system lowers the entry barrier for researchers across disciplines and sets a new paradigm for human‑AI collaboration in causal discovery.
