How Smart Is Your GUI Agent? A Framework for the Future of Software Interaction

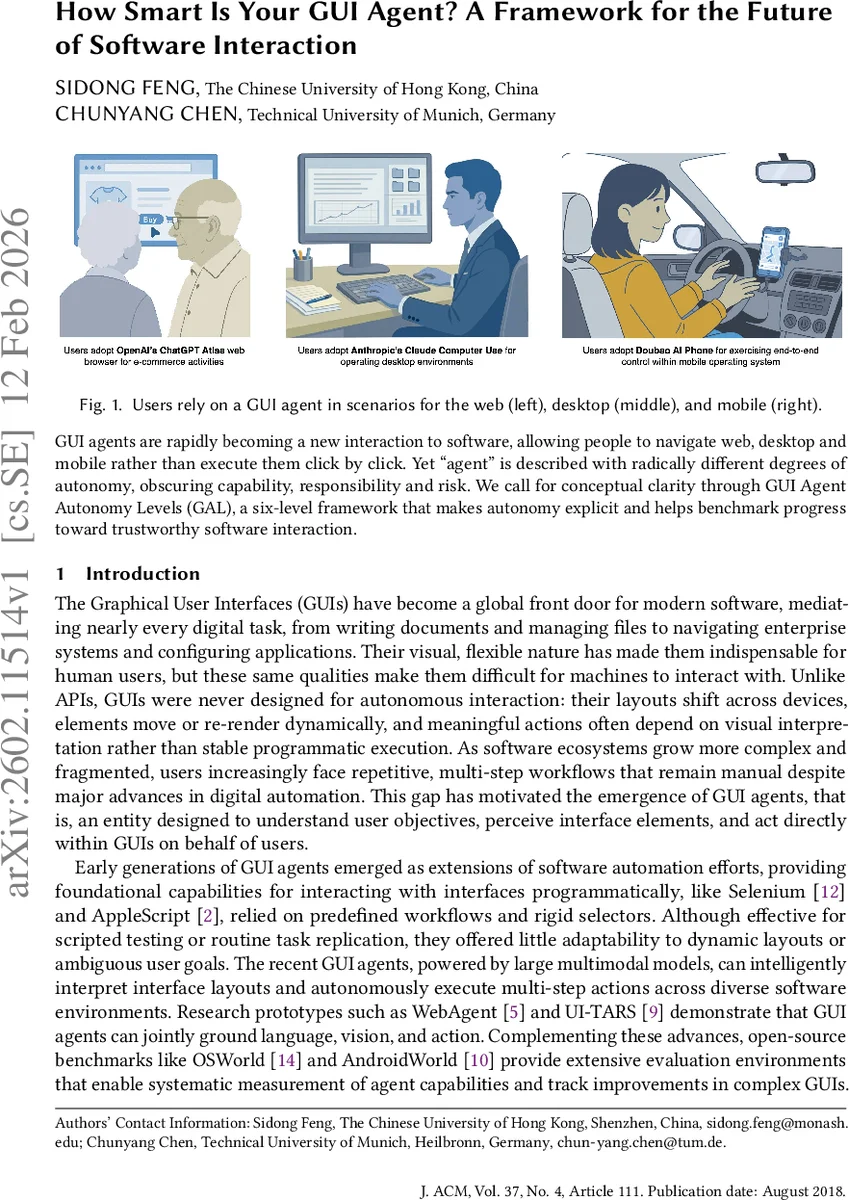

GUI agents are rapidly becoming a new way of interacting with software, allowing people to delegate tasks across web, desktop, and mobile interfaces rather than execute them click by click. Yet the term “agent” is applied to systems with radically different degrees of autonomy, obscuring capability, responsibility, and risk. We call for conceptual clarity through GUI Agent Autonomy Levels (GAL), a six-level framework that makes autonomy explicit and helps benchmark progress toward trustworthy software interaction.

💡 Research Summary

The paper “How Smart Is Your GUI Agent? A Framework for the Future of Software Interaction” surveys the rapidly emerging field of graphical‑user‑interface (GUI) agents—software entities that can perceive, reason about, and act directly within visual user interfaces. While early automation tools such as Selenium, AppleScript, and UI Automator relied on static selectors and scripted step‑by‑step instructions, the latest generation of agents powered by large multimodal models (e.g., WebAgent, UI‑TARS, Claude Computer Use, ChatGPT Atlas, Manus) can interpret screen layouts, understand natural‑language goals, and execute multi‑step workflows across heterogeneous applications. Open‑source benchmarks such as OSWorld and AndroidWorld provide systematic evaluation environments, but the community lacks a shared vocabulary for describing how autonomous a GUI agent truly is.

To address this gap, the authors introduce the GUI Agent Autonomy Levels (GAL), a six‑level taxonomy inspired by the SAE vehicle autonomy ladder. Each level is defined with concrete examples and a clear boundary of agency:

- Level 0 – No Automation: The user performs every click, scroll, and keystroke; the system offers no assistance.

- Level 1 – Minimal Assistance: The agent provides contextual hints, auto‑completions, or tooltips (e.g., Gmail Smart Compose) but never initiates actions.

- Level 2 – Basic Automation: The agent executes single, explicitly commanded actions without any reasoning (e.g., Selenium scripts).

- Level 3 – Conditional Automation: The agent receives a goal, plans a deterministic multi‑step sequence, and can handle simple conditionals; it pauses for user confirmation when unexpected situations arise (e.g., UiPath, Automation Anywhere, early LLM‑driven agents).

- Level 4 – High Automation: The agent autonomously orchestrates complex, cross‑application workflows (e.g., extracting CRM data, building an Excel chart, and emailing the result to a manager). It asks for clarification only in rare edge cases and otherwise completes the task end‑to‑end.

- Level 5 – Full Automation: A universal, domain‑agnostic agent that can interpret underspecified requests, learn new GUIs on the fly, reason over unseen interfaces, and proactively optimize execution without any human intervention. This represents the ultimate digital collaborator.
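
The six levels above can be encoded as an ordered enumeration so that tooling can compare and filter agents by level. The following is a minimal sketch, not something taken from the paper: the names `GAL`, `agent_initiates_actions`, `plans_multi_step`, and `HUMAN_ROLE` are hypothetical, and the role descriptions are paraphrases of the level definitions in this summary.

```python
from enum import IntEnum


class GAL(IntEnum):
    """GUI Agent Autonomy Levels as listed in the summary (names are illustrative)."""
    NO_AUTOMATION = 0
    MINIMAL_ASSISTANCE = 1
    BASIC_AUTOMATION = 2
    CONDITIONAL_AUTOMATION = 3
    HIGH_AUTOMATION = 4
    FULL_AUTOMATION = 5


# Paraphrased from the level definitions; an assumption, not the paper's wording.
HUMAN_ROLE = {
    GAL.NO_AUTOMATION: "performs every action",
    GAL.MINIMAL_ASSISTANCE: "performs every action, aided by hints",
    GAL.BASIC_AUTOMATION: "explicitly commands each action",
    GAL.CONDITIONAL_AUTOMATION: "sets the goal and confirms unexpected steps",
    GAL.HIGH_AUTOMATION: "sets the goal and clarifies rare edge cases",
    GAL.FULL_AUTOMATION: "no intervention required",
}


def agent_initiates_actions(level: GAL) -> bool:
    # At Levels 0 and 1 the user performs all actions; Level 1 only offers hints.
    return level >= GAL.BASIC_AUTOMATION


def plans_multi_step(level: GAL) -> bool:
    # Goal-driven multi-step planning first appears at Level 3.
    return level >= GAL.CONDITIONAL_AUTOMATION


if __name__ == "__main__":
    for level in GAL:
        print(level.value, level.name, "->", HUMAN_ROLE[level])
```

Using `IntEnum` keeps the levels ordered, so a comparison such as `level >= GAL.CONDITIONAL_AUTOMATION` reads the same way the taxonomy is described in prose.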

The paper surveys the current state of the art, noting that most deployed agents in industry and research sit between Levels 1 and 3, with a few prototypes approaching Level 4. Even the most advanced systems (Claude Computer Use, ChatGPT Atlas, Manus) are still constrained by limited long‑term memory and fragile visual grounding, and they need frequent human‑in‑the‑loop oversight when workflows deviate from expectations. Consequently, trust, security, and privacy remain open challenges.

Looking forward, the authors identify four broad research thrusts required to progress toward Levels 4 and 5:

- Usability – Reduce configuration overhead so agents can be “plug‑and‑play” across applications, leveraging improved multimodal perception to generalize without extensive scripting.

- Security – Enforce strict permission boundaries, transparent action logs, and sandboxed execution to prevent malicious or unintended behavior.

- Privacy – Minimize data exposure, support on‑device processing, and give users fine‑grained control over what information is stored or shared.

- Personalization – Continuously learn individual user preferences, routines, and contextual states (e.g., focus mode, meetings) to tailor assistance and reduce friction.
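
To make the Security thrust concrete, the sketch below shows one way a permission boundary and a transparent action log could fit together. It is an illustration under stated assumptions rather than a design from the paper: the `ActionGate` class, its fields, and the action names are hypothetical, and sandboxed execution is not covered.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass
class ActionGate:
    """Hypothetical gate: checks each requested action against an allowlist
    and records every request, permitted or not, in an append-only log."""
    allowed: frozenset
    log: list = field(default_factory=list)

    def request(self, action: str, target: str) -> bool:
        permitted = action in self.allowed
        # Log denied requests too, so the user can audit what the agent attempted.
        self.log.append({
            "time": datetime.now(timezone.utc).isoformat(),
            "action": action,
            "target": target,
            "permitted": permitted,
        })
        return permitted


if __name__ == "__main__":
    gate = ActionGate(allowed=frozenset({"click", "type"}))
    gate.request("click", "Save button")        # inside the permission boundary
    gate.request("delete_file", "report.xlsx")  # outside it, so denied
    for entry in gate.log:
        print(entry["action"], entry["target"], entry["permitted"])
```

An agent runtime would call `request` before performing any GUI action and skip the action when it returns `False`; the log then gives the user a complete record of attempted and executed steps.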

The authors conclude that achieving Level 5 autonomy will demand breakthroughs not only in perception, reasoning, and memory but also in interdisciplinary integration of software engineering, natural language processing, human‑computer interaction, and computer vision. The GAL framework, they argue, provides a common language for benchmarking progress, surfacing scientific challenges, and guiding responsible development of trustworthy GUI agents.
