Differentially Private and Communication Efficient Large Language Model Split Inference via Stochastic Quantization and Soft Prompt

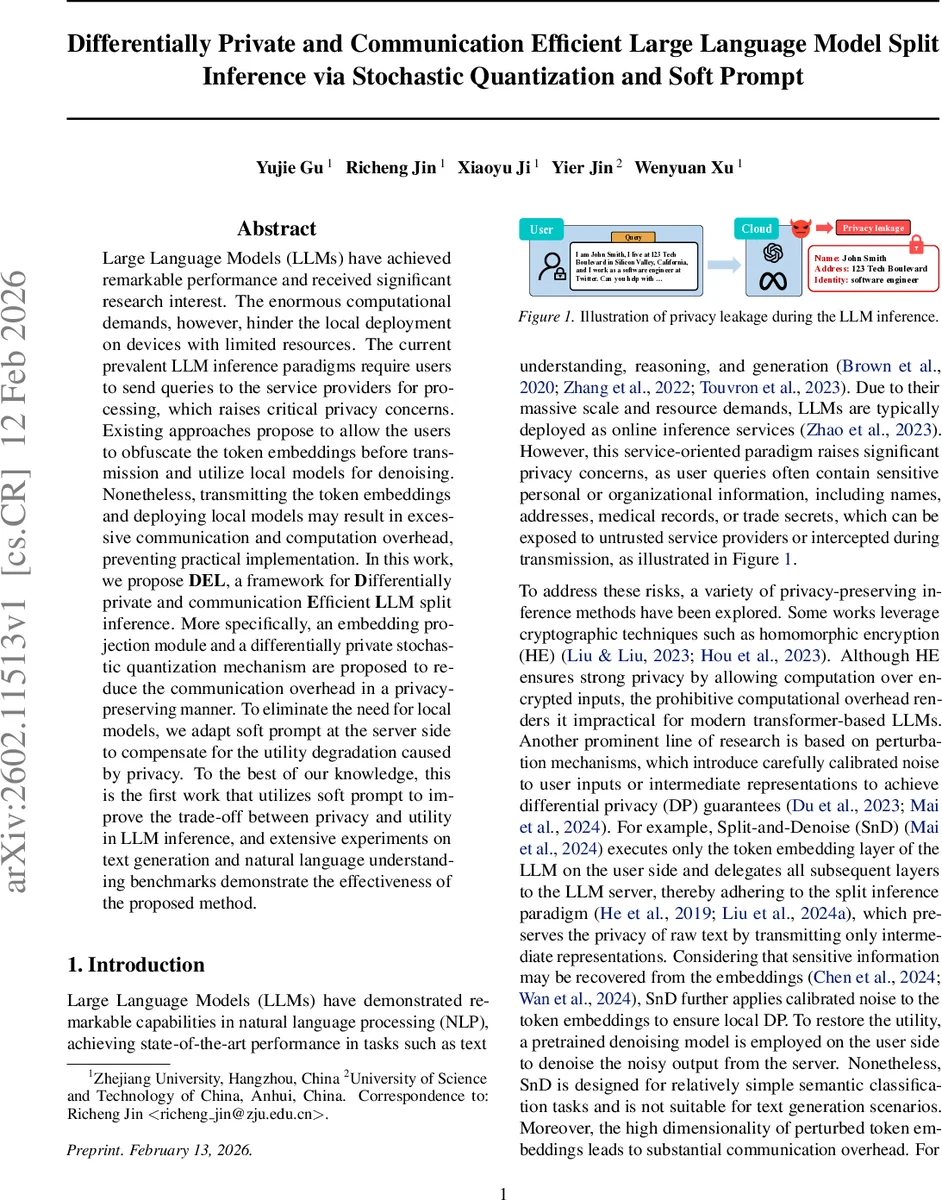

Large Language Models (LLMs) have achieved remarkable performance and received significant research interest. The enormous computational demands, however, hinder the local deployment on devices with limited resources. The current prevalent LLM inference paradigms require users to send queries to the service providers for processing, which raises critical privacy concerns. Existing approaches propose to allow the users to obfuscate the token embeddings before transmission and utilize local models for denoising. Nonetheless, transmitting the token embeddings and deploying local models may result in excessive communication and computation overhead, preventing practical implementation. In this work, we propose \textbf{DEL}, a framework for \textbf{D}ifferentially private and communication \textbf{E}fficient \textbf{L}LM split inference. More specifically, an embedding projection module and a differentially private stochastic quantization mechanism are proposed to reduce the communication overhead in a privacy-preserving manner. To eliminate the need for local models, we adapt soft prompt at the server side to compensate for the utility degradation caused by privacy. To the best of our knowledge, this is the first work that utilizes soft prompt to improve the trade-off between privacy and utility in LLM inference, and extensive experiments on text generation and natural language understanding benchmarks demonstrate the effectiveness of the proposed method.

💡 Research Summary

The paper introduces DEL (Differentially Private and Communication Efficient Large language model split inference), a novel framework that enables privacy‑preserving LLM inference while dramatically reducing communication overhead. Traditional split‑inference methods such as Split‑and‑Denoise (SnD) or InferDPT protect user queries by adding noise to high‑dimensional token embeddings and then rely on a local denoising model or a lightweight LLM to recover utility. These approaches suffer from two major drawbacks: (1) the high dimensionality of embeddings makes differential privacy (DP) costly, requiring large noise that degrades performance, and (2) the need for a local denoising model imposes substantial computational and memory burdens on resource‑constrained devices.

DEL tackles both issues with a three‑stage pipeline. First, a pretrained encoder‑decoder pair (g_e, g_d) is used to project the original token embeddings (dimension b) into a lower‑dimensional latent space (dimension d < b). This projection reduces the amount of data that must be protected and transmitted. Second, the latent vectors are privatized using a Gaussian mechanism that satisfies µ‑GDP, followed by a stochastic n‑bit quantization process. The quantizer maps each coordinate to one of 2ⁿ uniformly spaced levels in a range controlled by a scaling parameter A. By sampling a binomial variable for each coordinate, the quantization is inherently stochastic, providing additional indistinguishability beyond the Gaussian noise. The combined mechanism remains µ‑GDP, and the communication cost drops from O(b·T) floats to O(n·d·T) bits, where T is the token length. For example, with b = 4096, d = 256, n = 8, a 1024‑token query shrinks from ~16 MB to a few hundred kilobytes.

The third stage eliminates the need for any client‑side denoiser. On the server, the quantized latent vectors are decoded back to the original embedding dimension using g_d, and a learned soft prompt is prepended to these reconstructed embeddings before feeding them into the frozen LLM. The soft prompt consists of trainable continuous embeddings that are optimized on a downstream task dataset (both generation and NLU) to compensate for the utility loss introduced by DP noise and quantization. Because only the prompt parameters are updated, the approach incurs negligible extra computation and memory on the server, while preserving the LLM’s full capacity.

The authors provide a formal privacy analysis showing that the entire pipeline satisfies µ‑GDP, with the quantization scale A and the dimensionality d directly influencing the privacy budget ε. They also conduct extensive experiments on multiple state‑of‑the‑art LLMs (Llama‑3‑8B, Llama‑2‑13B, Falcon‑40B) across text generation benchmarks (OpenAI‑Eval, AlpacaEval) and natural language understanding suites (GLUE, SuperGLUE). DEL consistently outperforms prior DP split‑inference baselines: at ε = 1.0 it achieves BLEU/ROUGE scores 20 % higher than SnD while using less than 20 % of the communication bandwidth, and at ε = 4.0 it approaches the performance of the non‑private baseline with only a fraction of the data transmitted. Moreover, inference latency is reduced by up to 1.8× compared to methods that require a local denoising model.

The paper also discusses limitations and future directions. The current encoder‑decoder pair is static; adaptive or meta‑learned projection modules could improve cross‑domain robustness. Selecting the optimal (n, A, d) trade‑off remains a multi‑objective optimization problem that could benefit from automated tuning. Finally, while the soft prompt resides on the server, it could become an attack surface; protecting the prompt itself with additional DP or encryption is an open research question.

In summary, DEL presents the first LLM split‑inference framework that simultaneously guarantees differential privacy, achieves substantial communication savings, and restores utility without any client‑side heavy computation. By leveraging stochastic quantization and server‑side soft prompting, it paves the way for practical, privacy‑preserving LLM services on devices with limited resources.

Comments & Academic Discussion

Loading comments...

Leave a Comment