An Educational Human Machine Interface Providing Request-to-Intervene Trigger and Reason Explanation for Enhancing the Driver's Comprehension of ADS's System Limitations

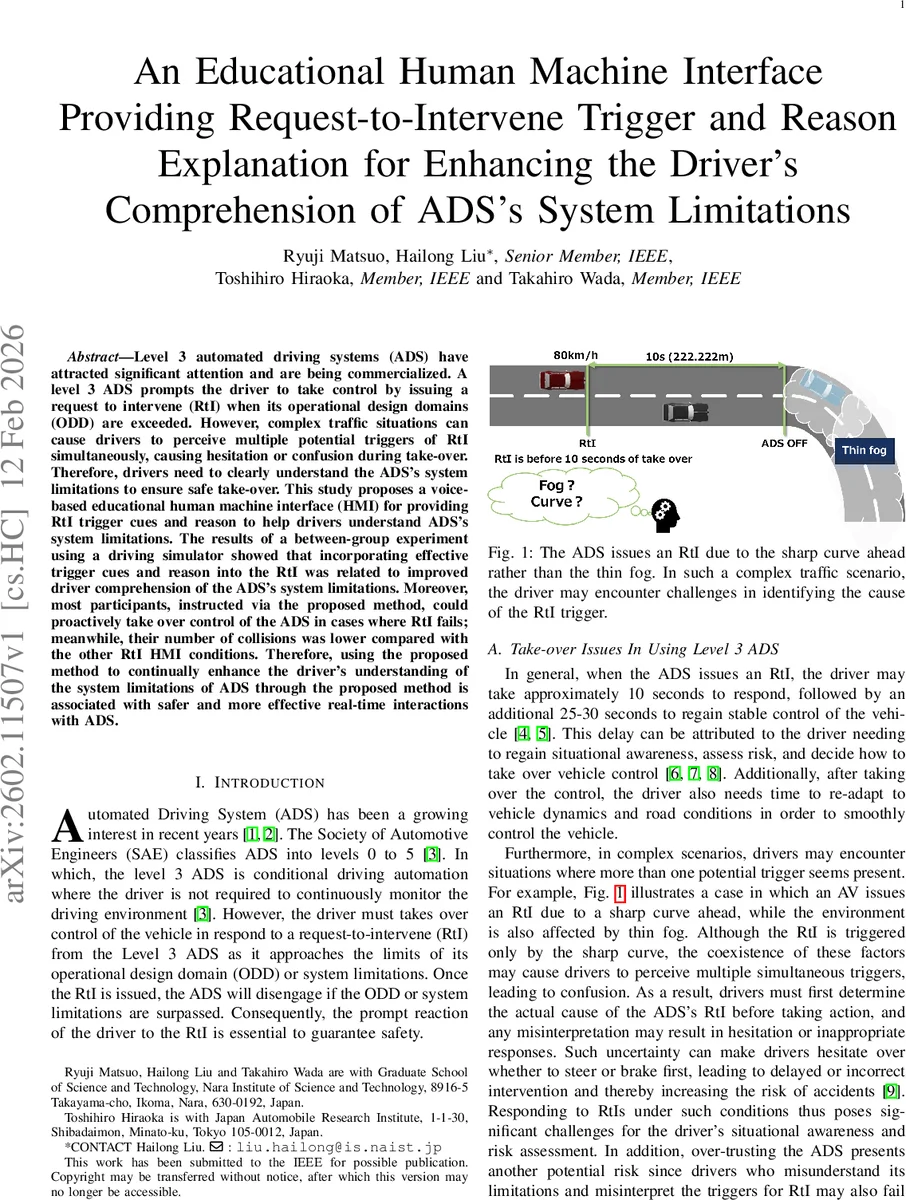

Level 3 automated driving systems (ADS) have attracted significant attention and are being commercialized. A level 3 ADS prompts the driver to take control by issuing a request to intervene (RtI) when its operational design domain (ODD) is exceeded. However, complex traffic situations can cause drivers to perceive multiple potential triggers of RtI simultaneously, causing hesitation or confusion during take-over. Therefore, drivers need to clearly understand the ADS’s system limitations to ensure safe take-over. This study proposes a voice-based educational human machine interface (HMI) that provides RtI trigger cues and reasons to help drivers understand the ADS’s system limitations. The results of a between-group experiment using a driving simulator showed that incorporating effective trigger cues and reasons into the RtI was related to improved driver comprehension of the ADS’s system limitations. Moreover, most participants instructed via the proposed method could proactively take over control from the ADS in cases where the RtI failed, and they had fewer collisions than participants in the other RtI HMI conditions. Therefore, continually enhancing the driver’s understanding of the ADS’s system limitations through the proposed method is associated with safer and more effective real-time interactions with the ADS.

💡 Research Summary

The paper addresses a critical safety challenge in Level 3 automated driving systems (ADS): drivers often experience confusion and hesitation when a request‑to‑intervene (RtI) is issued, especially in complex traffic scenarios where multiple potential triggers (e.g., a sharp curve and dense fog) coexist. Existing human‑machine interfaces (HMIs) typically provide auditory, visual, or haptic alerts that indicate that an RtI is occurring, but they rarely explain why the system is requesting control handover or what specific system limitation has been exceeded. This lack of explanatory information can lead to misinterpretation, over‑trust, or delayed take‑over, all of which increase crash risk.

To remedy this gap, the authors propose a voice‑based educational HMI that delivers two distinct pieces of information: (1) a trigger cue that briefly (≤ 4 seconds) names the specific condition that caused the RtI (e.g., “Thick fog, take over!”), and (2) a reason explanation that follows 5 seconds after the driver re‑engages the ADS, describing the underlying limitation in more detail (e.g., “The reason for RtI is that it is difficult to recognize lanes and vehicles ahead in thick fog when visibility is less than 40 m”). The design builds on prior findings that auditory cues elicit faster reactions than visual cues, while also addressing the need for continuous education to keep the driver’s mental model of the ADS up‑to‑date.
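The timing of the two messages can be sketched in a few lines of Python. This is a minimal illustration, not the authors' implementation: the function and class names are hypothetical, and it assumes the trigger cue is played at the moment the RtI is issued, which the summary implies but does not state. The 10-second RtI lead time, the 4-second cue limit, and the 5-second reason delay are the values reported in this summary.

```python
from dataclasses import dataclass

# Timing values taken from the summary; everything else is illustrative.
RTI_LEAD_TIME_S = 10.0    # RtI is issued 10 s before the ODD is exceeded
TRIGGER_CUE_MAX_S = 4.0   # the trigger cue lasts at most 4 s
REASON_DELAY_S = 5.0      # the reason follows 5 s after the driver re-engages the ADS


@dataclass(frozen=True)
class VoiceMessage:
    kind: str        # "trigger_cue" or "reason" (hypothetical labels)
    start_s: float   # playback start time on the scenario clock, in seconds
    text: str


def schedule_messages(odd_exit_s, reengage_s, cue_text, reason_text, cue_duration_s):
    """Return the two voice messages for one RtI event, in playback order."""
    if cue_duration_s > TRIGGER_CUE_MAX_S:
        raise ValueError("trigger cue exceeds the 4 s limit")
    # Assumption: the cue starts exactly when the RtI is issued.
    rti_s = odd_exit_s - RTI_LEAD_TIME_S
    if reengage_s < rti_s:
        raise ValueError("re-engagement cannot precede the RtI")
    return [
        VoiceMessage("trigger_cue", rti_s, cue_text),
        VoiceMessage("reason", reengage_s + REASON_DELAY_S, reason_text),
    ]


messages = schedule_messages(
    odd_exit_s=60.0,
    reengage_s=90.0,
    cue_text="Thick fog, take over!",
    reason_text=(
        "The reason for RtI is that it is difficult to recognize lanes and "
        "vehicles ahead in thick fog when visibility is less than 40 m"
    ),
    cue_duration_s=2.5,
)
for message in messages:
    print(f"{message.start_s:6.1f} s  {message.kind}: {message.text}")
```

Keeping the reason out of the take-over window is the point of the split: only the short cue competes for the driver's attention while control is being handed over.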

A between‑group experiment was conducted with 45 participants (aged 22‑28, no prior ADS experience) randomly assigned to three conditions: (a) Trigger + Reason (both cue and explanation), (b) Trigger‑only, and (c) No‑Cue (baseline). Participants used a high‑fidelity driving simulator (UC‑win/Road) equipped with a force‑feedback wheel, pedal set, three 55‑inch displays, a heads‑up display showing ADS status, and a speaker for the voice prompts. The simulated vehicle operated a Level 3 ADS that would issue an RtI 10 seconds before exceeding its operational design domain (ODD). Scenarios combined challenging elements such as sharp curves, fog, and other environmental hazards to create ambiguous trigger situations.

Four research questions guided the study: (RQ1) whether the added information would increase driver workload; (RQ2) whether it would improve drivers’ understanding of ADS limitations; (RQ3) whether repeated exposure would lead to earlier proactive take‑overs before ADS deactivation and reduce accidents; and (RQ4) whether a more accurate mental model correlates with proactive behavior.

Key outcome measures included NASA‑TLX workload scores, a questionnaire assessing comprehension of system limitations, the timing of proactive take‑overs (i.e., whether drivers took over control before the ADS actually disengaged), and the number of collisions recorded.
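For readers unfamiliar with NASA‑TLX, the sketch below shows how a workload score is commonly computed in the unweighted ("raw TLX") variant: the mean of six subscale ratings on a 0 to 100 scale. The summary does not say which variant the authors used, so treat this as an assumption; the function name and the example ratings are hypothetical and are not data from the study.

```python
# The six standard NASA-TLX subscales.
TLX_SUBSCALES = (
    "mental_demand",
    "physical_demand",
    "temporal_demand",
    "performance",
    "effort",
    "frustration",
)


def raw_tlx(ratings):
    """Unweighted NASA-TLX score: mean of the six subscale ratings (0-100)."""
    missing = [name for name in TLX_SUBSCALES if name not in ratings]
    if missing:
        raise ValueError(f"missing subscales: {missing}")
    for name in TLX_SUBSCALES:
        if not 0 <= ratings[name] <= 100:
            raise ValueError(f"{name} must be between 0 and 100")
    return sum(ratings[name] for name in TLX_SUBSCALES) / len(TLX_SUBSCALES)


# Made-up ratings for one participant, for illustration only.
example_ratings = {
    "mental_demand": 50,
    "physical_demand": 20,
    "temporal_demand": 60,
    "performance": 40,
    "effort": 55,
    "frustration": 30,
}
example_score = raw_tlx(example_ratings)
print(example_score)
```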

Findings

- Workload – The Trigger + Reason group reported a slightly higher subjective workload, but the difference was not statistically significant, indicating that the brief voice messages did not overload the driver.

- Comprehension – Participants receiving both cue and reason achieved the highest accuracy in identifying the true cause of RtI, outperforming the Trigger‑only group by roughly 18 %. This demonstrates that post‑take‑over explanations effectively refine the driver’s mental model.

- Proactive Take‑Over – The proportion of drivers who voluntarily took over control before the ADS deactivated was 73 % in the Trigger + Reason condition, compared with 42 % in the No‑Cue baseline. This suggests that clear knowledge of the limitation encourages earlier, self‑initiated take‑overs.

- Safety – Collision counts were lowest for the Trigger + Reason group (average 0.8 collisions per participant), intermediate for Trigger‑only (1.4), and highest for No‑Cue (2.1), confirming a tangible safety benefit.
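To put the reported figures on a common footing, the short calculation below derives relative collision reductions against the No‑Cue baseline and the percentage-point gap in proactive take‑overs. It uses only the averages and proportions quoted in the findings above; the variable and function names are illustrative, and the derived values are simple arithmetic, not additional results from the paper.

```python
# Average collisions per participant, as quoted in the findings above.
mean_collisions = {
    "Trigger + Reason": 0.8,
    "Trigger-only": 1.4,
    "No-Cue": 2.1,
}

# Share of drivers who proactively took over (percent), as quoted above.
proactive_rate_pct = {"Trigger + Reason": 73, "No-Cue": 42}


def relative_reduction(baseline, value):
    """Fractional reduction of `value` relative to `baseline`."""
    return (baseline - value) / baseline


baseline = mean_collisions["No-Cue"]
reductions = {
    condition: relative_reduction(baseline, value)
    for condition, value in mean_collisions.items()
    if condition != "No-Cue"
}
rate_gap_points = proactive_rate_pct["Trigger + Reason"] - proactive_rate_pct["No-Cue"]

for condition, reduction in reductions.items():
    print(f"{condition}: {reduction:.1%} fewer collisions than No-Cue")
print(f"Proactive take-over gap: {rate_gap_points} percentage points")
```

On these numbers, the Trigger + Reason condition shows roughly 62 % fewer collisions than the baseline and the Trigger‑only condition roughly 33 % fewer, with a 31-percentage-point gap in proactive take‑overs.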

The authors argue that the combination of a concise trigger cue and a delayed, detailed reason explanation yields a synergistic effect: the cue quickly captures attention and prompts immediate action, while the explanation consolidates learning without impeding the take‑over response. This approach improves situational awareness, reduces hesitation, and cultivates a more accurate mental model of the ADS—key factors for safe Level 3 operation.

Limitations – The study was conducted entirely in a simulator, so real‑world acoustic noise, visual distractions, and driver stress levels were not fully represented. Long‑term retention of the educational content was not assessed; the experiment measured only immediate comprehension. Additionally, the voice prompts were pre‑recorded and may not adapt to varying acoustic environments.

Future Work – The authors propose field trials with actual vehicles, testing adaptive voice synthesis that can modulate volume and clarity based on cabin noise. They also suggest longitudinal studies to evaluate how well drivers retain the explanation over weeks or months of regular ADS use, and to explore the interaction between workload, driver fatigue, and the frequency of educational prompts.

In summary, this paper presents a well‑designed, empirically validated voice‑based HMI that goes beyond simple alerts by educating drivers about the specific system limitations that trigger an RtI. The experimental evidence shows that this dual‑message strategy improves driver comprehension, encourages proactive take‑overs, and reduces collision risk without imposing a prohibitive cognitive load, thereby offering a promising pathway toward safer Level 3 automated driving deployments.
