What if Agents Could Imagine? Reinforcing Open-Vocabulary HOI Comprehension through Generation

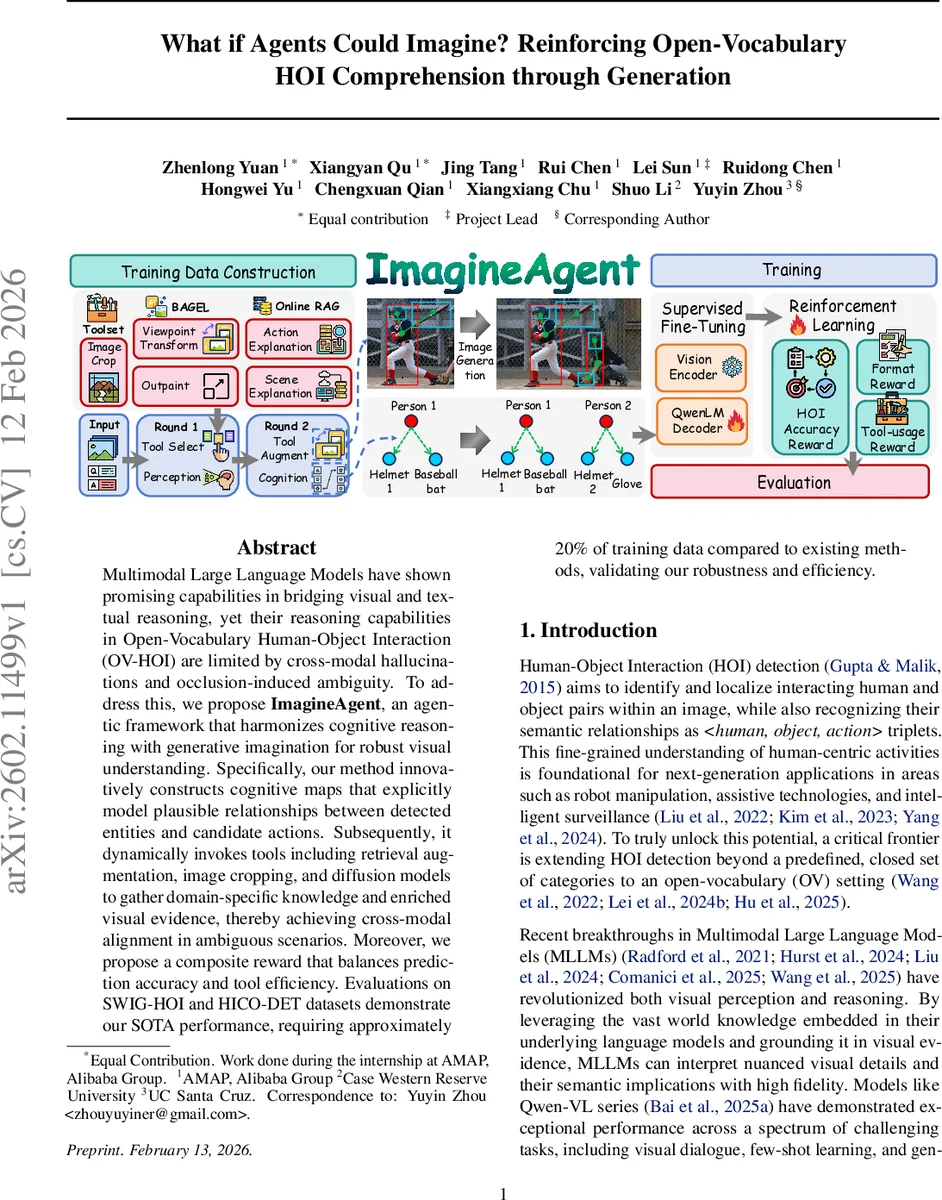

Multimodal Large Language Models have shown promising capabilities in bridging visual and textual reasoning, yet their reasoning capabilities in Open-Vocabulary Human-Object Interaction (OV-HOI) are limited by cross-modal hallucinations and occlusion-induced ambiguity. To address this, we propose \textbf{ImagineAgent}, an agentic framework that harmonizes cognitive reasoning with generative imagination for robust visual understanding. Specifically, our method innovatively constructs cognitive maps that explicitly model plausible relationships between detected entities and candidate actions. Subsequently, it dynamically invokes tools including retrieval augmentation, image cropping, and diffusion models to gather domain-specific knowledge and enriched visual evidence, thereby achieving cross-modal alignment in ambiguous scenarios. Moreover, we propose a composite reward that balances prediction accuracy and tool efficiency. Evaluations on SWIG-HOI and HICO-DET datasets demonstrate our SOTA performance, requiring approximately 20% of training data compared to existing methods, validating our robustness and efficiency.

💡 Research Summary

The paper introduces ImagineAgent, an agentic framework that tackles the challenges of Open‑Vocabulary Human‑Object Interaction (OV‑HOI) detection by integrating three complementary components: a structured cognitive map, a dynamic tool‑augmentation module, and a generative world model. Existing multimodal large language models (MLLMs) excel at bridging vision and language but suffer from three fundamental issues when applied to OV‑HOI: (1) a perceptual‑cognitive gap where detected humans and objects are not linked into coherent interaction hypotheses; (2) cross‑modal hallucination, i.e., generation of plausible‑sounding but visually unsupported actions driven by textual priors; and (3) occlusion‑induced ambiguity, where partial visibility prevents reliable verification of interactions.

ImagineAgent first builds a cognitive map that represents all detected human and object instances as nodes and possible verb edges as connections. This graph is refined through message‑passing that incorporates physical feasibility (distance, pose) and semantic plausibility (verb priors), thereby pruning implausible triplets before any further reasoning.

When a candidate interaction remains uncertain—because visual evidence is missing, the scene is ambiguous, or the verb is rare—the agent dynamically selects tools from a predefined library: (i) an image‑cropping tool to focus on high‑resolution regions; (ii) retrieval‑augmented generation (RAG) tools that query external knowledge bases for scene or action explanations; and (iii) diffusion‑based generative tools (outpainting and viewpoint transformation) that “imagine” missing parts of the scene, effectively reconstructing occluded views. The policy network πθ decides which tool(s) to invoke based on the current visual‑textual context and the state of the cognitive map.

Training proceeds in two stages. First, a large‑scale supervised fine‑tuning (SFT) dataset is constructed by running many rollouts with a powerful base model (Qwen2.5‑VL) and extracting successful reasoning chains. Each sample encodes a two‑round process: perception & tool selection, followed by tool augmentation & cognition. This dataset teaches the agent the basic workflow. Second, reinforcement learning (RL) refines the policy using the Generalized Reward‑Based Policy Optimization (GRPO) algorithm. The composite reward balances three terms: (a) HOI prediction accuracy (precision/recall), (b) structural coherence of the cognitive map, and (c) tool‑usage cost (number of calls, computational overhead). By jointly optimizing these objectives, the agent learns to achieve high accuracy while minimizing unnecessary tool invocations.

Empirical evaluation on the SWIG‑HOI and HICO‑DET benchmarks shows that ImagineAgent outperforms prior state‑of‑the‑art methods by 3–5 % absolute mAP, despite using only about 20 % of the training data. Ablation studies reveal that removing any of the three components degrades performance: the cognitive map contributes ~2 % AP, tool augmentation ~2 % AP, and the generative diffusion tools ~4 % AP in occlusion‑heavy cases. The framework also dramatically reduces hallucination errors and improves robustness to partially hidden objects.

The authors argue that the integration of generative imagination as a world model, combined with tool‑augmented RL, transforms static vision‑language models into active reasoning agents capable of “imagining” missing information and grounding their predictions in both visual evidence and external knowledge. This paradigm opens avenues for applications such as robot manipulation, assistive technologies, and real‑time surveillance, where reliable open‑vocabulary interaction understanding is essential. Future work may explore real‑time deployment, multimodal tool extensions (e.g., 3‑D or audio), and human‑in‑the‑loop collaboration.

In summary, ImagineAgent presents a novel, data‑efficient solution to OV‑HOI by unifying structured cognitive reasoning, dynamic tool usage, and generative imagination, all optimized through a carefully designed reinforcement learning reward. The results demonstrate that such an agentic approach can overcome the perceptual‑cognitive gap, suppress cross‑modal hallucinations, and resolve occlusion‑induced ambiguities, setting a new benchmark for open‑vocabulary interaction understanding.

Comments & Academic Discussion

Loading comments...

Leave a Comment