Arbitrary Ratio Feature Compression via Next Token Prediction

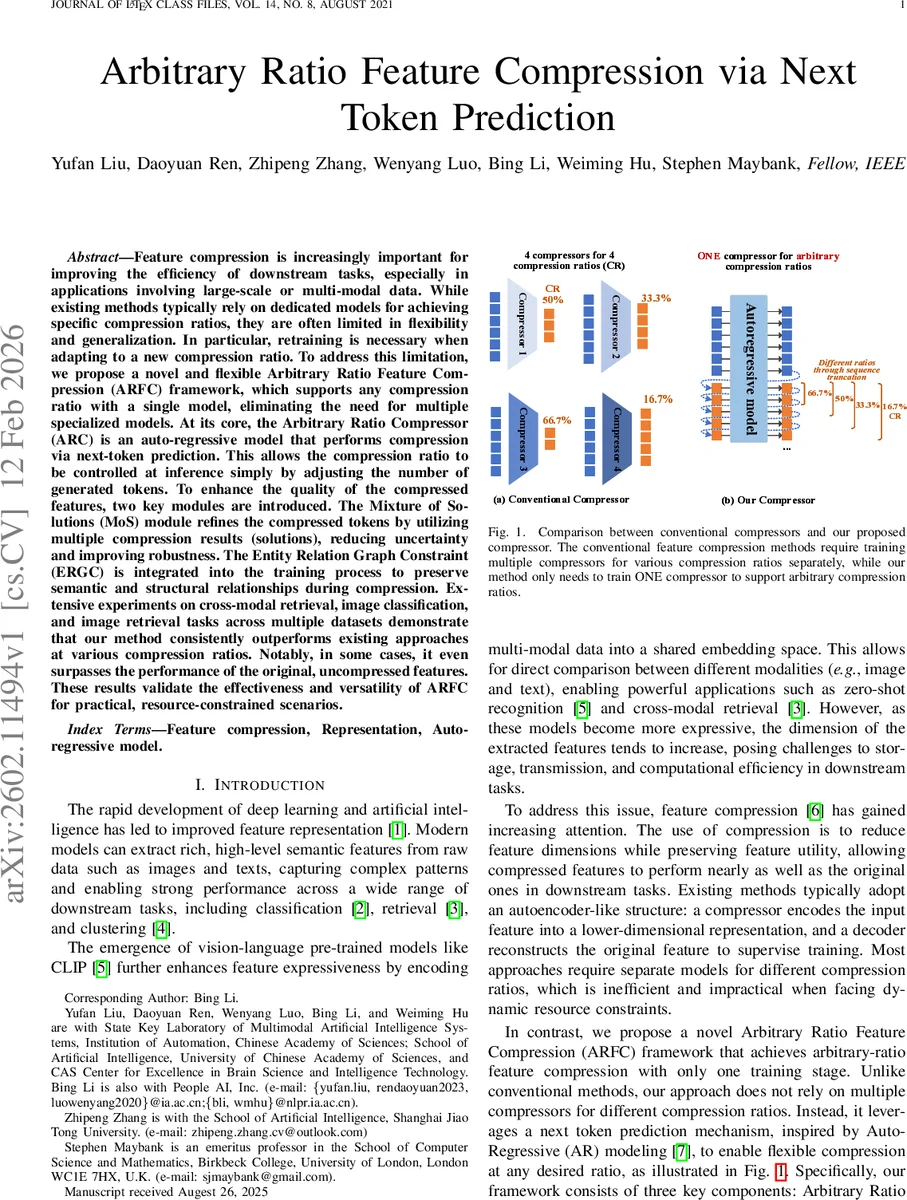

Feature compression is increasingly important for improving the efficiency of downstream tasks, especially in applications involving large-scale or multi-modal data. While existing methods typically rely on dedicated models for achieving specific compression ratios, they are often limited in flexibility and generalization. In particular, retraining is necessary when adapting to a new compression ratio. To address this limitation, we propose a novel and flexible Arbitrary Ratio Feature Compression (ARFC) framework, which supports any compression ratio with a single model, eliminating the need for multiple specialized models. At its core, the Arbitrary Ratio Compressor (ARC) is an auto-regressive model that performs compression via next-token prediction. This allows the compression ratio to be controlled at inference simply by adjusting the number of generated tokens. To enhance the quality of the compressed features, two key modules are introduced. The Mixture of Solutions (MoS) module refines the compressed tokens by utilizing multiple compression results (solutions), reducing uncertainty and improving robustness. The Entity Relation Graph Constraint (ERGC) is integrated into the training process to preserve semantic and structural relationships during compression. Extensive experiments on cross-modal retrieval, image classification, and image retrieval tasks across multiple datasets demonstrate that our method consistently outperforms existing approaches at various compression ratios. Notably, in some cases, it even surpasses the performance of the original, uncompressed features. These results validate the effectiveness and versatility of ARFC for practical, resource-constrained scenarios.

💡 Research Summary

The paper introduces a novel framework called Arbitrary Ratio Feature Compression (ARFC) that enables feature compression at any desired compression ratio using a single model, thereby eliminating the need for multiple specialized compressors. The core component, the Arbitrary Ratio Compressor (ARC), treats compression as a next‑token‑prediction problem. An input feature vector extracted from a frozen encoder (e.g., CLIP) is split into a sequence of T tokens, each of dimension d = D/T. A 12‑layer Transformer‑based autoregressive model predicts the next T tokens, forming a “basic solution” f_cmp. By selecting only the first (1 − r) · D dimensions of f_cmp, the system achieves a compression ratio r without any retraining.

To improve the robustness and quality of the compressed representations, the authors add two auxiliary mechanisms. The Mixture of Solutions (MoS) block aggregates multiple stochastic outputs (basic solutions) generated by ARC, either by weighted averaging or learned attention, thereby reducing the uncertainty inherent in a single autoregressive decode. The Entity Relation Graph Constraint (ERGC) constructs a graph over the original multi‑modal samples where edges encode semantic or structural similarity; a Laplacian regularization term forces the compressed features to preserve these relationships. Both MoS and ERGC are applied during training only; at inference time the decoder and graph regularizer are discarded, leaving a lightweight, on‑the‑fly compression pipeline that merely adjusts the number of output tokens.

Training proceeds by feeding f_cmp into a main decoder and several auxiliary decoders, which reconstruct the original feature vector. The total loss combines reconstruction error, MoS consistency loss, and the ERGC graph loss. This multi‑task objective encourages ARC to generate compressions that are both accurate and semantically faithful across a wide range of ratios.

The authors evaluate ARFC on three major downstream tasks: cross‑modal retrieval (MS‑COCO, Flickr30K), image classification (ImageNet‑1K), and image retrieval (person re‑identification datasets). Across compression ratios ranging from 16.7 % to 66.7 %, ARFC consistently outperforms prior methods such as Q‑Former, conventional autoencoders, PCA, and post‑training quantization. Notably, in several settings the compressed features even surpass the performance of the original uncompressed CLIP features, demonstrating that the learned compression can act as a regularizer that removes redundant information. MoS is shown to be especially beneficial at high compression (low token count), where it mitigates performance drops, while ERGC preserves cross‑modal alignment, leading to higher mean average precision (mAP) in retrieval tasks.

The paper also provides thorough ablation studies. Removing MoS or ERGC degrades performance by up to 3 % absolute mAP, confirming their complementary roles. Comparisons with PCA, LDA, and various autoencoder variants highlight ARFC’s advantage in handling multimodal data and arbitrary ratios without retraining.

Limitations are acknowledged: ARC’s Transformer backbone incurs non‑trivial memory and compute costs, especially when the desired compression ratio is low (i.e., many tokens are retained). The current pipeline assumes a frozen upstream encoder; joint optimization of the encoder and ARC could further improve compression efficiency but is left for future work. Additionally, the method has been tested only on vision‑language features; extending to other modalities such as audio, time‑series, or graph embeddings remains an open question.

In summary, ARFC presents a flexible, single‑model solution for arbitrary‑ratio feature compression, leveraging next‑token prediction, a mixture‑of‑solutions refinement, and graph‑based semantic regularization. Its strong empirical results across diverse tasks suggest high practical relevance for resource‑constrained deployments and for scenarios where dynamic bandwidth or storage constraints demand on‑the‑fly adjustment of feature dimensionality.

Comments & Academic Discussion

Loading comments...

Leave a Comment