OmniVL-Guard: Towards Unified Vision-Language Forgery Detection and Grounding via Balanced RL

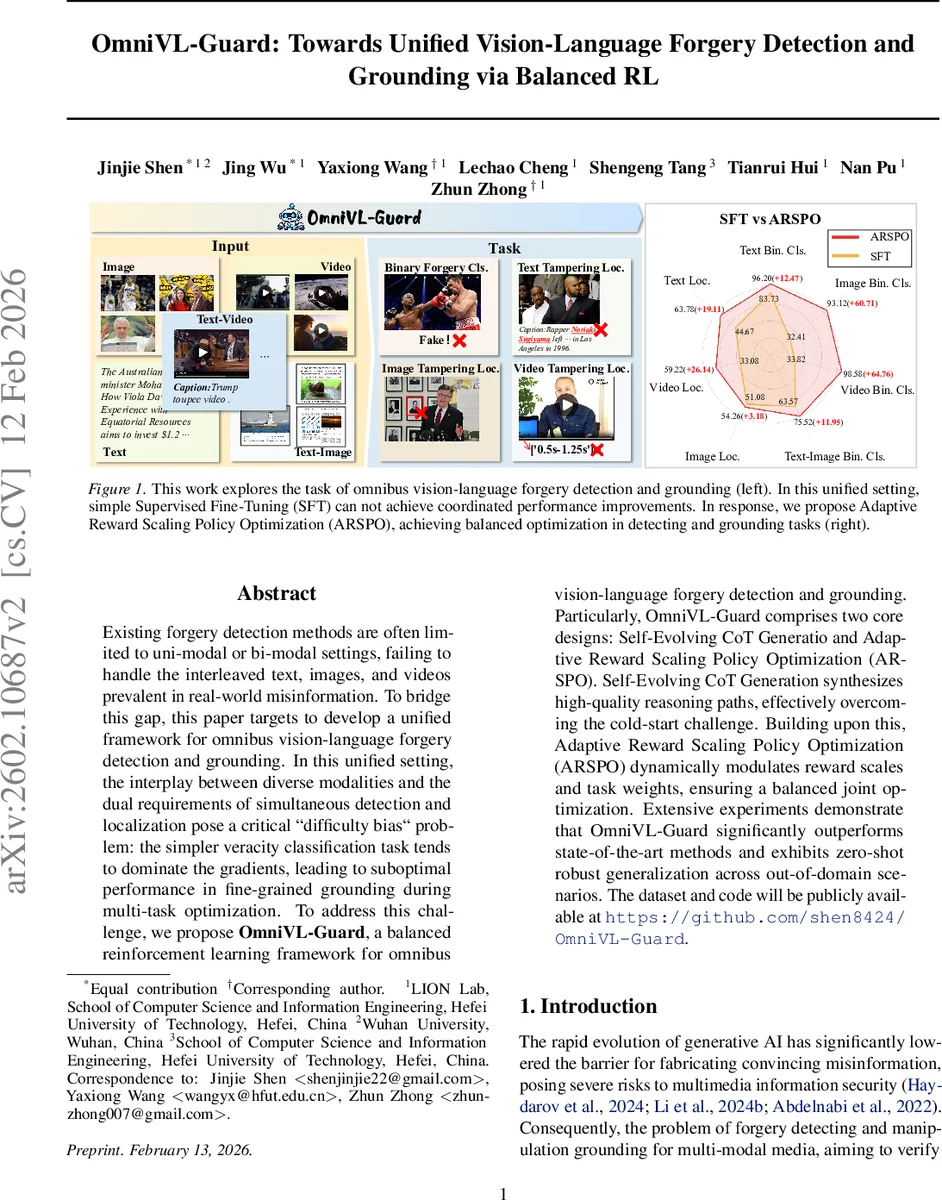

Existing forgery detection methods are often limited to uni-modal or bi-modal settings, failing to handle the interleaved text, images, and videos prevalent in real-world misinformation. To bridge this gap, this paper targets to develop a unified framework for omnibus vision-language forgery detection and grounding. In this unified setting, the {interplay} between diverse modalities and the dual requirements of simultaneous detection and localization pose a critical difficulty bias problem: the simpler veracity classification task tends to dominate the gradients, leading to suboptimal performance in fine-grained grounding during multi-task optimization. To address this challenge, we propose \textbf{OmniVL-Guard}, a balanced reinforcement learning framework for omnibus vision-language forgery detection and grounding. Particularly, OmniVL-Guard comprises two core designs: Self-Evolving CoT Generatio and Adaptive Reward Scaling Policy Optimization (ARSPO). {Self-Evolving CoT Generation} synthesizes high-quality reasoning paths, effectively overcoming the cold-start challenge. Building upon this, {Adaptive Reward Scaling Policy Optimization (ARSPO)} dynamically modulates reward scales and task weights, ensuring a balanced joint optimization. Extensive experiments demonstrate that OmniVL-Guard significantly outperforms state-of-the-art methods and exhibits zero-shot robust generalization across out-of-domain scenarios.

💡 Research Summary

OmniVL‑Guard addresses the pressing need for a unified detection system capable of handling the increasingly complex misinformation that combines text, images, and video. Existing forgery detection methods are typically confined to single‑modal (e.g., image only) or bi‑modal (e.g., image‑text) scenarios, which limits their applicability to real‑world social media content where multiple modalities coexist. The authors define an “omnibus” vision‑language setting that requires simultaneous binary classification of forgery (veracity) and fine‑grained localization of manipulated regions across three modalities: spatial image tampering, semantic text tampering, and temporal video tampering.

A central challenge in this multi‑task setting is “difficulty bias”: the easier classification task dominates gradient updates during joint optimization, causing the more difficult grounding tasks to receive insufficient learning signal. Simple supervised fine‑tuning (SFT) fails to overcome this bias, prompting the authors to incorporate reinforcement learning (RL), which has shown strong reasoning and generalization benefits in large multimodal language models (MLLMs).

The proposed framework consists of two novel components. First, Self‑Evolving Chain‑of‑Thought (CoT) Generation creates high‑quality reasoning paths needed for RL cold‑start. Starting from a small seed of manually verified CoT examples, the method iteratively expands the dataset through a self‑evolution loop: the current policy generates CoT for new samples, a separate verifier filters out inconsistent or incorrect reasoning, and the filtered set is merged back into the training pool. To handle long‑tail hard examples that persist after several iterations, a collaborative hard‑CoT synthesis stage employs three distinct MLLMs: one generates a GT‑conditioned CoT, a second rewrites it to mimic natural deduction without knowledge of the answer, and a third validates the rewritten reasoning. This pipeline yields the Full‑Spectrum Forensic Reasoning (FSFR) dataset, comprising 73 k SFT samples (including 15 % hard cases) and 110 k RL samples, covering text, image, video, and image‑text modalities and supporting four core tasks (binary classification, image grounding, text grounding, video grounding).

Second, Adaptive Reward Shaping Policy Optimization (ARSPO) tackles difficulty bias during multi‑task RL. The authors analytically decompose the policy gradient of standard RL objectives (e.g., GRPO) and show that the reward mapping function directly controls how much each task contributes to the gradient. ARSPO introduces task‑specific reward shaping that amplifies signals for high‑difficulty tasks and a dynamic coefficient adjustment that continuously re‑weights task losses based on real‑time statistics such as mean advantage and variance. This adaptive scaling ensures that the policy improves uniformly across all forensic subtasks rather than over‑optimizing the easy binary classification.

Experimental results demonstrate that OmniVL‑Guard substantially outperforms state‑of‑the‑art multimodal forgery detectors. In‑domain evaluations show improvements of up to 65 % absolute gain on video grounding and consistent gains of 10–20 % on image and text grounding, while binary classification accuracy rises modestly (≈0.3 %). More importantly, zero‑shot out‑of‑domain tests—where video‑text pairs unseen during training are presented—show that the model retains over 70 % of its in‑domain performance, indicating robust generalization. Ablation studies confirm that both the Self‑Evolving CoT pipeline and ARSPO are essential: removing the CoT generation leads to poor RL convergence, and using standard GRPO without adaptive reward scaling re‑introduces difficulty bias, degrading grounding performance.

In summary, OmniVL‑Guard delivers the first integrated solution for omnibus vision‑language forgery detection and grounding. By coupling a self‑evolving reasoning data generator with an adaptive multi‑task RL optimizer, it overcomes the inherent difficulty imbalance and achieves strong, balanced performance across classification and localization tasks. The authors suggest future work on lightweight real‑time deployment, continual dataset updates to counter emerging deep‑fake techniques, and human‑in‑the‑loop labeling to further improve data quality.

Comments & Academic Discussion

Loading comments...

Leave a Comment