End-to-End Semantic ID Generation for Generative Advertisement Recommendation

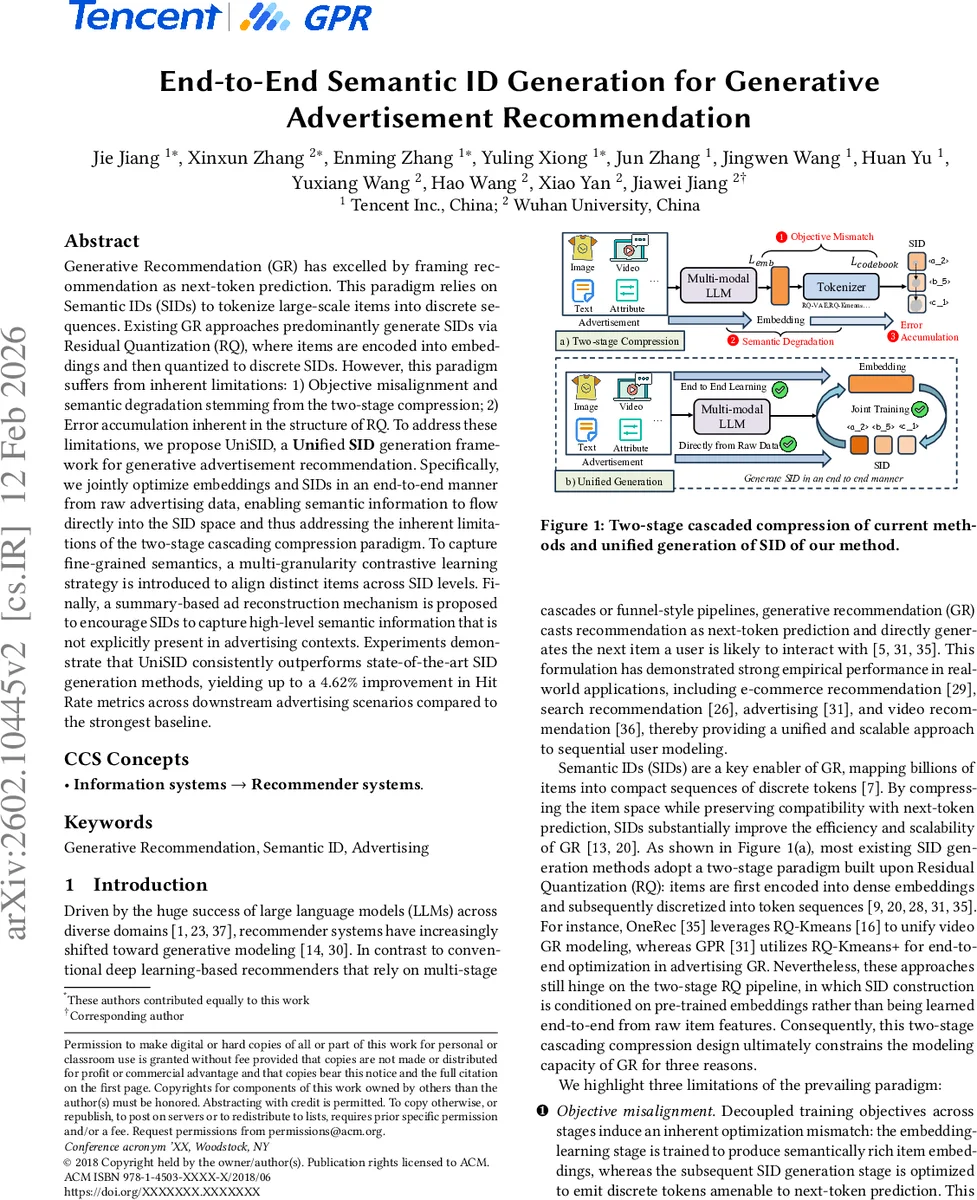

Generative Recommendation (GR) has excelled by framing recommendation as next-token prediction. This paradigm relies on Semantic IDs (SIDs) to tokenize large-scale items into discrete sequences. Existing GR approaches predominantly generate SIDs via Residual Quantization (RQ), where items are encoded into embeddings and then quantized to discrete SIDs. However, this paradigm suffers from inherent limitations: 1) Objective misalignment and semantic degradation stemming from the two-stage compression; 2) Error accumulation inherent in the structure of RQ. To address these limitations, we propose UniSID, a Unified SID generation framework for generative advertisement recommendation. Specifically, we jointly optimize embeddings and SIDs in an end-to-end manner from raw advertising data, enabling semantic information to flow directly into the SID space and thus addressing the inherent limitations of the two-stage cascading compression paradigm. To capture fine-grained semantics, a multi-granularity contrastive learning strategy is introduced to align distinct items across SID levels. Finally, a summary-based ad reconstruction mechanism is proposed to encourage SIDs to capture high-level semantic information that is not explicitly present in advertising contexts. Experiments demonstrate that UniSID consistently outperforms state-of-the-art SID generation methods, yielding up to a 4.62% improvement in Hit Rate metrics across downstream advertising scenarios compared to the strongest baseline.

💡 Research Summary

The paper introduces UniSID, a unified end‑to‑end framework for generating Semantic IDs (SIDs) in generative advertisement recommendation (GR). Existing GR systems rely on a two‑stage pipeline based on Residual Quantization (RQ): first, items are encoded into dense embeddings; second, these embeddings are quantized hierarchically into discrete token sequences (SIDs). This design suffers from three fundamental drawbacks: (1) objective misalignment—embedding learning optimizes for semantic richness while SID generation optimizes for next‑token prediction, leading to a mismatch; (2) semantic degradation—raw multimodal signals (images, text, structured attributes) are not directly used in SID construction, causing loss of important information; (3) error accumulation—each quantization level only sees the residual from the previous level, so quantization noise compounds and deeper SID layers become noisy.

UniSID addresses all three issues with a single, jointly optimized architecture. First, it builds an “advertisement‑enhanced input schema” that linearizes heterogeneous ad data (instruction prompt, visual tokens, textual description, and structured attributes such as industry and hierarchical categories) into a unified token sequence. Learnable SID tokens and an embedding token are appended to this sequence. A shared multimodal large language model (MLLM) processes the whole sequence, producing contextualized hidden states for every token.

Second, a dual‑head projection maps the hidden states of the SID tokens to a SID embedding space and the hidden state of the embedding token to an item embedding space. The SID embedding is split into L layers; each layer independently predicts a discrete token via argmax over its logits. Crucially, every SID layer receives the full ad context rather than a residual, eliminating the progressive sparsification inherent in RQ.

Third, UniSID introduces two auxiliary objectives to preserve semantics: (a) Multi‑Granularity Contrastive Learning constructs positive sample sets that are granularity‑specific. As the hierarchy deepens, the required similarity between query and positive samples increases, enforcing stronger semantic consistency at finer levels. (b) Summary‑Based Advertisement Reconstruction forces the generated SID sequence to reconstruct high‑level ad attributes (e.g., category path, key product descriptors). This reconstruction loss provides an additional supervision signal that encourages SIDs to embed implicit high‑level semantics not directly observable in raw modalities.

The authors evaluate UniSID on both industrial advertising workloads and public benchmark datasets. Metrics include SID quality, next‑ad prediction (Hit Rate, NDCG), advertisement retrieval accuracy, and generic next‑item prediction. UniSID consistently outperforms strong baselines such as RQ‑VAE, RQ‑Kmeans, and RQ‑Kmeans+ across all tasks, achieving up to 4.62 % absolute improvement in Hit Rate, 45.46 % gain in ad retrieval, and 11.83 % boost in next‑item prediction. Ablation studies demonstrate that each component—integrated input schema, multi‑granularity contrastive learning, and summary reconstruction—contributes significantly to the overall performance gains.

In summary, UniSID’s contributions are threefold: (1) it identifies and formally articulates the limitations of the prevailing two‑stage SID generation paradigm; (2) it proposes a unified end‑to‑end SID‑embedding learning pipeline that directly ingests raw multimodal ad data, thereby aligning objectives and eliminating error accumulation; (3) it designs novel semantic‑preserving objectives (multi‑granularity contrastive loss and summary reconstruction) that endow SIDs with both fine‑grained and high‑level semantic fidelity. The work opens avenues for scaling generative recommendation to massive item catalogs without sacrificing semantic richness, and suggests future extensions to larger LLM backbones, real‑time serving, and cross‑domain applications such as e‑commerce, video streaming, and social media recommendation.

Comments & Academic Discussion

Loading comments...

Leave a Comment