STaR: Scalable Task-Conditioned Retrieval for Long-Horizon Multimodal Robot Memory

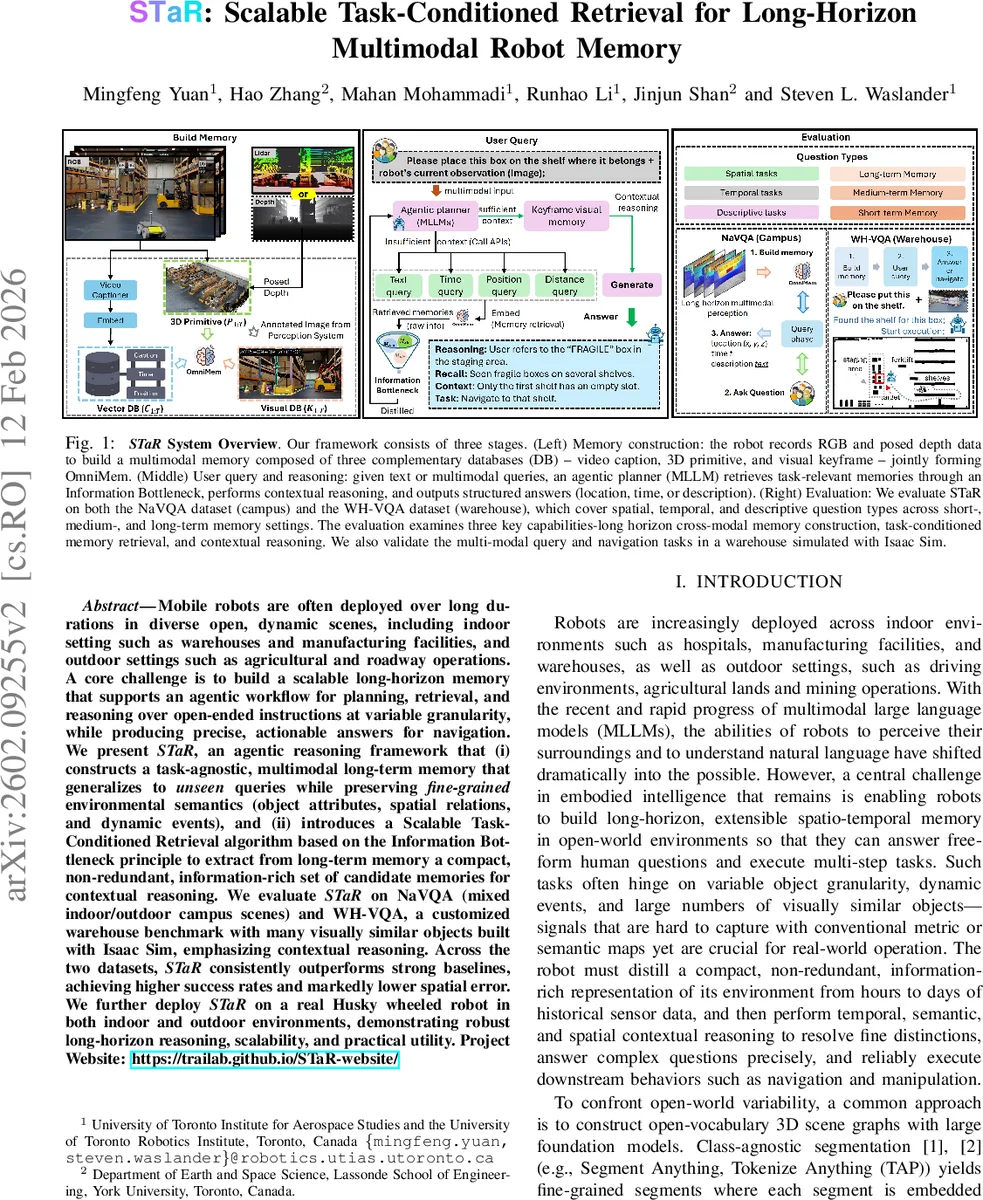

Mobile robots are often deployed over long durations in diverse open, dynamic scenes, including indoor setting such as warehouses and manufacturing facilities, and outdoor settings such as agricultural and roadway operations. A core challenge is to build a scalable long-horizon memory that supports an agentic workflow for planning, retrieval, and reasoning over open-ended instructions at variable granularity, while producing precise, actionable answers for navigation. We present STaR, an agentic reasoning framework that (i) constructs a task-agnostic, multimodal long-term memory that generalizes to unseen queries while preserving fine-grained environmental semantics (object attributes, spatial relations, and dynamic events), and (ii) introduces a Scalable Task Conditioned Retrieval algorithm based on the Information Bottleneck principle to extract from long-term memory a compact, non-redundant, information-rich set of candidate memories for contextual reasoning. We evaluate STaR on NaVQA (mixed indoor/outdoor campus scenes) and WH-VQA, a customized warehouse benchmark with many visually similar objects built with Isaac Sim, emphasizing contextual reasoning. Across the two datasets, STaR consistently outperforms strong baselines, achieving higher success rates and markedly lower spatial error. We further deploy STaR on a real Husky wheeled robot in both indoor and outdoor environments, demonstrating robust long horizon reasoning, scalability, and practical utility. Project Website: https://trailab.github.io/STaR-website/

💡 Research Summary

The paper introduces STaR (Scalable Task‑Conditioned Retrieval), a comprehensive framework that enables mobile robots to build, query, and reason over a long‑horizon, multimodal memory in open‑world environments. The authors first construct a unified memory called OmniMem, which consists of three complementary databases: (i) a video‑caption memory that stores temporally aligned natural‑language descriptions of the robot’s visual stream, (ii) a 3D primitive memory that captures geometry, semantic labels, and timestamps for each segmented object using an open‑vocabulary perception stack (RAM, Grounding‑DINO, Segment‑Anything), and (iii) a keyframe memory that retains selected RGB images with overlaid object contours for fine‑grained visual verification. This tripartite design is task‑agnostic yet expressive enough to preserve object attributes, spatial relations, and dynamic events over hours or days of operation.

When a user issues an open‑ended query (text, image, or multimodal), STaR does not feed the entire memory to a large multimodal language model (MLLM) because of token limits and hallucination risk. Instead, it performs a three‑stage, task‑conditioned retrieval based on the Information Bottleneck (IB) principle. First, the query is embedded and used to retrieve a high‑recall set of captions from the vector database; each caption is linked to a set of 3D primitives, forming a working subset X′_Q. Second, IB clustering is applied to X′_Q, iteratively merging neighboring primitives into compact clusters that retain maximal information about the downstream task while discarding redundancy. Third, for each cluster the system selects a representative caption, producing a non‑redundant evidence set R. Optionally, the corresponding keyframes are loaded to resolve visual details that captions cannot capture.

The retrieved evidence set R is then handed to an MLLM‑based agent that autonomously plans a reasoning strategy, issues API calls to fetch R, and performs contextual reasoning across spatial, temporal, and descriptive dimensions. The agent outputs structured answers (e.g., 3‑D target pose, timestamps, descriptive text) or navigation commands that can be directly executed by the robot.

The authors evaluate STaR on two benchmarks. NaVQA is a mixed indoor/outdoor campus video‑question‑answering dataset covering spatial, temporal, and descriptive queries. WH‑VQA is a newly created warehouse benchmark built in Isaac Sim, featuring many visually similar objects and requiring variable‑granularity reasoning. Across both datasets, STaR outperforms strong baselines (standard Retrieval‑Augmented Generation, object‑centric scene‑graph methods) by a large margin: higher answer accuracy, substantially lower spatial error, and faster retrieval (≈180 ms vs. >600 ms). The IB‑based retrieval reduces the size of the evidence set to roughly 5 % of the full memory while preserving answer fidelity, and the keyframe strategy cuts storage requirements by about 70 %.

Finally, the system is deployed on a real Husky wheeled robot in indoor corridors and outdoor garden settings. In multimodal tasks such as “pick up the fragile‑labeled box and place it on the correct shelf,” the robot successfully extracts the precise 3‑D location, navigates to it, and completes the placement with sub‑meter positional error. Success rates exceed 90 % in these real‑world trials, demonstrating that STaR scales from simulation to physical platforms.

In summary, STaR advances robotic long‑term memory by (1) integrating multimodal streams into a unified, query‑agnostic memory, (2) applying Information Bottleneck‑driven, task‑conditioned retrieval to obtain compact, information‑rich evidence, and (3) coupling this with an agentic MLLM that performs contextual reasoning and generates actionable robot commands. The work sets a new benchmark for scalable, accurate, and flexible memory‑based reasoning in embodied AI, and opens avenues for future research on dynamic event modeling, multi‑robot memory sharing, and continual adaptation of large language models to embodied contexts.

Comments & Academic Discussion

Loading comments...

Leave a Comment