RLinf-USER: A Unified and Extensible System for Real-World Online Policy Learning in Embodied AI

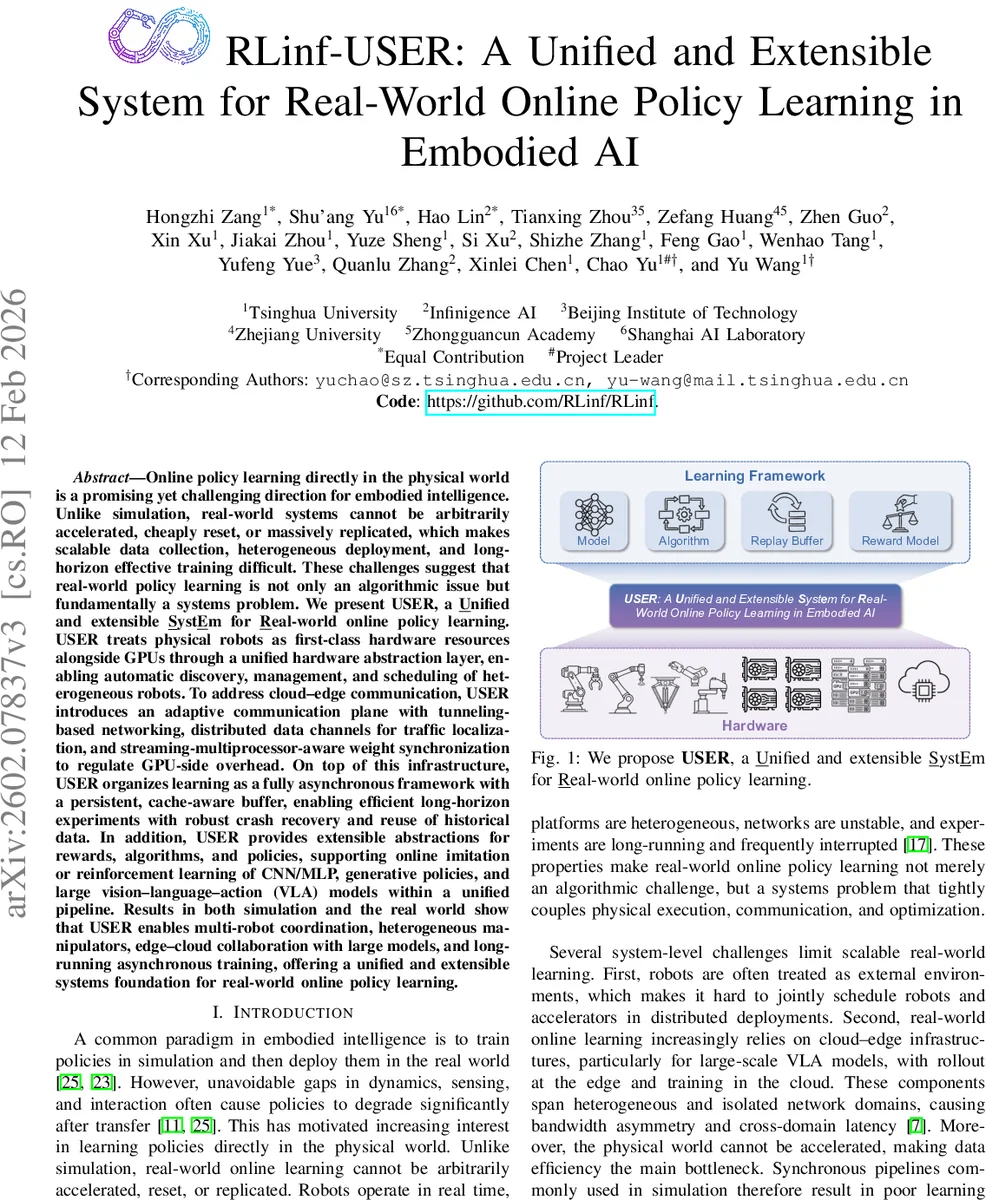

Online policy learning directly in the physical world is a promising yet challenging direction for embodied intelligence. Unlike simulation, real-world systems cannot be arbitrarily accelerated, cheaply reset, or massively replicated, which makes scalable data collection, heterogeneous deployment, and long-horizon effective training difficult. These challenges suggest that real-world policy learning is not only an algorithmic issue but fundamentally a systems problem. We present USER, a Unified and extensible SystEm for Real-world online policy learning. USER treats physical robots as first-class hardware resources alongside GPUs through a unified hardware abstraction layer, enabling automatic discovery, management, and scheduling of heterogeneous robots. To address cloud-edge communication, USER introduces an adaptive communication plane with tunneling-based networking, distributed data channels for traffic localization, and streaming-multiprocessor-aware weight synchronization to regulate GPU-side overhead. On top of this infrastructure, USER organizes learning as a fully asynchronous framework with a persistent, cache-aware buffer, enabling efficient long-horizon experiments with robust crash recovery and reuse of historical data. In addition, USER provides extensible abstractions for rewards, algorithms, and policies, supporting online imitation or reinforcement learning of CNN/MLP, generative policies, and large vision-language-action (VLA) models within a unified pipeline. Results in both simulation and the real world show that USER enables multi-robot coordination, heterogeneous manipulators, edge-cloud collaboration with large models, and long-running asynchronous training, offering a unified and extensible systems foundation for real-world online policy learning.

💡 Research Summary

The paper introduces USER (Unified and extensible System for Real‑world online policy learning), a comprehensive infrastructure that treats physical robots as first‑class hardware resources alongside GPUs, thereby addressing the systemic bottlenecks that arise when moving policy learning from simulation to the real world. The authors argue that real‑world learning cannot rely on accelerated time, cheap resets, or massive replication, which makes data collection, heterogeneous deployment, and long‑horizon training fundamentally a systems problem rather than a purely algorithmic one.

USER’s architecture consists of four tightly integrated layers:

-

Unified Hardware Abstraction Layer (HAL) – Robots, GPUs, and other accelerators are modeled as uniform “hardware units.” Each unit carries a lightweight descriptor (type, model, network address, sensor bindings). HAL provides plug‑in checkers for automatic discovery, metadata collection, and health verification. Nodes can host heterogeneous groups of units, and a rank‑based scheduler maps processes to specific hardware, enabling simultaneous allocation of robots and accelerators within a single job.

-

Adaptive Communication Plane – To bridge isolated cloud‑edge domains (e.g., campus VLANs, factory networks), USER builds a UDP‑tunneling overlay that creates a flattened TCP/IP substrate. On top of this tunnel, Ray handles cluster membership while a custom data plane uses TCP rendezvous for point‑to‑point connections. Data exchange is abstracted as “distributed data channels,” FIFO queues that are sharded by keys (e.g., robot IDs) to localize traffic and reduce cross‑domain bandwidth consumption. Additionally, weight synchronization via NCCL is made SM‑aware: a configurable cap limits the number of cooperative thread arrays (CTAs) that NCCL can launch, preventing GPU streaming multiprocessors from being monopolized by communication kernels.

-

Fully Asynchronous Learning Framework – Unlike traditional synchronous pipelines, USER decouples trajectory generation (rollouts), transmission, training, and weight updates. Central to this design is a persistent, cache‑aware replay buffer that streams trajectories to disk while keeping a hot cache in RAM for high‑throughput sampling. The buffer supports metadata‑driven indexing, crash recovery, and reuse of historical data across training phases, making experiments robust over days or weeks despite network glitches or robot resets.

-

Extensible Abstractions for Rewards, Algorithms, and Policies – USER provides modular APIs for defining rewards (rule‑based, human feedback, learned models), selecting learning algorithms (imitation, reinforcement, hybrid), and plugging in policy architectures ranging from CNN/MLP controllers to flow‑based generative policies and large Vision‑Language‑Action (VLA) models. This uniform pipeline allows researchers to experiment with diverse learning paradigms without re‑engineering the underlying system.

The authors release the full implementation as open‑source code (https://github.com/RLinf/RLinf). Empirical evaluation spans both simulated benchmarks and real‑world robot platforms. Results demonstrate that USER can orchestrate multi‑robot coordination, handle heterogeneous manipulators, support edge‑cloud collaboration with large VLA models, and sustain long‑running asynchronous training. Crucially, the system maintains stable performance under realistic disturbances such as network latency spikes, robot power cycles, and temporary training pauses, while efficiently reusing previously collected data.

In summary, USER reframes real‑world online policy learning as a systems engineering challenge and delivers a unified, extensible stack that integrates hardware abstraction, adaptive networking, asynchronous execution, and persistent data management. By doing so, it lowers the barrier for researchers to conduct scalable, reproducible, and data‑efficient learning directly on physical robots, paving the way for tighter simulation‑to‑real transfer and for deploying ever larger vision‑language‑action models in embodied AI applications.

Comments & Academic Discussion

Loading comments...

Leave a Comment