Humanoid Manipulation Interface: Humanoid Whole-Body Manipulation from Robot-Free Demonstrations

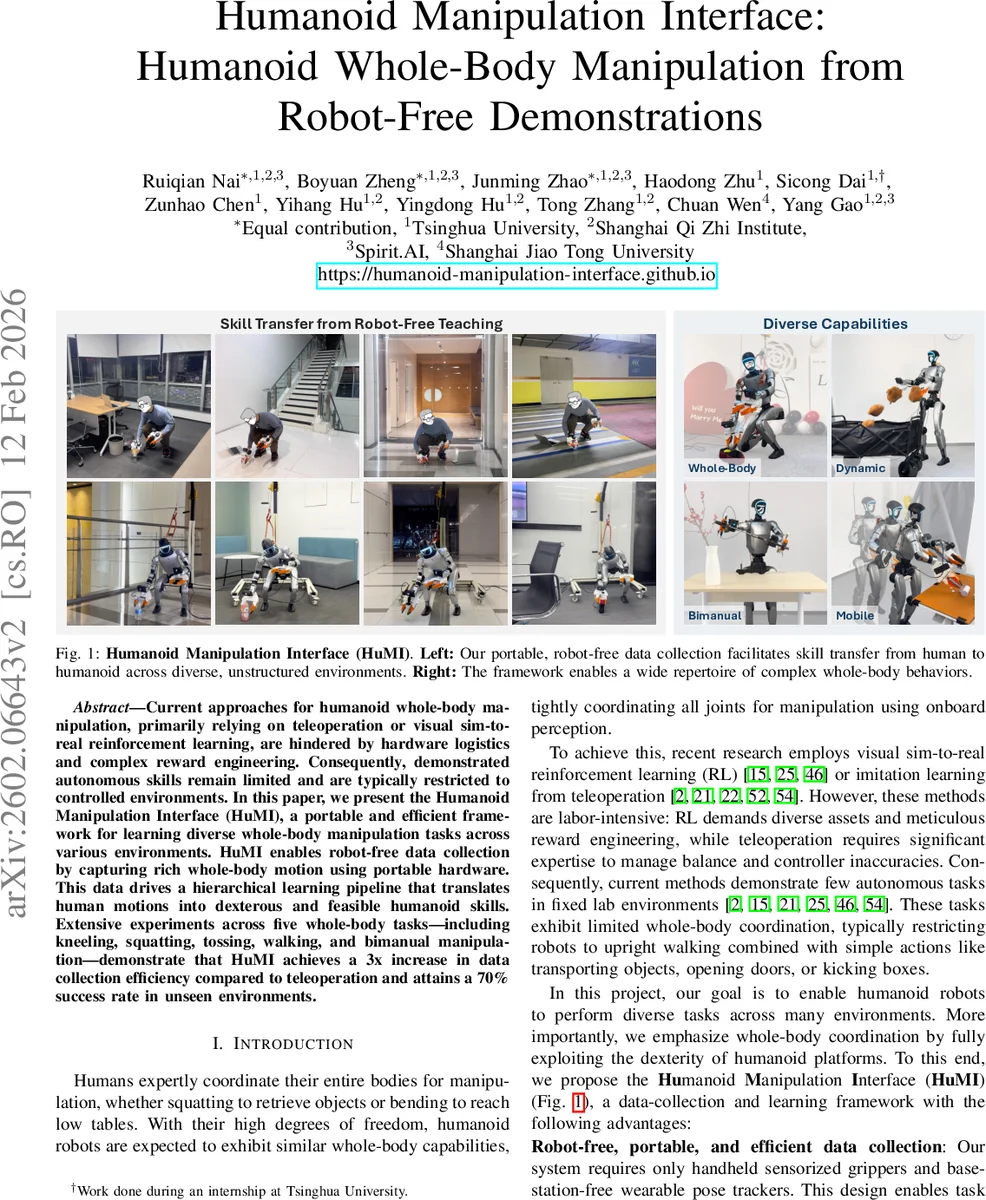

Current approaches for humanoid whole-body manipulation, primarily relying on teleoperation or visual sim-to-real reinforcement learning, are hindered by hardware logistics and complex reward engineering. Consequently, demonstrated autonomous skills remain limited and are typically restricted to controlled environments. In this paper, we present the Humanoid Manipulation Interface (HuMI), a portable and efficient framework for learning diverse whole-body manipulation tasks across various environments. HuMI enables robot-free data collection by capturing rich whole-body motion using portable hardware. This data drives a hierarchical learning pipeline that translates human motions into dexterous and feasible humanoid skills. Extensive experiments across five whole-body tasks–including kneeling, squatting, tossing, walking, and bimanual manipulation–demonstrate that HuMI achieves a 3x increase in data collection efficiency compared to teleoperation and attains a 70% success rate in unseen environments.

💡 Research Summary

The paper introduces the Humanoid Manipulation Interface (HuMI), a novel framework that enables humanoid robots to acquire whole‑body manipulation skills from robot‑free human demonstrations. Existing approaches rely heavily on teleoperation or visual sim‑to‑real reinforcement learning, both of which suffer from cumbersome hardware logistics, extensive reward engineering, and limited generalization to unstructured environments. HuMI addresses these limitations through two main contributions: a portable, robot‑free data‑collection system and a hierarchical learning pipeline that bridges the embodiment gap between human demonstrators and the target robot.

Data‑collection system. The authors equip a human operator with a lightweight backpack containing an HTC VIVE Ultimate Tracker, two 3‑D‑printed handheld grippers each fitted with a GoPro camera, and additional trackers attached to the waist and both feet. This configuration captures full‑body SE(3) trajectories for the hands, pelvis, and feet without requiring any external base stations, allowing demonstrations to be recorded in diverse indoor and outdoor settings. Crucially, the system does not scale human poses to match the robot’s size; instead, it visualizes the robot’s kinematic configuration in real time via an online inverse‑kinematics (IK) preview. Demonstrators can instantly adjust their motions to ensure that the resulting robot trajectories are collision‑free and within the robot’s workspace, effectively mitigating the “morphology gap” that typically plagues robot‑free learning.

Hierarchical learning pipeline. Collected demonstrations serve two purposes. First, a high‑level Diffusion Policy processes onboard camera images and proprioceptive data (joint positions, velocities, IMU) at 5 Hz and predicts receding‑horizon key‑point trajectories for the hands, feet, and pelvis (action chunks). Second, a low‑level whole‑body controller, trained via reinforcement learning in simulation, tracks these key‑points at 50 Hz. The controller’s reward function combines a standard whole‑body tracking term (penalizing position, orientation, and velocity errors) with an adaptive end‑effector term that dynamically relaxes precision tolerances when the reference motion is fast and tightens them during slow, contact‑rich phases. This adaptive reward encourages coordinated whole‑body motion while still achieving the high precision required for manipulation. Additionally, the authors introduce variable‑speed augmentation: during training, the execution speed of reference motions is randomly sampled within a predefined range, giving the policy ample time to correct small errors and learn fine‑grained control.

Policy‑controller interface improvements. Prior work often anchors each new action chunk to the robot’s actually executed end‑effector pose, which leads to discontinuities when tracking errors accumulate. HuMI instead anchors subsequent chunks to the scheduled key‑point trajectory itself, preserving continuity despite residual tracking errors. This design, together with the adaptive reward and speed augmentation, yields a robust system that can handle the non‑negligible 4–6 cm tracking deviations typical of current humanoid simulators.

Experimental validation. The framework is evaluated on a 130 cm Unitree G1 humanoid across five diverse whole‑body tasks: kneeling to pick up an object, squatting to retrieve a bottle, tossing a toy, unsheathing a sword, and walking while cleaning a table. Compared to teleoperation‑based data collection, HuMI achieves a three‑fold increase in demonstration throughput (over 1,200 demonstrations collected). In previously unseen environments and with novel objects, the learned policies attain an average success rate of 70 %, demonstrating strong generalization. The low‑level controller maintains tracking errors within 4–6 cm, a notable improvement over prior robot‑free pipelines.

Key contributions.

- The first robot‑free demonstration system tailored for humanoid whole‑body manipulation, incorporating real‑time IK preview to close the embodiment gap.

- A hierarchical learning architecture that jointly optimizes a diffusion‑based high‑level policy and a manipulation‑centric whole‑body controller, featuring adaptive end‑effector rewards and variable‑speed training.

- Extensive real‑world experiments confirming three‑times faster data collection and robust performance (≈70 % success) on unseen tasks.

In summary, HuMI demonstrates that portable, robot‑free motion capture combined with carefully designed hierarchical learning can endow humanoid robots with versatile, precise, and generalizable whole‑body manipulation capabilities, opening avenues for future work on more complex collaborative tasks and online adaptation.

Comments & Academic Discussion

Loading comments...

Leave a Comment