LoGoSeg: Integrating Local and Global Features for Open-Vocabulary Semantic Segmentation

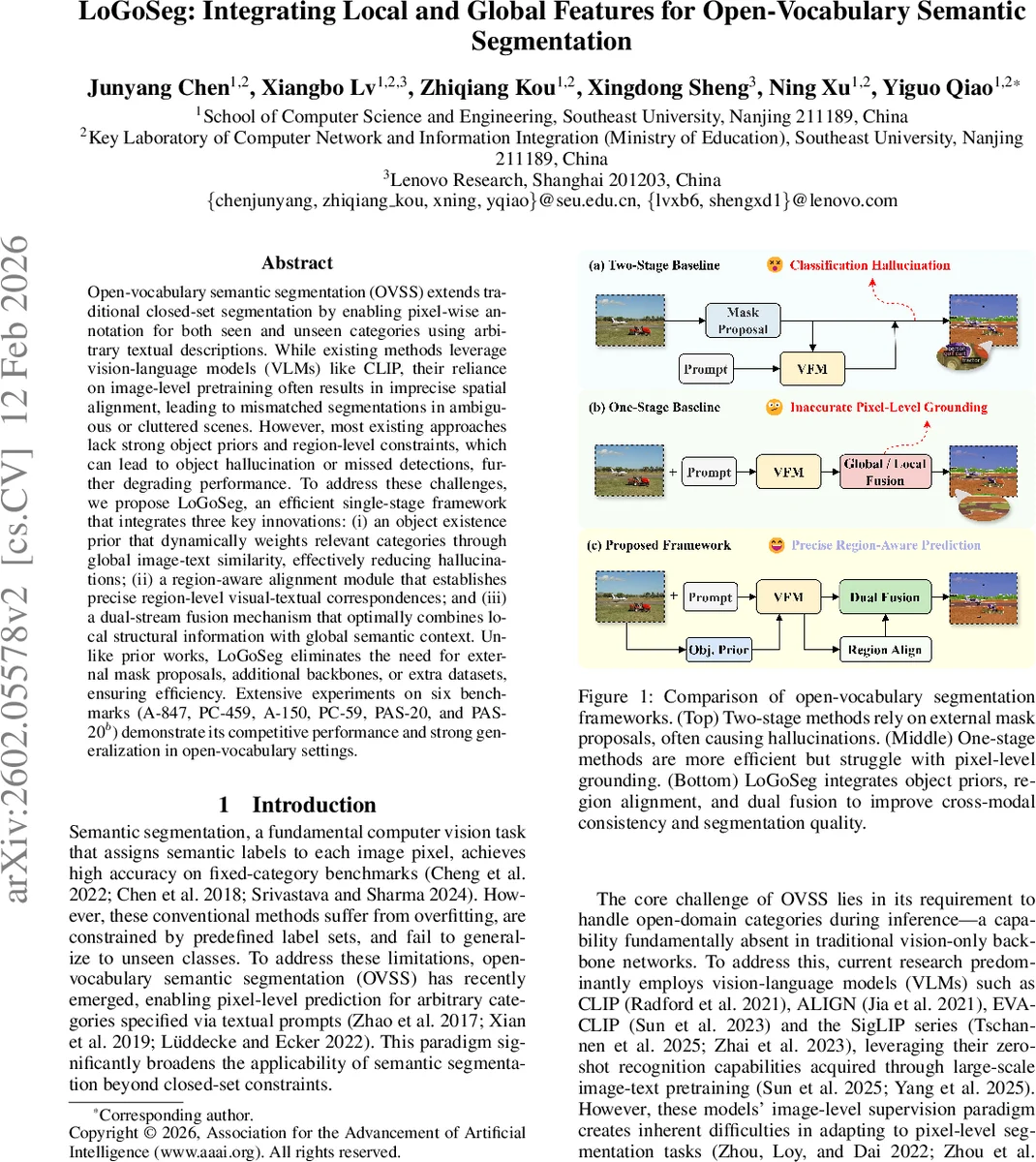

Open-vocabulary semantic segmentation (OVSS) extends traditional closed-set segmentation by enabling pixel-wise labeling of both seen and unseen categories described by arbitrary text. Existing methods leverage vision-language models (VLMs) such as CLIP, but their reliance on image-level pretraining often yields imprecise spatial alignment, producing mismatched segmentations in ambiguous or cluttered scenes. Moreover, most existing approaches lack strong object priors and region-level constraints, which can lead to object hallucination or missed detections and further degrade performance. To address these challenges, we propose LoGoSeg, an efficient single-stage framework that integrates three key innovations: (i) an object existence prior that dynamically weights relevant categories through global image-text similarity, effectively reducing hallucinations; (ii) a region-aware alignment module that establishes precise region-level visual-textual correspondences; and (iii) a dual-stream fusion mechanism that combines local structural information with global semantic context. Unlike prior works, LoGoSeg requires no external mask proposals, additional backbones, or extra datasets, which keeps it efficient. Extensive experiments on six benchmarks (A-847, PC-459, A-150, PC-59, PAS-20, and PAS-20b) demonstrate its competitive performance and strong generalization in open-vocabulary settings.

💡 Research Summary

LoGoSeg tackles the core challenges of open-vocabulary semantic segmentation (OVSS) by integrating three complementary mechanisms into a single-stage, end-to-end framework built on a frozen CLIP backbone. First, the method introduces an object existence prior that uses global image-text similarity to estimate how likely each candidate class is to appear in the image. The CLIP text embeddings of each class are averaged, their cosine similarity with the visual features is computed at every spatial location, and these scores are aggregated into a scalar prior $p^{\text{prior}}_n$ for every class $n$. This prior re-weights the class-specific text embeddings, suppressing categories that are absent from the image and thereby reducing the well-known hallucination problem, in which classes that do not appear in the image are erroneously activated.
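The prior computation described above can be illustrated with a minimal sketch. It assumes that each class has several text embeddings (for example, one per prompt template), that the per-location similarities are aggregated by a spatial mean, and that the resulting scores are normalized with a temperature-scaled softmax across classes. These choices, the temperature `tau`, and the function names `existence_prior` and `reweight` are hypothetical and are not taken from the paper.

```python
import math
from typing import List

Vector = List[float]


def cosine(a: Vector, b: Vector, eps: float = 1e-8) -> float:
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b + eps)


def mean_vector(vectors: List[Vector]) -> Vector:
    """Element-wise mean of a list of equal-length vectors."""
    return [sum(col) / len(vectors) for col in zip(*vectors)]


def existence_prior(
    class_prompt_embeddings: List[List[Vector]],
    pixel_features: List[Vector],
    tau: float = 0.07,
) -> List[float]:
    """Return one prior per class (hypothetical helper).

    class_prompt_embeddings[n] holds several text embeddings for class n
    (assumed: one per prompt template); pixel_features holds one visual
    feature per spatial location (H * W entries).
    """
    # Average each class's text embeddings into a single text center.
    centers = [mean_vector(embs) for embs in class_prompt_embeddings]
    # Cosine similarity at every location, aggregated by a spatial mean (assumption).
    scores = [
        sum(cosine(t, v) for v in pixel_features) / len(pixel_features)
        for t in centers
    ]
    # Temperature-scaled softmax across classes (assumed normalization).
    top = max(scores)
    exps = [math.exp((s - top) / tau) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def reweight(text_centers: List[Vector], priors: List[float]) -> List[Vector]:
    """Scale each class text center by its prior (assumed form of re-weighting)."""
    return [[p * x for x in t] for t, p in zip(text_centers, priors)]


if __name__ == "__main__":
    prompts = [[[1.0, 0.0], [0.9, 0.1]], [[0.0, 1.0], [0.1, 0.9]]]
    pixels = [[1.0, 0.0]] * 4  # every location resembles class 0
    print(existence_prior(prompts, pixels))
```

In this sketch, a class whose text center matches the visual features at most locations receives a prior close to one, while the remaining classes are pushed toward zero. A per-class sigmoid would be an equally plausible normalization if several classes are expected to co-occur.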

Second, LoGoSeg implements a region-aware alignment module that brings visual and linguistic information together at a fine-grained spatial scale. The image feature map $V \in \mathbb{R}^{C \times H \times W}$ is partitioned into $K$ non-overlapping regions $\{R_k\}_{k=1}^{K}$, and each region is mean-pooled into a visual token $v_k$. The re-weighted class text vectors $\hat{T}_n$ are then compared with each $v_k$ via cosine similarity, producing a region-class affinity matrix $A_{k,n}$. A temperature-scaled softmax turns these affinities into region-specific weights $w_{k,n}$, which aggregate the visual tokens into class-wise prototypes $m_n = \sum_{k} w_{k,n} v_k$. A linear projection then combines each prototype with its corresponding text center to produce a region-level textual guidance vector $g_{\text{region}}$.
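The region-aware alignment steps can be sketched as follows. The sketch assumes a regular grid partition of the feature map, a softmax taken over regions (so each class's weights sum to one), and a "linear projection" implemented as a single weight matrix applied to the concatenation of a prototype and its text center. The names `grid_region_tokens`, `class_prototypes`, and `region_guidance`, the temperature `tau`, and the projection parameters are hypothetical illustrations, not the authors' code.

```python
import math
from typing import List

Vector = List[float]
FeatureMap = List[List[Vector]]  # V[h][w] is the C-dim feature at row h, column w


def cosine(a: Vector, b: Vector, eps: float = 1e-8) -> float:
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b + eps)


def grid_region_tokens(V: FeatureMap, grid_h: int, grid_w: int) -> List[Vector]:
    """Split V into grid_h x grid_w non-overlapping cells (assumed regular grid,
    H and W divisible by the grid size) and mean-pool each cell into v_k."""
    cell_h, cell_w = len(V) // grid_h, len(V[0]) // grid_w
    tokens = []
    for i in range(grid_h):
        for j in range(grid_w):
            cell = [
                V[h][w]
                for h in range(i * cell_h, (i + 1) * cell_h)
                for w in range(j * cell_w, (j + 1) * cell_w)
            ]
            tokens.append([sum(col) / len(cell) for col in zip(*cell)])
    return tokens


def class_prototypes(tokens: List[Vector], text_vecs: List[Vector],
                     tau: float = 0.1) -> List[Vector]:
    """m_n = sum_k w_{k,n} v_k, where w_{:,n} is a softmax over regions (assumption)."""
    num_regions, dim = len(tokens), len(tokens[0])
    # Region-class affinity A[k][n] = cos(v_k, T_hat_n).
    A = [[cosine(v, t) for t in text_vecs] for v in tokens]
    prototypes = []
    for n in range(len(text_vecs)):
        logits = [A[k][n] / tau for k in range(num_regions)]
        top = max(logits)
        exps = [math.exp(z - top) for z in logits]
        total = sum(exps)
        weights = [e / total for e in exps]
        prototypes.append(
            [sum(weights[k] * tokens[k][c] for k in range(num_regions)) for c in range(dim)]
        )
    return prototypes


def region_guidance(prototype: Vector, text_center: Vector,
                    weight: List[List[float]], bias: List[float]) -> Vector:
    """Hypothetical projection g = W [m_n ; t_n] + b on the concatenated vector."""
    x = prototype + text_center  # list concatenation, length 2C
    return [sum(w_ij * x_j for w_ij, x_j in zip(row, x)) + b
            for row, b in zip(weight, bias)]
```

Taking the softmax over regions makes each $m_n$ a convex combination of region tokens, so the prototypes stay in the same feature space as the $v_k$ before being projected together with the text centers.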
