FaithRL: Learning to Reason Faithfully through Step-Level Faithfulness Maximization

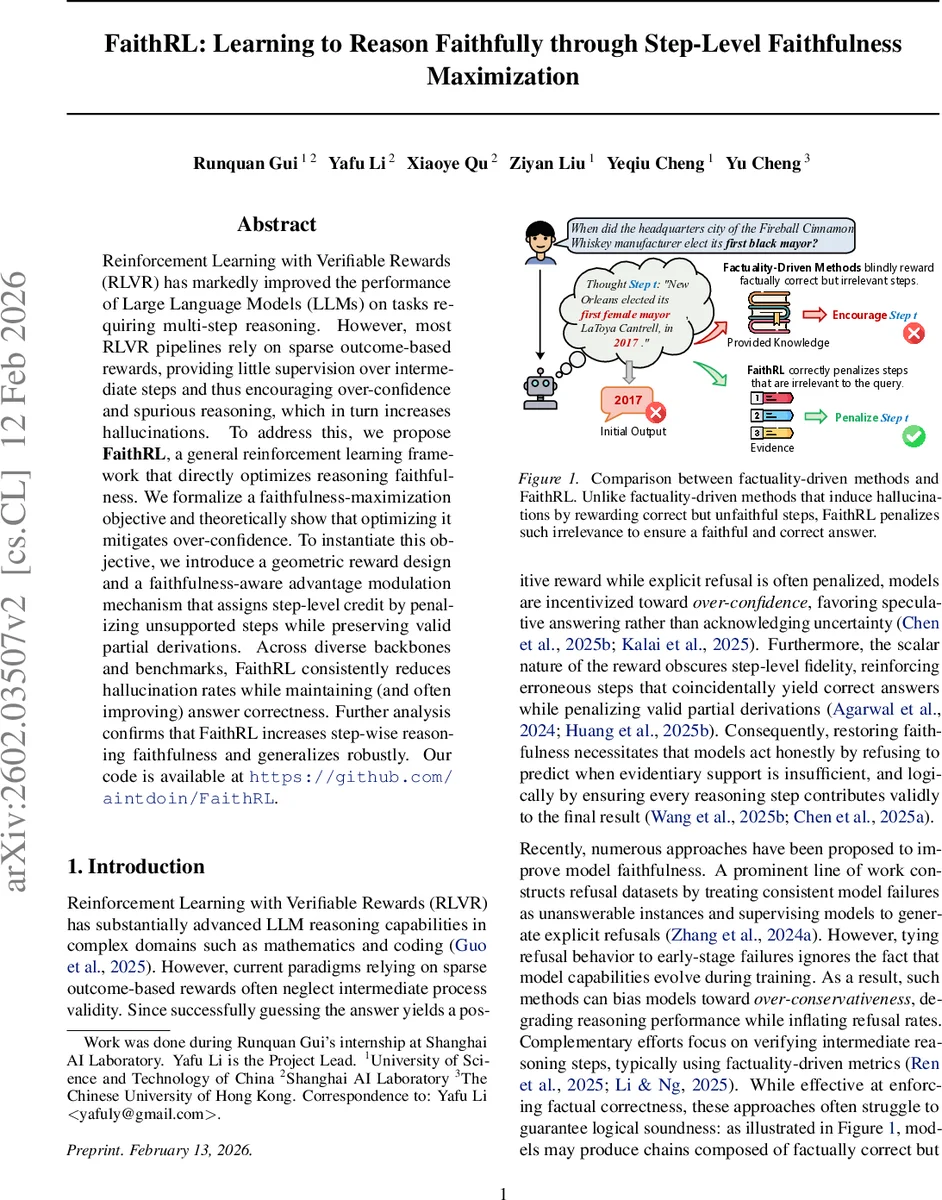

Reinforcement Learning with Verifiable Rewards (RLVR) has markedly improved the performance of Large Language Models (LLMs) on tasks requiring multi-step reasoning. However, most RLVR pipelines rely on sparse outcome-based rewards, providing little supervision over intermediate steps and thus encouraging over-confidence and spurious reasoning, which in turn increases hallucinations. To address this, we propose FaithRL, a general reinforcement learning framework that directly optimizes reasoning faithfulness. We formalize a faithfulness-maximization objective and theoretically show that optimizing it mitigates over-confidence. To instantiate this objective, we introduce a geometric reward design and a faithfulness-aware advantage modulation mechanism that assigns step-level credit by penalizing unsupported steps while preserving valid partial derivations. Across diverse backbones and benchmarks, FaithRL consistently reduces hallucination rates while maintaining (and often improving) answer correctness. Further analysis confirms that FaithRL increases step-wise reasoning faithfulness and generalizes robustly. Our code is available at https://github.com/aintdoin/FaithRL.

💡 Research Summary

FaithRL tackles a fundamental shortcoming of current reinforcement‑learning‑with‑verifiable‑rewards (RLVR) pipelines for large language models (LLMs): they rely on sparse, outcome‑only rewards that give the model no feedback on the quality of its intermediate reasoning steps. This lack of supervision encourages two pathological behaviors—over‑confidence, where the model guesses answers without sufficient evidence, and over‑conservativeness, where the model refuses to answer to avoid any risk of hallucination. Both behaviors increase the incidence of hallucinations and reduce the reliability of LLM‑driven reasoning.

The authors propose a new optimization objective: maximizing reasoning faithfulness. Faithfulness is defined as the property that the set of knowledge items used by the model (ϕ(τ)) exactly matches the minimal evidence set required to answer the query (E(q)). Under two idealized assumptions—(1) covering the evidence set guarantees the correct answer (correctness sufficiency) and (2) restricting the reasoning to the evidence set prevents hallucinated answers (hallucination prevention)—maximizing faithfulness simultaneously ensures correctness and eliminates hallucinations.

To operationalize this objective, FaithRL introduces two complementary mechanisms:

-

Geometric Reward Design – Instead of a binary reward, the authors map a model’s baseline capability (x₀ = correctness rate, y₀ = hallucination rate) and its post‑training capability (x₁, y₁) onto a 2‑D plane. The “Truthful Helpfulness Score” (THS) is defined as the normalized signed area of the triangle formed by the origin, the baseline point, and the post‑training point, relative to the ideal point (1, 0). This geometric formulation automatically balances improvements in accuracy against reductions in hallucination without hand‑tuned hyper‑parameters.

-

Faithfulness‑Aware Advantage Modulation – Building on Group‑Relative Policy Optimization (GRPO), FaithRL injects step‑level supervision. An external verifier V checks each reasoning step sᵢ to see whether it is supported by the required evidence. If a step is faithful, its advantage is left unchanged; if it is unsupported or irrelevant, the advantage is heavily penalized. This prevents the model from inserting spurious steps that merely happen to lead to a correct final answer, forcing every token to contribute meaningfully to the logical chain.

The paper provides a theoretical analysis (Theorem 4.1) showing that three possible objectives lead to distinct asymptotic behaviors: maximizing correctness drives the miss (refusal) rate to zero (over‑confidence), minimizing hallucination drives the miss rate to one (over‑conservativeness), while maximizing faithfulness stabilizes the miss rate at a value determined by the model’s intrinsic capability, thereby avoiding collapse.

Empirically, FaithRL is evaluated on several multi‑hop QA benchmarks (HotpotQA, MultiHopQA, MuSiQue) and on mathematical reasoning datasets (Math500, GSM8K) using multiple backbones (Llama‑2‑7B/13B, GPT‑3.5‑Turbo). Compared with strong RLVR baselines, FaithRL reduces hallucination rates by an average of 4.7 percentage points while improving answer correctness by 1.6 pp. On out‑of‑distribution tasks, accuracy improves by 10.8 pp and hallucination drops by 8.4 pp. Moreover, the proportion of faithful reasoning steps rises dramatically during training (31.1 pp in‑domain, 6.5 pp out‑of‑domain). The overall THS improves by 16 points, indicating simultaneous gains in usefulness and honesty.

The authors acknowledge limitations: the approach assumes that a reliable evidence set E(q) can be identified and that the verifier V is accurate. In domains where evidence is noisy or implicit, the theoretical assumptions may not hold, and the quality of V directly influences training stability. Extending FaithRL to free‑form generation tasks (summarization, translation) will require new definitions of faithfulness beyond explicit evidence retrieval.

In conclusion, FaithRL introduces a principled way to align LLM incentives with step‑wise factual and logical correctness, moving beyond outcome‑only rewards. By integrating a geometric, capability‑aware reward and a step‑level advantage modulation, it curbs both over‑confidence and over‑conservativeness, yielding more trustworthy and accurate reasoning. The framework opens avenues for future work on automated verifiers, broader task families, and tighter theoretical guarantees for faithfulness‑driven reinforcement learning.

Comments & Academic Discussion

Loading comments...

Leave a Comment