DexterCap: An Affordable and Automated System for Capturing Dexterous Hand-Object Manipulation

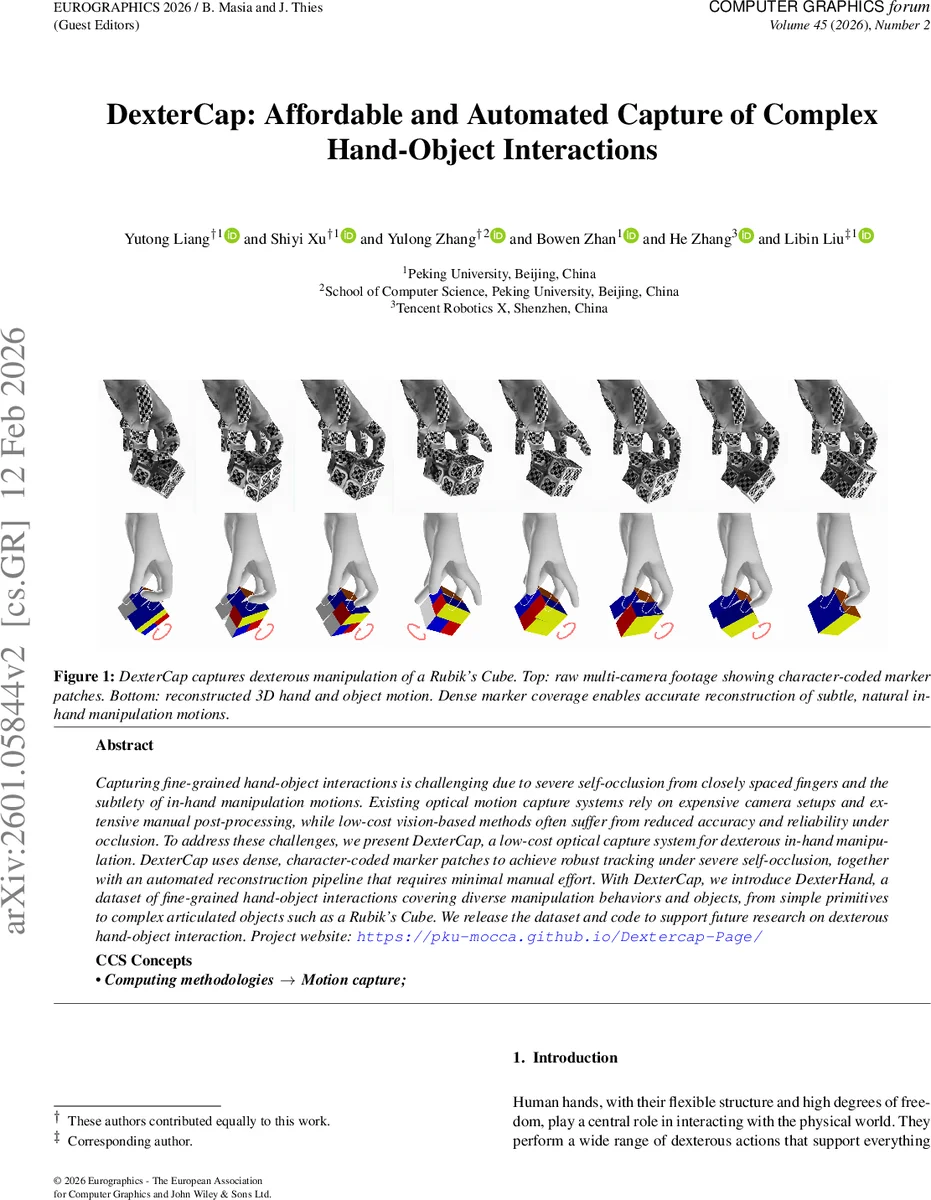

Capturing fine-grained hand-object interactions is challenging due to severe self-occlusion from closely spaced fingers and the subtlety of in-hand manipulation motions. Existing optical motion capture systems rely on expensive camera setups and extensive manual post-processing, while low-cost vision-based methods often suffer from reduced accuracy and reliability under occlusion. To address these challenges, we present DexterCap, a low-cost optical capture system for dexterous in-hand manipulation. DexterCap uses dense, character-coded marker patches to achieve robust tracking under severe self-occlusion, together with an automated reconstruction pipeline that requires minimal manual effort. With DexterCap, we introduce DexterHand, a dataset of fine-grained hand-object interactions covering diverse manipulation behaviors and objects, from simple primitives to complex articulated objects such as a Rubik’s Cube. We release the dataset and code to support future research on dexterous hand-object interaction. Project website: https://pku-mocca.github.io/Dextercap-Page/

💡 Research Summary

DexterCap addresses the long‑standing trade‑off between accuracy, cost, and labor in capturing fine‑grained hand‑object interactions. The system combines inexpensive industrial grayscale cameras (six units, 2048 × 2448 px at 20 fps) with a dense set of custom visual markers that encode a two‑character alphanumeric ID. Each marker consists of a high‑contrast checkerboard pattern where every white square contains a unique tag drawn from 26 letters and 10 digits (324 possible tags), with an underscore beneath the left character to resolve orientation. The markers are printed on medical adhesive tape and affixed directly to relatively rigid hand regions (19 patches per hand covering knuckles, dorsum, and palm) and to the surfaces of manipulated objects, including articulated items such as a Rubik’s Cube. This layout yields over 500 detectable corners per capture, providing redundancy that survives severe self‑occlusion.

The acquisition pipeline first synchronizes the cameras and performs a standard OpenCV calibration. Short exposure (1 ms) and a narrow aperture increase depth of field and suppress motion blur, while ambient lighting supplemented by LED panels ensures consistent illumination. Captured video streams are then processed by a three‑stage deep‑learning marker detection pipeline:

-

CornerNet – a U‑Net‑style network that receives 64 × 64 grayscale patches and outputs a heatmap where each corner appears as a Gaussian peak. Multi‑scale processing (original and half‑size) improves robustness to perspective changes. A low confidence threshold (0.6) favors high recall, allowing many true corners to pass to the next stage.

-

EdgeNet – a ResNet‑based binary classifier that decides whether a pair of candidate corners belongs to the same checkerboard edge. For each corner pair within a distance threshold derived from training statistics (set to achieve 95 % recall), a rectangular patch centered on the line segment is extracted and classified. This step dramatically prunes the combinatorial explosion of possible quads.

-

BlockNet – assembles validated edges into 2 × 2 checkerboard blocks and decodes the embedded alphanumeric ID. The decoded IDs provide the global correspondence needed for 3‑D reconstruction.

Unlike generic ArUco markers, the learned detectors tolerate the deformations and partial occlusions typical of hand motion capture. After marker detection, the system reconstructs 3‑D hand pose using the MANO morphable hand model. A non‑linear least‑squares optimization aligns the 3‑D marker positions with MANO’s joint parameters, solved via Levenberg‑Marquardt. Hand shape parameters are estimated once during an initial calibration session and reused thereafter, reducing per‑session labeling effort. Object pose is recovered by solving a 6‑DoF (or articulated) transformation that best aligns the detected object markers with a known object model; for the Rubik’s Cube, each cubie’s pose is estimated independently.

Quantitative evaluation against a high‑end optical system (Qualisys) shows average positional errors below 3 mm and rotational errors under 5°, with particularly strong performance on fingertip trajectories (±2 mm) and subtle object rotations (±3°). The dense marker layout ensures that even when many markers are hidden, enough remain to keep the optimization well‑conditioned.

Using DexterCap, the authors built DexterHand, a publicly released dataset containing over 200 multi‑minute sequences of dexterous manipulation across 30+ objects, ranging from simple primitives to articulated tools and a Rubik’s Cube. Each sequence provides synchronized multi‑view video, 3‑D hand meshes, object meshes, per‑frame marker coordinates, and contact annotations, enabling downstream research in physics‑based simulation, hand‑object interaction synthesis, and vision‑language modeling.

Key contributions of the work are:

- Cost‑effectiveness: the hardware bill is on the order of a few thousand dollars, far cheaper than commercial motion‑capture rigs.

- Automation: after a one‑time calibration and a small set of manually labeled frames for training CornerNet/EdgeNet/BlockNet, the entire pipeline runs without human intervention.

- Robustness to occlusion: the high density of uniquely coded markers, combined with the three‑stage detection, maintains high recall even under severe self‑occlusion typical of in‑hand manipulation.

- Extensibility: the marker design and detection framework can be applied to arbitrary rigid objects and easily extended to articulated objects.

Limitations include residual sensitivity to extreme lighting changes (due to the use of grayscale cameras) and occasional failure when a marker patch is completely occluded for an extended period, which can degrade pose accuracy. Future work suggested by the authors involves integrating marker‑less deep pose estimators to fill gaps, real‑time feedback for interactive capture, and expanding the marker set to accommodate diverse hand sizes and skin tones.

In summary, DexterCap delivers a practical, low‑cost, and largely automated solution for capturing high‑fidelity hand‑object motion, and its accompanying DexterHand dataset fills a notable gap in the community by providing richly annotated, long‑duration recordings of complex in‑hand manipulations. This work is poised to accelerate research in dexterous robotics, virtual reality, and computer graphics where accurate hand‑object interaction data are essential.

Comments & Academic Discussion

Loading comments...

Leave a Comment