MemRL: Self-Evolving Agents via Runtime Reinforcement Learning on Episodic Memory

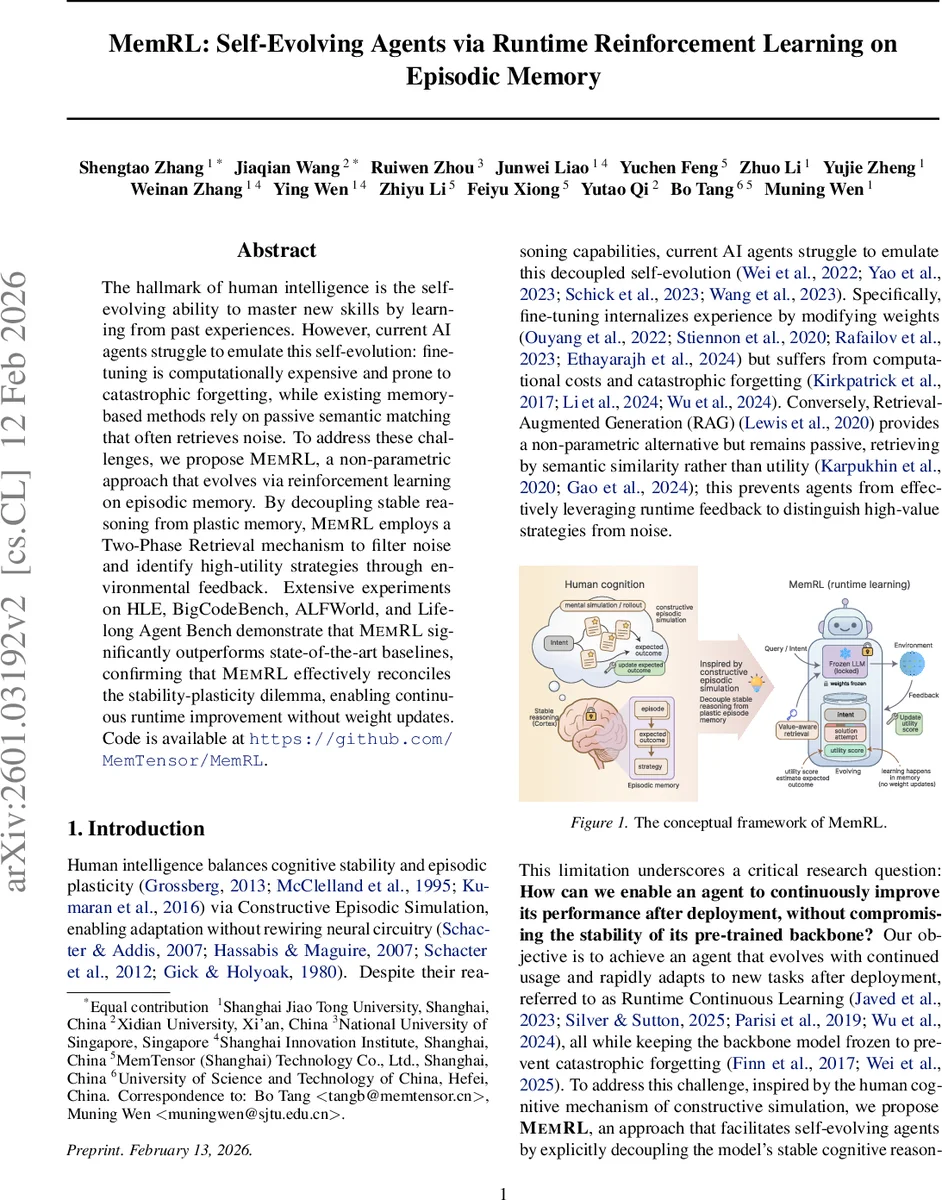

The hallmark of human intelligence is the self-evolving ability to master new skills by learning from past experiences. However, current AI agents struggle to emulate this self-evolution: fine-tuning is computationally expensive and prone to catastrophic forgetting, while existing memory-based methods rely on passive semantic matching that often retrieves noise. To address these challenges, we propose MemRL, a non-parametric approach that evolves via reinforcement learning on episodic memory. By decoupling stable reasoning from plastic memory, MemRL employs a Two-Phase Retrieval mechanism to filter noise and identify high-utility strategies through environmental feedback. Extensive experiments on HLE, BigCodeBench, ALFWorld, and Lifelong Agent Bench demonstrate that MemRL significantly outperforms state-of-the-art baselines, confirming that MemRL effectively reconciles the stability-plasticity dilemma, enabling continuous runtime improvement without weight updates. Code is available at https://github.com/MemTensor/MemRL.

💡 Research Summary

MemRL tackles the stability‑plasticity dilemma by keeping a pre‑trained large language model (LLM) frozen while continuously improving its behavior through non‑parametric reinforcement learning on an episodic memory bank. The core contribution is the Intent‑Experience‑Utility (I‑E‑U) triplet representation: each memory entry stores an intent embedding (the current query or goal), the raw experience (a past solution trace, success or failure), and a learned utility value Q that estimates the expected return when that experience is applied to similar intents.

The retrieval process is split into two phases. Phase A performs a fast similarity‑based recall, selecting the top‑k₁ candidates using cosine similarity of intent embeddings. Phase B re‑ranks these candidates with a composite score that blends normalized similarity and the learned Q‑value:

score = (1‑λ)·(\hat{sim}) + λ·(\hat{Q}).

The λ hyper‑parameter controls the exploration‑exploitation trade‑off, allowing the system to filter out “semantically similar but useless” memories that would otherwise mislead a vanilla RAG system.

Learning occurs entirely within the memory. After the agent generates an action using the frozen LLM conditioned on the selected memory context, it receives an environmental reward r. The utility Q of the retrieved memory is updated via either a temporal‑difference (TD) rule

( Q←Q+α

Comments & Academic Discussion

Loading comments...

Leave a Comment