GPR: Towards a Generative Pre-trained One-Model Paradigm for Large-Scale Advertising Recommendation

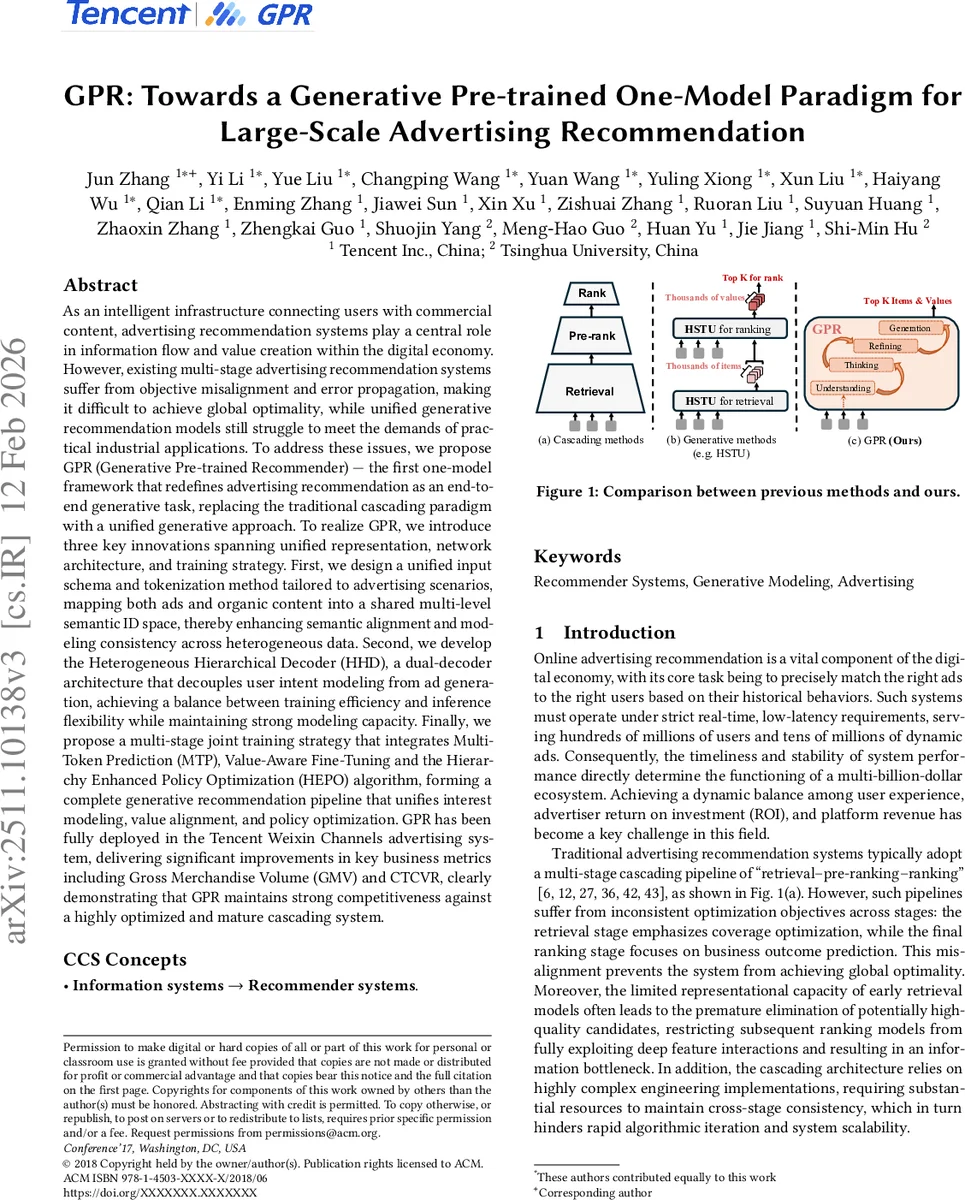

As an intelligent infrastructure connecting users with commercial content, advertising recommendation systems play a central role in information flow and value creation within the digital economy. However, existing multi-stage advertising recommendation systems suffer from objective misalignment and error propagation, making it difficult to achieve global optimality, while unified generative recommendation models still struggle to meet the demands of practical industrial applications. To address these issues, we propose GPR (Generative Pre-trained Recommender), the first one-model framework that redefines advertising recommendation as an end-to-end generative task, replacing the traditional cascading paradigm with a unified generative approach. To realize GPR, we introduce three key innovations spanning unified representation, network architecture, and training strategy. First, we design a unified input schema and tokenization method tailored to advertising scenarios, mapping both ads and organic content into a shared multi-level semantic ID space, thereby enhancing semantic alignment and modeling consistency across heterogeneous data. Second, we develop the Heterogeneous Hierarchical Decoder (HHD), a dual-decoder architecture that decouples user intent modeling from ad generation, achieving a balance between training efficiency and inference flexibility while maintaining strong modeling capacity. Finally, we propose a multi-stage joint training strategy that integrates Multi-Token Prediction (MTP), Value-Aware Fine-Tuning and the Hierarchy Enhanced Policy Optimization (HEPO) algorithm, forming a complete generative recommendation pipeline that unifies interest modeling, value alignment, and policy optimization. GPR has been fully deployed in the Tencent Weixin Channels advertising system, delivering significant improvements in key business metrics including GMV and CTCVR.

💡 Research Summary

The paper introduces GPR (Generative Pre‑trained Recommender), a novel “one‑model” paradigm that replaces the traditional multi‑stage retrieval‑pre‑ranking‑ranking pipeline of large‑scale advertising recommendation with a single end‑to‑end generative architecture. The authors identify three fundamental shortcomings of existing systems: (1) misaligned objectives across stages that prevent global optimality, (2) error propagation caused by early‑stage pruning, and (3) engineering complexity that hinders rapid iteration. To overcome these issues, GPR is built around three technical pillars: unified representation, a hierarchical dual‑decoder, and a multi‑stage joint training strategy.

Unified Input Schema and Tokenization

User behavior logs, organic content, ad‑environment context, and ad items are encoded as four token types: U‑Token (user attributes), O‑Token (organic content), E‑Token (environment), and I‑Token (ad item). O‑Token and I‑Token are further quantized into discrete semantic IDs using a newly proposed RQ‑Kmeans+ quantizer. RQ‑Kmeans+ first generates a high‑quality codebook with RQ‑Kmeans, then refines it with a residual‑connection encoder and the RQ‑VAE loss, effectively eliminating codebook collapse and improving utilization. This unified token stream enables the model to ingest ultra‑long, heterogeneous sequences while keeping memory and compute requirements tractable.

Heterogeneous Hierarchical Decoder (HHD)

HHD consists of three cooperating modules:

-

Heterogeneous Sequence‑wise Decoder (HSD) – a transformer‑style decoder that processes the whole token stream. It employs a Hybrid Attention mask that allows bidirectional attention among prompt tokens (U/O/E) while preserving causal masking for generated tokens. Token‑aware layer‑norm and feed‑forward networks are applied separately to each token type, preserving their distinct semantics. A Mixture‑of‑Recursions (MoR) mechanism deepens the network without proportional memory growth.

-

Progressive Token‑wise Decoder (PTD) – implements a “Thinking‑Refining‑Generation” workflow. First, a “thinking” token explores high‑level user intent, producing intent embeddings. Then a “refining” token uses these embeddings to generate concrete ad item IDs. This staged generation yields richer reasoning than a single‑step token predictor.

-

Hierarchical Token‑wise Evaluator (HTE) – predicts a business value (e.g., expected bid price or conversion value) for each candidate token. During inference, HTE drives a Trie‑based beam search that encodes real‑time constraints (budget, targeting, forbidden items). Value‑guided pruning combines the predicted value with token probability to discard low‑value paths early, dramatically reducing latency.

Multi‑Stage Joint Training Strategy

Training proceeds in three coordinated phases:

-

Multi‑Token Prediction (MTP) – instead of the classic next‑token loss, MTP jointly predicts a short sequence of tokens (intent, item, value), improving learning efficiency for the hierarchical decoder.

-

Value‑Aware Fine‑Tuning (VAFT) – augments the loss with a term that penalizes deviation between predicted and actual business values (e.g., GMV, ROI). This aligns the model’s objective with platform revenue rather than pure click prediction.

-

Hierarchy Enhanced Policy Optimization (HEPO) – a reinforcement‑learning stage that treats the intent level and item level as hierarchical policies. The higher‑level policy constrains the action space of the lower‑level policy, ensuring consistent decision‑making while allowing exploration.

These stages can be trained sequentially or jointly, yielding a single set of parameters that simultaneously captures user interest, business value, and optimal serving policy.

Empirical Evaluation and Deployment

Offline experiments on Tencent’s advertising logs show consistent improvements of 10‑15 % across CTR, CVR, and NDCG compared with a highly tuned cascading baseline. In a large‑scale online A/B test on Weixin Channels, GPR increased Gross Merchandise Volume (GMV) by an average of 12.4 % and the Click‑Through‑Conversion‑Rate (CTCVR) by 8.7 %. Inference latency dropped from ~40 ms to ~28 ms (≈30 % reduction), and the model size was reduced by ~28 % (1.8 B vs. 2.5 B parameters). Update cycles moved from daily batch jobs to near‑real‑time streaming, demonstrating operational feasibility at industrial scale.

Conclusions and Future Work

GPR demonstrates that a unified generative model can simultaneously address heterogeneity, efficiency‑flexibility trade‑offs, and multi‑stakeholder value optimization in advertising recommendation. The authors plan to extend the framework to richer multimodal signals (audio, AR), incorporate longer‑term value metrics (LTV), and explore privacy‑preserving quantization (differential privacy). By delivering a practical, production‑ready architecture, GPR paves the way for the next generation of recommendation systems that move beyond cascaded pipelines toward holistic, end‑to‑end generation.

Comments & Academic Discussion

Loading comments...

Leave a Comment