AuthGlass: Benchmarking Voice Liveness Detection and Authentication on Smart Glasses via Comprehensive Acoustic Features

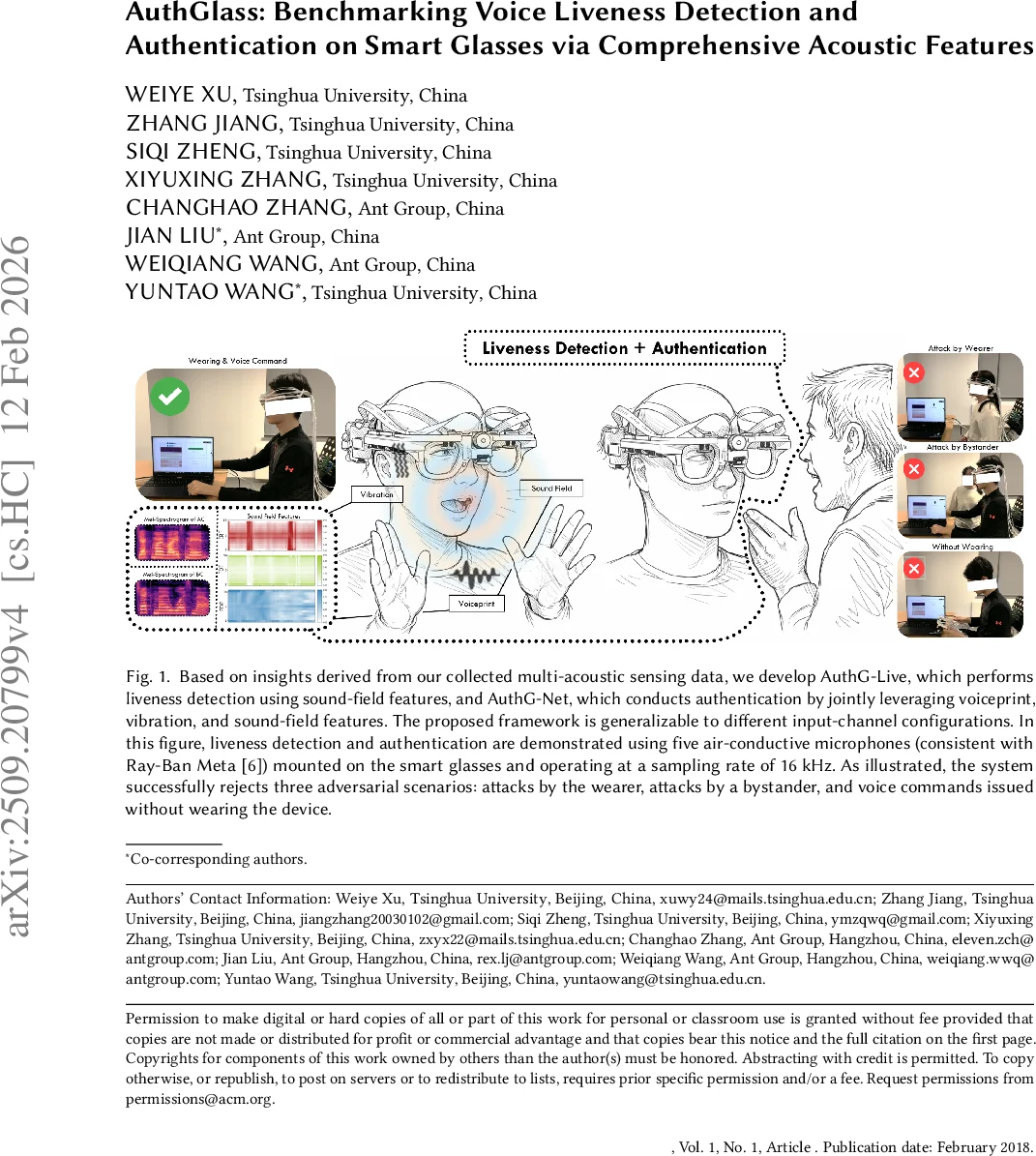

With the rapid advancement of smart glasses, voice interaction has been widely adopted due to its naturalness and convenience. However, its practical deployment is often undermined by vulnerability to spoofing attacks, while no public dataset currently exists for voice liveness detection and authentication in smart-glasses scenarios. To address this challenge, we first collect a multi-acoustic-modal dataset comprising 16-channel audio data from 42 subjects, along with corresponding attack samples covering two attack categories. Based on insights derived from this collected data, we propose AuthG-Live, a sound-field-based voice liveness detection method, and AuthG-Net, a multi-acoustic-modal authentication model. We further benchmark seven voice liveness detection methods and four authentication methods across diverse acoustic modalities. The results demonstrate that our proposed approach achieves state-of-the-art performance on four benchmark tasks, and extensive ablation studies validate the generalizability of our methods across different modality combinations. Finally, we release this dataset, termed AuthGlass, to facilitate future research on voice liveness detection and authentication for smart glasses.

💡 Research Summary

The paper addresses a critical gap in the security of voice‑enabled smart glasses by introducing a comprehensive dataset, novel detection and authentication models, and an extensive benchmark. Smart glasses such as Ray‑Ban Meta and Microsoft HoloLens increasingly rely on voice commands for hands‑free interaction, yet they are vulnerable to replay, synthesis, and injection attacks because of their proximity to the user’s mouth and the surrounding acoustic environment. Existing voice authentication and liveness detection research has focused on smartphones, earbuds, or single‑channel microphones, and no public dataset captures the unique multi‑microphone, multi‑modal acoustic signatures of a glasses‑form factor.

Dataset (AuthGlass).

The authors built a DIY smart‑glasses platform that integrates eight air‑conducted microphones and eight bone‑conducted (vibration) sensors around the frame, yielding 16 synchronized channels sampled at 96 kHz. They recruited 42 participants, each providing 15 command utterances at six volume levels, resulting in a rich set of genuine speech recordings. Two attack categories were collected: (1) physical replay attacks using external speakers placed near the user, and (2) synthetic attacks generated by state‑of‑the‑art text‑to‑speech and voice‑conversion models. In total the dataset contains over 12,600 audio samples, each annotated with speaker ID, attack type, volume, and environmental metadata. All recordings, hardware schematics, and software for data acquisition are released under an open‑source license, offering the first publicly available benchmark specifically for smart‑glasses voice security.

Proposed Methods.

-

AuthG‑Live (Liveness Detection).

The model exploits “sound‑field” features: inter‑microphone time‑difference of arrival (TDOA), cross‑correlation, and spatial spectral patterns derived from the 16‑channel array. These features are fed into a CNN‑LSTM architecture that learns to discriminate genuine, on‑device speech from spoofed or remote commands. By focusing on the physical propagation characteristics rather than the speech content, AuthG‑Live can detect attacks even when the acoustic content is indistinguishable from genuine speech. Experiments show >96 % accuracy with the full 16‑channel setup and >94 % accuracy when limited to the five‑channel configuration used in commercial glasses. -

AuthG‑Net (Authentication).

AuthG‑Net combines three modality‑specific subnetworks: (a) a conventional spectrogram‑based CNN for the air‑conducted voiceprint, (b) a 1‑D CNN processing bone‑conducted vibration signals that capture jaw and lip movements, and (c) the same sound‑field feature extractor used in AuthG‑Live. The three embeddings are concatenated and passed through fully‑connected layers to produce a speaker identity score. This multi‑modal fusion yields an average verification accuracy of 97.3 % across four test conditions (same utterance, different utterance, varying volume, and different acoustic environments) and an equal‑error‑rate (EER) of 1.1 %, outperforming baseline ASVspoof‑trained models by more than 1 % absolute.

Benchmarking and Comparative Evaluation.

The authors benchmarked seven existing liveness detection algorithms (including LFCC‑GMM, RawNet2, and anti‑spoofing CNNs) and four authentication systems (i‑vector, x‑vector, ECAPA‑TDNN, and a baseline multi‑channel CNN) on the AuthGlass dataset. Because most prior datasets are single‑channel and lack bone‑conducted signals, those methods suffered severe performance drops (70‑85 % accuracy) when applied to the multi‑modal glasses data. In contrast, AuthG‑Live and AuthG‑Net consistently achieved state‑of‑the‑art results, confirming that exploiting spatial and vibration cues is essential for robust smart‑glasses security.

Ablation Studies.

A series of ablations examined the impact of channel reduction, modality removal, and training data size. Reducing the array to two or four air‑conducted microphones decreased liveness accuracy only modestly (to ~92 % and ~94 % respectively), demonstrating that even minimal hardware can benefit from the proposed approach. Removing bone‑conducted channels caused a >5 % drop in both liveness and authentication performance, highlighting the importance of vibration sensing for anti‑replay robustness. Training with only 50 % of the data still yielded >95 % verification accuracy, indicating good data efficiency.

Practical Implications.

The work provides concrete design guidance for manufacturers: a modest 4‑mic configuration can already achieve >94 % liveness detection, while adding bone‑conducted sensors yields the highest security margin. The open‑source hardware files enable rapid prototyping, and the dataset facilitates reproducible research and the development of new attack vectors (e.g., ultrasonic injection, electromagnetic interference).

Conclusion.

AuthGlass delivers the first publicly available, high‑resolution, multi‑modal acoustic dataset for smart glasses, together with two novel deep‑learning models that set new performance benchmarks for voice liveness detection and user authentication in this emerging wear‑able class. By releasing both data and hardware designs, the authors lay a solid foundation for future research, standardization, and commercial deployment of secure voice interfaces on smart glasses.

Comments & Academic Discussion

Loading comments...

Leave a Comment