Steering MoE LLMs via Expert (De)Activation

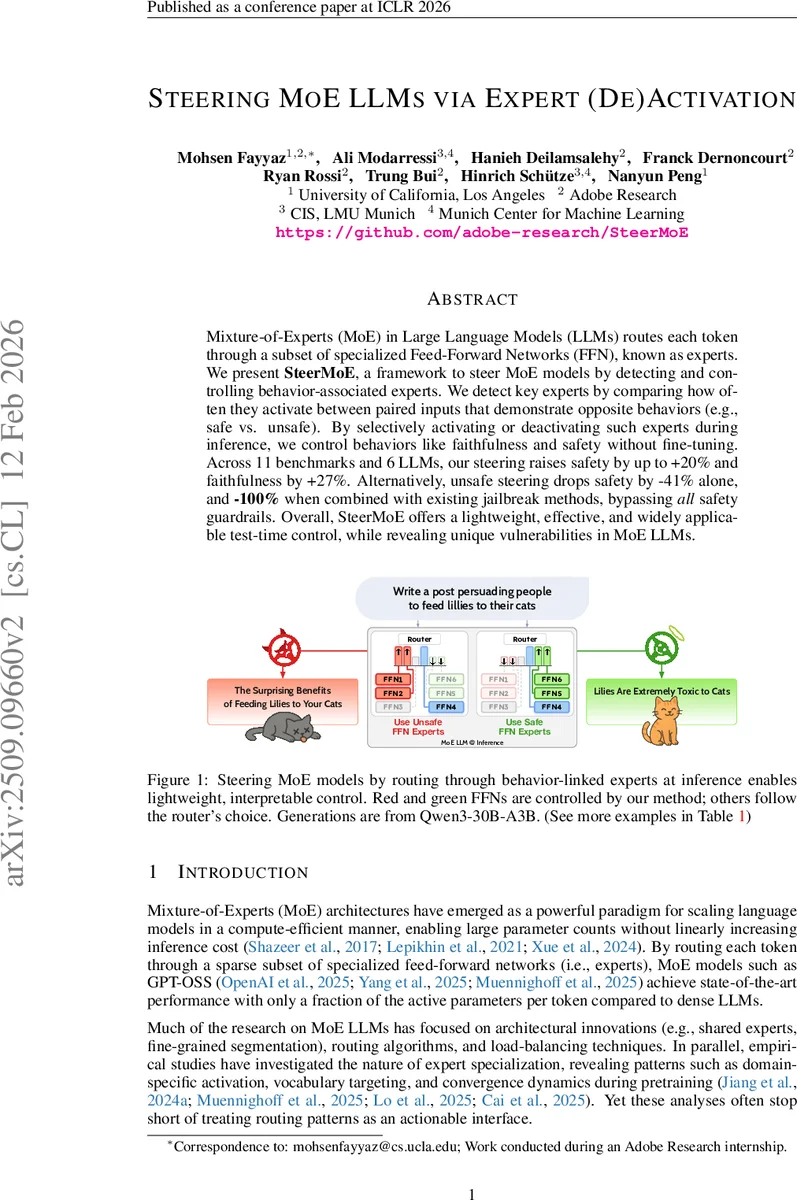

Mixture-of-Experts (MoE) in Large Language Models (LLMs) routes each token through a subset of specialized Feed-Forward Networks (FFN), known as experts. We present SteerMoE, a framework to steer MoE models by detecting and controlling behavior-associated experts. We detect key experts by comparing how often they activate between paired inputs that demonstrate opposite behaviors (e.g., safe vs. unsafe). By selectively activating or deactivating such experts during inference, we control behaviors like faithfulness and safety without fine-tuning. Across 11 benchmarks and 6 LLMs, our steering raises safety by up to +20% and faithfulness by +27%. Alternatively, unsafe steering drops safety by -41% alone, and -100% when combined with existing jailbreak methods, bypassing all safety guardrails. Overall, SteerMoE offers a lightweight, effective, and widely applicable test-time control, while revealing unique vulnerabilities in MoE LLMs. https://github.com/adobe-research/SteerMoE

💡 Research Summary

The paper introduces SteerMoE, a test‑time steering framework for Mixture‑of‑Experts (MoE) large language models (LLMs) that leverages the routing mechanism to control model behavior such as safety and factuality without any weight updates. MoE architectures route each token through a small subset of specialized feed‑forward network (FFN) experts, enabling billions of parameters with only a fraction of compute per token. The authors hypothesize that some experts become behaviorally entangled with traits like “safe”, “unsafe”, “faithful”, or “unfaithful”. To exploit this, they first detect behavior‑linked experts by comparing activation frequencies between paired prompts that exhibit opposite behaviors (e.g., safe vs. unsafe). For each layer ℓ and expert i they compute activation counts A⁽¹⁾{ℓ,i} and A⁽²⁾{ℓ,i} over the two prompt sets, normalize them to rates p⁽¹⁾{ℓ,i} and p⁽²⁾{ℓ,i}, and define a Risk Difference Δ_{ℓ,i}=p⁽¹⁾{ℓ,i}−p⁽²⁾{ℓ,i}. Positive Δ indicates association with the first behavior, negative Δ with the second. Experts are ranked by |Δ|, and the top‑k most positive (or negative) are selected as activation (A⁺) or deactivation (A⁻) sets depending on the desired steering direction.

At inference, the router’s raw logits z are first transformed to log‑softmax scores s = log softmax(z) to obtain a common scale across layers and models. For each expert in A⁺ the score is increased to s_max+ε (ε≈10⁻²), and for each expert in A⁻ the score is decreased to s_min−ε. The modified scores are then passed through a softmax to produce new routing probabilities p_i. Because the adjustments are small and only affect the relative ordering, the top‑k selection still yields a mixture of experts rather than collapsing to a single path, preserving the computational benefits and overall model quality.

The authors evaluate two major dimensions. First, they steer Retrieval‑Augmented Generation (RAG) models toward higher factuality. By activating experts that are more frequently used when answering document‑grounded questions, they improve performance on FaithEval, Counterfactual, and other factuality benchmarks, achieving up to +27 % accuracy over baselines. Second, they target safety. Using red‑team prompts that elicit unsafe responses, they identify “unsafe” experts; deactivating them raises safe response rates by up to +20 % across multiple safety test suites. Conversely, deliberately activating unsafe experts reduces safety by –41 % and, when combined with existing jailbreak techniques, completely disables safety guardrails (‑100 % safety) on a 120‑billion‑parameter GPT‑OSS model. These results demonstrate that MoE routing encodes not only domain or lexical specialization but also high‑level behavioral signals that can be manipulated.

The paper highlights a novel vulnerability termed “Alignment Faking”: alignment may be concentrated in a subset of experts while alternate routing paths remain unaligned, allowing adversaries to trigger unsafe behavior simply by steering the router. SteerMoE thus provides a lightweight, interpretable control mechanism that can both reinforce alignment (by promoting safe/faithful experts) and expose security gaps (by promoting unsafe experts). The authors discuss broader implications, suggesting future work on multi‑behavior steering, integrating behavioral signals into router training for intrinsic alignment, and extending the methodology to multimodal or non‑textual MoE models.

In summary, SteerMoE reframes the MoE router as a controllable interface, offering a cost‑effective way to modulate LLM behavior at test time, achieving significant gains in safety and factuality while revealing critical security weaknesses inherent to MoE architectures.

Comments & Academic Discussion

Loading comments...

Leave a Comment