Teaching LLMs According to Their Aptitude: Adaptive Reasoning for Mathematical Problem Solving

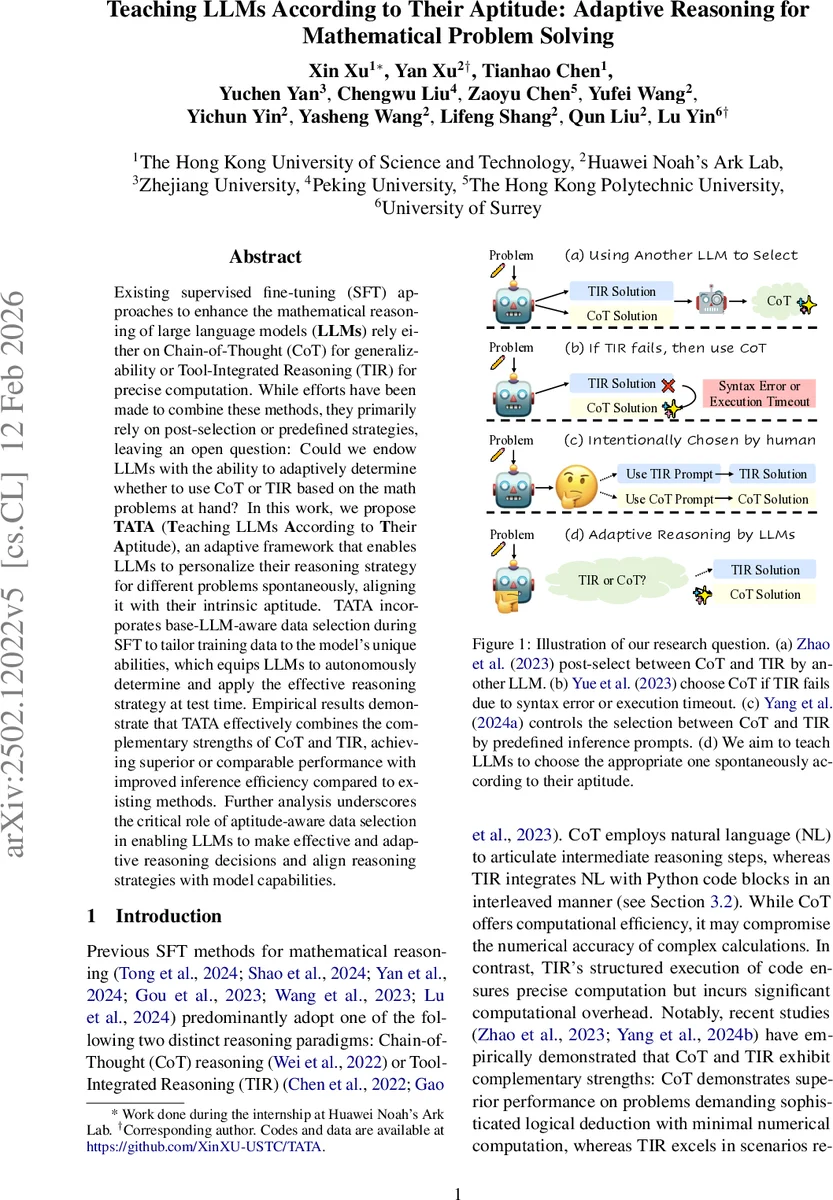

Existing approaches to mathematical reasoning with large language models (LLMs) rely on Chain-of-Thought (CoT) for generalizability or Tool-Integrated Reasoning (TIR) for precise computation. While efforts have been made to combine these methods, they primarily rely on post-selection or predefined strategies, leaving an open question: whether LLMs can autonomously adapt their reasoning strategy based on their inherent capabilities. In this work, we propose TATA (Teaching LLMs According to Their Aptitude), an adaptive framework that enables LLMs to personalize their reasoning strategy spontaneously, aligning it with their intrinsic aptitude. TATA incorporates base-LLM-aware data selection during supervised fine-tuning (SFT) to tailor training data to the model’s unique abilities. This approach equips LLMs to autonomously determine and apply the appropriate reasoning strategy at test time. We evaluate TATA through extensive experiments on six mathematical reasoning benchmarks, using both general-purpose and math-specialized LLMs. Empirical results demonstrate that TATA effectively combines the complementary strengths of CoT and TIR, achieving superior or comparable performance with improved inference efficiency compared to TIR alone. Further analysis underscores the critical role of aptitude-aware data selection in enabling LLMs to make effective and adaptive reasoning decisions and align reasoning strategies with model capabilities.

💡 Research Summary

The paper introduces TATA (Teaching LLMs According to Their Aptitude), an adaptive framework that enables large language models (LLMs) to automatically select between Chain‑of‑Thought (CoT) reasoning and Tool‑Integrated Reasoning (TIR) based on their intrinsic capabilities. Existing methods either rely on a fixed reasoning paradigm or use external selectors and predefined heuristics to switch between CoT and TIR, which limits model autonomy. TATA addresses this gap by incorporating base‑LLM‑aware data selection during supervised fine‑tuning (SFT).

First, an original math dataset is augmented with multiple CoT solutions via Rejection Fine‑Tuning. Each CoT solution is then transformed into a TIR version that preserves the logical flow while inserting Python code blocks for calculations, creating a candidate triplet set (question, CoT, TIR). An anchor set is constructed by clustering the original questions and selecting diverse representatives; this set serves as a validation proxy for measuring model performance.

For each candidate question, the framework evaluates the base model’s accuracy on the anchor set when the question is presented with either a CoT or a TIR one‑shot example. The average accuracies are recorded as S_k^CoT and S_k^TIR. The higher of the two scores indicates which reasoning style contributes more to the model’s performance for that specific problem. Using these contribution scores, TATA selects either the CoT or the TIR solution for inclusion in the final SFT training set, thereby tailoring the fine‑tuning data to the model’s aptitude.

During inference, the fine‑tuned model can spontaneously decide whether to generate a pure natural‑language chain of reasoning or an interleaved code‑augmented solution, without any external planner. This ability emerges from implicit instruction tuning: the one‑shot examples used during data selection act as latent prompts that bias the model toward the preferred reasoning format.

Experiments were conducted on six mathematical reasoning benchmarks (GSM‑8K, MATH, SVAMP, MAWPS, ASDiv, and a composite logic‑numeric set) using both a general‑purpose Llama‑3‑8B and a math‑specialized Qwen2.5‑Math‑7B as base models. TATA consistently outperformed or matched the best of pure CoT‑only and pure TIR‑only fine‑tuning, achieving average accuracy gains of 1.2–2.5 percentage points. Moreover, because the model selects CoT for logic‑heavy problems and TIR for computation‑heavy problems, overall inference latency was reduced by roughly 30% compared to a TIR‑only approach. Ablation studies showed that random mixing of CoT/TIR data degrades performance, confirming the importance of aptitude‑aware selection. Sensitivity analyses demonstrated that anchor set size and clustering quality significantly affect the final results.

The authors also discuss future extensions, including reinforcement‑learning‑based dynamic strategy refinement, integration of additional external tools beyond Python, and application of the aptitude‑driven selection paradigm to other domains such as science or programming. In summary, TATA provides a practical solution that endows LLMs with self‑aware, adaptive reasoning capabilities, improving both accuracy and efficiency in mathematical problem solving.

Comments & Academic Discussion

Loading comments...

Leave a Comment