Scale Contrastive Learning with Selective Attentions for Blind Image Quality Assessment

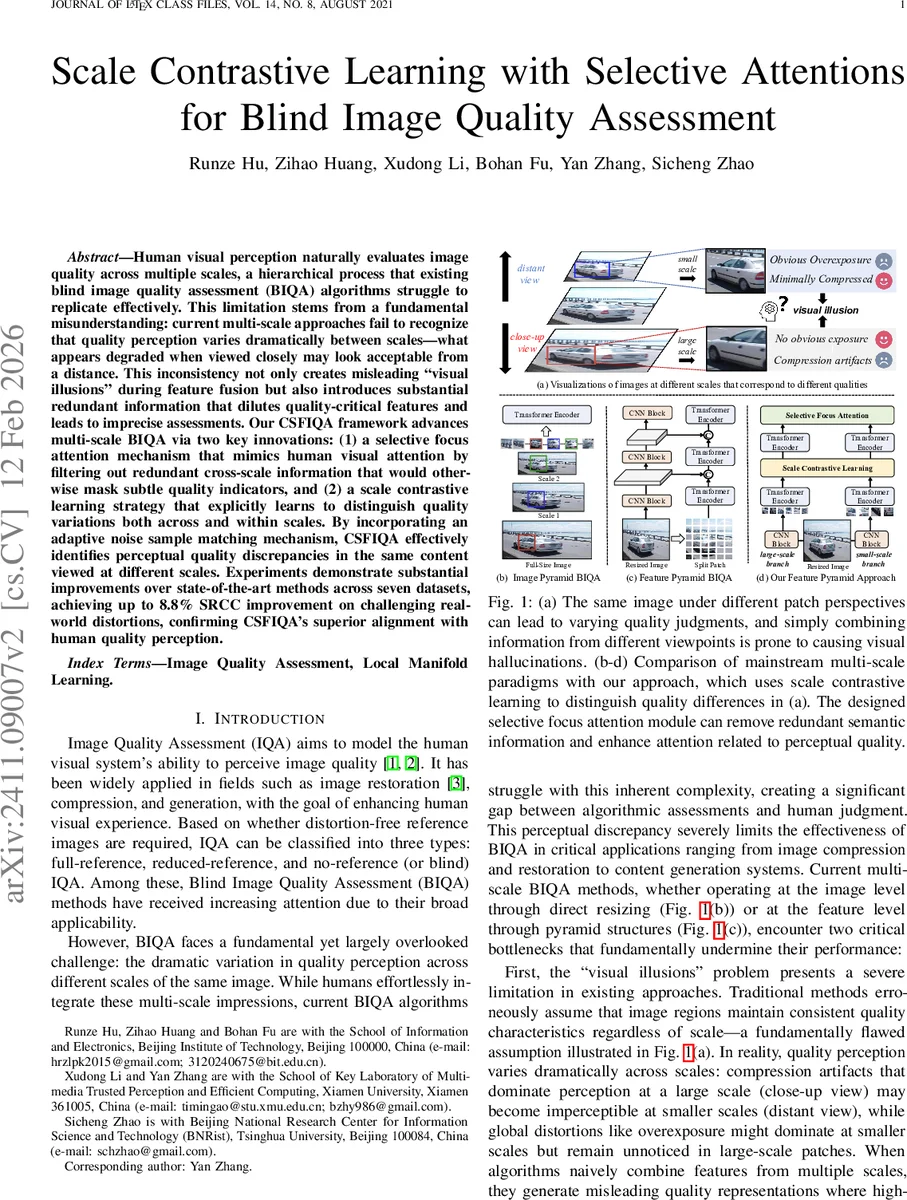

Human visual perception naturally evaluates image quality across multiple scales, a hierarchical process that existing blind image quality assessment (BIQA) algorithms struggle to replicate effectively. This limitation stems from a fundamental misunderstanding: current multi-scale approaches fail to recognize that quality perception varies dramatically between scales – what appears degraded when viewed closely may look acceptable from a distance. This inconsistency not only creates misleading ``visual illusions’’ during feature fusion but also introduces substantial redundant information that dilutes quality-critical features and leads to imprecise assessments. Our CSFIQA framework advances multi-scale BIQA via two key innovations: (1) a selective focus attention mechanism that mimics human visual attention by filtering out redundant cross-scale information that would otherwise mask subtle quality indicators, and (2) a scale contrastive learning strategy that explicitly learns to distinguish quality variations both across and within scales. By incorporating an adaptive noise sample matching mechanism, CSFIQA effectively identifies perceptual quality discrepancies in the same content viewed at different scales. Experiments demonstrate substantial improvements over state-of-the-art methods across seven datasets, achieving up to 8.8% SRCC improvement on challenging real-world distortions, confirming CSFIQA’s superior alignment with human quality perception.

💡 Research Summary

The paper introduces CSFIQA (Contrast‑Constrained Scale‑Focused Image Quality Assessment), a novel blind image quality assessment (BIQA) framework that explicitly models the scale‑dependent nature of human visual perception. Existing multi‑scale BIQA methods suffer from two fundamental issues: (1) “visual illusion,” where features from different scales are indiscriminately fused, causing the model to predict a uniform quality despite clear scale‑specific perceptual differences; and (2) “information dilution,” where redundant semantic information across scales overwhelms subtle distortion cues that are critical for quality assessment.

To address these problems, CSFIQA combines three key components:

-

Selective Focus Attention (SFA) – built on a Transformer encoder, SFA employs an Adaptive Filtering Selector (AFS) that retains only the top‑k self‑attention similarity scores, effectively discarding cross‑scale redundancy. The remaining attention maps are passed through an Information Concentrator that amplifies quality‑relevant signals while suppressing irrelevant semantics, mimicking the human tendency to focus on distortion‑rich regions.

-

Scale Contrastive Learning (SCL) – this module creates both intra‑scale and inter‑scale contrastive pairs based on Mean Opinion Score (MOS) distances. For a given patch, the pairwise Manhattan distance of MOS values across the mini‑batch is computed; samples with distance ≤ γ₁·max‑row are treated as positives, while those exceeding (1‑γ₂)·max‑row become negatives. An InfoNCE loss (temperature τ) forces representations of similar‑quality patches to be close regardless of scale, and pushes dissimilar‑quality patches apart, thereby aligning the feature space with human quality judgments.

-

Noise Sample Matching (NSM) – within a single image, CSFIQA identifies regions where quality differs most across scales. Feature maps are reshaped into spatial grids, a sliding window extracts small‑patch regions, and their corresponding large‑patch neighborhoods are located. The cosine similarity between each small‑patch feature and its large‑patch counterpart is computed; the pair with the lowest similarity is selected as a hard negative for contrastive learning. This mechanism directly combats the “visual illusion” effect by ensuring the model learns to distinguish scale‑specific quality discrepancies.

The overall pipeline proceeds as follows: an input image is partitioned into multi‑scale patches, each processed by a shared Vision Transformer encoder to obtain scale‑specific features. These features feed into SCL (producing positive/negative pairs) and NSM (producing hard negatives). The final encoder layer’s output is refined by SFA, then passed through an alignment layer and a Transformer decoder to predict a quality score. The total loss combines a regression term (L₁ between predicted and ground‑truth MOS) with the contrastive terms (L_scale and L_noise) weighted by λ.

Extensive experiments on seven benchmark datasets (LIVE, CSIQ, TID2013, KADID‑10k, KonIQ‑10k, LIVEFB, LIVEC) demonstrate consistent improvements over state‑of‑the‑art BIQA methods. Notably, CSFIQA achieves an 8.8 % SRCC gain on the challenging LIVEFB dataset, where real‑world distortions are prominent. Ablation studies confirm that removing any of the three modules degrades performance, highlighting their complementary contributions.

Despite its strengths, CSFIQA has limitations. The reliance on image resizing to generate scales may not fully capture the diversity of native multi‑resolution content encountered in practice. The Transformer backbone incurs high computational and memory costs, especially for high‑resolution inputs, which could hinder real‑time deployment. Moreover, the NSM strategy of selecting the “most dissimilar” patch as a negative may sometimes introduce overly hard negatives, potentially destabilizing training.

Future work could explore lightweight ViT variants or hybrid CNN‑Transformer designs to reduce overhead, dynamic scale selection mechanisms that adaptively choose the most informative resolutions per image, and more rigorous human‑subject studies to validate the perceptual alignment of the learned representations. Overall, CSFIQA offers a compelling approach to bridging the gap between algorithmic quality prediction and human visual experience by explicitly modeling scale‑aware quality perception.

Comments & Academic Discussion

Loading comments...

Leave a Comment